Information theory

Generated by DeepSeek V3.2

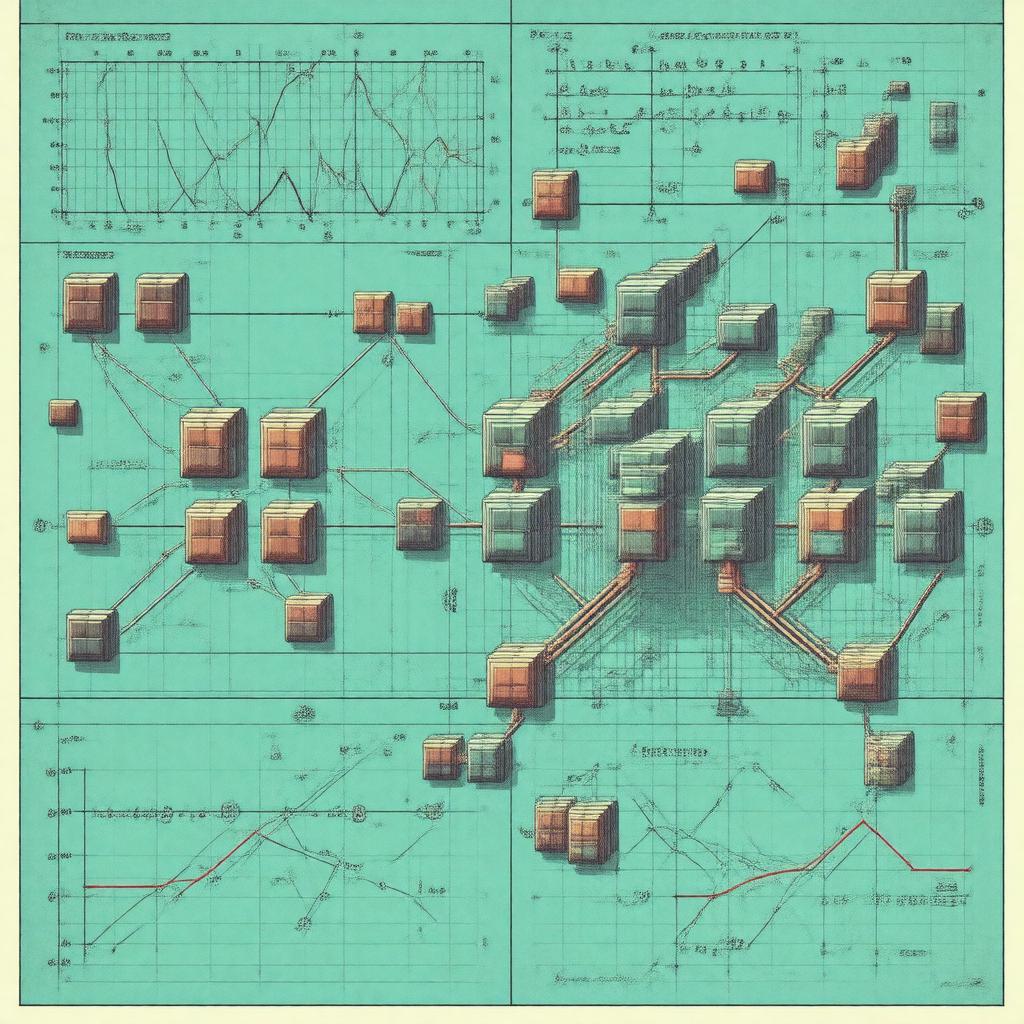


| Information theory | |
|---|---|
| Name | Information theory |
| Founded | 1948 |
| Founder | Claude Shannon |
| Key people | Harry Nyquist, Ralph Hartley, Warren Weaver, John von Neumann |
| Notable works | A Mathematical Theory of Communication |
| Influenced | Computer science, Electrical engineering, Statistics, Quantum information science |

Information theory is the mathematical study of quantifying, storing, and communicating information, established by Claude Shannon in his landmark 1948 paper A Mathematical Theory of Communication. It provides the theoretical underpinnings for modern digital communication, data compression, and cryptography. The field rigorously defines core concepts such as entropy and channel capacity, which have found applications far beyond its origins at Bell Labs.

Introduction

The formal foundations were laid by Claude Shannon while working at Bell Labs, in his paper A Mathematical Theory of Communication, published in the Bell System Technical Journal in 1948 and reissued in 1949 as the book The Mathematical Theory of Communication with an introductory essay by Warren Weaver. Earlier work by Harry Nyquist and Ralph Hartley had addressed related problems in telegraphy, but Shannon's synthesis created a unified discipline. Its results shaped the design of communication systems during the Cold War and the Space Race, and its principles informed later digital networks, including the ARPANET and the Internet. Its ideas were also discussed at the Macy Conferences, where they intersected with cybernetics and early artificial intelligence.

Fundamental concepts

The central measure is entropy, introduced by Shannon, which quantifies the average uncertainty, or information content, of the messages produced by a source such as English text. Related to this is mutual information, which measures how much information two random variables share; for a communication channel, it measures how much the received signal reveals about the transmitted one. The noisy-channel coding theorem, another cornerstone of Shannon's work, establishes the fundamental limit on reliable data transmission: at any rate below the channel capacity, suitable codes make the error probability arbitrarily small, while at rates above capacity this is impossible. These results are derived with tools from probability theory and stochastic processes, and the entropy formula closely mirrors the H function in Ludwig Boltzmann's H-theorem from statistical mechanics.
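Both quantities can be computed directly from their standard definitions, H(X) = -Σ p(x) log2 p(x) and I(X;Y) = H(X) + H(Y) - H(X,Y). The Python below is a minimal sketch assuming discrete distributions given as plain lists or dictionaries; the function names and the binary symmetric channel example are hypothetical choices for illustration, not part of any library.

```python
import math
from collections import Counter


def entropy(probs):
    """Shannon entropy H = -sum p log2 p in bits; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def empirical_entropy(text):
    """Entropy of a string's symbol frequencies (a crude order-0 model of the source)."""
    counts = Counter(text)
    n = len(text)
    return entropy(c / n for c in counts.values())


def mutual_information(joint):
    """I(X;Y) = H(X) + H(Y) - H(X,Y) for a joint distribution given as {(x, y): p}."""
    px, py = Counter(), Counter()
    for (x, y), p in joint.items():
        px[x] += p  # marginal of X
        py[y] += p  # marginal of Y
    return entropy(px.values()) + entropy(py.values()) - entropy(joint.values())


# A fair coin carries 1 bit per toss; a biased coin carries less.
print(entropy([0.5, 0.5]))  # 1.0
print(entropy([0.9, 0.1]))  # about 0.469

# Hypothetical channel: uniform binary input X, output Y flipped with probability 0.1.
eps = 0.1
joint = {(0, 0): 0.5 * (1 - eps), (0, 1): 0.5 * eps,
         (1, 0): 0.5 * eps, (1, 1): 0.5 * (1 - eps)}
print(mutual_information(joint))  # about 0.531, i.e. 1 - H(0.1)
```

For this symmetric channel a uniform input maximizes the mutual information, so the printed value is also the channel's capacity.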

Channel capacity and coding

Channel capacity is the maximum rate at which information can be transmitted reliably over a noisy channel, such as a telephone line or a deep-space link used by NASA. Approaching this limit in practice requires error-correcting codes; pioneering work includes the Hamming code developed by Richard Hamming, followed by more powerful codes such as Turbo codes and LDPC codes. Source coding, by contrast, removes redundancy from the data itself; lossless algorithms such as the Lempel–Ziv–Welch compression used in the GIF format do so without discarding any information. Practical implementations rely on algorithms and hardware standardized through bodies such as the Institute of Electrical and Electronics Engineers and developed by companies such as Qualcomm.
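As a concrete sketch, assume a binary symmetric channel that flips each bit independently with probability p; its capacity is C = 1 - H_b(p), where H_b is the binary entropy function. The Python below computes that capacity and implements the Hamming(7,4) code, with parity bits at positions 1, 2, and 4 and single-error correction by syndrome decoding. The function names are hypothetical.

```python
import math


def binary_entropy(p):
    """Binary entropy H_b(p) in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def bsc_capacity(p):
    """Capacity of a binary symmetric channel with crossover probability p."""
    return 1.0 - binary_entropy(p)


def hamming74_encode(d):
    """Encode 4 data bits as [p1, p2, d1, p3, d2, d3, d4] (parity at positions 1, 2, 4)."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c):
    """Correct at most one flipped bit using the syndrome, then return the 4 data bits."""
    c = list(c)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    pos = s1 + 2 * s2 + 4 * s3  # 1-based position of the flipped bit, 0 if none
    if pos:
        c[pos - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


data = [1, 0, 1, 1]
word = hamming74_encode(data)
word[5] ^= 1                            # simulate a single channel error
print(hamming74_decode(word) == data)   # True
print(bsc_capacity(0.1))                # about 0.531 bits per channel use
```

Note that the Hamming(7,4) rate of 4/7 (about 0.571) exceeds this channel's capacity at p = 0.1, so by the converse of the noisy-channel coding theorem no code of that rate can make the error probability arbitrarily small there; the Hamming code only guarantees correction of one error per 7-bit block.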

Applications

Applications are ubiquitous in modern technology. Data compression standards such as JPEG, MP3, and MPEG-4 build on source coding principles, combining lossy transform coding with lossless entropy coding. In telecommunications, modulation schemes from frequency-shift keying to orthogonal frequency-division multiplexing are designed with channel capacity in mind. Cryptography, including the analysis of block ciphers such as the Data Encryption Standard and the Advanced Encryption Standard, draws on concepts such as entropy and unicity distance. The field also underpins machine learning, where cross-entropy and the Kullback–Leibler divergence are widely used training objectives in systems built by companies such as Google and OpenAI, and it is central to quantum information science pursued at institutions such as the Massachusetts Institute of Technology and the University of California, Berkeley.
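Unicity distance estimates how much ciphertext is needed before a cipher's key is, in principle, uniquely determined: U = H(K) / D, where H(K) is the key entropy and D is the plaintext redundancy per character. The worked example below uses a classical simple substitution cipher only because its key entropy, log2(26!), is easy to compute. It assumes an English entropy rate of 1.5 bits per letter; published estimates vary, and the result scales with this assumption. Names are hypothetical.

```python
import math

ALPHABET_SIZE = 26
# Assumption: about 1.5 bits of entropy per letter of English; estimates in the literature vary.
ENGLISH_RATE_BITS = 1.5


def unicity_distance(key_entropy_bits, rate_bits=ENGLISH_RATE_BITS, alphabet=ALPHABET_SIZE):
    """U = H(K) / D, where D = log2(alphabet) - rate is the redundancy per character."""
    redundancy = math.log2(alphabet) - rate_bits
    return key_entropy_bits / redundancy


# Simple substitution cipher: the key is a permutation of 26 letters, so H(K) = log2(26!).
h_key = math.log2(math.factorial(26))  # about 88.4 bits
print(h_key)
print(unicity_distance(h_key))  # roughly 27.6 characters of ciphertext
```

Beyond the unicity distance, a ciphertext-only attacker with unlimited computation could in principle identify the key; the measure says nothing about how hard that search is in practice.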

Relationship to other fields

Information theory shares deep connections with thermodynamics and statistical mechanics, as highlighted by the work of Edwin Jaynes on the maximum entropy principle. In computer science, it informs algorithm design and analysis, notably in proving the optimality of Huffman coding, and it is closely related to Kolmogorov complexity, the basis of algorithmic information theory. It also provides foundational concepts for statistical inference and Bayesian probability, as explored by David MacKay. Furthermore, it intersects with neuroscience in the study of the neural code, a research area advanced by scientists such as Horace Barlow, and with linguistics through analyses of Zipf's law in the word-frequency distributions of corpora such as the Brown Corpus.
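Huffman coding illustrates the link to algorithm analysis: it builds a prefix code of minimum expected length, and the source coding theorem bounds that length by H ≤ L < H + 1 bits per symbol. The Python below is a minimal sketch of one common heap-based construction using only the standard library; the function name and sample string are illustrative.

```python
import heapq
import math
from collections import Counter


def huffman_code(freqs):
    """Build a binary Huffman code {symbol: bitstring} from {symbol: weight}."""
    if len(freqs) == 1:  # a lone symbol still needs a one-bit codeword
        return {s: "0" for s in freqs}
    # Heap entries are (weight, tie_breaker, {symbol: partial_code}); the integer
    # tie breaker keeps tuples comparable when two weights are equal.
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(freqs.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)   # two lightest subtrees
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1
    return heap[0][2]


text = "abracadabra"
freqs = Counter(text)
code = huffman_code(freqs)
n = len(text)
avg_len = sum(freqs[s] * len(code[s]) for s in freqs) / n
h = -sum(c / n * math.log2(c / n) for c in freqs.values())
print(code)
print(h, avg_len)  # entropy <= average codeword length < entropy + 1
```

Other implementations may break ties differently and emit different codewords, but every Huffman code for the same frequencies has the same minimal expected length.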

Category:Information theory Category:Applied mathematics Category:Telecommunication theory