Hamming code

Generated by DeepSeek V3.2

Generated by DeepSeek V3.2Expansion Funnel Raw 55 → Dedup 0 → NER 0 → Enqueued 0

| Hamming code | |

|---|---|

| |

| Name | Hamming code |

| Type | Linear code |

| Block length | 2r − 1 |

| Message length | 2r − r − 1 |

| Rate | 1 − r / (2r − 1) |

| Notation | [2r − 1, 2r − r − 1, 3]2 |

| Discovered | Richard Hamming |

| Year | 1950 |

Hamming code is a family of linear error-correcting codes that can detect and correct single-bit errors in digital data. Invented by Richard Hamming at Bell Labs in the early 1950s, it was a foundational development in the field of coding theory and information theory. The code's elegant structure, based on parity-check bits placed at power-of-two positions, allows for efficient syndrome decoding. Hamming codes are perfect codes for single-error correction and have seen extensive use in computer memory systems, telecommunications, and digital communication.

Overview

Hamming codes are a class of binary codes characterized by their ability to correct a single error within a fixed-length block. The fundamental parameters are defined by an integer *r* ≥ 2, yielding a codeword length of *n* = 2r − 1 and a message length of *k* = 2r − r − 1. This structure places them among the first practical implementations of forward error correction, a concept central to reliable data transmission pioneered by Claude Shannon in his seminal work. The codes are systematic, meaning the original data bits are directly visible within the codeword, interspersed with calculated parity bits. Their minimum Hamming distance is 3, which is the property that guarantees single-error correction and double-error detection. This mathematical framework connects deeply to other constructs in discrete mathematics, such as incidence structures and projective geometry.

History

The impetus for the code's invention arose from the practical frustrations of Richard Hamming while working on the early IBM 701 computer at Bell Labs. Weekend computations on the machine, which used punched card and paper tape input, would often fail due to undetected errors in the relay-based memory systems. Motivated by these repeated failures, Hamming sought a method for the machine to automatically correct errors without operator intervention. His work, conducted around 1950, was contemporaneous with other major advances at Bell Labs, including the development of the transistor and foundational work in information theory by Claude Shannon. Hamming published his results in the Bell System Technical Journal, cementing its place in the annals of computer science and electrical engineering. This period also saw related work by Marcel J. E. Golay, who developed the Golay code.

Hamming codes

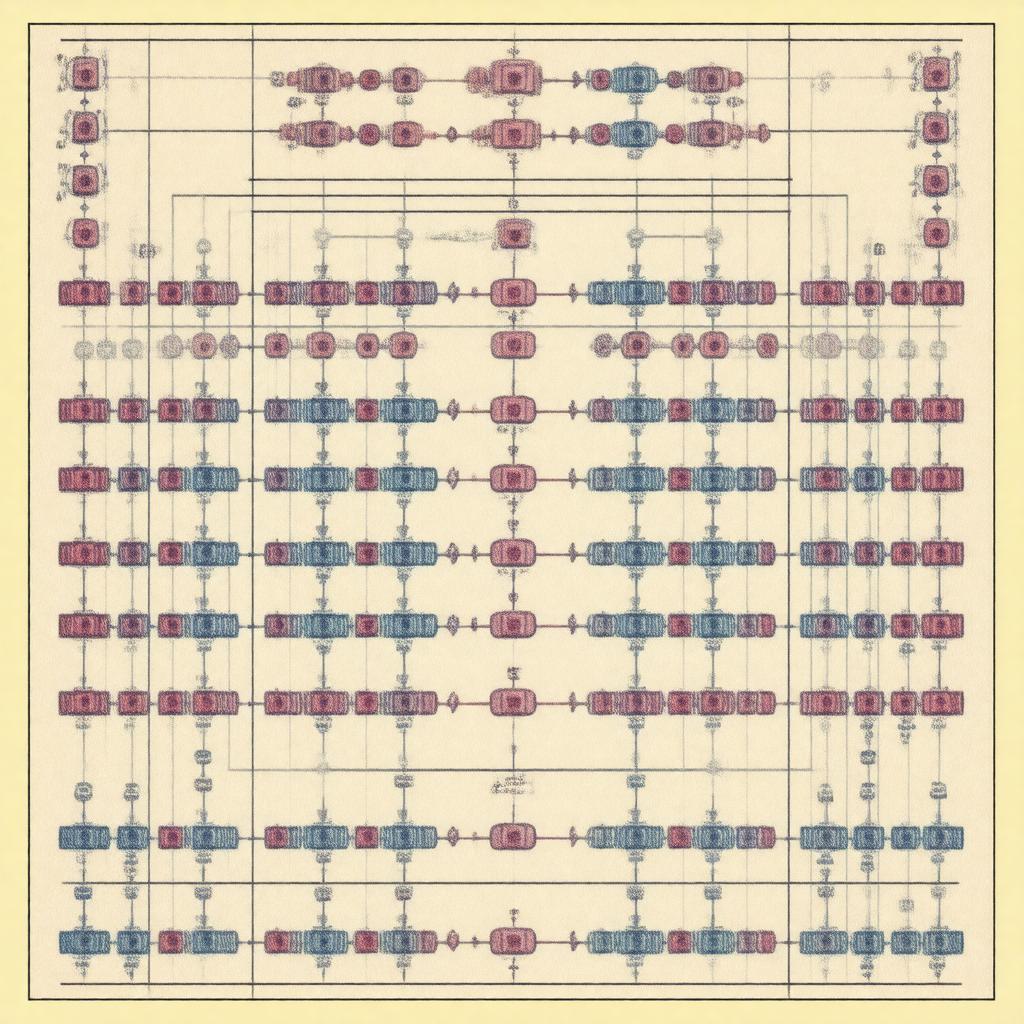

A Hamming code is completely specified by its parity-check matrix **H**. For a code of length *n* = 2r − 1, the columns of **H** consist of all non-zero binary vectors of length *r*. This construction ensures that the syndrome, computed by multiplying the received vector by **H**T, directly corresponds to the binary representation of the error position. The related dual code of a Hamming code is a simplex code, and extended Hamming codes, which add an overall parity bit, are equivalent to Reed–Muller codes of first order. The mathematical theory of these codes is deeply intertwined with the properties of finite fields, particularly GF(2), and their vector space structure.

Hamming(7,4) code

The most famous instantiation is the Hamming(7,4) code, where 4 data bits are encoded into a 7-bit codeword. In this code, bits at positions 1, 2, and 4 (powers of two) are used as parity bits, calculated over specific subsets of the three data bits placed at positions 3, 5, 6, and 7. The generator matrix **G** and parity-check matrix **H** for this code have standard forms that facilitate both encoding and the efficient syndrome decoding process. This particular code was historically significant for its use in early computer memory systems, such as those in the IBM 650 and DEC PDP-11, to protect against single-bit errors in core memory. It also serves as a canonical example in textbooks on subjects like digital design and computer organization.

General algorithm

The encoding and decoding algorithm for Hamming codes follows a systematic procedure. During encoding, parity bits are placed at positions that are powers of two within the codeword. Each parity bit is computed as the XOR sum over a specific set of data bit positions, determined by the binary representation of the position indices. Decoding involves recalculating these parity checks upon receipt to form a syndrome; a non-zero syndrome is interpreted as the binary address of the erroneous bit, which is then flipped to correct the error. This algorithm is a prime example of the practical application of concepts from Boolean algebra and modular arithmetic. The process is mathematically equivalent to solving a set of linear equations over GF(2), showcasing the power of algebraic methods in coding theory.

Applications

While largely supplanted by more powerful codes like Reed–Solomon and LDPC codes in modern high-performance systems, Hamming codes remain in use in applications where simplicity and low latency are paramount. They were historically crucial for error correction in RAM modules, notably in ECC memory for servers and workstations. The codes are also embedded in communication protocols for serial communication and some flash memory controllers. Furthermore, the principles of the Hamming code directly influenced the development of more advanced block code families and are a staple in the curriculum of institutions like MIT and Stanford University for courses in digital communication and network protocol design. Their conceptual elegance ensures their continued relevance as a teaching tool and a component in legacy and embedded systems.

Category:Error detection and correction Category:Coding theory Category:Binary codes