Rate–distortion theory

Generated by GPT-5-mini

| Rate–distortion theory | |
|---|---|
| Name | Rate–distortion theory |
| Field | Information theory |
| Founder | Claude Shannon |
| Introduced | 1959 |
| Key concepts | Source coding, lossy compression, mutual information, distortion measure |
| Notable contributors | Claude Shannon, David Slepian, Jacob Wolfowitz, Thomas Cover, Ilan Grinberg |

Rate–distortion theory provides a mathematical framework describing the minimum data rate required to represent a source within a prescribed fidelity, linking compressibility and allowable distortion. Originating in work by Claude Shannon and developed alongside results by David Slepian and Jacob Wolfowitz, the theory underpins lossy source coding methods used in practical systems designed by organizations such as Bell Labs and standardized by bodies like ISO/IEC JTC 1.

Introduction

Rate–distortion theory grew from ideas introduced by Claude Shannon in the mid‑20th century and was later expanded by scholars such as Jacob Wolfowitz and Thomas Cover, in research communities around Bell Labs and the Institute of Electrical and Electronics Engineers. It formalizes the tradeoff between bitrate and fidelity for sources modeled by probabilistic laws, including Gaussian sources and discrete-alphabet models that build on the stochastic-process work of Norbert Wiener and Andrey Kolmogorov, as well as practical signals processed by labs such as MIT Lincoln Laboratory and companies including AT&T. Connections to Shannon's channel coding theorem, to the error-correcting codes of Richard Hamming, and to later algorithmic developments influenced standards work at the International Telecommunication Union and the European Telecommunications Standards Institute.

Mathematical Formulation

Rate–distortion theory models a source as a stochastic process over an alphabet, governed by a probability law of the kind studied by Andrey Kolmogorov and Andrei Markov, and employs information measures developed by Claude Shannon and formalized by contributors like Thomas Cover. The setup uses a distortion measure d(x, x̂) that assigns a nonnegative cost to reproducing x as x̂; common choices are squared error, in the least squares tradition of Carl Friedrich Gauss, and Hamming distortion, named after Richard Hamming. The central optimization involves the mutual information I(X; X̂) between the source random variable X and its reconstruction X̂, a quantity analyzed in the text by Cover and Thomas and applied in research at Bell Labs and Bellcore. Variational techniques used in proofs draw on methods from work by Leonid Kantorovich and John von Neumann, while existence results rely on convexity inequalities dating to the era of Ludwig Boltzmann and on modern functional analysis of the kind pursued at the Courant Institute.
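In standard textbook notation, the ingredients above can be written out for a discrete source as follows. This is a sketch: the symbols p(x), p(x̂|x) and q(x̂) are assumed names for the source law, the test channel and the induced reconstruction marginal, and the subscripts on d are introduced here only for illustration.

$$
d_{\mathrm{sq}}(x,\hat{x}) = (x-\hat{x})^{2},
\qquad
d_{\mathrm{H}}(x,\hat{x}) =
\begin{cases}
0, & x = \hat{x},\\
1, & x \neq \hat{x},
\end{cases}
$$

$$
I(X;\hat{X}) = \sum_{x,\hat{x}} p(x)\,p(\hat{x}\mid x)\,\log\frac{p(\hat{x}\mid x)}{q(\hat{x})},
\qquad
q(\hat{x}) = \sum_{x} p(x)\,p(\hat{x}\mid x).
$$

With the logarithm taken to base 2, the mutual information is measured in bits per source symbol.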

Rate–Distortion Function and Properties

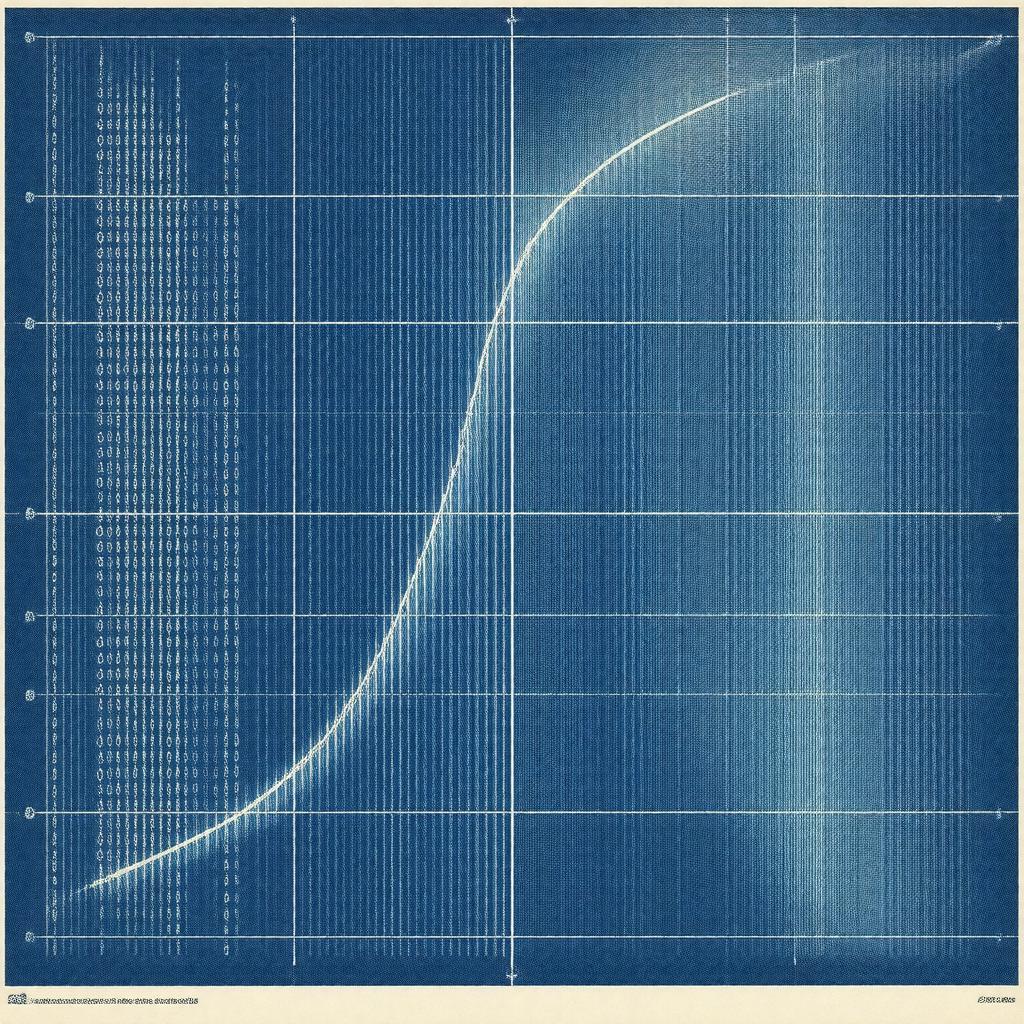

The rate–distortion function R(D) is defined as the infimum of I(X; X̂) over conditional distributions p(x̂|x) satisfying E[d(X, X̂)] ≤ D; this formulation follows principles articulated by Claude Shannon and further elaborated by Jacob Wolfowitz and David Slepian. R(D) is convex and nonincreasing in D, properties that follow from convexity arguments of the kind studied in the optimization literature at Stanford University and Massachusetts Institute of Technology. For a memoryless Gaussian source with variance σ² under squared‑error distortion, R(D) has the closed form R(D) = ½ log₂(σ²/D) bits per sample for 0 < D ≤ σ² and R(D) = 0 for D > σ², mirroring the logarithmic form of Shannon's capacity formula for the Gaussian channel. Achievability and converse bounds rely on typicality and large deviations arguments, with modern error exponents investigated by groups at Bell Labs and Microsoft Research.
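For the memoryless Gaussian source with squared-error distortion, the standard closed form is R(D) = ½ log2(σ²/D) for 0 < D ≤ σ² and zero beyond that. The sketch below evaluates it and its inverse D(R) = σ² · 2^(−2R); the function names `gaussian_rate` and `gaussian_distortion` are illustrative choices, not taken from any library.

```python
import math


def gaussian_rate(distortion: float, variance: float = 1.0) -> float:
    """R(D) in bits per sample for a memoryless Gaussian source, squared error."""
    if distortion <= 0.0 or variance <= 0.0:
        raise ValueError("distortion and variance must be positive")
    if distortion >= variance:
        # Reproducing every sample by the mean already meets the target.
        return 0.0
    return 0.5 * math.log2(variance / distortion)


def gaussian_distortion(rate: float, variance: float = 1.0) -> float:
    """Inverse relation D(R) = variance * 2^(-2R) for rate >= 0."""
    if rate < 0.0:
        raise ValueError("rate must be nonnegative")
    return variance * 2.0 ** (-2.0 * rate)


for d in (1.0, 0.5, 0.25, 0.0625):
    print(f"D = {d:<7} R(D) = {gaussian_rate(d):.3f} bits/sample")
```

Each additional bit per sample divides the achievable mean squared error by four, which is the familiar rule of roughly 6 dB per bit.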

Coding Theorems and Achievability

Coding theorems in rate–distortion theory parallel Claude Shannon's channel coding theorem and capacity results. They establish that for any D above the minimum achievable distortion there exists a sequence of codes whose rates approach R(D) arbitrarily closely while meeting the distortion constraint, and conversely that no code with rate below R(D) can meet it. Proof techniques employ random coding arguments in the style of Shannon and concentration results akin to work by Andrey Kolmogorov and Paul Lévy, with constructive algorithms informed by iterative optimization methods from Richard Bellman and matrix decomposition work related to Carl Friedrich Gauss. Practical encoders such as vector quantizers and scalar quantizers are motivated by these results and have been implemented in products by companies including Sony Corporation and Philips under standards influenced by ISO/IEC JTC 1.
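The gap between a simple practical encoder and the theoretical limit can be seen numerically. The sketch below is an illustration, not a construction from the coding theorems: it applies a 1-bit scalar quantizer to samples of a unit-variance Gaussian source and compares the empirical mean squared error with the Shannon bound D(R) = σ² · 2^(−2R) at R = 1. The reconstruction levels ±√(2/π) are the centroids of the two half-lines, and the function name and sample count are arbitrary choices.

```python
import math
import random


def one_bit_quantizer_mse(num_samples: int = 100_000, seed: int = 0) -> float:
    """Empirical MSE of a 1-bit scalar quantizer on N(0, 1) samples."""
    rng = random.Random(seed)
    # E[|X|] for X ~ N(0, 1): the centroid of each half-line.
    level = math.sqrt(2.0 / math.pi)
    total = 0.0
    for _ in range(num_samples):
        x = rng.gauss(0.0, 1.0)
        x_hat = level if x >= 0.0 else -level  # one bit: the sign of x
        total += (x - x_hat) ** 2
    return total / num_samples


shannon_bound = 2.0 ** (-2.0 * 1.0)  # D(R) at R = 1 bit, variance 1
mse = one_bit_quantizer_mse()
print(f"scalar quantizer MSE: {mse:.3f}")
print(f"Shannon bound D(1):   {shannon_bound:.3f}")
```

The quantizer's theoretical distortion is 1 − 2/π ≈ 0.363, noticeably above the bound of 0.25. Closing that gap requires coding long blocks of samples jointly, which is what the achievability proofs rely on.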

Examples and Applications

Classic examples include the memoryless binary source with Hamming distortion, named after Richard Hamming, and the continuous Gaussian source under squared error, tied to developments by Norbert Wiener and Harry Nyquist. Applications span perceptual audio codecs developed by teams at Dolby Laboratories, image codecs standardized by the Joint Photographic Experts Group, and video coding architectures influenced by research from MPEG and telecommunications engineering at Nokia and Ericsson. In machine learning contexts, rate–distortion ideas inform representation learning research at institutions such as Google Research and DeepMind, and they intersect with privacy and compression tradeoffs investigated at Carnegie Mellon University and Stanford University.
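The binary example has a well-known closed form: for a Bernoulli(p) source with Hamming distortion, R(D) = H_b(p) − H_b(D) for 0 ≤ D < min(p, 1 − p) and zero otherwise, where H_b is the binary entropy function. A minimal sketch follows, with `binary_entropy` and `bernoulli_rate` as illustrative names.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy H_b(p) in bits, with H_b(0) = H_b(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def bernoulli_rate(distortion: float, p: float) -> float:
    """R(D) in bits per symbol for a Bernoulli(p) source, Hamming distortion."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if distortion < 0.0:
        raise ValueError("distortion must be nonnegative")
    if distortion >= min(p, 1.0 - p):
        # Always guessing the more likely symbol already meets the target.
        return 0.0
    return binary_entropy(p) - binary_entropy(distortion)


for d in (0.0, 0.05, 0.11, 0.25, 0.5):
    print(f"D = {d:<5} R(D) = {bernoulli_rate(d, 0.5):.3f} bits/symbol")
```

For a fair coin, tolerating an error rate of about 11% roughly halves the required rate, from 1 bit to about 0.5 bits per symbol.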

Extensions and Generalizations

Extensions include multiterminal rate–distortion problems studied by Thomas Cover and David Slepian, successive refinement concepts linked to work at Bell Labs, and networked settings inspired by the distributed source coding problem addressed by Slepian–Wolf theory. Analyses of nonstationary and nonergodic sources build on early stochastic process results by Andrey Kolmogorov and on techniques adopted in contemporary research at Princeton University and the University of California, Berkeley. Lossy compression bounds in quantum information draw on quantum generalizations from researchers at Perimeter Institute and Caltech, while algorithmic implementations leverage convex optimization frameworks developed at MIT and numerical methods advanced at Los Alamos National Laboratory.
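One widely used numerical method for computing points on R(D) for discrete sources is the Blahut–Arimoto algorithm, an alternating-minimization scheme. The text above does not name a specific method, so this is an illustrative choice. The sketch below is a plain-Python version with a fixed slope parameter `beta` and a fixed iteration count; the function signature is hypothetical rather than taken from any library.

```python
import math


def blahut_arimoto(p_x, dist, beta, iters=200):
    """Return (rate_bits, distortion) for one point on the R(D) curve.

    p_x  : source probabilities, one per source symbol
    dist : dist[i][j] = distortion of reproducing symbol i as symbol j
    beta : nonnegative slope parameter (larger beta gives lower distortion)
    """
    n, m = len(p_x), len(dist[0])
    q = [1.0 / m] * m  # reproduction marginal q(x_hat), uniform start
    cond = [[1.0 / m] * m for _ in range(n)]  # test channel p(x_hat | x)
    for _ in range(iters):
        # Step 1: best test channel for the current marginal q.
        for i in range(n):
            w = [q[j] * math.exp(-beta * dist[i][j]) for j in range(m)]
            z = sum(w)
            cond[i] = [wj / z for wj in w]
        # Step 2: marginal induced by that test channel.
        q = [sum(p_x[i] * cond[i][j] for i in range(n)) for j in range(m)]
    distortion = sum(
        p_x[i] * cond[i][j] * dist[i][j] for i in range(n) for j in range(m)
    )
    rate = sum(
        p_x[i] * cond[i][j] * math.log2(cond[i][j] / q[j])
        for i in range(n)
        for j in range(m)
        if p_x[i] * cond[i][j] > 0.0
    )
    return rate, distortion


hamming = [[0.0, 1.0], [1.0, 0.0]]
rate, distortion = blahut_arimoto([0.5, 0.5], hamming, beta=math.log(9.0))
print(f"R = {rate:.4f} bits at D = {distortion:.4f}")
```

For the fair binary source with Hamming distortion, choosing beta = ln 9 gives D = 0.1, and the computed rate should agree with the closed form 1 − H_b(0.1) ≈ 0.531 bits. Sweeping `beta` from 0 upward traces out the whole curve.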