Gaussian distribution

Generated by GPT-5-mini


| Gaussian distribution | |
|---|---|
| Name | Gaussian distribution |
| Type | Continuous |
| Support | (-∞, ∞) |
| Parameters | mean μ, variance σ^2 |

The Gaussian distribution, also called the normal distribution, is a continuous probability distribution widely used in statistics, physics, and engineering. It models random variation in phenomena studied by James Clerk Maxwell, Albert Einstein, Carl Friedrich Gauss, and practitioners at institutions such as Bell Labs, Massachusetts Institute of Technology, University of Cambridge, Princeton University, and University of Göttingen. The distribution underlies methods used by Pierre-Simon Laplace, Andrey Kolmogorov, Ronald Fisher, Karl Pearson, and organizations like the Royal Society and the National Institute of Standards and Technology.

Definition and notation

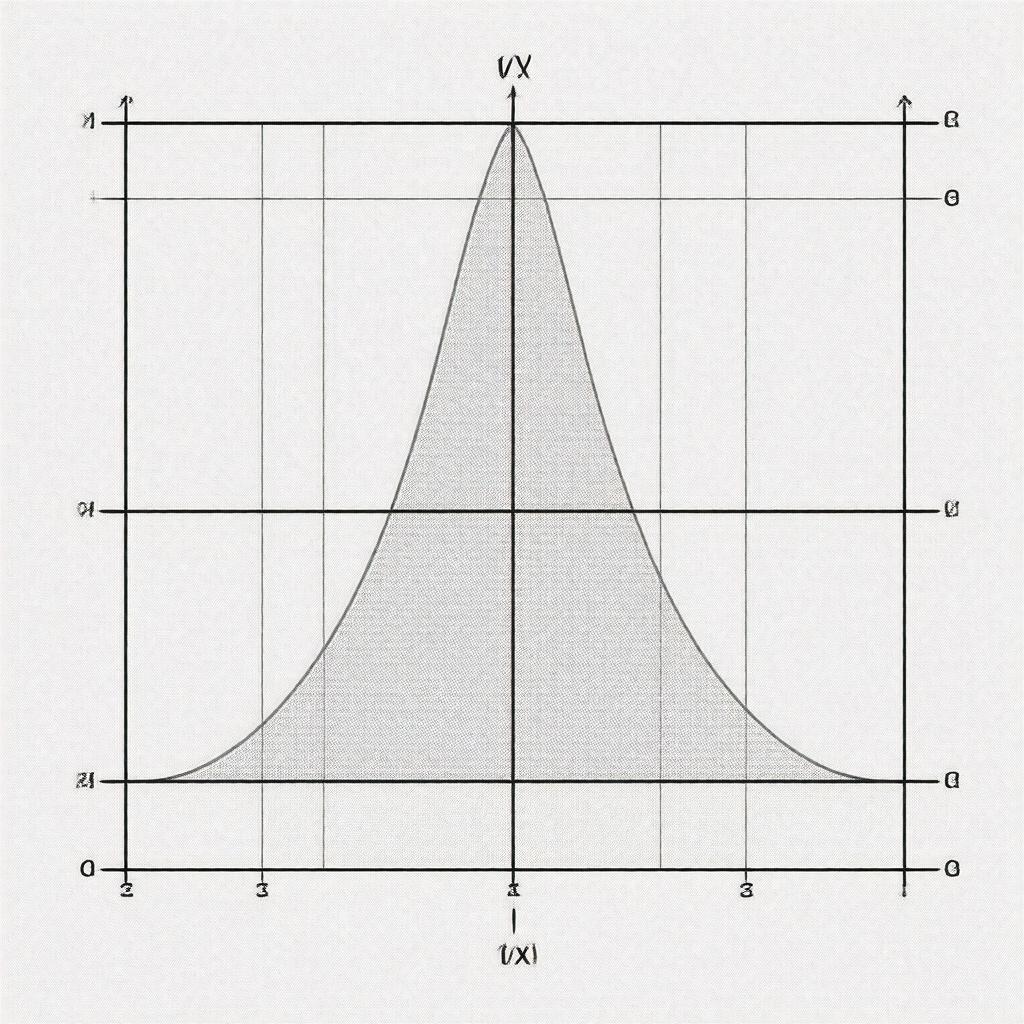

The Gaussian distribution is defined by the probability density function f(x) = (1/(σ√(2π))) exp(-(x-μ)^2/(2σ^2)), where μ∈ℝ is the location parameter (the mean) and σ>0 is the scale parameter (the standard deviation); this parameterization appears in textbooks by William Feller, Sheldon Ross, David Cox, Bradley Efron, and John Tukey. The common notation is N(μ, σ^2), as in works from Harvard University, Stanford University, Columbia University, and Yale University. The cumulative distribution function F(x) = ∫_{-∞}^x f(t) dt has no elementary closed form; in the standard case μ=0, σ=1 it is written Φ(x), so that F(x) = Φ((x-μ)/σ). Values of Φ are tabulated in resources produced by the National Bureau of Standards, Cambridge University Press, and Oxford University Press, and computed in environments at IBM and Microsoft Research.
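As a minimal sketch of the density and cumulative distribution function, the following uses only Python's standard library. The function names `normal_pdf` and `normal_cdf` are illustrative choices, not taken from any particular source, and the CDF relies on the standard identity relating the Gaussian CDF to the error function.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of N(mu, sigma^2) evaluated at x."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def normal_cdf(x, mu=0.0, sigma=1.0):
    """P(X <= x) for X ~ N(mu, sigma^2), computed via the error function."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))
```

For example, `normal_cdf(1.96)` is close to 0.975, which is why 1.96 appears as the critical value for two-sided 95% intervals.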

Properties

The Gaussian distribution is symmetric about μ, unimodal, and has all of its moments determined by μ and σ^2; these properties are discussed in monographs by Andrey Kolmogorov, Paul Lévy, Norbert Wiener, Richard Feynman, and Claude Shannon. Among continuous distributions on the real line with a given mean and variance, it maximizes differential entropy, a fact used in analyses at Bell Labs, Los Alamos National Laboratory, and Princeton Plasma Physics Laboratory, and in work by E. T. Jaynes, John von Neumann, and Harald Cramér. The characteristic function φ(t) = exp(iμt - ½σ^2 t^2) and moment-generating function M(t) = exp(μt + ½σ^2 t^2) are standard in treatments by Kolmogorov and Andrey Markov, and are implemented in libraries at National Institutes of Health, European Space Agency, NASA, and Siemens.
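The closed forms above can be checked numerically. The sketch below, with illustrative function names, evaluates the moment-generating and characteristic functions and recovers the first two raw moments, E[X] = μ and E[X^2] = μ^2 + σ^2, from finite differences of M(t) at t = 0. It also includes the differential entropy ½ ln(2πeσ^2), the value attained at the entropy maximum. The step size `h` is an arbitrary assumption.

```python
import cmath
import math


def normal_mgf(t, mu, sigma):
    """Moment-generating function M(t) = exp(mu*t + sigma^2 * t^2 / 2)."""
    return math.exp(mu * t + 0.5 * sigma ** 2 * t ** 2)


def normal_cf(t, mu, sigma):
    """Characteristic function phi(t) = exp(i*mu*t - sigma^2 * t^2 / 2)."""
    return cmath.exp(1j * mu * t - 0.5 * sigma ** 2 * t ** 2)


def normal_entropy(sigma):
    """Differential entropy (in nats) of N(mu, sigma^2); it does not depend on mu."""
    return 0.5 * math.log(2.0 * math.pi * math.e * sigma ** 2)


def moments_from_mgf(mu, sigma, h=1e-4):
    """Approximate E[X] and E[X^2] by central differences of M at t = 0."""
    up, mid, down = (normal_mgf(t, mu, sigma) for t in (h, 0.0, -h))
    first = (up - down) / (2.0 * h)
    second = (up - 2.0 * mid + down) / (h * h)
    return first, second
```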

Parameter estimation and inference

Estimators for μ and σ^2 include the sample mean and sample variance; foundational work appears in papers by Ronald Fisher, Karl Pearson, William Sealy Gosset, Jerzy Neyman, and Egon Pearson. Maximum likelihood estimation for N(μ,σ^2) yields closed forms: the sample mean for μ and the mean squared deviation with divisor n for σ^2, whereas the unbiased sample variance uses divisor n-1. These closed forms are used in algorithms at AT&T Bell Laboratories, Google Research, and Facebook AI Research, and in classical tests developed by Fisher and by Neyman and Pearson. Confidence intervals and hypothesis tests for Gaussian parameters are central in treatises by Jerzy Neyman, Egon Pearson, Harald Cramér, and Gertrude Cox, and in methodologies adopted at the Centers for Disease Control and Prevention, the World Health Organization, and United Nations statistical divisions. Robust alternatives and Bayesian inference with conjugate priors are discussed by Dennis Lindley, Bradley Efron, Thomas Bayes, and Pierre-Simon Laplace, and implemented in probabilistic frameworks by Stanford University and University of California, Berkeley.
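A minimal sketch of these estimators follows. The function names are illustrative. The interval helper assumes σ is known, which is a simplifying assumption made here so that a normal quantile can be used; with σ estimated from the data, a Student's t quantile would take the place of `z`.

```python
import math
import statistics


def fit_normal(data):
    """Return (mean, MLE variance with divisor n, unbiased variance with divisor n - 1)."""
    n = len(data)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = statistics.fmean(data)
    sum_sq = sum((x - mean) ** 2 for x in data)
    return mean, sum_sq / n, sum_sq / (n - 1)


def mean_ci_known_sigma(data, sigma, z=1.959963984540054):
    """Two-sided interval for mu when sigma is known (assumption).

    The default z is the 0.975 quantile of the standard normal,
    giving a 95% interval.
    """
    n = len(data)
    mean = statistics.fmean(data)
    half_width = z * sigma / math.sqrt(n)
    return mean - half_width, mean + half_width
```

With `data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]`, `fit_normal` gives a mean of 5, an MLE variance of 4, and an unbiased variance of 32/7.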

Transformations and related distributions

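Linear (more generally, affine) transformations of Gaussian variables produce Gaussian variables: if X ~ N(μ, σ^2) and a ≠ 0, then aX + b ~ N(aμ + b, a^2σ^2). This property is exploited in theories by Claude Shannon, Norbert Wiener, and Hendrik Lorentz, and in control systems at General Electric, Siemens, and Honeywell. Sums of squares of independent standard Gaussian variables follow chi-squared distributions, and noncentral chi-squared distributions when the variables have nonzero means; these were used by Ronald Fisher and Harald Cramér. The ratio of a standard Gaussian to the square root of an independent chi-squared variable divided by its degrees of freedom follows Student's t-distribution, and the ratio of two independent standard Gaussians follows the Cauchy distribution, treated by William Sealy Gosset and Augustin-Louis Cauchy respectively. Sums of independent Gaussian variables are Gaussian (equivalently, the convolution of Gaussian densities is a Gaussian density), a stability property related to the Central Limit Theorem studied by Pierre-Simon Laplace, Andrey Kolmogorov, and Pafnuty Chebyshev, and applied in analyses at Bell Labs and Los Alamos National Laboratory.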
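The closure rules for scaling, shifting, and adding independent Gaussian variables can be sketched as parameter arithmetic, with a small Monte Carlo check alongside. All function names, the sample size, and the seed below are illustrative assumptions.

```python
import random


def affine_params(mu, var, a, b):
    """Mean and variance of a*X + b when X ~ N(mu, var)."""
    return a * mu + b, a * a * var


def sum_params(mu1, var1, mu2, var2):
    """Mean and variance of X + Y for independent X ~ N(mu1, var1), Y ~ N(mu2, var2)."""
    return mu1 + mu2, var1 + var2


def simulate_sum(mu1, var1, mu2, var2, n=100_000, seed=0):
    """Monte Carlo estimate of the mean and variance of X + Y."""
    rng = random.Random(seed)
    sd1, sd2 = var1 ** 0.5, var2 ** 0.5
    draws = [rng.gauss(mu1, sd1) + rng.gauss(mu2, sd2) for _ in range(n)]
    mean = sum(draws) / n
    var = sum((d - mean) ** 2 for d in draws) / n
    return mean, var
```

Note that variances add while standard deviations do not: for variances 4 and 9 the sum has variance 13, so its standard deviation is about 3.61 rather than 5.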

Multivariate and conditional Gaussian

The multivariate normal distribution N(μ, Σ) generalizes the Gaussian to random vectors, with mean vector μ and covariance matrix Σ; foundational expositions appear in works by Harold Hotelling, Andrey Kolmogorov, C. R. Rao, and Ronald Fisher, and the distribution is implemented in software from MathWorks, R Project, Python Software Foundation, and SAS Institute. Marginal and conditional distributions of a multivariate normal are again normal, and these properties, together with linear regression and Gaussian processes, are central to research by Carl Edward Rasmussen, George E. P. Box, Peter J. Green, and David MacKay, and used in projects at Google DeepMind, Microsoft Research, IBM Research, and Amazon Web Services.
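For the bivariate case, the conditional distribution has a simple closed form: if (X1, X2) ~ N(μ, Σ), then X1 given X2 = x2 is Gaussian with mean μ1 + (Σ12/Σ22)(x2 - μ2) and variance Σ11 - Σ12^2/Σ22. The sketch below implements that formula in plain Python; the function name and argument layout are illustrative assumptions.

```python
def conditional_bivariate(mu, cov, x2):
    """Mean and variance of X1 given X2 = x2 for (X1, X2) ~ N(mu, cov).

    mu is a pair (mu1, mu2); cov is [[s11, s12], [s12, s22]] with s22 > 0.
    """
    mu1, mu2 = mu
    (s11, s12), (s21, s22) = cov
    if s12 != s21:
        raise ValueError("covariance matrix must be symmetric")
    if s22 <= 0:
        raise ValueError("variance of X2 must be positive")
    slope = s12 / s22  # regression coefficient of X1 on X2
    cond_mean = mu1 + slope * (x2 - mu2)
    cond_var = s11 - slope * s12  # never exceeds the marginal variance s11
    return cond_mean, cond_var
```

With unit variances and correlation 0.8, observing x2 = 1 gives a conditional mean of 0.8 and a conditional variance of 0.36. The slope term is the same coefficient that appears in the linear regression of X1 on X2.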

Applications and examples

Gaussian models are ubiquitous in signal processing, thermodynamics, finance, and measurement theory, with applications developed by Claude Shannon, Norbert Wiener, John von Neumann, Ludwig Boltzmann, and Louis Bachelier. In physics, Gaussian profiles describe diffusion and Brownian motion studied by Albert Einstein, Marian Smoluchowski, and Robert Brown; in engineering, they appear in noise modeling at AT&T, Bell Labs, NASA, and the European Organisation for Nuclear Research. In econometrics and actuarial science, Gaussian models appear in work by John Maynard Keynes, Paul Samuelson, Milton Friedman, and Edward J. Kane; in machine learning, Gaussian mixtures, Gaussian processes, and kernel methods are used at Google Research, DeepMind, OpenAI, and universities such as Stanford University and Massachusetts Institute of Technology.

History and development

Early recognition of the Gaussian form dates to work by Abraham de Moivre and Pierre-Simon Laplace; formalization and naming are associated with Carl Friedrich Gauss and subsequent treatments by Adrien-Marie Legendre, Peter Gustav Lejeune Dirichlet, Andrey Kolmogorov, Ronald Fisher, Karl Pearson, and Harald Cramér. The distribution's role in the Central Limit Theorem and statistical inference was advanced by contributions at École Polytechnique, University of Göttingen, University of Cambridge, Trinity College, Cambridge, Royal Society, and research groups at Bell Labs and Los Alamos National Laboratory.