Information Theory

Generated by GPT-5-mini


| Information Theory | |

|---|---|

| |

| Name | Information Theory |

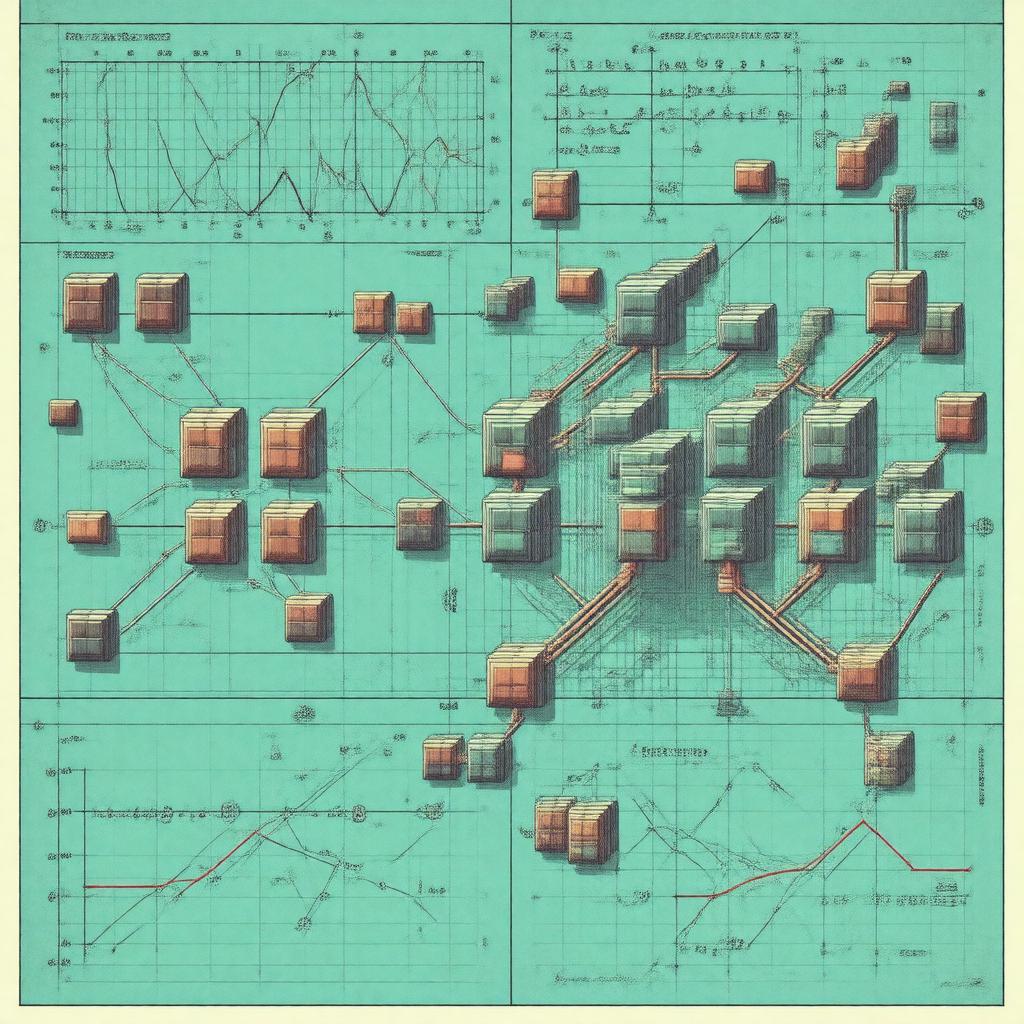

| Caption | Mathematical abstraction of signal encoding |

| Field | Shannon Wiener Nyquist Hartley |

| Introduced | 1948 |

| Notable contributors | Shannon, Wiener, Nyquist, Hartley, Turing, von Neumann, Huffman, Hamming, Weil |

Information Theory is a mathematical framework for quantifying, encoding, transmitting, and compressing information, developed to model communication systems and uncertainty. It provides rigorous limits and constructive algorithms that influenced research and practice at Bell Telephone Laboratories (Bell Labs), engineering at MIT, and subsequent developments in computing, cryptography, and statistical inference. The field connects to institutions such as IAS, RAND, Stanford, Princeton, and Harvard.

Introduction

Information Theory formalizes concepts originally motivated by practical problems at Bell Laboratories and theoretical problems at Princeton and MIT. Early work by Shannon synthesized prior results from Hartley and Nyquist and was concurrent with foundational contributions from Wiener in cybernetics and Turing in computability. The field’s aims intersect with research agendas at Bell Labs, AT&T, IBM Research, Microsoft Research, and national laboratories such as Los Alamos.

Mathematical Foundations

The theory is built on probability theory and measure theory, with influences from the mathematics of the École Normale Supérieure and Weil and from the axiomatic probability of Kolmogorov. Proofs of Shannon’s coding theorems use large-deviations techniques, related to analytic methods of the Ramanujan era, and limit theorems from Markov and Kolmogorov. Linear algebra and functional analysis from von Neumann underpin the spectral approaches and operator-theoretic formulations used in source models at Stanford and Caltech. Optimization tools from Dantzig and convex analysis connected to Kantorovich support code design and the rate–distortion theory explored at Bell Labs.

Core Concepts and Measures

Entropy, as introduced by Shannon, quantifies the average uncertainty of a random variable and relates to combinatorial bounds studied earlier by Hartley; relative entropy (Kullback–Leibler divergence) measures the discrepancy between two probability distributions and connects to statistical work by Kullback and Leibler. Mutual information, the reduction in uncertainty about one variable from observing another, underlies the channel capacity results expanded by researchers at Bell Telephone Laboratories, and it has an algorithmic counterpart in the Kolmogorov complexity and algorithmic information theory of Chaitin and Kolmogorov. Fisher information and Cramér–Rao bounds echo statistical traditions at Cambridge and Oxford from figures like Fisher and Cramér. Entropy rates for stochastic processes use ergodic theory developed by Morse, Birkhoff, and von Neumann; spectral measures tie to work by Wiener on signal processing.
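As a rough illustration of the three discrete measures named above, the following Python sketch computes entropy, relative entropy, and mutual information in bits. The function names and the example distributions are illustrative assumptions, not taken from any particular library or from the original article.

```python
import math

def entropy(p):
    """Shannon entropy H(X) in bits of a probability vector p."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

def kl_divergence(p, q):
    """Relative entropy D(p || q) in bits.

    Assumes q[i] > 0 wherever p[i] > 0; otherwise the divergence is infinite
    and this sketch would raise an error.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def mutual_information(joint):
    """Mutual information I(X;Y) in bits from a joint table joint[x][y]."""
    px = [sum(row) for row in joint]          # marginal distribution of X
    py = [sum(col) for col in zip(*joint)]    # marginal distribution of Y
    return sum(
        pxy * math.log2(pxy / (px[i] * py[j]))
        for i, row in enumerate(joint)
        for j, pxy in enumerate(row)
        if pxy > 0
    )

# Hypothetical example distributions.
fair_coin = [0.5, 0.5]
biased_coin = [0.9, 0.1]
independent = [[0.25, 0.25], [0.25, 0.25]]   # X and Y independent
identical = [[0.5, 0.0], [0.0, 0.5]]         # Y is a copy of X

print(entropy(fair_coin))                    # a fair coin carries 1 bit
print(entropy(biased_coin))                  # less than 1 bit
print(kl_divergence(biased_coin, fair_coin))
print(mutual_information(independent))       # 0 bits: nothing shared
print(mutual_information(identical))         # 1 bit: equals H(X)
```

The two joint tables mark the extremes: independent variables share no information, while a variable observed perfectly shares all of its entropy.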

Source and Channel Coding

The Huffman coding algorithm for lossless compression was developed by Huffman; arithmetic coding and Lempel–Ziv algorithms trace to contributors at IBM Research and Waterloo. Error-detecting and error-correcting codes grew from Hamming’s work at Bell Labs and from later constructions by Berlekamp, Gallager, Zyablov, and Pless, supported by advances at Lincoln Laboratory. Channel capacity theorems exploit random coding arguments that evolved through collaborations at Princeton and Bell Labs and were extended into network information theory by researchers at Stanford and Berkeley such as Cover and Thomas. Modern capacity-approaching constructions include low-density parity-check codes, introduced by Gallager and later revived by MacKay, and turbo codes, introduced by Berrou and developed further by industrial labs and university groups.
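To make the source-coding idea concrete, here is a minimal sketch of Huffman coding in Python. It assumes symbol weights are supplied as a dictionary; the helper names `huffman_code`, `encode`, and `decode` are hypothetical and chosen for illustration only.

```python
import heapq
from collections import Counter

def huffman_code(freqs):
    """Build a prefix code from a {symbol: weight} mapping.

    Returns a {symbol: bitstring} dictionary.
    """
    if len(freqs) == 1:
        # Degenerate case: a single symbol still needs a one-bit codeword.
        return {sym: "0" for sym in freqs}
    # Heap entries are (weight, tiebreak, {symbol: partial codeword}).
    # The unique integer tiebreak keeps the dictionaries from being compared.
    heap = [(w, i, {sym: ""}) for i, (sym, w) in enumerate(freqs.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w0, _, c0 = heapq.heappop(heap)   # lightest subtree
        w1, _, c1 = heapq.heappop(heap)   # second-lightest subtree
        # Merging two subtrees prepends one bit to every codeword in each.
        merged = {s: "0" + c for s, c in c0.items()}
        merged.update({s: "1" + c for s, c in c1.items()})
        heapq.heappush(heap, (w0 + w1, next_id, merged))
        next_id += 1
    return heap[0][2]

def encode(text, code):
    """Concatenate the codewords of each symbol in text."""
    return "".join(code[ch] for ch in text)

def decode(bits, code):
    """Decode greedily; this works because no codeword prefixes another."""
    inverse = {cw: sym for sym, cw in code.items()}
    out, buf = [], ""
    for b in bits:
        buf += b
        if buf in inverse:
            out.append(inverse[buf])
            buf = ""
    return "".join(out)

text = "abracadabra"
code = huffman_code(Counter(text))
bits = encode(text, code)
print(code)
print(len(bits), "bits instead of", 8 * len(text))
print(decode(bits, code) == text)
```

Frequent symbols receive shorter codewords, so the average codeword length stays within one bit of the source entropy per symbol. The exact bit patterns depend on how ties are broken, but the total encoded length does not.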

Applications and Interdisciplinary Impact

Practically, Information Theory underlies compression standards like JPEG and MPEG, developed by expert groups including the Moving Picture Experts Group (MPEG), and interoperable protocols standardized by ITU and EBU. Cryptography, influenced by Shannon’s secrecy systems, advanced through work at NSA and academic centers such as Stanford and Berkeley with figures like Diffie and Hellman. Machine learning and statistical estimation groups at Google Research, DeepMind, OpenAI, and university labs reuse entropy and mutual information for representation learning, researched by teams including LeCun, Hinton, and Ng. Bioinformatics applications draw on Human Genome Project datasets; neuroscience collaborations at Max Planck and the Allen Institute apply coding theory concepts to neural coding problems advanced by researchers influenced by Hubel and Wiesel. Economics and finance groups at LSE and Harvard use information-theoretic measures for market microstructure and portfolio selection, while control theorists at Caltech and ETH Zurich combine rate–distortion ideas with results from Wiener and Kalman.

Historical Development and Key Figures

Foundational milestones include Shannon’s 1948 landmark paper, "A Mathematical Theory of Communication", which synthesized work of Nyquist, Hartley, and others during a postwar research boom at Bell Labs and in academic programs at MIT and Princeton. Subsequent generations, including Huffman, Hamming, Gallager, Cover, MacKay, and Berrou, expanded coding theory, while theoreticians like Viterbi and Reed linked algebraic structures from the Galois theory of finite fields to practical decoders. Institutional influence came from Bell Labs, IBM Research, AT&T, and university centers at Stanford, Berkeley, Princeton, and MIT. Prizes and recognition connected to the field include awards from IEEE and honors awarded to contributors like Shannon and Wiener.