Cauchy distribution

Generated by GPT-5-mini

| Cauchy distribution | |
|---|---|
| Name | Cauchy distribution |
| Type | Continuous probability distribution |
| Support | (−∞, ∞) |
| Parameters | location, scale |

The Cauchy distribution is a continuous probability distribution notable for heavy tails and undefined mean and variance, named after Augustin-Louis Cauchy. It arises in contexts ranging from resonance phenomena in physics, where it is known as the Lorentzian or Breit-Wigner line shape, to ratio distributions such as the quotient of two independent zero-mean normal variables, and it played a role in debates involving Karl Pearson and Ronald Fisher about statistical estimation. The distribution serves as a standard counterexample in probabilistic limit theory, including the work of Paul Lévy, Andrey Kolmogorov, William Feller, and Harald Cramér: because its mean does not exist, the law of large numbers and the classical central limit theorem do not apply to it.

Definition and basic properties

The distribution is defined by two parameters: a real location parameter, commonly written x0, which shifts the density along the real line, and a positive scale parameter, commonly written γ, which sets its spread and equals half the width of the density at half its maximum height. The case x0 = 0, γ = 1 is called the standard Cauchy distribution. The family is closed under convolution: the sum of independent Cauchy variables is again Cauchy, with the locations and the scales each adding. This makes it a stable distribution in the sense investigated by Paul Lévy and William Feller, with stability index 1. Its tails decay only like 1/x², far more slowly than those of the normal distribution, which is why it features in the robust-statistics literature associated with John Tukey and why classical central-limit statements, which require finite variance, do not apply to it.
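The weight of the tails can be made concrete with a short calculation. The sketch below compares two-sided tail probabilities of a Cauchy variable and a normal variable; the function names are illustrative, and setting the Cauchy scale and the normal standard deviation both to 1 is an assumption made only for the comparison, since the two parameters are not directly equivalent.

```python
import math


def cauchy_two_sided_tail(k, gamma=1.0):
    """P(|X - x0| > k) for a Cauchy variable with scale gamma."""
    return 1.0 - (2.0 / math.pi) * math.atan(k / gamma)


def normal_two_sided_tail(k, sigma=1.0):
    """P(|Z - mu| > k) for a normal variable with standard deviation sigma."""
    return math.erfc(k / (sigma * math.sqrt(2.0)))


# Print both tail probabilities side by side for growing thresholds.
for k in (1, 3, 10, 100):
    print(f"k={k:>3}  Cauchy: {cauchy_two_sided_tail(k):.3e}  "
          f"normal: {normal_two_sided_tail(k):.3e}")
```

For large k the Cauchy tail behaves like 2γ/(πk), so it shrinks only in proportion to 1/k, while the normal tail falls off faster than any power of k.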

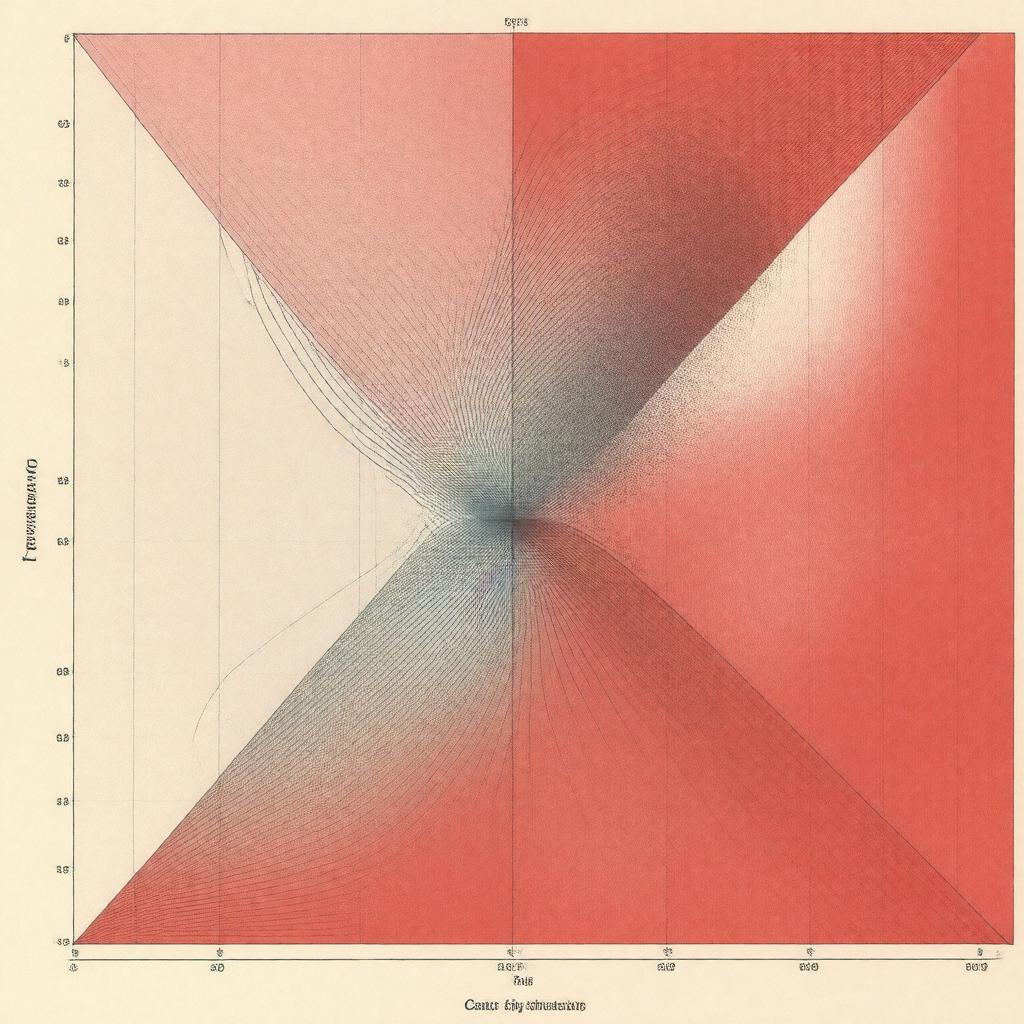

Probability density and cumulative distribution

The probability density function has a simple rational form: for location x0 and scale γ > 0, f(x) = 1 / (πγ [1 + ((x − x0)/γ)²]). Like the normal density it is symmetric and bell-shaped about its center, but its tails are much heavier. The cumulative distribution function is expressed with the inverse tangent, F(x) = 1/2 + arctan((x − x0)/γ)/π, and inverting it gives the quantile function Q(p) = x0 + γ tan(π(p − 1/2)). Plots of the density are commonly drawn against the normal curve to illustrate the contrast in tail behavior.
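As a minimal sketch, the density, the cumulative distribution function, and its inverse can be written directly from the standard closed forms. The function names and the parameter names `x0` (location) and `gamma` (scale) are choices made for this example and are not taken from any particular library.

```python
import math


def cauchy_pdf(x, x0=0.0, gamma=1.0):
    """Density 1 / (pi * gamma * (1 + ((x - x0) / gamma)^2))."""
    z = (x - x0) / gamma
    return 1.0 / (math.pi * gamma * (1.0 + z * z))


def cauchy_cdf(x, x0=0.0, gamma=1.0):
    """Cumulative distribution function 1/2 + arctan((x - x0) / gamma) / pi."""
    return 0.5 + math.atan((x - x0) / gamma) / math.pi


def cauchy_quantile(p, x0=0.0, gamma=1.0):
    """Inverse of the CDF for 0 < p < 1."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    return x0 + gamma * math.tan(math.pi * (p - 0.5))


# Quartiles of a Cauchy law with location 2 and scale 3.
print(cauchy_quantile(0.25, 2.0, 3.0), cauchy_quantile(0.75, 2.0, 3.0))
```

Because the quantile function is available in closed form, feeding it uniform random numbers on (0, 1) is a simple way to generate Cauchy variates.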

Parameterizations and variants

Beyond the standard location–scale parameterization, the distribution has multivariate and wrapped forms. The multivariate Cauchy distribution is the multivariate Student t distribution with one degree of freedom; it is often compared with the multivariate normal distribution and is likewise a stable law in the sense studied by Paul Lévy. The wrapped Cauchy distribution, obtained by wrapping a Cauchy variable around the unit circle, is used in circular statistics for angular data, alongside models such as the von Mises distribution. Mixtures and truncated forms are discussed in the mixture-model literature associated with Karl Pearson and Geoffrey McLachlan.
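The wrapped form has a closed-form density on the circle. The sketch below evaluates it using the concentration ρ = exp(−γ) and, as a sanity check, compares it with a direct sum of Cauchy densities shifted by multiples of 2π. The function names and the truncation length `terms` are assumptions of this example.

```python
import math


def wrapped_cauchy_pdf(theta, mu=0.0, gamma=1.0):
    """Closed-form wrapped Cauchy density on the circle (theta in radians)."""
    rho = math.exp(-gamma)
    denom = 1.0 + rho * rho - 2.0 * rho * math.cos(theta - mu)
    return (1.0 - rho * rho) / (2.0 * math.pi * denom)


def wrapped_by_summation(theta, mu=0.0, gamma=1.0, terms=20000):
    """Truncated sum of Cauchy densities at theta + 2*pi*n, for comparison."""
    total = 0.0
    for n in range(-terms, terms + 1):
        d = theta - mu + 2.0 * math.pi * n
        total += gamma / (math.pi * (gamma * gamma + d * d))
    return total


def integrate_over_circle(pdf, steps=2000):
    """Midpoint-rule integral of pdf over [0, 2*pi)."""
    h = 2.0 * math.pi / steps
    return h * sum(pdf((i + 0.5) * h) for i in range(steps))
```

The summation converges slowly because of the heavy tails, which is one reason the closed form is preferred in practice.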

Moments, median, and characteristic function

Unlike the normal distribution, the Cauchy distribution has no mean and no variance: the integral defining the mean does not converge absolutely, and the second moment is infinite. It is a standard cautionary example in courses on estimation. The median and mode both equal the location parameter x0, and the quartiles lie at x0 ± γ, so the interquartile range is 2γ. Its characteristic function has the simple exponential form φ(t) = exp(i x0 t − γ|t|), which is not differentiable at t = 0, reflecting the missing mean. Characteristic-function methods of the kind developed by Paul Lévy, and used in the limit-theorem proofs of William Feller and Harald Cramér, make the distribution's stability properties easy to verify.
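The exponential form of the characteristic function makes two consequences easy to check numerically. The sketch below assumes the standard form exp(i·x0·t − γ|t|), with `x0` as location and `gamma` as scale, and uses illustrative function names. It relies on the general facts that the characteristic function of a sum of independent variables is the product of their characteristic functions, and that the mean of n independent copies has characteristic function φ(t/n)ⁿ.

```python
import cmath


def cauchy_cf(t, x0=0.0, gamma=1.0):
    """Characteristic function exp(i*x0*t - gamma*|t|) of a Cauchy law."""
    return cmath.exp(1j * x0 * t - gamma * abs(t))


def cf_of_sum(t, params):
    """Characteristic function of a sum of independent Cauchy variables.

    params is a list of (x0, gamma) pairs, one per summand.
    """
    result = 1.0 + 0.0j
    for x0, gamma in params:
        result *= cauchy_cf(t, x0, gamma)
    return result


def cf_of_sample_mean(t, n, x0=0.0, gamma=1.0):
    """Characteristic function of the mean of n iid Cauchy(x0, gamma) variables."""
    return cauchy_cf(t / n, x0, gamma) ** n
```

Evaluating `cf_of_sample_mean` for any n returns the same value as `cauchy_cf` with the same parameters, which is the characteristic-function statement that averaging Cauchy observations does not reduce their spread.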

Relations to other distributions and limit theorems

The standard Cauchy law is the distribution of the ratio of two independent standard normal variates, and it coincides with Student's t distribution with one degree of freedom. It is the special case with stability index 1 of the family of stable distributions classified by Paul Lévy. Because the average of n independent Cauchy variables has the same distribution as a single one, sample means do not concentrate as n grows, and the distribution lies outside the normal domain of attraction described by the central limit theorem as formalized by Aleksandr Lyapunov and Andrey Kolmogorov. Connections to the Lévy distribution and to other alpha-stable laws are explored in the work of Benoît Mandelbrot.
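The ratio representation can be checked by simulation. The following sketch draws pairs of independent standard normal variates, forms their ratios, and compares the empirical share of ratios within a threshold against the standard Cauchy value (2/π)·arctan(k). The function names, sample size, and seed are arbitrary choices for illustration.

```python
import math
import random


def ratio_of_normals(n, seed=0):
    """Return n ratios of independent standard normal draws."""
    rng = random.Random(seed)
    ratios = []
    while len(ratios) < n:
        num = rng.gauss(0.0, 1.0)
        den = rng.gauss(0.0, 1.0)
        if den != 0.0:  # guard against an exact zero denominator
            ratios.append(num / den)
    return ratios


def empirical_fraction_within(samples, k):
    """Share of samples with absolute value at most k."""
    return sum(1 for x in samples if abs(x) <= k) / len(samples)


def cauchy_fraction_within(k):
    """P(|X| <= k) for a standard Cauchy variable."""
    return (2.0 / math.pi) * math.atan(k)


samples = ratio_of_normals(100_000, seed=1)
for k in (1.0, 3.0, 10.0):
    print(k, empirical_fraction_within(samples, k), cauchy_fraction_within(k))
```

Comparing probabilities of intervals rather than sample means is deliberate: the sample mean of the ratios does not settle down, whereas empirical proportions do.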

Statistical inference and estimation

Estimation of the location parameter featured in the historical debate between Ronald Fisher and Karl Pearson over maximum likelihood and the method of moments; the latter is unavailable here because the moments do not exist. Robust estimators of the kind advocated by Peter Huber and John Tukey, such as the sample median, are often used because the sample mean is not consistent. Maximum likelihood estimation has nonstandard features: the likelihood equation for the location can have several roots, so numerical maximization needs a good starting value such as the sample median. Bayesian treatments, with priors in the tradition of Thomas Bayes and Harold Jeffreys, are used to obtain posterior inferences for the location. Hypothesis tests and confidence-set procedures must account for heavy tails, as discussed by David Cox and in applied expositions by Bradley Efron concerning bootstrap methods.
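One way to carry out location estimation in practice is sketched below, under the simplifying assumption that the scale `gamma` is known. The sample median gives a starting value, and a reweighted-average fixed-point iteration, equivalent to the EM update for a Student t location with one degree of freedom, then climbs to a nearby root of the likelihood equation. This is one possible scheme rather than the only one, and all function names are illustrative.

```python
import math
import random
import statistics


def sample_cauchy(n, x0, gamma, seed=0):
    """Draw n Cauchy(x0, gamma) variates by inverting the CDF."""
    rng = random.Random(seed)
    return [x0 + gamma * math.tan(math.pi * (rng.random() - 0.5))
            for _ in range(n)]


def location_score(data, x0, gamma):
    """Derivative of the log-likelihood with respect to the location."""
    return sum(2.0 * (x - x0) / (gamma ** 2 + (x - x0) ** 2) for x in data)


def location_mle(data, gamma, tol=1e-10, max_iter=1000):
    """Fixed-point iteration for the location, started at the sample median."""
    x0 = statistics.median(data)
    for _ in range(max_iter):
        # Observations far from the current estimate get small weights.
        weights = [1.0 / (gamma ** 2 + (x - x0) ** 2) for x in data]
        new_x0 = sum(w * x for w, x in zip(weights, data)) / sum(weights)
        if abs(new_x0 - x0) < tol:
            return new_x0
        x0 = new_x0
    return x0


data = sample_cauchy(5001, x0=3.0, gamma=1.0, seed=42)
print("mean  :", statistics.fmean(data))   # unreliable for Cauchy data
print("median:", statistics.median(data))
print("MLE   :", location_mle(data, gamma=1.0))
```

Starting at the median matters because the likelihood can have several local maxima; the iteration only guarantees convergence to a stationary point near the starting value.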

Applications and examples

Practical appearances include resonance phenomena in physics, where the density describes the Lorentzian (Breit-Wigner) line shape of spectral lines and resonances; fits to empirical data in finance, examined in studies by Benoît Mandelbrot and Eugene Fama; and circular-data modeling in oceanography and meteorology, where the wrapped form is used. Pedagogical examples comparing sample behavior with that of the normal distribution are common in textbooks by William Feller and George Casella, and the distribution continues to serve as a counterexample illustrating the necessity of moment conditions in asymptotic theory.