Normal distribution

Generated by GPT-5-mini

| Normal distribution | |
|---|---|
| Name | Normal distribution |
| Other names | Gaussian distribution, Laplace–Gauss distribution |
| Type | continuous |
| Support | (−∞, ∞) |
| Parameters | mean μ, variance σ² |

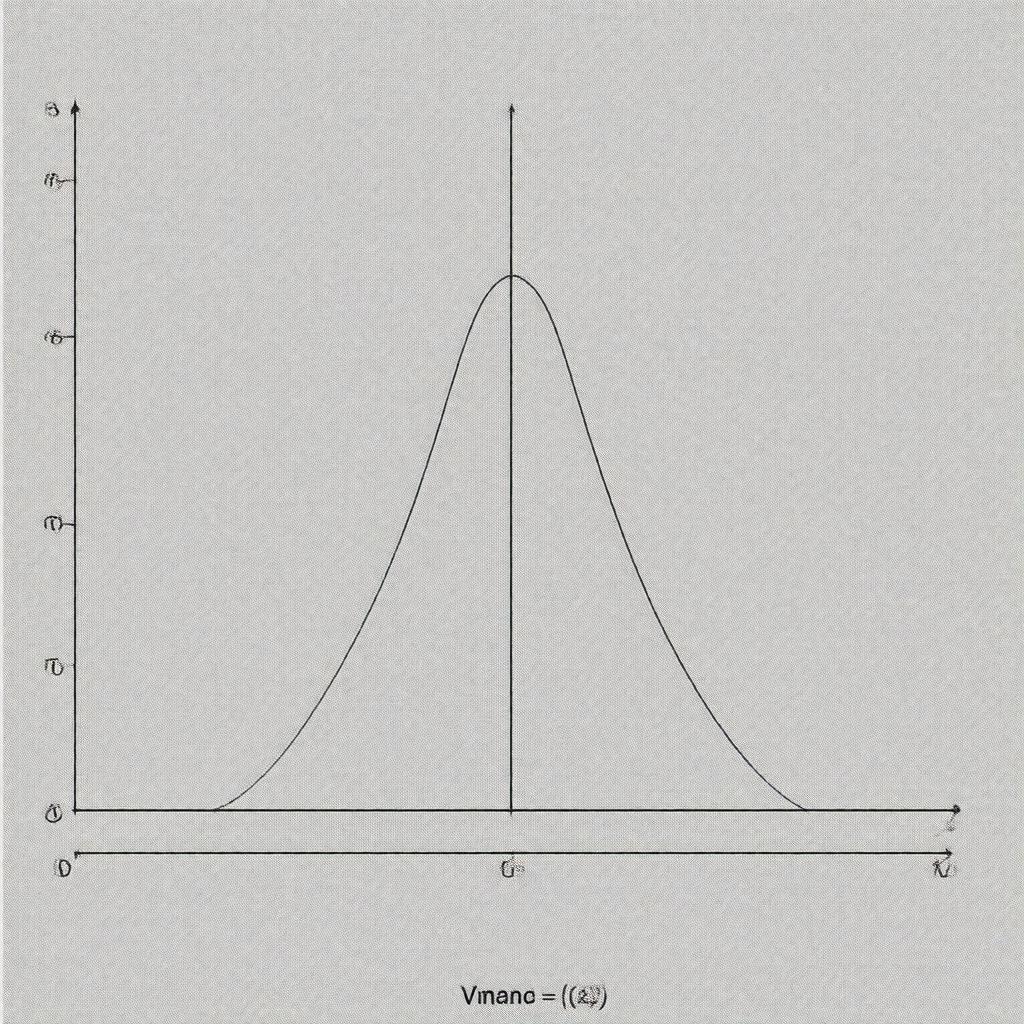

The normal distribution is a continuous probability distribution characterized by its symmetric bell-shaped curve. It grew out of the work of Abraham de Moivre, Pierre-Simon Laplace, and Carl Friedrich Gauss, and it recurs throughout empirical studies, from Francis Galton’s anthropometric investigations to modern applications in the statistical tradition of Karl Pearson. Its mathematical development influenced the methods used at institutions such as the Royal Society, the University of Göttingen, and the University of Cambridge, and it underpins statistical techniques used by organizations including the International Monetary Fund, the World Health Organization, and the National Aeronautics and Space Administration.

Definition and basic properties

The distribution is defined for real-valued variables, with a density determined by the mean μ and variance σ²: f(x) = exp(−(x − μ)² / (2σ²)) / (σ√(2π)). This form was formalized in work connected to Carl Friedrich Gauss’s curve-fitting for astronomical observations at the University of Göttingen and to Pierre-Simon Laplace’s analytic probability methods at the École Normale Supérieure. Key properties include symmetry about μ, unimodality, and closure under affine transformations (if X is normal, so is aX + b for any a ≠ 0). These features were exploited by Ronald Fisher, Jerzy Neyman, and Abraham Wald in hypothesis testing and interval estimation at institutions such as the University of Cambridge and Columbia University. The distribution’s moment-generating function and characteristic function were developed in contexts involving the probability theory of Andrey Kolmogorov and Paul Lévy, and its role in error propagation links to techniques used at CERN and Bell Labs.
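The density and the properties listed above can be checked numerically. The following is a minimal Python sketch, not a reference implementation; the function names `normal_pdf` and `affine_params` and the example values are illustrative assumptions.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of N(mu, sigma^2): exp(-(x - mu)^2 / (2 sigma^2)) / (sigma sqrt(2 pi))."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def affine_params(mu, sigma, a, b):
    """If X ~ N(mu, sigma^2) and a != 0, then a*X + b ~ N(a*mu + b, (|a|*sigma)^2)."""
    if a == 0:
        raise ValueError("a must be nonzero")
    return a * mu + b, abs(a) * sigma


# Illustrative values (assumed, not taken from any data set).
mu, sigma = 2.0, 1.5

# Symmetry about mu: points equally far from mu have equal density.
left = normal_pdf(mu - 0.7, mu, sigma)
right = normal_pdf(mu + 0.7, mu, sigma)

# Unimodality: the density is highest at mu.
peak = normal_pdf(mu, mu, sigma)

# Affine closure: parameters of -2*X + 1 as (mean, standard deviation).
new_mu, new_sigma = affine_params(mu, sigma, a=-2.0, b=1.0)
```

Note that the helper works with the standard deviation σ rather than the variance σ², so an affine map scales it by |a| rather than by a².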

Parameters and forms (standard, multivariate, truncation)

The standard (canonical) form uses μ = 0 and σ² = 1; any normal variable X reduces to it through the transformation Z = (X − μ)/σ. This standardized variant is central to tables and software employed by groups such as the National Institute of Standards and Technology and the Statistical Office of the European Communities. The multivariate normal extends the distribution to vectors with mean vector μ and covariance matrix Σ; it is foundational in the multivariate analysis of Harold Hotelling and is applied by researchers at Princeton University and the University of Chicago. Truncated normal distributions arise in problems studied in Johns Hopkins University epidemiology and Stanford University economics, where restricting the support to an interval changes the normalization constant. These issues are addressed in publications by researchers at the Institute for Advanced Study and the Max Planck Society.
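Standardization and the renormalization required by truncation can be sketched with Python's standard-library `statistics.NormalDist`. The helper names `standardize` and `truncated_normal_pdf` and the numeric values are assumptions chosen for illustration, and the multivariate case is left out because it needs a linear-algebra library.

```python
from statistics import NormalDist


def standardize(x, mu, sigma):
    """Map a value from N(mu, sigma^2) to the standard scale: z = (x - mu) / sigma."""
    return (x - mu) / sigma


def truncated_normal_pdf(x, mu, sigma, lower, upper):
    """Density of N(mu, sigma^2) restricted to the interval [lower, upper]."""
    if not lower < upper:
        raise ValueError("lower must be less than upper")
    if x < lower or x > upper:
        return 0.0  # outside the truncated support
    dist = NormalDist(mu, sigma)
    # Probability mass the untruncated distribution puts on [lower, upper];
    # dividing by it makes the truncated density integrate to one.
    mass = dist.cdf(upper) - dist.cdf(lower)
    return dist.pdf(x) / mass


# Illustrative values only.
z = standardize(130.0, mu=100.0, sigma=15.0)           # two standard deviations above the mean
inside = truncated_normal_pdf(0.5, 0.0, 1.0, -1.0, 2.0)
outside = truncated_normal_pdf(3.0, 0.0, 1.0, -1.0, 2.0)
```

Inside the interval the truncated density is larger than the untruncated one, because the same shape is rescaled to carry all of the probability.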

Probability density, cumulative distribution, and moments

The probability density function is the exponential of a quadratic form. It was historically tabulated for the standard case in manuals used at the Royal Observatory, Greenwich, and later computerized in projects by IBM and Bell Labs. The density has no elementary antiderivative, so the cumulative distribution function is expressed via the error function developed by Adrien-Marie Legendre and applied in analyses by Siméon Denis Poisson; numerical approximation methods were improved by Cecil Hastings and implemented in software from MathWorks and the R Project. The moments (mean μ, variance σ², skewness 0, and kurtosis 3) were used in moment-based inference by Karl Pearson and in method-of-moments estimators used at institutions such as Harvard University and Yale University.
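The relationship between the cumulative distribution function and the error function is Φ(x) = ½ [1 + erf((x − μ) / (σ√2))]. The sketch below evaluates it with the standard-library `math.erf` and checks the standardized moments by simple numerical integration. The names `normal_cdf` and `standard_moment` are illustrative, and the midpoint rule is just one convenient choice of quadrature.

```python
import math


def normal_cdf(x, mu=0.0, sigma=1.0):
    """CDF of N(mu, sigma^2) written in terms of the error function."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def standard_moment(k, half_width=12.0, steps=20_000):
    """Midpoint-rule approximation of E[Z^k] for Z ~ N(0, 1).

    The integral is cut off at +/- half_width, where the density is negligible.
    """
    h = 2.0 * half_width / steps
    total = 0.0
    for i in range(steps):
        z = -half_width + (i + 0.5) * h
        total += z ** k * math.exp(-0.5 * z * z)
    return total * h / math.sqrt(2.0 * math.pi)


median_prob = normal_cdf(0.0)      # half the mass lies below the mean
variance = standard_moment(2)      # close to 1
skewness = standard_moment(3)      # close to 0
kurtosis = standard_moment(4)      # close to 3
```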

Statistical inference and estimation

Estimation of μ and σ² uses maximum likelihood and unbiased estimators, paradigms formalized by Ronald Fisher and developed further by Jerzy Neyman and Egon Pearson within frameworks adopted at University College London and in Biometrika-affiliated research. The maximum likelihood estimators are the sample mean and the sample variance with divisor n; the latter is biased, and the unbiased variance estimator uses divisor n − 1. Confidence intervals and hypothesis tests for normal samples rely on Student’s t-distribution, introduced by William Sealy Gosset at the Guinness Brewery, and on the F-distribution associated with Ronald Fisher; these inferential tools are standard in regulatory methods at the Food and Drug Administration and the European Medicines Agency. Bayesian conjugate analysis, in which a normal prior on μ combined with a normal likelihood yields a normal posterior, is central to approaches used at Bayesian Research Unit-affiliated groups and in probabilistic modeling efforts at Google and Microsoft Research.
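The difference between the maximum likelihood and unbiased variance estimators, and the conjugate normal update for μ, can be illustrated as follows. This is a sketch under stated assumptions: the sample is made up, the function names are hypothetical, and the Bayesian update treats the data variance as known.

```python
def fit_normal(sample):
    """Return (mean, ML variance with divisor n, unbiased variance with divisor n - 1)."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = sum(sample) / n
    ss = sum((x - mean) ** 2 for x in sample)  # sum of squared deviations
    return mean, ss / n, ss / (n - 1)


def posterior_for_mean(sample, data_var, prior_mean, prior_var):
    """Posterior of mu given a N(prior_mean, prior_var) prior and a N(mu, data_var)
    likelihood with known data_var. Precisions (inverse variances) add."""
    n = len(sample)
    xbar = sum(sample) / n
    post_precision = 1.0 / prior_var + n / data_var
    post_var = 1.0 / post_precision
    post_mean = post_var * (prior_mean / prior_var + n * xbar / data_var)
    return post_mean, post_var


# Made-up measurements for illustration.
data = [4.8, 5.1, 5.0, 4.9, 5.2]
mean, var_mle, var_unbiased = fit_normal(data)

# Assumed prior N(0, 1) on mu and assumed known data variance 1.0.
post_mean, post_var = posterior_for_mean(data, data_var=1.0, prior_mean=0.0, prior_var=1.0)
```

The posterior mean falls between the prior mean and the sample mean, weighted by their precisions, so with more data it moves toward the sample mean.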

Transformations, sums, and limiting theorems

Linear combinations of independent normal variables remain normal: if X and Y are independent with means μ₁, μ₂ and variances σ₁², σ₂², then X + Y is normal with mean μ₁ + μ₂ and variance σ₁² + σ₂². This closure property is exploited in linear models developed by Francis Galton and formalized in regression theory at the University of Chicago and Columbia University. The central limit theorem, with roots in work by Abraham de Moivre and Pierre-Simon Laplace and rigorous proofs by Aleksandr Lyapunov and Jarl Waldemar Lindeberg, establishes convergence to the normal under broad conditions, affecting fields from demography as studied by Thomas Malthus to finance models at Goldman Sachs and J.P. Morgan. The log-normal distribution, obtained by exponentiating a normal variable, and other transformed distributions appear in modeling studies from the World Bank and the International Labour Organization where multiplicative processes are natural.
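Both the closure under sums and the central limit theorem can be illustrated with the Python standard library. In this sketch, adding two `statistics.NormalDist` objects treats them as independent; the simulation settings (uniform draws, sample size 30, 5000 repetitions, seed 0) are arbitrary assumptions.

```python
import math
import random
from statistics import NormalDist, fmean

# Sum of independent normals: means add and variances add,
# so the standard deviations combine as sqrt(3^2 + 4^2) = 5.
x = NormalDist(mu=1.0, sigma=3.0)
y = NormalDist(mu=2.0, sigma=4.0)
total = x + y  # NormalDist(mu=3.0, sigma=5.0)


def standardized_uniform_mean(n, rng):
    """Mean of n Uniform(0, 1) draws, centered and scaled to unit variance.

    Uniform(0, 1) has mean 1/2 and variance 1/12, so the mean of n draws
    has variance 1 / (12 n).
    """
    mean = sum(rng.random() for _ in range(n)) / n
    return (mean - 0.5) / math.sqrt(1.0 / (12.0 * n))


rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [standardized_uniform_mean(30, rng) for _ in range(5000)]

# If the standardized means are close to N(0, 1), roughly 95% of them
# should fall within 1.96 of zero.
coverage = sum(abs(z) <= 1.96 for z in draws) / len(draws)
center = fmean(draws)
```

The individual draws are uniform, not normal, yet their standardized means behave approximately like a standard normal variable, which is the content of the theorem.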

Applications and examples

Normal models appear in measurement error analyses in projects at CERN and the European Space Agency, in psychometrics following Francis Galton’s trait studies as now practiced at the University of Oxford and the University of Cambridge, and in quality control methods originating at Bell Labs and Western Electric. In economics and finance, Gaussian assumptions influenced models at the Federal Reserve System and the Bank of England, though later work by Robert Engle and Clive Granger expanded beyond normality. In engineering, signal processing algorithms at the Massachusetts Institute of Technology and Stanford University use Gaussian noise models; in genetics, quantitative trait analysis at Cold Spring Harbor Laboratory employs normal-based methods. The distribution’s influence extends to machine learning systems developed at DeepMind and OpenAI, and to environmental modeling used by the United Nations Environment Programme and the National Oceanic and Atmospheric Administration.