Central Limit Theorem

Generated by GPT-5-mini

| Central Limit Theorem | |
|---|---|
| Name | Central Limit Theorem |
| Field | Probability theory, Statistics |
| Introduced | 18th–19th century |
| Contributors | Abraham de Moivre; Pierre-Simon Laplace; Aleksandr Lyapunov; Andrey Kolmogorov; Pafnuty Chebyshev |

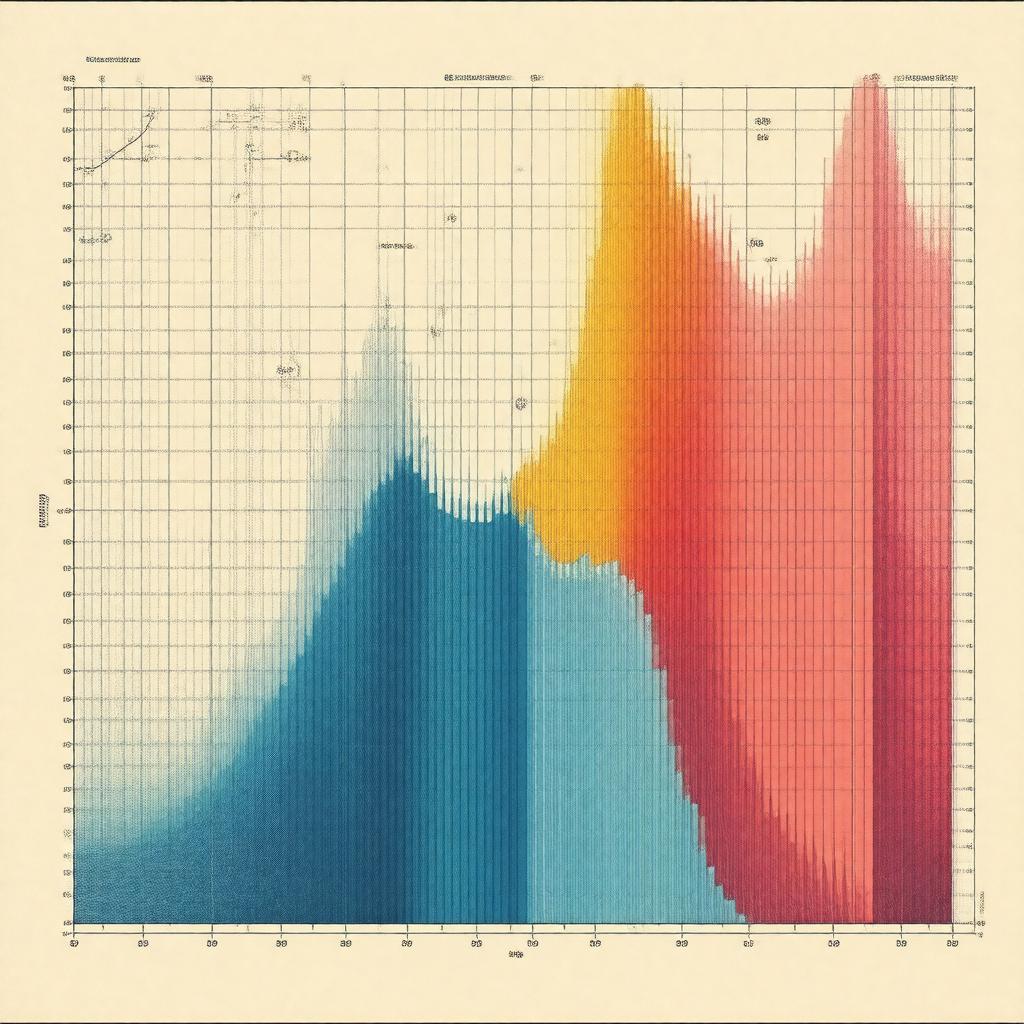

The Central Limit Theorem states that suitably normalized sums or averages of many independent random variables are approximately normally distributed under broad conditions. It underpins statistical inference used by practitioners in finance, meteorology, genetics, and engineering, and it connects foundational work by Abraham de Moivre, Pierre-Simon Laplace, Pafnuty Chebyshev, Aleksandr Lyapunov, and Andrey Kolmogorov.

Statement and Variants

The classical formulation asserts that for a sequence of independent, identically distributed random variables with finite mean and finite, nonzero variance, the centered and scaled sums converge in distribution to the standard normal law. The result was first obtained for binomial sums by Abraham de Moivre, extended by Pierre-Simon Laplace, and placed on a rigorous footing by Aleksandr Lyapunov, with the modern measure-theoretic setting supplied by Andrey Kolmogorov's axiomatization of probability. Variants include the Lindeberg–Feller theorem, named for Jarl Waldemar Lindeberg and William Feller; the Lyapunov version, due to Aleksandr Lyapunov; and the martingale central limit theorem, linked to work by Joseph Doob and to the monograph of Peter Hall and Christopher Heyde. Other forms are the de Moivre–Laplace theorem, which gives the normal approximation to the binomial distribution, and the multivariate central limit theorem treated in texts by Harald Cramér and Harold Hotelling.
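The classical statement above can be written out as follows. The symbols $X_k$, $S_n$, $Z_n$, $\mu$, $\sigma$, and $\Phi$ are notation introduced here for illustration and do not appear in the surrounding text.

```latex
Let $X_1, X_2, \dots$ be i.i.d.\ with mean $\mu$ and variance $\sigma^2 \in (0, \infty)$,
and let $S_n = X_1 + \cdots + X_n$. Then
\[
  Z_n = \frac{S_n - n\mu}{\sigma\sqrt{n}} \xrightarrow{\;d\;} \mathcal{N}(0,1),
  \qquad\text{that is,}\qquad
  \lim_{n\to\infty} \Pr(Z_n \le x) = \Phi(x) \quad \text{for every } x \in \mathbb{R},
\]
where $\Phi$ is the standard normal distribution function.
```

The same statement holds for the sample mean, since $Z_n = \sqrt{n}\,(S_n/n - \mu)/\sigma$.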

Conditions and Assumptions

Typical assumptions involve independence or weak dependence, identical distribution or triangular arrays as in Lindeberg–Feller, and finite second moments, with moment-based criteria connected to Pafnuty Chebyshev and Andrey Kolmogorov. The Lyapunov condition of Aleksandr Lyapunov is a stronger but more easily checked sufficient condition, and mixing conditions studied by Maruyama, Kolmogorov, and Ibragimov cover weakly dependent sequences. For dependent structures, martingale conditions by Joseph Doob and stationarity hypotheses used by Norbert Wiener and Herman Wold describe when convergence holds. Heavy-tailed distributions with infinite variance, as studied by Paul Lévy and Benoît Mandelbrot, fall outside the classical theorem and require generalizations to stable laws.
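For reference, the Lindeberg and Lyapunov conditions mentioned above can be stated for a triangular array as follows. The notation ($X_{n,k}$, $k_n$, $s_n$, $\varepsilon$, $\delta$) is introduced here for illustration.

```latex
Let $X_{n,1}, \dots, X_{n,k_n}$ be independent within each row $n$, with
$\mathbb{E}[X_{n,k}] = 0$, $\operatorname{Var}(X_{n,k}) = \sigma_{n,k}^2 < \infty$,
and $s_n^2 = \sum_{k=1}^{k_n} \sigma_{n,k}^2 > 0$.

Lindeberg condition: for every $\varepsilon > 0$,
\[
  \frac{1}{s_n^2} \sum_{k=1}^{k_n}
  \mathbb{E}\!\left[ X_{n,k}^2 \, \mathbf{1}\{ |X_{n,k}| > \varepsilon s_n \} \right]
  \longrightarrow 0 \quad (n \to \infty).
\]

Lyapunov condition: for some $\delta > 0$,
\[
  \frac{1}{s_n^{2+\delta}} \sum_{k=1}^{k_n}
  \mathbb{E}\!\left[ |X_{n,k}|^{2+\delta} \right]
  \longrightarrow 0 \quad (n \to \infty).
\]

Under the Lindeberg condition,
$\frac{1}{s_n} \sum_{k=1}^{k_n} X_{n,k} \xrightarrow{\;d\;} \mathcal{N}(0,1)$.
The Lyapunov condition implies the Lindeberg condition.
```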

Proofs and Methods

Classic proofs use characteristic functions and the continuity theorem attributed to Paul Lévy, with inversion techniques refined by Harald Cramér, while alternative approaches use cumulants in the lineage of Ronald Fisher and saddlepoint approximations linked to Henry Daniels and David Cox. Stein's method, created by Charles Stein, yields explicit bounds on the distance to the normal law and has been extended in works by Louis Chen and Persi Diaconis. Coupling arguments in the probabilistic tradition of William Feller and embedding techniques due to Skorokhod yield constructive proofs; martingale proofs leverage results by Joseph Doob and David Williams.
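The characteristic-function argument can be sketched in a few lines for the i.i.d. case. This is an outline rather than a complete proof, and the notation ($Y_k$, $\varphi$, $Z_n$) is introduced here for illustration.

```latex
Let $Y_k = (X_k - \mu)/\sigma$, so that $\mathbb{E}[Y_k] = 0$ and $\mathbb{E}[Y_k^2] = 1$,
and let $\varphi(s) = \mathbb{E}\!\left[e^{isY_1}\right]$. A second-order Taylor expansion gives
\[
  \varphi(s) = 1 - \frac{s^2}{2} + o(s^2) \quad (s \to 0).
\]
With $Z_n = n^{-1/2} \sum_{k=1}^{n} Y_k$, independence yields
\[
  \varphi_{Z_n}(t) = \left[ \varphi\!\left( \frac{t}{\sqrt{n}} \right) \right]^{n}
  = \left( 1 - \frac{t^2}{2n} + o\!\left(\frac{1}{n}\right) \right)^{n}
  \longrightarrow e^{-t^2/2} \quad (n \to \infty),
\]
which is the characteristic function of $\mathcal{N}(0,1)$. L\'evy's continuity theorem
then gives $Z_n \xrightarrow{\;d\;} \mathcal{N}(0,1)$.
```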

Rates of Convergence and Refinements

Berry–Esseen bounds quantify the rate of convergence: when the summands have a finite third absolute moment, the largest gap between the distribution function of the normalized sum and the normal distribution function is of order 1/√n. The bound was proved independently by Andrew Berry and Carl-Gustav Esseen and later sharpened in studies by Sazonov and Petrov. Edgeworth expansions, developed in the work of Francis Ysidro Edgeworth and systematized by Harald Cramér, provide asymptotic corrections using cumulants as in methods used by Ronald Fisher and Jerzy Neyman. Nonuniform bounds and moderate deviation results draw on contributions by S. V. Nagaev, Michel Ledoux, and Boris Tsirelson, while empirical process refinements connect to work by Vladimir Vapnik and Andrey Kolmogorov.
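To make the Berry–Esseen rate concrete, the following minimal sketch computes the exact Kolmogorov distance between a standardized binomial sum and the standard normal law, and compares it with a bound of the form C·ρ/(σ³√n). The function names are hypothetical, and the constant C = 0.5 is an assumption chosen as a round value above the best published constants for the i.i.d. case.

```python
import math
from statistics import NormalDist


def binomial_kolmogorov_distance(n, p):
    """Exact sup-distance between the CDF of a standardized Binomial(n, p)
    and the standard normal CDF. The supremum is attained at a jump point,
    so both the left limit and the value at each jump are checked."""
    q = 1.0 - p
    sd = math.sqrt(n * p * q)
    phi = NormalDist().cdf
    cdf = 0.0
    worst = 0.0
    for k in range(n + 1):
        normal = phi((k - n * p) / sd)
        worst = max(worst, abs(cdf - normal))   # left limit at the jump
        cdf += math.comb(n, k) * p**k * q**(n - k)
        worst = max(worst, abs(cdf - normal))   # value at the jump
    return worst


def berry_esseen_bound(n, p, constant=0.5):
    """Bound C * rho / (sigma^3 * sqrt(n)) for Bernoulli(p) summands.
    The default constant is an assumption, not a sharp value."""
    q = 1.0 - p
    sigma = math.sqrt(p * q)
    rho = p * q * (p * p + q * q)  # E|X - p|^3 for X ~ Bernoulli(p)
    return constant * rho / (sigma**3 * math.sqrt(n))


if __name__ == "__main__":
    for n in (10, 100, 400):
        d = binomial_kolmogorov_distance(n, 0.3)
        b = berry_esseen_bound(n, 0.3)
        print(f"n={n:4d}  distance={d:.4f}  bound={b:.4f}")
```

Multiplying n by 100 should shrink both columns by roughly a factor of 10, which is the 1/√n rate.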

Applications and Examples

Applications span sampling theory used by Jerzy Neyman and E. S. Pearson, quality control influenced by Walter A. Shewhart and W. Edwards Deming, and econometrics pioneered by Trygve Haavelmo and Clive Granger. In biostatistics, experimental design and inference draw on techniques from Ronald Fisher and Austin Bradford Hill; in physics, statistical mechanics employs Gaussian approximations in treatments by Ludwig Boltzmann and Josiah Willard Gibbs. Signal processing uses central-limit approximations in work by Norbert Wiener and Harry Nyquist, while telecommunications and information theory build on foundational research by Claude Shannon and Richard Hamming. Financial mathematics applies limit results in models developed by Louis Bachelier and Robert C. Merton.
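As one concrete instance of the sampling applications above, the sketch below builds a large-sample confidence interval for a mean by treating the sample mean as approximately normal. The function name `mean_confidence_interval` is hypothetical, and the approach assumes independent observations with finite variance and a sample large enough for the normal approximation to be reasonable.

```python
import math
import random
from statistics import NormalDist, fmean, stdev


def mean_confidence_interval(sample, level=0.95):
    """Normal-approximation confidence interval for the population mean.
    Relies on the central limit theorem, so it is only approximate."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    center = fmean(sample)
    standard_error = stdev(sample) / math.sqrt(n)
    # Two-sided critical value, e.g. about 1.96 for level=0.95
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    return center - z * standard_error, center + z * standard_error


if __name__ == "__main__":
    rng = random.Random(0)
    # Skewed data: exponential with true mean 1.0
    data = [rng.expovariate(1.0) for _ in range(500)]
    low, high = mean_confidence_interval(data)
    print(f"95% interval for the mean: ({low:.3f}, {high:.3f})")
```

For small samples or strongly skewed data the actual coverage can fall short of the nominal level, which is where the refinements discussed under rates of convergence become relevant.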

Generalizations and Extensions

Generalizations include convergence to stable laws studied by Paul Lévy and Gennady Samorodnitsky, functional central limit theorems (invariance principles) such as Donsker's theorem associated with Monroe D. Donsker, and non-i.i.d. frameworks like triangular arrays treated by Jarl Waldemar Lindeberg and William Feller. Multivariate extensions reference Harold Hotelling, and random matrix analogues connect to work by Eugene Wigner, Kurt Johansson, and Terence Tao, while concentration inequalities and high-dimensional limits have been advanced by Michel Ledoux and Roman Vershynin. Heavy-tail techniques follow lines of Benoît Mandelbrot, and non-Gaussian limits under long-range dependence trace to Murray Rosenblatt.
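The need for stable-law generalizations can be seen in a small simulation. The sketch below compares the spread of sample means for a finite-variance law (uniform on [-1, 1]) with that for the standard Cauchy law, which is stable with infinite variance: the mean of n standard Cauchy draws is again standard Cauchy, so its spread does not shrink. All function names are hypothetical, and the interquartile range is used as the spread measure because the Cauchy law has no variance.

```python
import math
import random
import statistics


def sample_mean_iqr(draw, n, replications, rng):
    """Interquartile range of `replications` sample means, each over n draws."""
    means = [
        statistics.fmean(draw(rng) for _ in range(n))
        for _ in range(replications)
    ]
    q1, _, q3 = statistics.quantiles(means, n=4)
    return q3 - q1


def draw_uniform(rng):
    """Finite-variance example: uniform on [-1, 1]."""
    return rng.uniform(-1.0, 1.0)


def draw_cauchy(rng):
    """Standard Cauchy draw via inverse-CDF sampling."""
    return math.tan(math.pi * (rng.random() - 0.5))


if __name__ == "__main__":
    rng = random.Random(0)
    for n in (10, 100, 1000):
        u = sample_mean_iqr(draw_uniform, n, 200, rng)
        c = sample_mean_iqr(draw_cauchy, n, 200, rng)
        print(f"n={n:5d}  uniform IQR={u:.3f}  Cauchy IQR={c:.3f}")
```

The uniform column should shrink roughly like 1/√n, while the Cauchy column should stay near 2, the interquartile range of a single standard Cauchy draw.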