McCulloch–Pitts neuron

Generated by DeepSeek V3.2

| McCulloch–Pitts neuron | |
|---|---|
| Name | McCulloch–Pitts neuron |
| Inventors | Warren Sturgis McCulloch, Walter Pitts |
| Year | 1943 |
| Influenced | Artificial neural network, Perceptron, Threshold logic unit |

The McCulloch–Pitts neuron is a foundational, simplified mathematical model of a biological neuron, first proposed in a 1943 paper by neurophysiologist Warren Sturgis McCulloch and logician Walter Pitts. The model formalized the neuron as a binary threshold unit that processes inputs and produces a single output, establishing a bridge between neurophysiology and what later became automata theory. Its logical formalism provided theoretical underpinnings for artificial neural networks and influenced early research in cybernetics and artificial intelligence.

Mathematical model

The McCulloch–Pitts neuron is defined as a simple, discrete-time, binary-state device. It receives inputs \(x_1, x_2, \ldots, x_n\), each a binary value (0 or 1). Each input is associated with a synaptic weight \(w_i\), which later presentations commonly simplify to excitatory (+1) or inhibitory (-1); in the original 1943 formulation, inhibition was absolute, so a single active inhibitory input prevented the neuron from firing regardless of its excitatory input. The neuron sums its weighted inputs and compares the sum to a fixed threshold \(\theta\). The output \(y\) is given by a threshold function: \(y = 1\) if the weighted sum meets or exceeds \(\theta\), and \(y = 0\) otherwise. Equivalently, \(y = H\left(\sum_{i=1}^n w_i x_i - \theta\right)\), where \(H\) is the Heaviside step function with the convention \(H(0) = 1\). A single unit can realize only linearly separable functions such as AND and OR, but networks of such neurons, with fixed connection patterns and a synchronous update rule, can implement any Boolean function and, when they contain feedback loops, the behavior of a finite-state automaton, linking neural activity to symbolic logic.
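The threshold rule above can be illustrated with a minimal Python sketch. It assumes the simplified convention of +1 and -1 weights rather than the absolute inhibition of the 1943 paper, and the function names (`mp_neuron`, `AND`, `OR`, `NOT`, `XOR`) are illustrative choices, not notation from the original work.

```python
from itertools import product


def mp_neuron(inputs, weights, theta):
    """Return 1 if the weighted sum of binary inputs reaches theta, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= theta else 0


# Single-neuron gates (weights and thresholds are illustrative choices).
def AND(a, b):
    return mp_neuron([a, b], [1, 1], theta=2)


def OR(a, b):
    return mp_neuron([a, b], [1, 1], theta=1)


def NOT(a):
    return mp_neuron([a], [-1], theta=0)


def XOR(a, b):
    # XOR is not linearly separable, so it needs a second layer:
    # fire when (a OR b) is active and (a AND b) is not.
    return mp_neuron([OR(a, b), AND(a, b)], [1, -1], theta=1)


if __name__ == "__main__":
    print("a b AND OR XOR")
    for a, b in product([0, 1], repeat=2):
        print(a, b, AND(a, b), OR(a, b), XOR(a, b))
```

The XOR case shows why networks matter: no single threshold unit computes it, but a two-layer arrangement of the same units does.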

Historical context and development

The model was developed during a period of intense interdisciplinary collaboration that drew on earlier research in mathematical logic and fed into the cybernetics movement, which Norbert Wiener named later in the 1940s. McCulloch, influenced by the neuroanatomical theories of Santiago Ramón y Cajal, sought a formal theory of brain activity. Pitts, a prodigy in logic, contributed expertise in the formal systems of Alfred North Whitehead and Bertrand Russell's Principia Mathematica. Their 1943 paper, "A Logical Calculus of the Ideas Immanent in Nervous Activity," was published in the Bulletin of Mathematical Biophysics, and its logical notation drew on the work of Rudolf Carnap, with whom Pitts had studied. The work attracted the attention of figures such as John von Neumann, who cited it in his 1945 draft report on the EDVAC and drew on its ideas in his later work on automata theory, including self-reproducing automata.

Biological inspiration and limitations

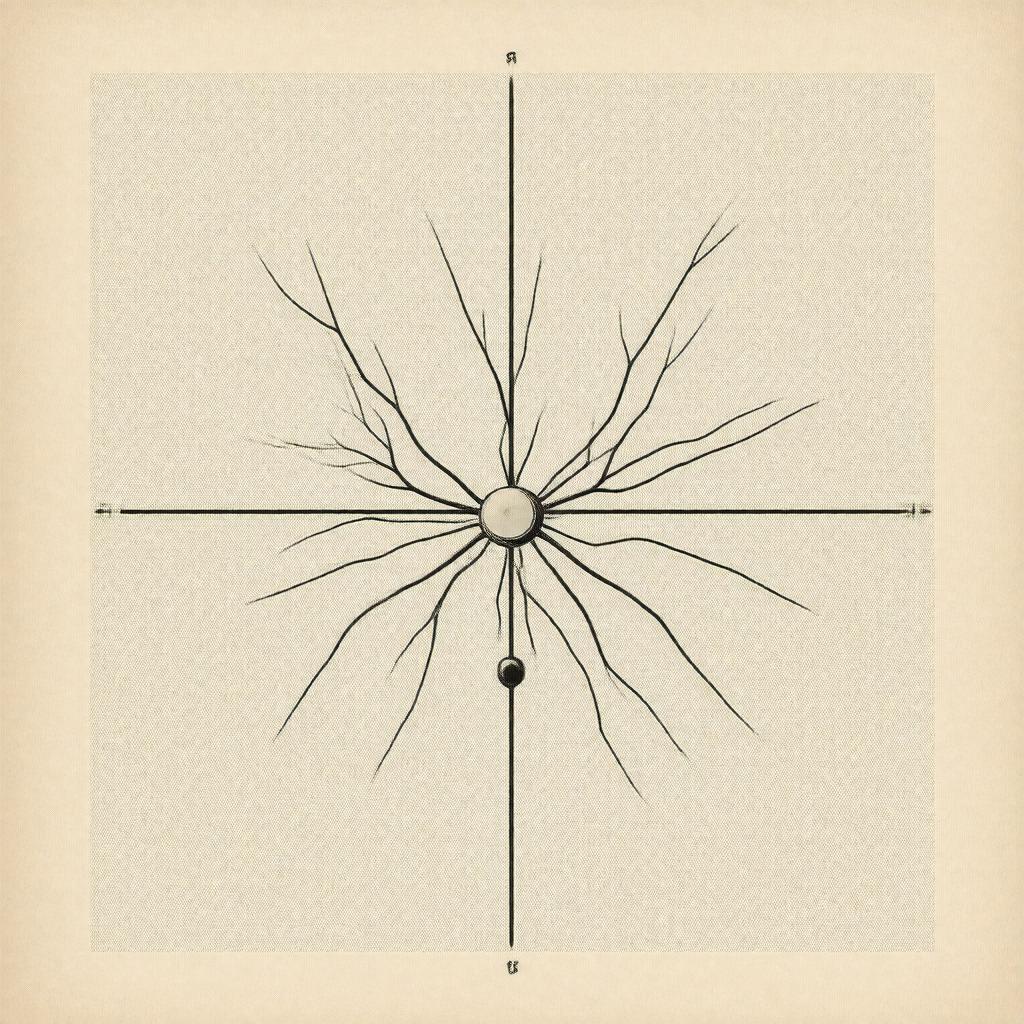

The model was directly inspired by the basic physiology of the biological neuron as understood in the early 20th century, incorporating concepts like synaptic summation and the all-or-none law associated with the action potential. It abstracted key features: the dendritic tree as input collectors, the cell body as the summation and thresholding unit, and the axon as the output pathway. However, it made severe simplifying assumptions that limit its biological realism. It ignored the continuous and graded nature of many neural signals, the complex temporal dynamics of synaptic transmission, the role of neurotransmitters, and the adaptive nature of synaptic weights, a process later central to Hebbian theory. The model treated time as discrete, synchronous steps, unlike the continuous, asynchronous firing of real neurons.
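The discrete, synchronous treatment of time can be made concrete with a small Python sketch. It simulates a single neuron with a feedback connection that acts as a one-bit latch: the state at step t+1 depends only on the inputs and state at step t. The weight of -2 on the reset line and the names `run_latch`, `set_signal`, and `reset_signal` are assumptions made for this illustration, not part of the original model.

```python
def mp_neuron(inputs, weights, theta):
    """Return 1 if the weighted sum of binary inputs reaches theta, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= theta else 0


def run_latch(set_signal, reset_signal):
    """Simulate one self-connected neuron over discrete time steps.

    The neuron turns on when `set` fires, stays on through its own
    feedback, and turns off when `reset` fires (assumed weight -2).
    """
    state = 0
    history = []
    for s, r in zip(set_signal, reset_signal):
        # All inputs are read at step t; the new state applies at step t+1.
        state = mp_neuron([s, state, r], [1, 1, -2], theta=1)
        history.append(state)
    return history


if __name__ == "__main__":
    set_signal = [0, 1, 0, 0, 0, 0]
    reset_signal = [0, 0, 0, 1, 0, 0]
    print(run_latch(set_signal, reset_signal))  # prints [0, 1, 1, 0, 0, 0]
```

Nothing in this loop models the continuous membrane dynamics, variable delays, or asynchronous firing of real neurons, which is exactly the simplification described above.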

Influence on later neural network models

The McCulloch–Pitts neuron is the progenitor of most subsequent artificial neuron models. It laid the groundwork for Frank Rosenblatt's perceptron in the late 1950s, which introduced adjustable real-valued weights and a learning rule. The same idea is studied as the threshold logic unit, a key component in early machine learning. The basic architecture of summing weighted inputs and applying a non-linear activation function remains central to modern deep learning networks, although these typically replace the hard threshold with functions such as the sigmoid or ReLU, which are better suited to gradient-based training. The demonstration that networks of simple units can perform complex computations inspired connectionist models in cognitive science and provided a blueprint for parallel distributed processing.
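To show what adjustable weights add to the fixed-weight threshold unit, the following Python sketch applies the classic perceptron update rule to learn the OR function. It is a minimal illustration under stated assumptions: the threshold is folded into a bias term `b` (so that `b` plays the role of \(-\theta\)), and the names `train_perceptron`, `lr`, and `OR_DATA` are hypothetical.

```python
def step(z):
    """Hard threshold: 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Learn weights and bias with the perceptron rule.

    samples: list of (inputs, target) pairs with binary targets.
    Training stops early once a full pass makes no mistakes.
    """
    n = len(samples[0][0])
    w = [0.0] * n
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            y = step(sum(wi * xi for wi, xi in zip(w, x)) + b)
            delta = target - y
            if delta != 0:
                # Move the weights toward the inputs on a missed 1,
                # away from them on a false 1.
                w = [wi + lr * delta * xi for wi, xi in zip(w, x)]
                b += lr * delta
                errors += 1
        if errors == 0:
            break
    return w, b


def predict(w, b, x):
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)


OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

if __name__ == "__main__":
    w, b = train_perceptron(OR_DATA)
    print([predict(w, b, x) for x, _ in OR_DATA])  # prints [0, 1, 1, 1]
```

The forward pass is the same weighted sum and threshold as in the McCulloch–Pitts unit; only the update of `w` and `b` from errors is new.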

Applications and computational significance

Although its simplicity keeps it out of direct practical use, the McCulloch–Pitts neuron is computationally significant. The 1943 paper showed that networks of neuron-like elements can realize any finite logical expression, and it argued that such networks, if supplemented with an external tape-like memory, can compute what a Turing machine can. Finite networks on their own are equivalent in power to finite-state automata, a result made precise by Stephen Kleene in 1956. These ideas informed John von Neumann's thinking about computing machines: his EDVAC report described logic elements in neuron-like terms, drawing parallels between the brain and computing machines. The model's legacy is evident in the design of early artificial intelligence systems, the theoretical analysis of threshold circuits in circuit complexity, later work in neuromorphic computing, and the ongoing development of spiking neural networks, which incorporate more biological timing while retaining the idea of thresholded firing.