von Neumann architecture

Generated by DeepSeek V3.2


| von Neumann architecture | |
|---|---|
| Name | von Neumann architecture |
| Designer | John von Neumann |
| Introduced | 1945 |
| Design | Stored-program computer |
| Type | Register–memory architecture |
| Predecessor | Harvard architecture |
| Successor | Modified Harvard architecture |

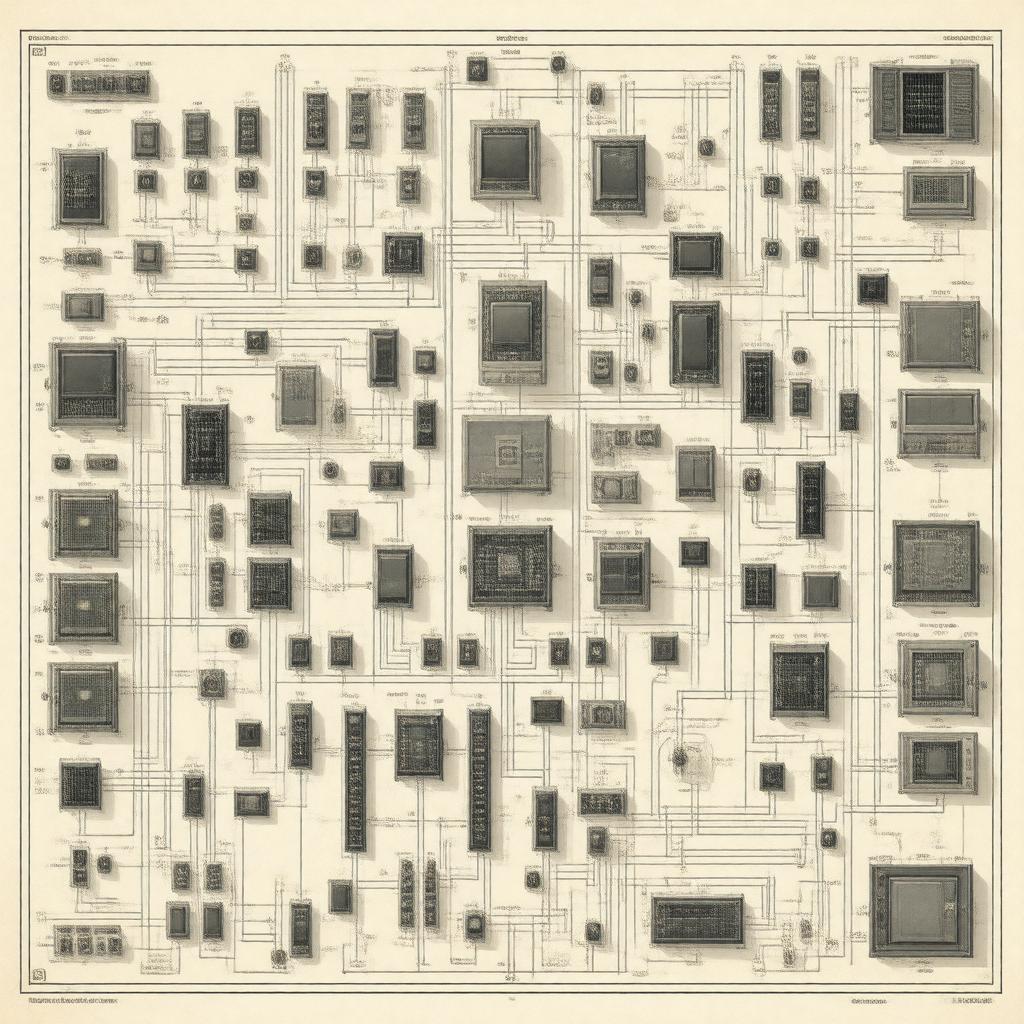

The von Neumann architecture is a foundational design model for stored-program computers in which a central processing unit, a memory unit, and input/output mechanisms are interconnected. It is named after the mathematician and physicist John von Neumann, who described the concept in the 1945 First Draft of a Report on the EDVAC. The model places program instructions and data in the same memory space, a departure from earlier computing machines such as the Harvard Mark I, and it enabled the development of the general-purpose, programmable digital computers that underpin modern computing.

Overview

The core principle is the stored-program concept: both machine code instructions and the data they manipulate reside in a single, unified main memory. The central processing unit fetches instructions sequentially from memory, decodes them, and executes them, which may involve reading data from or writing data to the same memory space. This sequential instruction cycle is controlled by a program counter, a register that holds the address of the next instruction to be executed. The architecture's simplicity and generality, as formalized in von Neumann's report for the EDVAC project, provided a clear blueprint that influenced nearly all subsequent computer designs, from early systems like the IAS machine and Manchester Baby to contemporary personal computers and servers.
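As a rough illustration of why instructions and data can share one memory, the sketch below packs an instruction into an ordinary integer word. The 16-bit layout (4-bit opcode, 12-bit address) and the opcode numbers are assumptions chosen for the example, not the encoding of the EDVAC or any real machine.

```python
# Hypothetical 16-bit word: top 4 bits are the opcode, low 12 bits an address.
OPCODES = {"LOAD": 0x1, "ADD": 0x2, "STORE": 0x3, "HALT": 0xF}


def encode(op, addr=0):
    """Pack a mnemonic and an address into a single integer word."""
    return (OPCODES[op] << 12) | (addr & 0xFFF)


def decode(word):
    """Split a word back into its (opcode, address) fields."""
    return word >> 12, word & 0xFFF


word = encode("ADD", 6)
print(hex(word))     # 0x2006
print(decode(word))  # (2, 6)
```

The word `0x2006` is just the number 8198. Whether it acts as an instruction or as data depends only on whether the processor fetches it through the program counter or reads it as an operand.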

Key components

The model defines several essential, interconnected units. The arithmetic logic unit performs mathematical calculations and logical operations, while the control unit directs the operation of the processor by interpreting instructions. These two units together constitute the central processing unit. A single main memory stores both program instructions and data, accessible via a shared bus system. Input/output devices, such as those pioneered for the UNIVAC I, allow communication between the computer and the external world. The processor registers, including the program counter and instruction register, provide high-speed storage locations within the CPU for managing the execution cycle.

Operation and instruction cycle

Execution follows a repetitive cycle, often termed the fetch-decode-execute cycle. The control unit uses the program counter to fetch an instruction from memory into the instruction register. It then decodes this instruction to determine the required operation, which may involve the arithmetic logic unit. If the instruction requires data, the control unit fetches operands from the specified memory addresses. The arithmetic logic unit performs the computation, and the result is written back to either a register or a memory location. The program counter is then updated to point to the next instruction, typically by simple incrementing unless a jump or branch instruction loads a different address.
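The cycle described above can be sketched as a toy interpreter. This is a minimal illustration under stated assumptions: the five-instruction set (`LOAD`, `ADD`, `STORE`, `JMP`, `HALT`), the single accumulator, and the tuple representation of instructions are all hypothetical, and the program counter is incremented right after the fetch, as many real designs do.

```python
def run(memory):
    """Run a toy stored-program machine until HALT and return its memory."""
    pc = 0   # program counter: address of the next instruction
    acc = 0  # accumulator register
    while True:
        ir = memory[pc]       # fetch the instruction into the instruction register
        pc += 1               # default: advance to the next instruction
        opcode, operand = ir  # decode
        if opcode == "LOAD":      # execute: read an operand from memory
            acc = memory[operand]
        elif opcode == "ADD":
            acc += memory[operand]
        elif opcode == "STORE":   # write the result back to memory
            memory[operand] = acc
        elif opcode == "JMP":     # a jump overrides the incremented counter
            pc = operand
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode!r}")


# One memory holds both the program (addresses 0-3) and its data (5-7).
program = [
    ("LOAD", 5),   # 0: acc = memory[5]
    ("ADD", 6),    # 1: acc += memory[6]
    ("STORE", 7),  # 2: memory[7] = acc
    ("HALT", 0),   # 3: stop
    0,             # 4: unused
    2,             # 5: first operand
    3,             # 6: second operand
    0,             # 7: result goes here
]

result = run(list(program))
print(result[7])  # 5
```

Because the instructions and the operands live in the same list, every `LOAD`, `ADD`, and `STORE` touches the same memory that the fetch step reads from.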

Historical context and development

The concept emerged from work on early electronic computers during World War II. While working on the ENIAC project, its designers, including John Presper Eckert and John William Mauchly, recognized the limitations of manually rewiring the machine for new tasks. Discussions with John von Neumann at the Moore School of Electrical Engineering led to the seminal First Draft of a Report on the EDVAC. Although similar ideas were concurrently explored by others, such as Alan Turing in his design for the Automatic Computing Engine, von Neumann's report provided the most comprehensive and influential description. The first electronic computer to run a stored program was the Manchester Baby, developed at the University of Manchester by Frederic Calland Williams and Tom Kilburn, which ran its first program in June 1948.

Impact and legacy

The architecture's standardization provided a universal framework that dramatically accelerated the development of the computer industry. It enabled the creation of high-level programming languages, such as FORTRAN and COBOL, and operating systems by providing a consistent hardware model. Virtually all general-purpose computers, from IBM System/360 mainframes to modern x86 and ARM architecture processors, are based on this foundational model. Its principles are taught in core computer science curricula worldwide and underpin the design of everything from supercomputers like those at Lawrence Livermore National Laboratory to embedded systems in smartphones.

Limitations and alternatives

A primary limitation is the von Neumann bottleneck: because instructions and data travel over the same shared bus, the CPU cannot fetch both simultaneously, and memory bandwidth limits its speed. This has led to various performance-enhancing techniques, including cache hierarchies and instruction pipelining. The primary historical alternative is the Harvard architecture, which uses physically separate memories and buses for instructions and data, as seen in the Harvard Mark I and in many modern microcontrollers, including some PIC devices. Other significant departures include dataflow architectures and neural network-inspired designs, which aim to overcome the sequential execution model. Furthermore, quantum computers, such as those researched by IBM and Google, represent a fundamentally different computational paradigm not bound by these classical constraints.
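The bottleneck can be made concrete with a deliberately simplified counting model. The sketch assumes each instruction needs one fetch plus some number of data accesses, that every transfer takes one bus cycle, and that with separate buses an instruction fetch can fully overlap a data access. Real processors are far more complicated, so the numbers are illustrative only, and both function names are hypothetical.

```python
def shared_bus_cycles(data_accesses_per_instruction):
    """Single bus: every fetch and every data access takes its own cycle."""
    return sum(1 + d for d in data_accesses_per_instruction)


def separate_bus_cycles(data_accesses_per_instruction):
    """Separate buses (idealized): a fetch overlaps with a data access."""
    return sum(max(1, d) for d in data_accesses_per_instruction)


# Data accesses for a LOAD, ADD, STORE, HALT sequence: 1, 1, 1, 0.
trace = [1, 1, 1, 0]
print(shared_bus_cycles(trace))    # 7
print(separate_bus_cycles(trace))  # 4
```

Under these assumptions the shared bus needs 7 cycles where the split design needs 4, which is the kind of gap that caches and pipelining try to narrow.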
