Perceptron

Generated by DeepSeek V3.2


| Perceptron | |
|---|---|
| Name | Perceptron |
| Inventor | Frank Rosenblatt |
| Invented | 1957 |
| Institution | Cornell Aeronautical Laboratory |

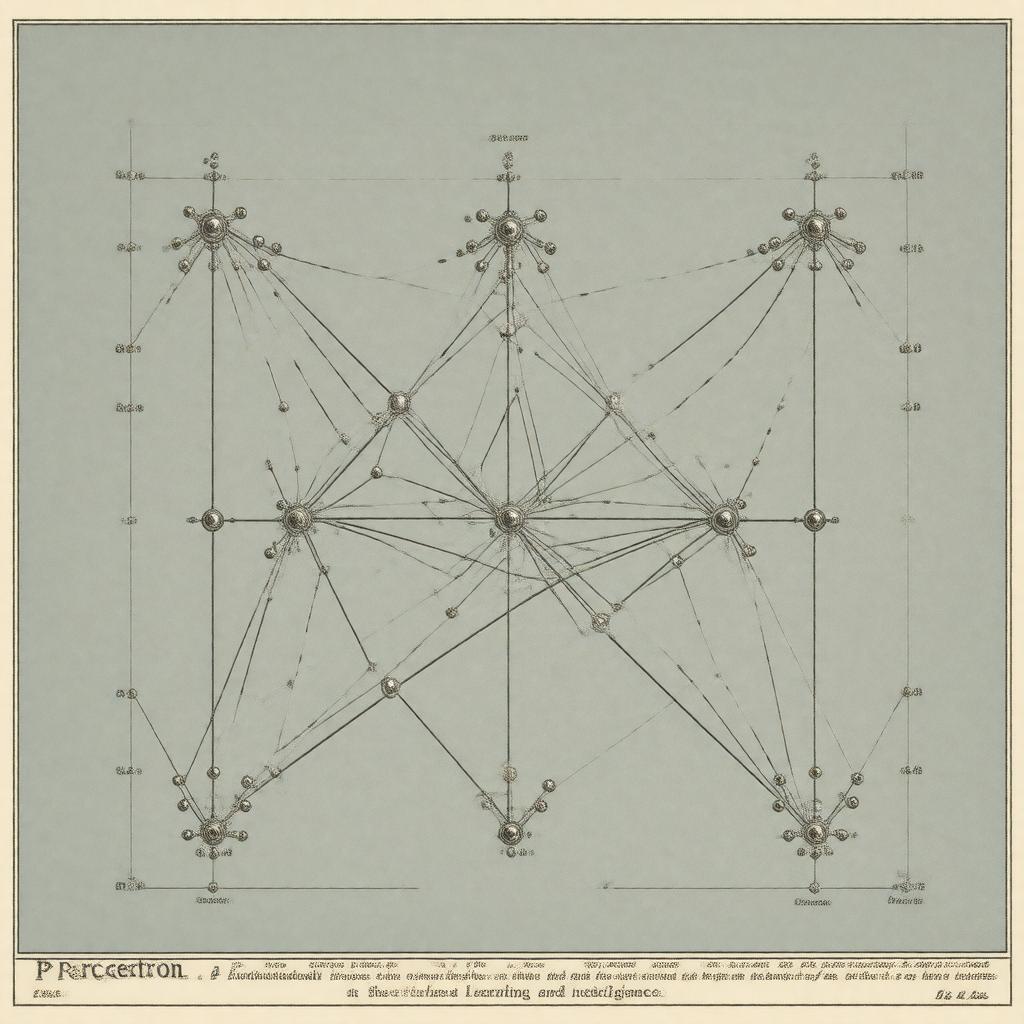

In machine learning and artificial neural networks, the perceptron is a foundational algorithm for binary classification. It was conceived as a simplified model of a biological neuron. It is a linear classifier: it computes a weighted sum of its inputs plus a bias and passes the result through a step function to produce a prediction. Its development at the Cornell Aeronautical Laboratory marked a seminal moment in the early history of artificial intelligence and inspired decades of subsequent research into connectionism and, eventually, deep learning.

Overview

The perceptron is a fundamental building block of artificial intelligence, specifically within the paradigm of supervised learning. It is one of the earliest and simplest forms of artificial neural network, designed to mimic the basic decision-making process of a single neuron. Its primary function is to classify data into one of two categories by finding a linear separator (a hyperplane) in the feature space. It can only learn problems that are linearly separable. Even so, it provided an early proof of concept that simple, neuron-like units could learn useful behavior from data. It directly influenced later work on multilayer perceptrons and more complex neural architectures.

History

The perceptron was invented in 1957 by the American psychologist Frank Rosenblatt at the Cornell Aeronautical Laboratory. His work built on earlier models of neural activity, notably the McCulloch–Pitts neuron proposed by Warren McCulloch and Walter Pitts. Rosenblatt's research was funded by the United States Office of Naval Research, reflecting the high expectations for this early artificial intelligence technology. The first hardware implementation was the Mark I Perceptron. This physical machine used an array of photocells and potentiometers to learn to recognize simple visual patterns. In 1969, Marvin Minsky and Seymour Papert of the Massachusetts Institute of Technology published the book Perceptrons, which analyzed the perceptron's mathematical limitations in detail. The book is widely cited as a contributing factor in the onset of the first AI winter.

Structure and function

A single-layer perceptron consists of three primary components: input nodes, adjustable weights, and an activation function. The input nodes receive numerical features, analogous to the signals arriving at a biological neuron's dendrites. Each input is multiplied by a corresponding weight. The weights are parameters adjusted during training to reflect the importance of each feature. The weighted inputs are then summed, and a bias term is added to shift the decision boundary away from the origin. The resulting sum is fed into a non-linear activation function, historically the Heaviside step function, which produces the final binary output. Written out, the output is 1 if w · x + b > 0 and 0 otherwise (some texts use ≥ 0), where x is the input vector, w the weight vector and b the bias. The equation w · x + b = 0 therefore defines a hyperplane that splits the input space into two regions, one per class.
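The forward pass described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation. The function names `heaviside` and `predict`, the `> 0` threshold convention, and the hand-chosen AND weights are all assumptions made for the example.

```python
from typing import Sequence


def heaviside(z: float) -> int:
    """Step activation: 1 if z > 0, else 0 (the '> 0' convention is assumed)."""
    return 1 if z > 0 else 0


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Weighted sum of the inputs plus the bias, passed through the step function."""
    if len(weights) != len(x):
        raise ValueError("weights and x must have the same length")
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return heaviside(z)


# Hypothetical hand-picked weights implementing logical AND on {0, 1} inputs:
# the line x1 + x2 - 1.5 = 0 separates (1, 1) from the other three points.
and_weights, and_bias = [1.0, 1.0], -1.5
for point in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(point, predict(and_weights, and_bias, point))
```

With these weights, only (1, 1) lands on the positive side of the line x1 + x2 = 1.5. The sketch therefore reproduces the AND truth table.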

Learning algorithm

The perceptron learning algorithm is an online, error-correcting procedure for adjusting the model's weights. As a supervised method, it learns from labeled training examples, for instance two of the classes in the Iris flower data set. For each example, the algorithm computes a prediction and compares it with the true label. If the example is misclassified, the algorithm updates the weights and bias with the perceptron update rule: w ← w + η(y − ŷ)x and b ← b + η(y − ŷ). Here η is a learning rate, y the true label and ŷ the prediction. The update moves the decision boundary in the direction that corrects the error for that sample. Correctly classified examples leave the parameters unchanged. The procedure repeats over the data until a stopping criterion is met. Typical criteria are a full pass (epoch) with no misclassifications or a maximum number of epochs. The first criterion can only be reached if the data is linearly separable. In that case, the perceptron convergence theorem guarantees it is reached after a finite number of updates.
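A minimal sketch of the online training loop follows. It assumes 0/1 labels, zero-initialized weights, a fixed learning rate and the `> 0` step convention. `train_perceptron` and its parameters are hypothetical names chosen for illustration, not a standard library API.

```python
from typing import List, Sequence, Tuple


def heaviside(z: float) -> int:
    """Step activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def train_perceptron(
    X: Sequence[Sequence[float]],
    y: Sequence[int],
    lr: float = 1.0,
    max_epochs: int = 100,
) -> Tuple[List[float], float, bool]:
    """Online perceptron learning with labels assumed to be 0 or 1.

    Returns (weights, bias, converged); converged is True if some epoch
    finished with no misclassifications before max_epochs ran out.
    """
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(max_epochs):
        errors = 0
        for xi, target in zip(X, y):
            z = sum(wj * xj for wj, xj in zip(w, xi)) + b
            error = target - heaviside(z)  # -1, 0 or +1
            if error != 0:
                # Perceptron rule: move the boundary toward correcting this sample.
                w = [wj + lr * error * xj for wj, xj in zip(w, xi)]
                b += lr * error
                errors += 1
        if errors == 0:  # a clean epoch: every sample is classified correctly
            return w, b, True
    return w, b, False


# Logical OR is linearly separable, so training stops after a few epochs.
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [0, 1, 1, 1]
w, b, converged = train_perceptron(X, y)
print(w, b, converged)  # → [1.0, 1.0] 0.0 True
```

Returning the `converged` flag makes explicit that the "no errors" criterion may never fire. The `max_epochs` budget is the fallback stopping rule for that case.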

Limitations

The most significant limitation of the basic perceptron, analyzed rigorously by Marvin Minsky and Seymour Papert, is its inability to solve problems that are not linearly separable. A classic example is the XOR function. Its positive examples (0, 1) and (1, 0) cannot be separated from its negative examples (0, 0) and (1, 1) by any single straight line. This constraint meant that single-layer perceptrons could not learn many essential patterns, which dampened the initial enthusiasm surrounding connectionism. Furthermore, if the training data is not linearly separable, the learning algorithm never converges. Some example is always misclassified, so the weights keep changing indefinitely. These limitations were a primary catalyst for the development of more sophisticated models such as the multilayer perceptron. The universal approximation theorem later established that, given enough hidden units, a multilayer perceptron can approximate any continuous function on a compact domain to arbitrary accuracy.
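The non-convergence on XOR can be checked directly. The sketch below assumes 0/1 labels, a learning rate of 1 and the `> 0` step convention, and uses a hypothetical helper `perceptron_converges`. It runs the update rule on AND, which is linearly separable, and on XOR, which is not. For XOR, no epoch can ever finish without a mistake. An error-free epoch would leave the weights unchanged and so exhibit a separating line, and no such line exists.

```python
from typing import Sequence, Tuple


def step(z: float) -> int:
    """Step activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def perceptron_converges(
    X: Sequence[Tuple[float, float]], y: Sequence[int], max_epochs: int = 1000
) -> bool:
    """Run the perceptron rule (learning rate 1) on 2-D inputs and report
    whether any epoch ends with zero misclassifications within max_epochs."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(max_epochs):
        errors = 0
        for (x1, x2), target in zip(X, y):
            error = target - step(w[0] * x1 + w[1] * x2 + b)
            if error:
                w[0] += error * x1
                w[1] += error * x2
                b += error
                errors += 1
        if errors == 0:
            return True
    return False


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
print("AND converges:", perceptron_converges(inputs, [0, 0, 0, 1]))  # → True
print("XOR converges:", perceptron_converges(inputs, [0, 1, 1, 0]))  # → False
```

Raising `max_epochs` does not change the XOR result. The failure is structural, not a matter of training time.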

Variations and extensions

To overcome its inherent constraints, numerous variations and extensions of the basic perceptron have been developed. The most pivotal is the multilayer perceptron, which introduces one or more hidden layers between the input and output layers. Trained with backpropagation, it can model complex, non-linear relationships. Other important adaptations include the voted perceptron and the pocket algorithm. The pocket algorithm keeps ("pockets") the best set of weights encountered during training, so it returns a useful classifier even for non-separable data. The support-vector machine can be viewed as a maximum-margin extension of the perceptron idea. Modern deep learning architectures are direct intellectual descendants of these early neural models. They typically replace the hard step function with activation functions such as the ReLU and are trained with gradient-based optimizers such as stochastic gradient descent.
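The pocket idea can be sketched as follows. This is a simplified variant, assumed for illustration. It scores every updated weight vector on the whole training set and keeps the best one seen. Gallant's original formulation instead tracks the longest run of consecutive correct classifications. The names `pocket_perceptron` and `count_correct`, the random visiting order and the iteration budget are all illustrative choices.

```python
import random
from typing import List, Sequence, Tuple


def step(z: float) -> int:
    """Step activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def count_correct(w: Sequence[float], b: float, X, y) -> int:
    """Number of training samples the weights (w, b) classify correctly."""
    return sum(
        step(sum(wj * xj for wj, xj in zip(w, xi)) + b) == t for xi, t in zip(X, y)
    )


def pocket_perceptron(X, y, max_iters: int = 1000, seed: int = 0) -> Tuple[List[float], float, int]:
    """Perceptron updates on randomly chosen samples, keeping ("pocketing")
    the weights with the most correct training classifications seen so far."""
    rng = random.Random(seed)
    w = [0.0] * len(X[0])
    b = 0.0
    best_w, best_b = list(w), b
    best_correct = count_correct(w, b, X, y)
    for _ in range(max_iters):
        if best_correct == len(X):
            break  # the pocketed weights already separate the data
        i = rng.randrange(len(X))
        xi, target = X[i], y[i]
        error = target - step(sum(wj * xj for wj, xj in zip(w, xi)) + b)
        if error == 0:
            continue  # correctly classified: no update
        w = [wj + error * xj for wj, xj in zip(w, xi)]
        b += error
        correct = count_correct(w, b, X, y)
        if correct > best_correct:  # better than the pocket: swap it in
            best_w, best_b, best_correct = list(w), b, correct
    return best_w, best_b, best_correct


xor_X = [(0, 0), (0, 1), (1, 0), (1, 1)]
xor_y = [0, 1, 1, 0]
pw, pb, pc = pocket_perceptron(xor_X, xor_y)
print(pw, pb, f"{pc}/{len(xor_X)} correct")
```

On XOR no line classifies all four points, so the pocketed weights can get at most three of them right. A plain perceptron stopped at an arbitrary point may instead hold weights that are worse than ones it passed through earlier.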