von Neumann architecture

Generated by GPT-5-mini


| von Neumann architecture | |
|---|---|
| Name | von Neumann architecture |
| Inventor | John von Neumann |
| Introduced | 1945 |
| Influence | Stored-program concept |

The von Neumann architecture is a computer architecture model in which a processing unit, memory, and input/output devices share a common communication pathway, and in which instructions and data are held in the same memory. It originated in mid-20th-century work on electronic computing and guided the development of early digital computers and modern processors. The model influenced designs across industry and academia, shaping the evolution of microprocessors, mainframes, and supercomputers.

History

Early conceptual roots trace to wartime and postwar collaborations among mathematicians and engineers, including John von Neumann, Alan Turing, Claude Shannon, and Howard Aiken, who worked at or with institutions such as the Institute for Advanced Study, Bell Labs, Harvard University, and Los Alamos National Laboratory. Von Neumann's 1945 "First Draft of a Report on the EDVAC" synthesized ideas from the ENIAC project and the EDVAC design discussions at the Moore School of Electrical Engineering, and it went on to shape later stored-program machines such as the EDSAC and the Manchester Mark 1, while catalyzing interest at organizations including Princeton University. Funding and implementation were influenced by agencies such as the Office of Scientific Research and Development and by later projects at IBM, Intel, and national laboratories like Lawrence Livermore National Laboratory, which supported the transition from relay and vacuum-tube machines to transistorized systems built on work at Bell Labs and Fairchild Semiconductor.

Architecture and Components

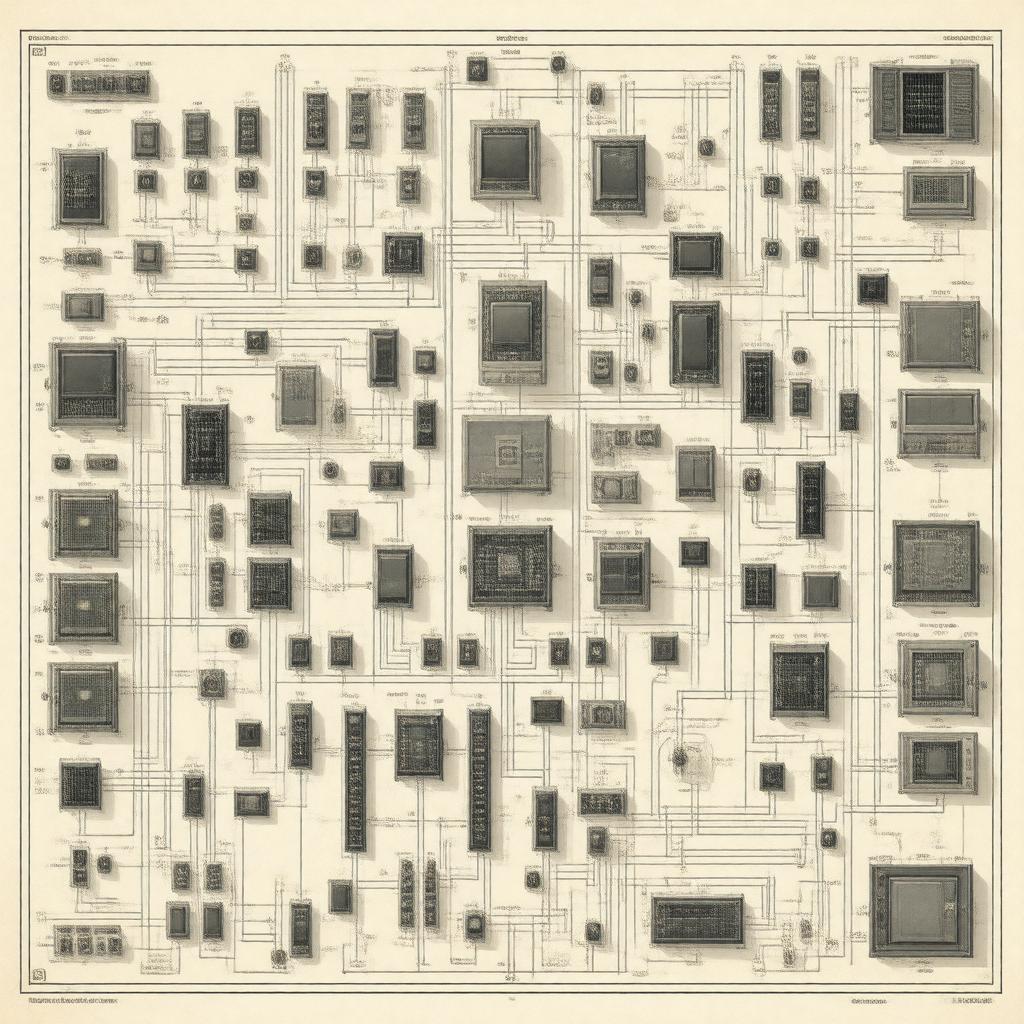

The model specifies four core components: a central processing unit (comprising an arithmetic logic unit, or ALU, and a control unit), a single shared memory that stores both instructions and data, input/output mechanisms, and a bus system that connects them. Maurice Wilkes realized these concepts in the EDSAC at the University of Cambridge, and John Backus's FORTRAN at IBM targeted them from a high-level language; commercial machines from IBM and UNIVAC followed the same organization. Later implementers include engineers at Intel, AMD, and Motorola, and research groups at MIT and Carnegie Mellon University. The ALU concept drew on earlier work by figures including George Stibitz and Konrad Zuse, while control-unit designs evolved alongside microprogramming, which Maurice Wilkes introduced and which was later adopted in architectures produced by companies like DEC.
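The shared-memory idea can be illustrated with a minimal sketch. The 16-bit word layout, the opcode numbers, and the helper names `encode` and `decode` below are hypothetical choices made for illustration; they do not correspond to any historical machine. The point is only that a single memory holds both the program and its operands, and that the same word can be read either as a number or as an instruction.

```python
from typing import Tuple

# Hypothetical 16-bit word layout: high 4 bits = opcode, low 12 bits = address.
OPCODES = {0x1: "LOAD", 0x2: "ADD", 0x3: "STORE", 0xF: "HALT"}


def encode(opcode: int, address: int) -> int:
    """Pack an opcode and an address into one 16-bit word."""
    return ((opcode & 0xF) << 12) | (address & 0xFFF)


def decode(word: int) -> Tuple[str, int]:
    """Interpret a word as an instruction: (mnemonic, address)."""
    return OPCODES.get(word >> 12, "???"), word & 0xFFF


# One memory holds both the program (cells 0-3) and its data (cells 4-6).
memory = [
    encode(0x1, 4),  # LOAD  4
    encode(0x2, 5),  # ADD   5
    encode(0x3, 6),  # STORE 6
    encode(0xF, 0),  # HALT
    20, 22, 0,       # data cells
]

print(memory[0])          # the word read as a plain number
print(decode(memory[0]))  # the same word read as an instruction
```

Nothing in the memory itself marks a cell as code or data; only the way the control unit uses a word decides its meaning.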

Instruction Processing and Control Flow

Instruction sequencing in the model follows a fetch–decode–execute cycle, supervised by a control unit that coordinates the registers and a program counter holding the address of the next instruction. Early instruction set designs were developed at Bell Labs, Los Alamos National Laboratory, and Princeton University, against a theoretical background supplied by the computability work of Alonzo Church, Stephen Kleene, and Alan Turing. Control flow mechanisms including conditional branching, subroutine linkage, interrupts, and pipelining were refined by researchers at IBM, Xerox PARC, Intel, and Texas Instruments, while compiler and language work by John Backus, Grace Hopper, and Dennis Ritchie mapped high-level constructs onto von Neumann instruction models.
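The cycle can be sketched as a small interpreter loop. This is an illustrative toy under stated assumptions: the accumulator machine, its instruction names (`LOAD`, `ADD`, `SUB`, `STORE`, `JNZ`, `HALT`), and the function `run` are all hypothetical, and instructions are stored as `(opcode, operand)` tuples rather than encoded words to keep the example short.

```python
def run(memory, max_steps=1000):
    """Run a toy accumulator machine until HALT and return the memory."""
    pc = 0   # program counter: address of the next instruction
    acc = 0  # accumulator register
    for _ in range(max_steps):
        op, arg = memory[pc]  # fetch the instruction the counter points at
        pc += 1               # by default, continue with the next cell
        # Decode the opcode and execute the matching operation.
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "SUB":
            acc -= memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "JNZ":     # conditional branch: jump if acc is non-zero
            if acc != 0:
                pc = arg
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {op}")
    raise RuntimeError("step limit exceeded")


# Program and data share one memory: cells 0-7 are code, 8-10 are data.
program = [
    ("LOAD", 8),    # 0: acc = total
    ("ADD", 9),     # 1: acc += n
    ("STORE", 8),   # 2: total = acc
    ("LOAD", 9),    # 3: acc = n
    ("SUB", 10),    # 4: acc -= 1
    ("STORE", 9),   # 5: n = acc
    ("JNZ", 0),     # 6: repeat while n != 0
    ("HALT", 0),    # 7: stop
    0,              # 8: total
    4,              # 9: n
    1,              # 10: constant one
]

result = run(program)
print(result[8])  # sum 4 + 3 + 2 + 1
```

The conditional branch at cell 6 is the only instruction that overrides the default increment of the program counter, which is how the loop is formed.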

Memory and the von Neumann Bottleneck

Because instructions and data share a common memory and bus, throughput can be limited by bandwidth and latency, a constraint that John Backus named the von Neumann bottleneck in his 1977 Turing Award lecture. Efforts to mitigate it involved cache hierarchies, virtual memory, and parallel memory architectures developed by teams at IBM, Intel, Sun Microsystems, and organizations such as SRI International and Hewlett-Packard. Innovations like dynamic RAM, invented by Robert Dennard at IBM and commercialized by Intel with its 1103 chip, and error-correcting schemes used at Sandia National Laboratories and Los Alamos National Laboratory addressed scaling, while theoretical analysis by scholars at Stanford University, UC Berkeley, and Cornell University examined memory consistency models and bandwidth optimization.
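How a cache relieves pressure on the shared bus can be sketched with a small simulation. The class below models a direct-mapped cache that only tracks which memory block occupies each line; the class name, the default sizes, and the access pattern are assumptions chosen for illustration, not a description of any particular processor.

```python
class DirectMappedCache:
    """Toy direct-mapped cache that counts hits and misses (no data stored)."""

    def __init__(self, num_lines=8, line_size=4):
        self.num_lines = num_lines
        self.line_size = line_size
        self.blocks = [None] * num_lines  # block number held by each line
        self.hits = 0
        self.misses = 0

    def access(self, address):
        """Record one access; return True on a hit, False on a miss."""
        block = address // self.line_size  # which memory block is addressed
        index = block % self.num_lines     # the single line it may occupy
        if self.blocks[index] == block:
            self.hits += 1
            return True
        self.blocks[index] = block         # miss: fetch block over the bus
        self.misses += 1
        return False


# A loop that sweeps the same 16 addresses ten times.
cache = DirectMappedCache()
for _ in range(10):
    for address in range(16):
        cache.access(address)

print(cache.hits, cache.misses)  # 156 hits, 4 misses
```

Only the four misses require a transfer from main memory, so 156 of the 160 accesses never touch the shared bus. Workloads with less locality than this repeated sweep would see a lower hit rate.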

Implementations and Variants

Commercial and academic implementations include serial and parallel machines produced by UNIVAC, IBM, Digital Equipment Corporation, and Cray Research, and microprocessor lines from Intel, AMD, and Motorola. Variants and departures, such as the Harvard architecture (which uses separate instruction and data memories), dataflow machines, and reduced instruction set computing (RISC) designs, were advanced at MIT, Stanford University, UC Berkeley, and ARM Holdings. Research into non-von Neumann paradigms appeared in projects at Los Alamos National Laboratory, Lawrence Berkeley National Laboratory, IBM Research, and European organizations such as the Fraunhofer Society, while quantum computing initiatives at IBM Q, Google Quantum AI, and D-Wave Systems explored alternative models.

Impact and Legacy

The model shaped computer engineering curricula at institutions like MIT, Stanford University, and Carnegie Mellon University and informed the work of architects and language designers at IBM, Intel, Microsoft Research, and Bell Labs. Its influence extends to supercomputing centers such as Oak Ridge National Laboratory and Argonne National Laboratory, and it continues to intersect with advances in parallelism, compiler design, and hardware security pursued by researchers at DARPA, the NSA, and leading universities. Debates about energy efficiency, concurrency, and alternative architectures persist at conferences hosted by IEEE and ACM and at international workshops, where historical threads from von Neumann-era projects remain central to ongoing innovation.