Szilard engine

Generated by GPT-5-mini


| Szilard engine | |

|---|---|

| |

| Name | Szilard engine |

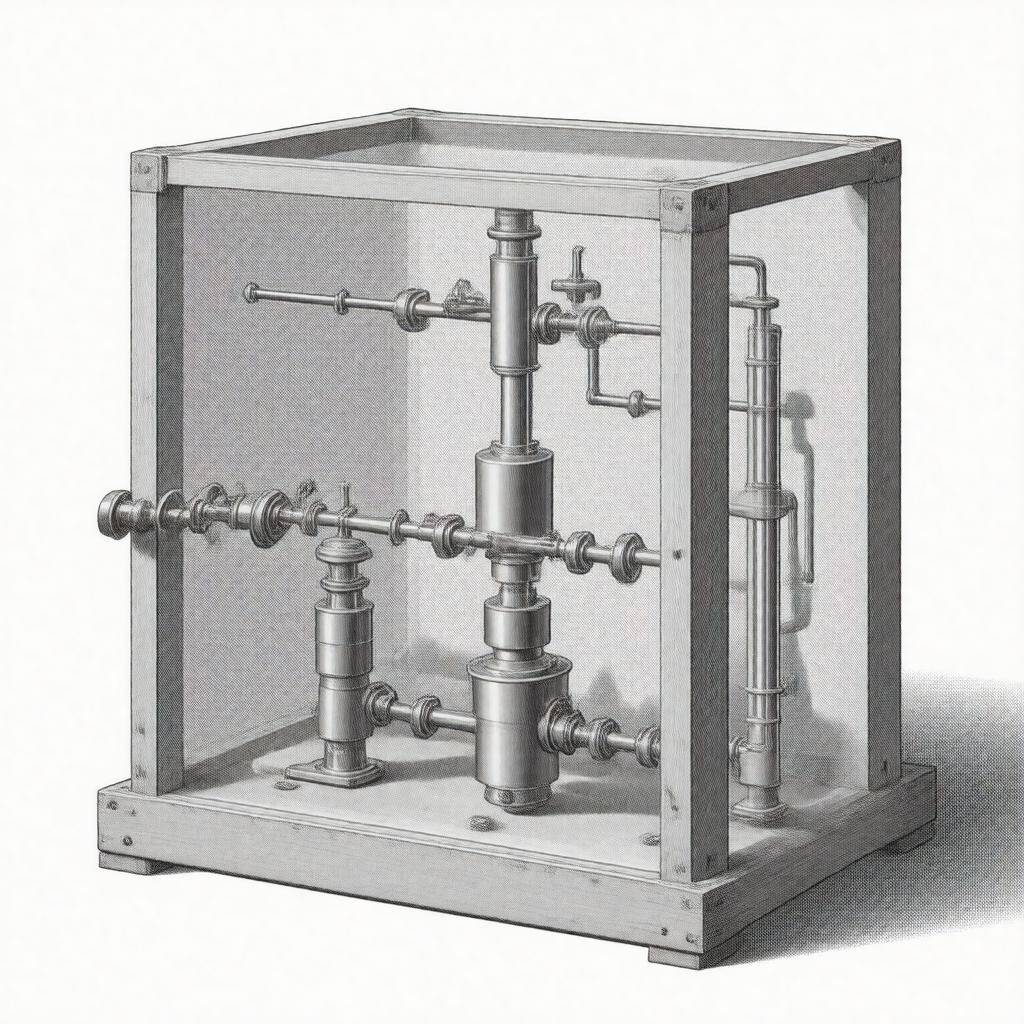

| Caption | Conceptual diagram of a single-particle heat engine with measurement and feedback |

| Inventor | Leo Szilard |

| Introduced | 1929 |

| Field | Statistical mechanics, Thermodynamics, Quantum information |

| Components | Partition, single particle, piston, heat bath, memory register |

The Szilard engine is a theoretical heat engine conceived by Leo Szilard as a thought experiment linking thermodynamics and information theory. It shows how measuring a single-particle gas and acting on the result can apparently extract work from a single heat bath. The puzzle draws on Maxwell's demon, introduced by James Clerk Maxwell, on Ludwig Boltzmann's statistical ideas, and on the foundations of statistical mechanics. Analyses of the Szilard engine influenced later developments in information theory, quantum thermodynamics, and the study of the thermodynamic cost of computation, notably by Rolf Landauer and Charles H. Bennett at IBM Research.

Introduction

Szilard introduced the device in 1929 while working in Berlin, where his activities spanned physics at the University of Berlin and industrial research linked to Siemens. The engine consists of a single molecule of an ideal gas in a box coupled to a thermal reservoir at temperature T, a movable partition, and an idealized measuring apparatus whose outcome is recorded in a memory register. Szilard's analysis raised questions connecting Boltzmann's H-theorem and Josiah Willard Gibbs' ensemble concepts to the thermodynamics of information, a link later formalized by Rolf Landauer and developed by Charles H. Bennett in work on reversible computation and logical irreversibility.

Thought experiment and model

The canonical model places a single particle in a box, often idealized as one-dimensional, in contact with a heat bath at temperature T. An agent inserts an impenetrable partition at the midpoint and measures which side the particle occupies. The partition then acts as a piston. The one-molecule gas expands isothermally against it and pushes it toward the empty side, while heat drawn from the bath replaces the energy delivered as work. The entropy bookkeeping for this ideal gas echoes results such as the Sackur–Tetrode equation. The setup also belongs to the conceptual lineage of Maxwell's demon, a problem debated by figures such as Erwin Schrödinger and Albert Einstein. Szilard's argument asked two questions. First, how could information acquisition, embodied in a correlation between the memory and the particle's position, reduce entropy locally? Second, would that reduction violate the second law of thermodynamics in Rudolf Clausius' formulation?
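The work delivered in the expansion step follows from a short derivation. The derivation assumes that the particle behaves as a classical ideal gas, so that its time-averaged pressure obeys P V = k_B T for a single molecule. It also assumes that the expansion from V/2 to V is quasi-static and isothermal:

$$
W = \int_{V/2}^{V} P(V')\,dV' = \int_{V/2}^{V} \frac{k_B T}{V'}\,dV' = k_B T \ln\frac{V}{V/2} = k_B T \ln 2 .
$$

The particle's mean kinetic energy is fixed by T, so its internal energy does not change. By the first law, the heat absorbed from the bath during the expansion therefore equals this work.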

Thermodynamic analysis and information cost

Szilard found that a measurement with a binary outcome allows at most k_B T ln 2 of work to be extracted. This tied thermodynamic work to a quantity later formalized by Claude Shannon as information entropy. The same bridge underlies Rolf Landauer's 1961 proposal that erasing one bit of memory dissipates at least k_B T ln 2 of heat. Charles H. Bennett later applied and refined this principle in analyses of reversible computing and thermodynamic reversibility, relevant to the limits of von Neumann-style architectures. Subsequent formal treatments used Boltzmann-style microcanonical analyses, Gibbs ensembles, and the fluctuation relations developed by Gavin Crooks, Christopher Jarzynski, and others to reconcile measurement and feedback with the second law.
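The link between work and Shannon entropy becomes explicit if the partition is allowed to sit off-center. The sketch below places the partition at a volume fraction p. This generalization of the midpoint case is an illustrative assumption, as are the function names `szilard_average_work` and `binary_entropy_bits`. It also assumes an error-free measurement and a quasi-static isothermal expansion to the full volume, and it computes the mean work per cycle:

```python
import math


def szilard_average_work(p: float, kT: float = 1.0) -> float:
    """Mean work per cycle when the partition leaves volume fraction p on the left.

    Illustrative assumptions: error-free measurement, classical ideal
    one-molecule gas, quasi-static isothermal expansion to the full volume.
    Found left (probability p): work kT*ln(1/p). Found right: kT*ln(1/(1-p)).
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    return kT * (p * math.log(1.0 / p) + (1.0 - p) * math.log(1.0 / (1.0 - p)))


def binary_entropy_bits(p: float) -> float:
    """Shannon entropy H(p), in bits, of a binary outcome with probability p."""
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


# Compare mean work (in units of kT ln 2) with the measurement's Shannon entropy.
for p in (0.5, 0.25, 0.1):
    w = szilard_average_work(p)
    print(f"p = {p:<4}  <W>/(kT ln 2) = {w / math.log(2):.4f}  "
          f"H(p) = {binary_entropy_bits(p):.4f} bits")
```

The two printed columns agree for every p. Under these assumptions, the mean work equals k_B T ln 2 times the Shannon entropy of the measurement outcome. It peaks at p = 1/2, where it is exactly k_B T ln 2.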

Quantum Szilard engine

Extensions into the quantum regime prompted studies relating the model to Werner Heisenberg's uncertainty relations, John von Neumann's measurement postulate, Niels Bohr's complementarity, and Paul Dirac's formalism. Quantum variants describe the particle by Schrödinger-equation wavefunctions in confining potentials. They must also account for quantum correlations, entanglement, and decoherence, topics studied by researchers at the Perimeter Institute and the Institute for Quantum Optics and Quantum Information. These analyses use quantum channels, density-matrix formalisms, and resource theories from modern quantum information theory, which grew after Peter Shor's work. Their aims are to generalize Landauer bounds and to examine how work extraction is constrained by measurement backaction, with trade-offs articulated in studies by Vlatko Vedral and Sandu Popescu.
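A density-matrix version of the Landauer bound states that resetting a memory in state ρ to a fixed pure state releases at least k_B T S(ρ) of heat to the bath. Here S(ρ) = -Tr(ρ ln ρ) is the von Neumann entropy. The minimal sketch below applies this bound to a single-qubit memory. It assumes the standard setting of a memory interacting unitarily with a thermal bath, and the helper names are hypothetical. The 2x2 eigenvalues are computed in closed form so the sketch needs only the standard library:

```python
import math


def qubit_eigenvalues(a: float, d: float, b: complex) -> tuple[float, float]:
    """Eigenvalues of the Hermitian 2x2 matrix [[a, b], [conj(b), d]]."""
    half_trace = (a + d) / 2
    radius = math.sqrt(((a - d) / 2) ** 2 + abs(b) ** 2)
    return half_trace + radius, half_trace - radius


def von_neumann_entropy_nats(a: float, d: float, b: complex) -> float:
    """S(rho) = -Tr(rho ln rho) for a single-qubit density matrix."""
    lam_hi, lam_lo = qubit_eigenvalues(a, d, b)
    if abs(a + d - 1.0) > 1e-9 or lam_lo < -1e-12:
        raise ValueError("not a valid density matrix (trace 1, positive semidefinite)")
    # Zero eigenvalues contribute nothing (lim x ln x = 0 as x -> 0).
    return sum(-lam * math.log(lam) for lam in (lam_hi, lam_lo) if lam > 1e-15)


def reset_heat_bound(a: float, d: float, b: complex, kT: float = 1.0) -> float:
    """Landauer-type lower bound kT*S(rho) on the heat released to a bath at
    temperature T when the qubit memory is reset to a fixed pure state."""
    return kT * von_neumann_entropy_nats(a, d, b)


# Maximally mixed memory vs. a pure superposition with the same populations.
print(reset_heat_bound(0.5, 0.5, 0.0) / math.log(2))  # in units of kT ln 2
print(reset_heat_bound(0.5, 0.5, 0.5))
```

The maximally mixed memory costs one full unit of k_B T ln 2 to reset. The pure superposition |+⟩ has the same diagonal populations but costs nothing, because its coherences make it a zero-entropy state.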

Experimental realizations and implementations

Experiments have implemented Szilard-like cycles using colloidal particles in optical traps, single-electron boxes in mesoscopic circuits, and superconducting circuits of the kind developed at MIT and Yale University. Groups at laboratories such as the Max Planck Institutes and EPFL have demonstrated information-to-energy conversion consistent with Landauer bounds using microscopic feedback control. Nanopore and cold-atom platforms at Harvard University and the University of California, Berkeley have explored quantum and classical limits. These techniques draw on optical-tweezers technology pioneered by Arthur Ashkin and on microfabrication methods from MEMS initiatives that grew out of Bell Labs.
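Experiments test their measured heat against a very small energy scale. The sketch below converts the bound k_B T ln 2 into joules, zeptojoules, and electronvolts, using the exact SI values of the Boltzmann constant and the elementary charge. The function name is hypothetical, and the 20 mK temperature is only an illustrative value of the kind reached in dilution refrigerators used for superconducting circuits:

```python
import math

K_B = 1.380649e-23               # Boltzmann constant in J/K (exact SI value)
JOULES_PER_EV = 1.602176634e-19  # elementary charge in C, i.e. J per eV (exact SI value)


def landauer_limit_joules(temperature_k: float) -> float:
    """Minimum heat k_B T ln 2 dissipated when one bit is erased at temperature T."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    return K_B * temperature_k * math.log(2)


# Room temperature and an illustrative 20 mK cryogenic temperature.
for T in (300.0, 0.020):
    e = landauer_limit_joules(T)
    print(f"T = {T:g} K: {e:.3e} J = {e * 1e21:.4f} zJ = {e / JOULES_PER_EV:.3e} eV")
```

At 300 K the bound is about 2.87 × 10^-21 J, roughly 0.018 eV. This explains why room-temperature demonstrations need feedback control and heat measurements at the scale of a few zeptojoules.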

Implications for Maxwell's demon and statistical mechanics

The Szilard engine is central to modern resolutions of the Maxwell's demon paradox. Following Rolf Landauer and Charles H. Bennett, these resolutions show how accounting for measurement, information storage, and erasure restores compliance with the second law. Bennett argued that the unavoidable cost lies in erasing the demon's memory rather than in the measurement itself. The engine has catalyzed research linking thermodynamic irreversibility to logical irreversibility. It has also influenced studies in nonequilibrium statistical mechanics pursued by scholars at Princeton University and the University of Cambridge, and shaped operational perspectives in quantum thermodynamics. Szilard's idea explicitly connects each bit of information with an energy scale of k_B T ln 2. It continues to guide theoretical and experimental efforts at Caltech, Stanford University, Imperial College London, and national laboratories studying the limits of computation, measurement, and energy conversion.
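The bookkeeping behind this resolution can be tallied for one complete cycle. The sketch below is a toy ledger, not a dynamical model. The function name and the `erasure_overhead` parameter are hypothetical. It assumes a midpoint partition and an error-free measurement treated as thermodynamically free, in line with Bennett's argument. It further assumes a quasi-static expansion and a memory reset that costs the Landauer minimum plus any non-negative overhead:

```python
import math


def szilard_cycle_ledger(kT: float = 1.0, erasure_overhead: float = 0.0) -> dict[str, float]:
    """Energy bookkeeping for one complete cycle, including the memory reset.

    Illustrative assumptions: midpoint partition, free and error-free
    measurement, quasi-static isothermal expansion, and a reset costing the
    Landauer minimum kT ln 2 plus a non-negative overhead for a non-ideal eraser.
    """
    if erasure_overhead < 0:
        raise ValueError("a physical eraser cannot beat the Landauer bound")
    work_extracted = kT * math.log(2)               # isothermal expansion from V/2 to V
    erasure_cost = kT * math.log(2) + erasure_overhead  # resetting the one-bit memory
    return {
        "work_extracted": work_extracted,
        "erasure_cost": erasure_cost,
        "net_work": work_extracted - erasure_cost,  # never positive
    }


for overhead in (0.0, 0.1):
    print(szilard_cycle_ledger(erasure_overhead=overhead))
```

In the ideal case the net work is exactly zero. Any real eraser makes it negative, so the closed cycle cannot act as a perpetual motion machine of the second kind.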

Category:Thermodynamics Category:Thought experiments Category:Quantum information theory