McCulloch–Pitts neuron

Generated by GPT-5-mini

| McCulloch–Pitts neuron | |

|---|---|

| |

| Name | McCulloch–Pitts neuron |

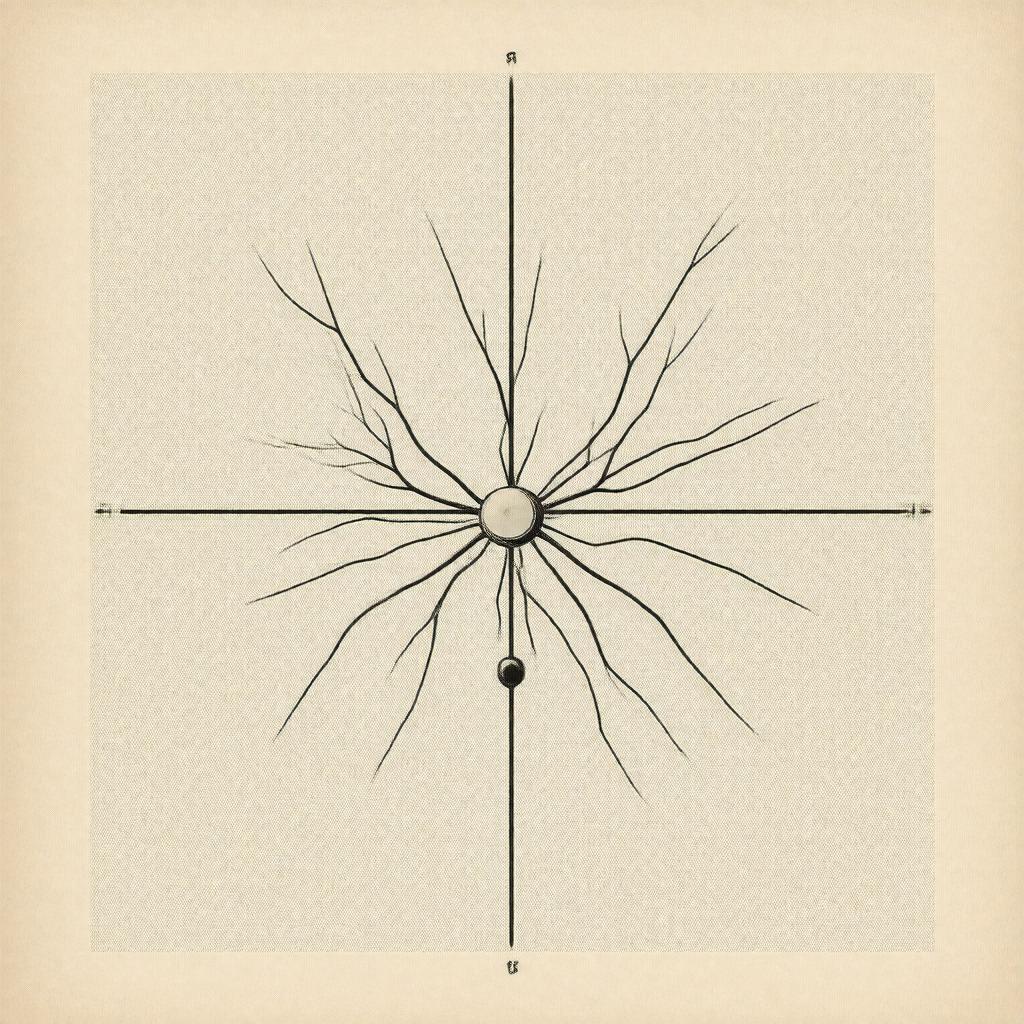

| Caption | Early schematic representation |

| Invented by | Warren S. McCulloch; Walter Pitts |

| Year | 1943 |

| Fields | Neuroscience; Computer science; Cybernetics |

| Notable works | "A Logical Calculus of the Ideas Immanent in Nervous Activity" |

The McCulloch–Pitts neuron is a seminal formal model of a binary threshold unit introduced by Warren S. McCulloch and Walter Pitts in 1943. It gave an abstract mathematical description of neural activity that connected ideas circulating among McCulloch, Pitts, Norbert Wiener, John von Neumann, and early networks at Harvard University and the University of Chicago, placing the model at the intersection of neuroscience, mathematics, philosophy, cybernetics, and logic. The model's simplicity enabled later developments in artificial intelligence, computer science, signal processing, and cognitive science, and it figured in debates involving Alan Turing, Claude Shannon, Marvin Minsky, and John McCarthy.

History and development

McCulloch and Pitts proposed the neuron in their 1943 paper, "A Logical Calculus of the Ideas Immanent in Nervous Activity". The paper was shaped by discussions involving Norbert Wiener and other contemporaries, by the ideas of Alan Turing, and by work at institutions such as the Massachusetts Institute of Technology, Harvard University, the University of Chicago, and research groups associated with U.S. Armed Forces priorities. The formulation drew on logical systems developed by Kurt Gödel, Alfred North Whitehead, and Bertrand Russell, and it is associated with computational ideas in the work of John von Neumann and Alonzo Church. Early reception included commentary by Claude Shannon and discussion at cybernetics conferences involving W. Ross Ashby, while later historical treatments connected the model to accounts by Marvin Minsky, Seymour Papert, Oliver Selfridge, and colleagues of the authors such as Jerome Lettvin.

Mathematical model

The McCulloch–Pitts neuron is defined as a binary-valued unit that sums weighted binary inputs and applies a threshold activation: the output is 1 when the weighted sum reaches the threshold and 0 otherwise. The unit is formulated within a propositional logic framework influenced by the logical investigations of Gottlob Frege and Ludwig Wittgenstein. Inputs correspond to firing events analogous to descriptions used by Charles Sherrington and Alan Hodgkin in neurophysiology, while weights and thresholds were treated as integers, which enables equivalence with the finite automata studied by Noam Chomsky and Emil Post. The model was shown to emulate basic logical gates such as conjunction, disjunction, and negation, paralleling constructions in George Boole's algebra and early computing circuits by Howard Aiken and John von Neumann, and it was formalized using methods akin to those in Alonzo Church's lambda calculus.
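The threshold rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the notation of the 1943 paper: the function names (`mp_neuron`, `gate_and`, `gate_or`, `gate_not`) are hypothetical, the firing condition is taken to be "weighted sum greater than or equal to the threshold", and a negative integer weight stands in for an inhibitory input.

```python
from typing import Sequence


def mp_neuron(inputs: Sequence[int], weights: Sequence[int], threshold: int) -> int:
    """Return 1 if the weighted sum of binary inputs reaches the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    if any(x not in (0, 1) for x in inputs):
        raise ValueError("inputs must be binary (0 or 1)")
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def gate_and(a: int, b: int) -> int:
    # Both inputs must fire: 1 + 1 >= 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def gate_or(a: int, b: int) -> int:
    # A single firing input is enough: 1 >= 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def gate_not(a: int) -> int:
    # Assumption of this sketch: inhibition is modelled as a weight of -1.
    # With threshold 0 the unit fires only when the input is silent.
    return mp_neuron([a], [-1], threshold=0)
```

For example, `gate_and(1, 1)` and `gate_or(0, 1)` both return 1, while `gate_and(1, 0)` and `gate_not(1)` return 0. Only the integer weights and threshold differ between the gates; the unit itself is the same.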

Computational properties and expressiveness

McCulloch–Pitts networks were proved capable of representing any finite propositional logic formula when arranged in feedforward architectures, a result that resonates with universality themes in Alan Turing's work and was later formalized by Marvin Minsky and John McCarthy. Compositions of threshold units with feedback realize finite-state machines, related to the studies of automata by Michael Rabin and Dana Scott, and can simulate the combinational logic used in Claude Shannon's switching theory and the feedback systems of Norbert Wiener's cybernetics. Through later developments, the model established early connections to the computational complexity questions explored by Stephen Cook and Richard Karp. It also underpinned proofs of representational completeness that influenced combinatorial constructions in the style of Paul Erdős and provided context for later universal approximation results by researchers such as George Cybenko.
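Both claims above, that feedforward arrangements can represent propositional formulas and that compositions with feedback behave like finite-state machines, can be illustrated with a small sketch. The code below is an assumption-laden example rather than a construction from the original paper: `mp_neuron`, `xor_network`, and `parity` are hypothetical names, negative weights model inhibition, and the feedback loop is simulated by carrying one unit's output into the next time step.

```python
from typing import Iterable, Sequence


def mp_neuron(inputs: Sequence[int], weights: Sequence[int], threshold: int) -> int:
    """Binary threshold unit: fire when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def xor_network(a: int, b: int) -> int:
    """Two-layer feedforward network computing (a OR b) AND NOT (a AND b)."""
    h_or = mp_neuron([a, b], [1, 1], threshold=1)    # hidden unit: disjunction
    h_and = mp_neuron([a, b], [1, 1], threshold=2)   # hidden unit: conjunction
    # Output fires when h_or is active and h_and (inhibitory, weight -1) is not.
    return mp_neuron([h_or, h_and], [1, -1], threshold=1)


def parity(bits: Iterable[int]) -> int:
    """Two-state automaton: the state flips on every 1 in the input stream."""
    state = 0  # start in the "even number of ones" state
    for bit in bits:
        # The previous output is fed back as an input on the next step.
        state = xor_network(state, bit)
    return state
```

Here `xor_network` needs a hidden layer because exclusive-or cannot be computed by a single threshold unit, and `parity([1, 0, 1, 1])` returns 1 because the stream contains an odd number of ones.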

Learning and training limitations

McCulloch–Pitts neurons lack intrinsic mechanisms for adaptive weight adjustment. Their formulation presumes fixed integer synaptic weights and thresholds set by design, an approach that differs from the learning algorithms developed later by Frank Rosenblatt, Paul Werbos, Geoffrey Hinton, David Rumelhart, and Yann LeCun. Early attempts to introduce plasticity invoked ideas from Donald Hebb and experimental neurophysiology from Eric Kandel and Rodolfo Llinás, but the original model provided no internal gradient-based or statistical learning rule analogous to stochastic gradient descent or the backpropagation formulated by Werbos. Consequently, building large McCulloch–Pitts networks required combinatorial design or external procedures, such as those used in perceptron systems and the analytical methods pursued by Marvin Minsky and Seymour Papert.
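To make "combinatorial design" concrete, the sketch below searches exhaustively over small integer weights and thresholds for a single unit that reproduces a given truth table. It is an illustrative external procedure under stated assumptions, not a method from the original paper: the names `fires` and `find_unit` and the search bound are hypothetical, and the unit fires when the weighted sum is greater than or equal to the threshold.

```python
from itertools import product
from typing import Dict, List, Optional, Sequence, Tuple

TruthTable = Dict[Tuple[int, ...], int]


def fires(inputs: Sequence[int], weights: Sequence[int], threshold: int) -> int:
    """Binary threshold unit with fixed integer weights."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) >= threshold else 0


def find_unit(table: TruthTable, bound: int = 2) -> Optional[Tuple[List[int], int]]:
    """Try every integer weight vector and threshold in [-bound, bound].

    Returns the first (weights, threshold) pair that matches the table,
    or None if no single unit within the bound does.
    """
    n_inputs = len(next(iter(table)))
    values = range(-bound, bound + 1)
    for *weights, threshold in product(values, repeat=n_inputs + 1):
        if all(fires(x, weights, threshold) == y for x, y in table.items()):
            return list(weights), threshold
    return None


AND_TABLE: TruthTable = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR_TABLE: TruthTable = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}
```

Calling `find_unit(AND_TABLE)` returns some weight and threshold assignment that realizes conjunction, whereas `find_unit(XOR_TABLE)` returns `None`: no single threshold unit computes exclusive-or, so the designer has to add units by hand. The search space grows exponentially with the number of inputs, which is one reason hand design does not scale.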

Applications and influence on neural networks

Despite its simplicity, the McCulloch–Pitts neuron influenced early computing machines at Bell Labs, RAND Corporation, and MIT Lincoln Laboratory, as well as conceptual models in psychology and cognitive science laboratories led by Jerome Bruner, Ulric Neisser, and Donald Hebb and sensory studies in the style of Hubel and Wiesel. The model shaped architectures used in symbolic AI advanced by John McCarthy and Allen Newell, provided a substrate for the cybernetic control ideas of Norbert Wiener and W. Ross Ashby, and inspired hardware implementations in switching networks developed by Claude Shannon and John von Neumann. Its legacy is visible in modern deep learning lineages traced through Frank Rosenblatt's perceptron, Marvin Minsky's critiques, and the resurgence led by Yann LeCun, Yoshua Bengio, and Geoffrey Hinton, and in industrial applications at IBM, Google, Microsoft Research, Facebook AI Research, and OpenAI.

Criticisms and theoretical limitations

Critics noted that the binary, synchronous, and deterministic nature of the McCulloch–Pitts neuron omits the stochastic, temporal, and graded dynamics emphasized by experimentalists such as Alan Hodgkin and Edgar Adrian and by theoretical neuroscientists such as Christof Koch and Eve Marder. Philosophers and logicians, including commentators influenced by Bertrand Russell and critics inspired by Ludwig Wittgenstein, argued that the model's logicist framing oversimplified cognitive phenomena addressed by Noam Chomsky and Jerry Fodor. Subsequent theoretical work by Seymour Papert, Marvin Minsky, Donald Michie, and computational complexity theorists such as Leslie Valiant highlighted scalability, learnability, and representation issues that required richer models. These arguments motivated the stochastic, recurrent, and continuous-activation formulations pursued by David Rumelhart, Paul Werbos, Jürgen Schmidhuber, and Geoffrey Hinton.