Perceptron

Generated by GPT-5-mini


| Perceptron | |
|---|---|
| Name | Perceptron |
| Invented | 1957 |
| Author | Frank Rosenblatt |
| Field | Artificial intelligence, Machine learning |
| Institutions | Cornell Aeronautical Laboratory, IBM, DARPA |

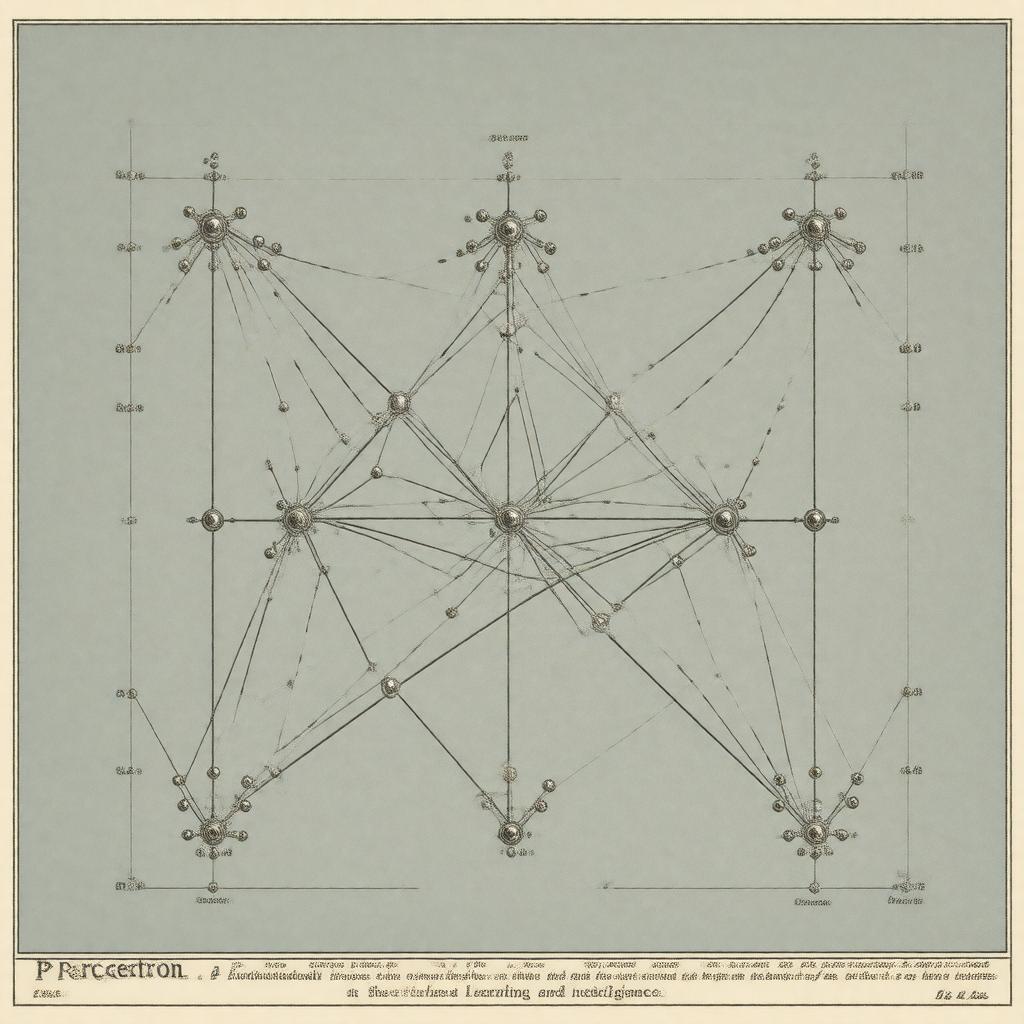

The perceptron is a foundational linear binary classifier and one of the earliest artificial neural network models. It was introduced in the late 1950s by Frank Rosenblatt at the Cornell Aeronautical Laboratory and later developed with support from the Office of Naval Research and the United States Navy. It influenced research at institutions such as IBM and the Massachusetts Institute of Technology, as well as projects funded by DARPA, and played a central role in debates involving figures like Marvin Minsky, Seymour Papert, John McCarthy, Claude Shannon, and Norbert Wiener. The model catalyzed pattern recognition work at Bell Labs, Stanford University, and Harvard University, and it drew criticism that affected funding decisions by agencies including the National Science Foundation.

History

Rosenblatt proposed the perceptron in 1957 while at the Cornell Aeronautical Laboratory, publishing demonstrations and hardware implementations that attracted attention from researchers at Harvard University, the Massachusetts Institute of Technology, Bell Labs, and IBM. Early experiments connected to projects at United States Navy laboratories and grants from the Office of Naval Research produced electromechanical and optical devices that were reported at conferences attended by representatives from DARPA and the National Aeronautics and Space Administration. The 1969 book by Marvin Minsky and Seymour Papert analyzed the limitations of single-layer networks and influenced policymakers at the National Science Foundation and the U.S. Department of Defense, contributing to a downturn in neural network funding and a shift toward research at institutions like Carnegie Mellon University and Stanford University. Renewed interest during the 1980s and 1990s at centers such as the University of Toronto and the University of California, Berkeley, followed by later breakthroughs at Google and DeepMind, recontextualized the perceptron as a pedagogical building block for the multilayer networks used by researchers including Geoffrey Hinton, Yann LeCun, and Yoshua Bengio.

Model and Learning Algorithm

The perceptron model, conceived by Rosenblatt, comprises an input vector, a weight vector, a bias term, and a step activation: the output is one class when the weighted sum of the inputs plus the bias is positive and the other class otherwise. Its online learning rule processes one example at a time and updates the weights only when an example is misclassified, connecting to learning theories studied at Princeton University, Columbia University, and Caltech. The original algorithm reduces training errors through these incremental updates, which resemble procedures later formalized in statistical learning theory by figures at Bell Labs, Cambridge University, and ETH Zurich; these developments influenced work by Vladimir Vapnik and Alexey Chervonenkis on generalization bounds. Hardware realizations at IBM and proposals at Stanford Research Institute illustrated implementation concerns, while algorithmic variants informed research at Rutgers University, the University of Chicago, and the University of Michigan. The learning rule inspired convergence proofs studied alongside optimization theory at New York University and Imperial College London, and it was linked to convex loss minimization approaches pursued at Microsoft Research, AT&T Bell Labs, and Yahoo Research.
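The model and update rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than Rosenblatt's original formulation: the function names `predict` and `train_perceptron`, the `epochs` and `lr` parameters, the ±1 label encoding, and the AND-gate toy data are all assumptions made for the example.

```python
from typing import List, Sequence, Tuple


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Step activation: return +1 if w.x + b > 0, otherwise -1."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else -1


def train_perceptron(
    samples: Sequence[Sequence[float]],
    labels: Sequence[int],
    epochs: int = 100,   # assumed cap on passes over the data
    lr: float = 1.0,     # assumed learning rate
) -> Tuple[List[float], float]:
    """Online perceptron rule: update only on misclassified examples."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, y in zip(samples, labels):
            if predict(weights, bias, x) != y:
                # Move the hyperplane toward the misclassified example.
                weights = [w + lr * y * xi for w, xi in zip(weights, x)]
                bias += lr * y
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: training is done
            break
    return weights, bias


# Toy data: logical AND, which is linearly separable (labels in {-1, +1}).
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [-1, -1, -1, 1]
w, b = train_perceptron(X, y)
predictions = [predict(w, b, x) for x in X]
```

Because AND is linearly separable, the loop stops after a finite number of updates and `predictions` matches `y`. On data that is not linearly separable the loop would simply run until the `epochs` cap.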

Mathematical Properties and Limitations

Mathematically, the perceptron is a linear classifier whose decision boundary is a hyperplane determined by the weights and bias, a fact central to analyses developed at the University of Cambridge, Princeton University, and ETH Zurich. The perceptron convergence theorem guarantees that training stops after a finite number of updates when the data are linearly separable, a result refined in work associated with Vladimir Vapnik, Alexey Chervonenkis, and researchers at Columbia University. Conversely, the model cannot represent functions that are not linearly separable, such as XOR, a limitation highlighted by Marvin Minsky and Seymour Papert and discussed in seminars at MIT and Harvard University. Capacity measures related to the Vapnik–Chervonenkis dimension were formalized by Vladimir Vapnik and influenced complexity analyses at Stanford University and the University of California, Berkeley, while error bounds and sample complexity were examined in collaborations involving Microsoft Research and IBM Research. Connections between perceptron dynamics and stochastic gradient descent were explored in theoretical work at the Courant Institute and the University of Washington.
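The convergence guarantee and the XOR limitation can be made concrete with a short derivation. The notation ($\mathbf{w}$, $b$, $R$, $\gamma$, $M$) is assumed here for illustration and does not come from the article. The bound is the classical mistake bound usually attributed to Novikoff, stated for the bias-free form with weights initialized to zero.

Suppose every training input satisfies $\|\mathbf{x}_i\| \le R$ and some unit vector $\mathbf{w}^*$ separates the data with margin $\gamma$. With the update $\mathbf{w} \leftarrow \mathbf{w} + y_i \mathbf{x}_i$ applied on each mistake, the total number of mistakes $M$ satisfies:

$$
y_i\,(\mathbf{w}^* \cdot \mathbf{x}_i) \ge \gamma > 0 \ \text{ for all } i
\quad\Longrightarrow\quad
M \le \left(\frac{R}{\gamma}\right)^{2}
$$

For XOR, take the output to be 1 when $w_1 x_1 + w_2 x_2 + b > 0$ and 0 otherwise. The four input patterns would require:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad b \le 0 \\
(1,0) \mapsto 1 &: \quad w_1 + b > 0 \\
(0,1) \mapsto 1 &: \quad w_2 + b > 0 \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0
\end{aligned}
$$

Adding the second and third inequalities gives $w_1 + w_2 + 2b > 0$, so $w_1 + w_2 + b > -b \ge 0$, which contradicts the fourth. No choice of weights and bias satisfies all four conditions, so a single perceptron cannot compute XOR.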

Variants and Extensions

Extensions of the perceptron include the multilayer perceptron, popularized in textbooks and courses at the University of Toronto, Columbia University, and the University of California, Berkeley, and trained with backpropagation as advanced by researchers at Bell Labs and Stanford University. Kernelized perceptrons and online large-margin variants emerged from research groups at the University of California, Berkeley, Microsoft Research, and Yahoo Research, and they relate to the support vector machines developed by Vladimir Vapnik and teams at AT&T Bell Labs. Other adaptations include averaged perceptron methods used in natural language processing at Carnegie Mellon University and the University of Pennsylvania, voted and committee perceptrons examined at the University of Oxford and the University of Cambridge, and stochastic and regularized versions studied at ETH Zurich and Imperial College London. Hardware and neuromorphic implementations inspired work at IBM Research and Intel Labs, as well as neuromorphic computing initiatives associated with DARPA and European Commission programs.
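As one illustration of the kernelized variant mentioned above, the following sketch trains a perceptron in dual form, where each training example carries a mistake counter instead of the model holding an explicit weight vector. The degree-2 polynomial kernel, the function names, the `epochs` cap, and the XOR toy data are assumptions chosen for the example, not details taken from any specific published implementation.

```python
from typing import List, Sequence


def poly_kernel(a: Sequence[float], b: Sequence[float]) -> float:
    """Degree-2 polynomial kernel (assumed choice): (1 + a.b)^2."""
    return (1.0 + sum(ai * bi for ai, bi in zip(a, b))) ** 2


def kernel_predict(
    alphas: Sequence[int],
    samples: Sequence[Sequence[float]],
    labels: Sequence[int],
    x: Sequence[float],
) -> int:
    """Sign of the kernel expansion sum_i alpha_i * y_i * k(x_i, x)."""
    score = sum(
        a * y_i * poly_kernel(x_i, x)
        for a, x_i, y_i in zip(alphas, samples, labels)
    )
    return 1 if score > 0 else -1


def train_kernel_perceptron(
    samples: Sequence[Sequence[float]],
    labels: Sequence[int],
    epochs: int = 1000,  # assumed cap on passes over the data
) -> List[int]:
    """Dual perceptron: alpha[i] counts the mistakes made on example i."""
    alphas = [0] * len(samples)
    for _ in range(epochs):
        mistakes = 0
        for i, (x, y) in enumerate(zip(samples, labels)):
            if kernel_predict(alphas, samples, labels, x) != y:
                alphas[i] += 1
                mistakes += 1
        if mistakes == 0:  # a clean pass: every example is classified correctly
            break
    return alphas


# XOR with labels in {-1, +1}: not linearly separable in the input space,
# but separable in the feature space induced by the quadratic kernel.
X_xor = [(0, 0), (0, 1), (1, 0), (1, 1)]
y_xor = [-1, 1, 1, -1]
alphas = train_kernel_perceptron(X_xor, y_xor)
xor_predictions = [kernel_predict(alphas, X_xor, y_xor, x) for x in X_xor]
```

The quadratic kernel implicitly adds the product feature $x_1 x_2$, which is what makes XOR separable here. The update is the same mistake-driven rule as in the linear case, applied to the implicit feature space.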

Applications and Impact

The perceptron influenced early applications in pattern recognition, optical character recognition projects at Bell Labs and IBM, and signal detection efforts funded by the United States Navy and NASA. It seeded methods used in speech recognition at AT&T Bell Labs and natural language processing at Carnegie Mellon University and the University of Pennsylvania, and its principles underpin classifiers deployed by companies such as Google, Facebook, and Microsoft. The debates sparked by critiques at MIT and publications by Marvin Minsky and Seymour Papert reshaped funding priorities at the National Science Foundation and led to institutional shifts at Stanford University and Carnegie Mellon University. A later resurgence, driven by researchers like Geoffrey Hinton and institutions including the University of Toronto and DeepMind, integrated perceptron concepts into deep learning systems used in industry and academia across organizations such as OpenAI, Amazon, and Apple Inc.