Law of large numbers

| Law of large numbers | |
|---|---|
| Name | Law of large numbers |
| Named after | Jakob Bernoulli |
| Field | Probability theory |
| First proved | 1713 |
| Related | Central limit theorem, Chebyshev's inequality, Kolmogorov |

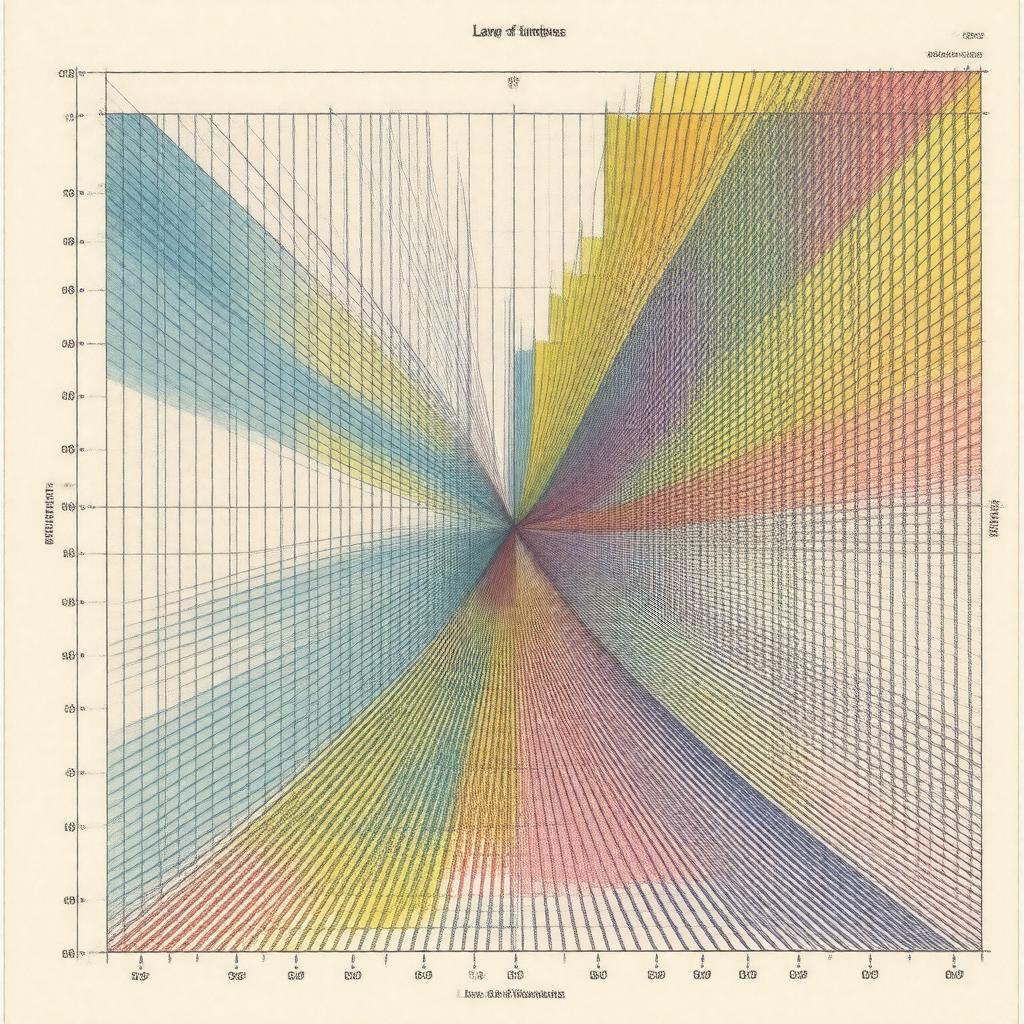

The law of large numbers describes how the average of repeated independent observations converges toward the expected value as the sample size increases. It connects formal results in probability theory with applications across finance, statistics, and the experimental sciences, and it underpins the long-run frequency interpretation of probability. The principle underlies the empirical estimation and risk assessment methods used by institutions such as the Royal Society, the Bank of England, and the World Health Organization.

Statement and variants

The most commonly cited formulations are the weak and strong laws, developed by figures such as Jakob Bernoulli, Chebyshev, and Kolmogorov. The weak law (WLLN) states that for a sequence of independent, identically distributed random variables with finite expectation, the sample means converge in probability to the common expectation; proofs often invoke Chebyshev's inequality (which requires an additional finite-variance assumption), Markov's inequality, or characteristic functions associated with Paul Lévy. The strong law (SLLN) strengthens this to almost sure convergence and was established in progressively more general forms by S.N. Bernstein and Andrey Kolmogorov, and later extended by Etemadi to pairwise independent, identically distributed sequences. For independent sequences, Kolmogorov's zero–one law shows that the event that the sample means converge has probability zero or one. Variants include versions for non-identically distributed sequences such as the Kolmogorov SLLN, triangular arrays treated by Lindeberg and Feller, and ergodic formulations proved within ergodic theory by George David Birkhoff.
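The difference between the two modes of convergence can be written out explicitly. The block below is a sketch in notation assumed here rather than taken from a particular source: S_n denotes the sum X_1 + ... + X_n of the first n variables and μ their common expectation.

```latex
% Assumed notation: S_n = X_1 + \dots + X_n and \mu = \mathbb{E}[X_1].
\begin{align*}
\text{WLLN:}\quad & \lim_{n\to\infty} P\!\left( \left| \frac{S_n}{n} - \mu \right| > \varepsilon \right) = 0
  \quad \text{for every } \varepsilon > 0, \\
\text{SLLN:}\quad & P\!\left( \lim_{n\to\infty} \frac{S_n}{n} = \mu \right) = 1 .
\end{align*}
```

Almost sure convergence implies convergence in probability, so any sequence satisfying the strong law also satisfies the weak law.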

History and development

The earliest rigorous statement is attributed to Jakob Bernoulli in Ars Conjectandi (published posthumously in 1713), motivated by the problem of inferring probabilities from observed frequencies in civil and economic affairs. Nineteenth-century contributors include Siméon Denis Poisson and Pierre-Simon Laplace, who used the idea in celestial mechanics and demographic studies linked to the French Academy of Sciences. Later in the nineteenth century, Pafnuty Chebyshev provided inequalities that formalized convergence rates; twentieth-century refinements came from Andrey Kolmogorov, who placed probability on a measure-theoretic footing within the Moscow State University school and under the influence of Sergei N. Bernstein. The development intersected with work by William Feller and John von Neumann during the expansion of rigorous probability in the United States and Scandinavia.

Mathematical formalism and proofs

Formal statements rely on the measure theory introduced by Émile Borel and Henri Lebesgue, with probability spaces axiomatised by Andrey Kolmogorov. Let X1, X2, ... be independent random variables with common expectation μ, and let S_n = X1 + ... + Xn. The sample mean S_n/n converges to μ in probability (WLLN) or almost surely (SLLN) under conditions expressed via variance bounds or summability conditions such as ΣVar(Xn)/n^2 < ∞, which is Kolmogorov's sufficient condition for the strong law. Proof techniques include truncation and maximal inequalities due to Kolmogorov, martingale convergence theorems developed by Joseph Doob, and characteristic function methods used by Paul Lévy. Extensions use mixing conditions studied by Bradley and the ergodic theorem of George David Birkhoff to handle dependent sequences encountered in time series models linked to Norbert Wiener and to Kolmogorov's work on stochastic processes.
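The simplest of these arguments, the variance-bound proof of the weak law, fits in two lines. The sketch below assumes, for illustration only, that the variables are independent and identically distributed with finite variance σ²; this is stronger than the weak law actually requires, but it is what Chebyshev's inequality needs.

```latex
% Assumption for this sketch only: X_1, X_2, \dots are i.i.d. with variance \sigma^2 < \infty,
% and S_n = X_1 + \dots + X_n.
\begin{align*}
\mathbb{E}\!\left[\frac{S_n}{n}\right] &= \mu, \qquad
\operatorname{Var}\!\left(\frac{S_n}{n}\right)
  = \frac{1}{n^2}\sum_{i=1}^{n}\operatorname{Var}(X_i) = \frac{\sigma^2}{n}, \\
P\!\left(\left|\frac{S_n}{n}-\mu\right| \ge \varepsilon\right)
  &\le \frac{\operatorname{Var}(S_n/n)}{\varepsilon^2}
  = \frac{\sigma^2}{n\varepsilon^2} \xrightarrow[n\to\infty]{} 0 .
\end{align*}
```

Independence enters in the variance computation, where the variance of the sum equals the sum of the variances. The bound also gives an explicit, if crude, rate: the deviation probability decays at least as fast as 1/n.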

Examples and applications

Practical examples span gambling odds analyzed with gambler's ruin models, actuarial tables of the kind compiled by Edmond Halley and used by modern insurance markets like Lloyd's of London, and polling averages published by Gallup and the Pew Research Center. In finance, portfolio return estimation in the framework of Harry Markowitz and risk aggregation in J.P. Morgan's models rely on LLN-type convergence. Industrial quality control methods from Walter A. Shewhart and acceptance sampling by Harold F. Dodge use sample means and proportions guided by law-of-large-numbers reasoning. In physics, the statistical mechanics frameworks developed by Ludwig Boltzmann and Josiah Willard Gibbs implicitly use ensemble averages justified by ergodic forms of the LLN. Epidemiological incidence rates of the kind estimated by John Snow, and modern surveillance by the Centers for Disease Control and Prevention, also rest on these limits.
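The convergence behind these applications is easy to observe numerically. The following is a minimal sketch, not a reference implementation: it tracks the running mean of simulated fair die rolls, whose expected value is 3.5. The function names `running_means` and `fair_die` and the fixed seed are assumptions made for this illustration.

```python
import random


def running_means(draw, n, seed=0):
    """Return the sample means after 1, 2, ..., n independent draws.

    `draw` is any function taking a random.Random instance and
    returning one numeric observation (a hypothetical interface).
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    total = 0.0
    means = []
    for k in range(1, n + 1):
        total += draw(rng)
        means.append(total / k)
    return means


def fair_die(rng):
    """One roll of a fair six-sided die; the expected value is 3.5."""
    return rng.randint(1, 6)


if __name__ == "__main__":
    means = running_means(fair_die, 100_000)
    # Print the running mean at increasing sample sizes.
    for k in (10, 100, 1_000, 10_000, 100_000):
        print(k, round(means[k - 1], 4))
```

Early running means can sit far from 3.5, while later ones settle close to it. Replacing `fair_die` with a draw that returns 0 or 1 turns the same code into a simulation of a poll proportion.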

Related limit theorems

Closely related results include the central limit theorem, which refines LLN convergence by describing fluctuations around the limiting value, and large deviations theory, formalized by S.R.S. Varadhan and Harald Cramér, which quantifies tail probabilities. Other connected results are Bernstein's inequality, Hoeffding's inequality, and the Glivenko–Cantelli theorem used in empirical process theory by Vladimir Vapnik and Alexey Chervonenkis. Martingale convergence theorems by Joseph Doob and mixingale results due to D. L. McLeish further generalize the modes of convergence relevant in the stochastic process theory developed by Andrey Kolmogorov and Norbert Wiener.
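Concentration inequalities such as Hoeffding's turn the qualitative statement of the LLN into an explicit finite-sample bound. For n independent observations taking values in [0, 1], Hoeffding's inequality bounds the probability that the sample mean deviates from its expectation by at least t by 2·exp(−2nt²). The sketch below compares that bound with a simulated deviation frequency for Bernoulli draws; the function names, parameters, and seed are assumptions chosen for illustration.

```python
import math
import random


def hoeffding_bound(n, t):
    """Hoeffding upper bound on P(|mean - E[mean]| >= t) for n
    independent observations in [0, 1], capped at 1."""
    return min(1.0, 2.0 * math.exp(-2.0 * n * t * t))


def empirical_deviation_rate(p, n, t, trials, seed=0):
    """Fraction of simulated samples of n Bernoulli(p) draws whose
    mean deviates from p by at least t."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(trials):
        successes = sum(rng.random() < p for _ in range(n))
        # Small tolerance guards against floating-point rounding
        # when the deviation lands exactly on the boundary t.
        if abs(successes / n - p) >= t - 1e-12:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    n, t = 200, 0.1
    print("bound    :", hoeffding_bound(n, t))
    print("simulated:", empirical_deviation_rate(0.5, n, t, trials=2_000))
```

The simulated frequency should fall below the bound, usually by a wide margin, because Hoeffding's inequality holds for every distribution on [0, 1] and is therefore conservative for any particular one.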