Harvard architecture

Generated by GPT-5-mini

| Harvard architecture | |
|---|---|
| Name | Harvard architecture |
| Type | Computer architecture |
| Introduced | Early 20th century (theoretical); popularized mid-20th century |
| Notable | Von Neumann architecture, Princeton architecture, Harvard Mark I, Harvard Mark II |
| Used in | Microcontrollers, DSPs, embedded systems, signal processing |
| Related | Instruction set, Memory hierarchy, Cache, Bus, Pipeline, RISC, CISC |

Harvard architecture is a computer architecture that keeps instructions and data in separate memories and moves them over separate signal pathways, often with distinct address spaces, buses, and access mechanisms. It contrasts with designs in which instructions and data share a single memory pathway. The approach appears in early electromechanical calculating machines and remains common in digital signal processors, microcontrollers, and other specialized computing platforms, and it has been adopted or explored by universities, manufacturers, and individual designers across many projects and product lines.

Overview

The model originates in the practice of storing program code and program data separately, so that an instruction fetch and a data access can proceed at the same time instead of contending for a single memory port. Implementations range from strictly separate physical memories to logically distinct regions within a unified memory system. Early academic laboratories at Harvard University and the Massachusetts Institute of Technology, and industrial laboratories such as Bell Labs and IBM, explored memory and pipeline separation alongside contemporaneous efforts at Princeton University and the University of California, Berkeley. Engineers and theorists of the Alan Turing era and researchers on the ENIAC project contributed to the discussion of memory separation, and later engineers at companies such as Intel, Motorola, Texas Instruments, ARM Holdings, and Atmel implemented practical variants in microcontrollers and digital signal processors.
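The benefit of simultaneous fetches can be illustrated with a back-of-the-envelope cycle count. The sketch below is an idealized model, not a description of any real processor: the `Instr` type, the two cost functions, and the six-instruction program are all hypothetical, and the model assumes every fetch and every data access takes exactly one cycle with no pipeline fill, stalls, or branches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    """Hypothetical instruction used only for this illustration."""
    name: str
    data_accesses: int  # number of loads/stores the instruction performs


def cycles_shared_bus(program):
    # One memory port: every instruction pays 1 cycle for its fetch plus
    # 1 cycle per data access, strictly one after another.
    return sum(1 + ins.data_accesses for ins in program)


def cycles_separate_buses(program):
    # Two ports: an instruction's data accesses overlap the fetch of the
    # following instruction, so each instruction costs whichever is longer.
    # Idealized assumption: no pipeline fill, stalls, or branches.
    return sum(max(1, ins.data_accesses) for ins in program)


# Assumed example workload: two load / compute / store sequences.
program = [
    Instr("LOAD", 1), Instr("ADD", 0), Instr("STORE", 1),
    Instr("LOAD", 1), Instr("MUL", 0), Instr("STORE", 1),
]

shared = cycles_shared_bus(program)
separate = cycles_separate_buses(program)
print(f"shared bus: {shared} cycles, separate buses: {separate} cycles")
```

Under these assumptions the shared-bus count is 10 cycles and the separate-bus count is 6. Real gains depend on the instruction mix, memory latencies, and pipeline design, so the ratio here should not be read as typical.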

Historical development

Separate instruction and data storage traces back to early electromechanical calculators and tabulators, notably the machines built at Harvard University by Howard Aiken and his collaborators. The Harvard Mark I, for example, read its instructions from punched paper tape and held its data in electromechanical counters. The design was compared and contrasted with the shared-memory, stored-program model associated with John von Neumann, the EDVAC design, and work at Princeton University. During the 1950s and 1960s, research groups at Bell Labs, IBM Research, and university centers such as Stanford University and Carnegie Mellon University published papers on memory organization that influenced microprogramming and cache strategies. In the 1970s and 1980s, companies including Intel Corporation, Motorola, Inc., Texas Instruments Incorporated, and National Semiconductor integrated separate code and data buses into microcontroller and signal-processor product lines, while contemporaneous work at Digital Equipment Corporation and Sun Microsystems explored the trade-offs against single-bus systems. The rise of embedded systems in the 1990s and 2000s, driven by manufacturers such as Microchip Technology, ARM Ltd., and Freescale Semiconductor, popularized Harvard-style memory mapping to meet real-time and low-power requirements.

Architecture and components

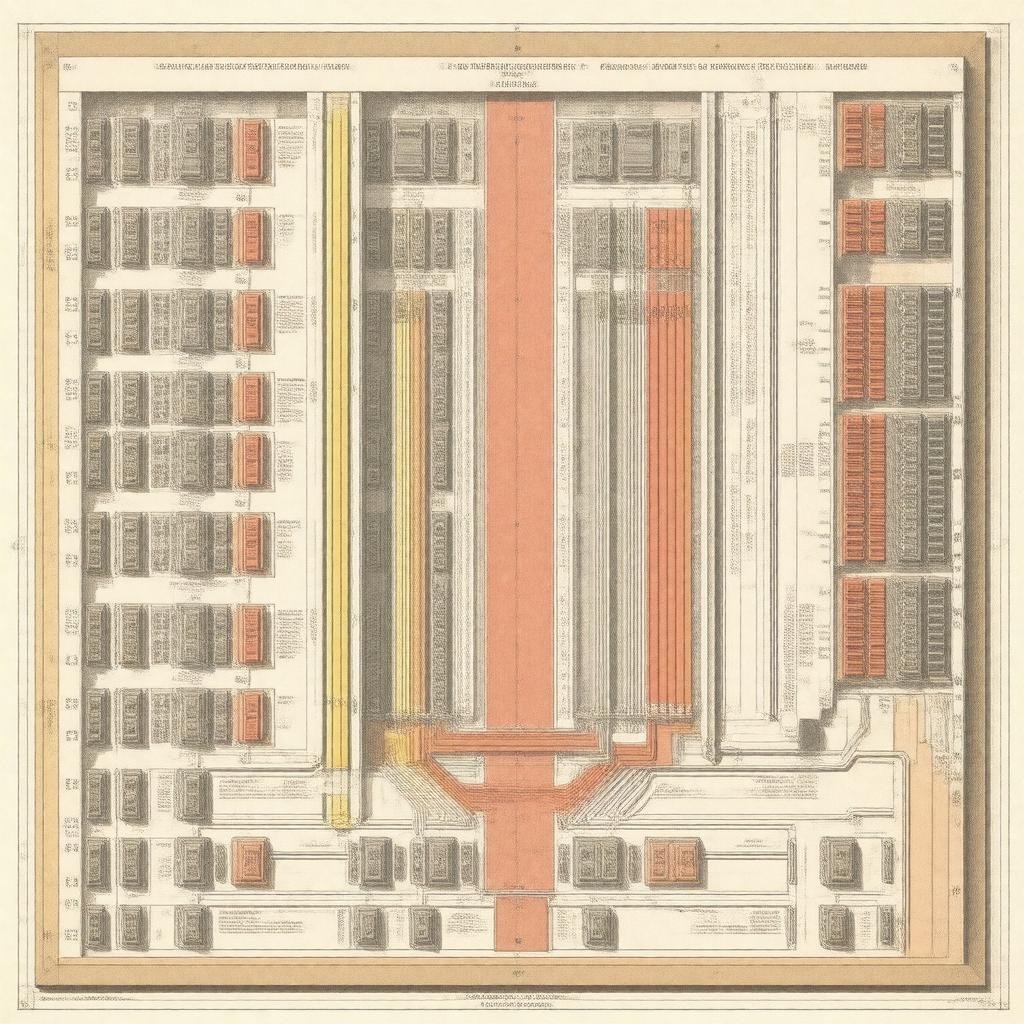

Implementations typically provide distinct memories (ROM, RAM, or flash) for instructions and for data, each connected to the processor by its own bus. Each bus may have a dedicated controller, and some designs add independent address generators and instruction registers. Hardware building blocks and contributors include memory technologies developed at Fairchild Semiconductor, bus architectures studied by IEEE working groups, microcontroller cores designed by ARM Holdings and Atmel Corporation, and DSP cores from Texas Instruments. Peripheral and I/O subsystems in systems-on-chip from Qualcomm and NXP Semiconductors integrate DMA controllers and bus arbiters to coordinate instruction fetch and data access. Pipeline stages and hazard-mitigation techniques were advanced in academic programs at MIT and the University of Illinois Urbana–Champaign and by commercial CPU teams at Intel and AMD.
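The separation of memories and address spaces described above can be sketched with a toy accumulator machine. Everything here is an assumption made for illustration: the `HarvardMachine` class, its five opcodes (`LDI`, `LOAD`, `ADD`, `STORE`, `HALT`), and the sample program do not correspond to any real instruction set.

```python
class HarvardMachine:
    """Toy accumulator machine with separate instruction and data memories."""

    def __init__(self, program, data_size=8):
        self.imem = tuple(program)   # instruction memory: read-only, own address space
        self.dmem = [0] * data_size  # data memory: read/write, own address space
        self.pc = 0                  # program counter indexes imem only
        self.acc = 0                 # accumulator register

    def step(self):
        op, arg = self.imem[self.pc]  # fetch over the "instruction bus"
        self.pc += 1
        if op == "LDI":               # load immediate into the accumulator
            self.acc = arg
        elif op == "LOAD":            # read over the "data bus"
            self.acc = self.dmem[arg]
        elif op == "ADD":
            self.acc += self.dmem[arg]
        elif op == "STORE":           # write over the "data bus"
            self.dmem[arg] = self.acc
        elif op == "HALT":
            return False
        else:
            raise ValueError(f"unknown opcode {op!r}")
        return True

    def run(self):
        while self.step():
            pass
        return self.dmem


# Hypothetical program: dmem[0] = 5; dmem[1] = 7 + dmem[0]
program = [
    ("LDI", 5), ("STORE", 0),
    ("LDI", 7), ("ADD", 0), ("STORE", 1),
    ("HALT", 0),
]

machine = HarvardMachine(program)
result = machine.run()
print(result[:2], machine.imem[0])
```

In this sketch `STORE 0` writes data address 0, while instruction address 0 still holds `("LDI", 5)`. The two address spaces are disjoint, so no store the program issues can alter its own code.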

Advantages and disadvantages

Proponents often cite three advantages. Parallel instruction and data access improves throughput, which benefits audio and video processing of the kind found in Sony and Panasonic products. Strong isolation of code from user data supports security models investigated by researchers at the University of Cambridge and ETH Zurich. Simplified timing suits the deterministic real-time systems favored in aerospace projects by Lockheed Martin and Boeing. Disadvantages include increased hardware complexity and cost, noted by engineers at Xerox PARC and Hewlett-Packard; potential inefficiencies when memory must be shared across the instruction and data domains, a concern in architectures from IBM and Oracle Corporation; and software toolchain complications, addressed by compiler teams at the GNU Project and by integrated development environments from Microsoft and the Eclipse Foundation.

Applications and implementations

Harvard-style arrangements are widely used in embedded microcontrollers from Microchip Technology (PIC series), in DSPs from Texas Instruments (TMS320 family), in audio and video processors from Analog Devices and NXP, and in specialized accelerators in products from Qualcomm and Apple Inc. Real-time controllers in automotive systems from Bosch and Continental AG and industrial controllers from Siemens commonly employ separate code and data memories or memory regions. Academic projects at ETH Zurich, the University of Michigan, and Princeton University have used Harvard variations for research prototypes, and military and aerospace contractors including Raytheon and Northrop Grumman have used the model in embedded avionics. Open-source platforms and toolchains from the Arduino community and RISC-V Foundation projects sometimes adopt Harvard or modified Harvard memory maps.

Comparative architectures

The primary contrast is with the von Neumann (or Princeton) architecture, popularized by the work of John von Neumann and embodied in the EDVAC design and in early systems at Princeton University. Processor families such as x86 from Intel and AMD largely follow the shared-memory model, while many RISC cores from ARM Holdings and experimental designs from the Berkeley RISC projects explore hybrid approaches. Academic comparisons appear in publications at ACM and IEEE venues, and industrial comparisons were made by teams at IBM Research, Sun Microsystems, and Google when evaluating memory and cache hierarchies for server and mobile platforms.

Variations and modern adaptations

Modern systems often implement "modified" Harvard architectures, in which a unified physical memory sits behind logically separate instruction and data caches. This approach is used in designs by Intel Corporation, ARM Ltd., and NVIDIA Corporation, and in research prototypes at MIT Lincoln Laboratory. Contemporary microcontrollers and SoCs from Qualcomm, MediaTek, and Samsung Electronics employ configurable bus fabrics and memory controllers that blend Harvard benefits with von Neumann flexibility. Academic explorations at UC Berkeley and industrial labs at Microsoft Research investigate formal verification of memory separation for security, while standards bodies such as IEEE and JEDEC influence memory interface specifications. In emerging domains, machine learning accelerators from Google (TPU) and from startups incubated at Y Combinator explore specialized memory access patterns that echo Harvard principles.
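One consequence of placing split caches in front of a unified memory is that code written through the data path is not automatically visible to the instruction path. The sketch below is a deliberately simplified model under stated assumptions: the `SplitCacheSystem` class and its method names are hypothetical, the caches are unbounded dictionaries, and the data cache is assumed to be write-back. Real processors differ widely; some keep the two caches coherent in hardware, while others require explicit cache-maintenance operations.

```python
class SplitCacheSystem:
    """Toy model: one unified memory behind separate instruction and data caches."""

    def __init__(self, size=16):
        self.memory = [0] * size  # single physical memory for code and data
        self.icache = {}          # address -> cached instruction word
        self.dcache = {}          # address -> cached data word (assumed write-back)

    def fetch(self, addr):
        # Instruction fetch: served from the I-cache, filled from memory on a miss.
        if addr not in self.icache:
            self.icache[addr] = self.memory[addr]
        return self.icache[addr]

    def load(self, addr):
        # Data load: served from the D-cache, filled from memory on a miss.
        if addr not in self.dcache:
            self.dcache[addr] = self.memory[addr]
        return self.dcache[addr]

    def store(self, addr, value):
        # Data store: lands in the D-cache; memory is only updated on a clean.
        self.dcache[addr] = value

    def clean_dcache(self):
        # Write every cached data word back to the unified memory.
        for addr, value in self.dcache.items():
            self.memory[addr] = value

    def invalidate_icache(self):
        # Drop cached instructions so the next fetch re-reads memory.
        self.icache.clear()


system = SplitCacheSystem()
system.memory[0] = 0xAA            # original "instruction" word at address 0

before = system.fetch(0)           # fills the I-cache with 0xAA
system.store(0, 0xBB)              # new code written through the data path
stale = system.fetch(0)            # I-cache still returns the old word

system.clean_dcache()              # push the new word out to unified memory
system.invalidate_icache()         # force the next fetch to re-read memory
fresh = system.fetch(0)

print(hex(before), hex(stale), hex(fresh))
```

In this model the second fetch returns the old word until the data cache is cleaned and the instruction cache is invalidated, which is the ordering problem that loaders, JIT compilers, and self-modifying code must handle on systems without hardware coherence between the two caches.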