Harvard architecture

Generated by DeepSeek V3.2

| Harvard architecture | |
|---|---|
| Name | Harvard architecture |
| Designer | Harvard University researchers |
| Introduced | 1940s |
| Design | Modified Harvard |

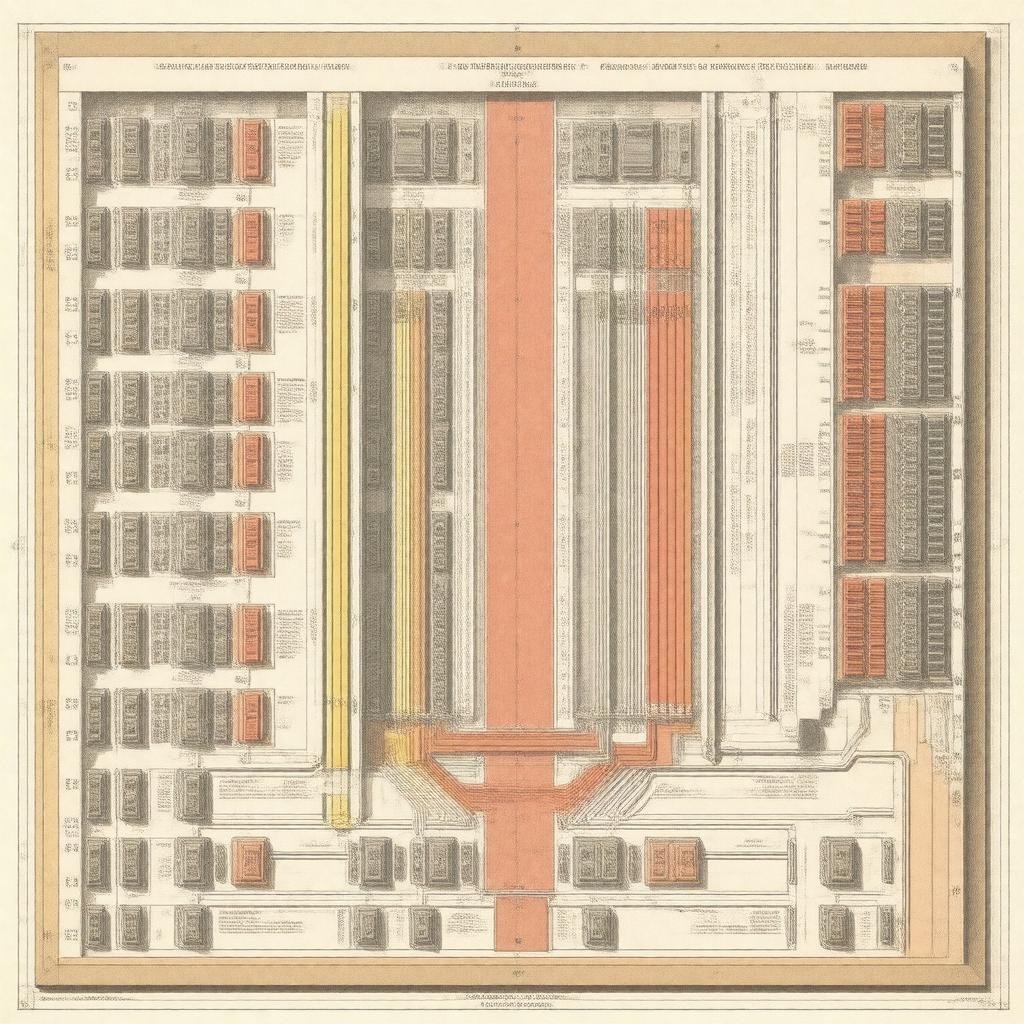

Harvard architecture is a computer architecture with physically separate storage and signal pathways for instructions and data. This separation distinguishes it from the more common von Neumann architecture, in which instructions and data share a single memory space and bus. The design originated with the Harvard Mark I in the 1940s and remains influential in modern digital signal processors and microcontrollers.

Overview

The core concept is to maintain distinct address spaces and data pathways for a machine's program and its working data. Because the two memories are physically separate, the central processing unit can fetch an instruction and access data at the same time without contending for a single bus. The earliest implementation was the Harvard Mark I, a relay-based computer developed at Harvard University with support from IBM. The approach later became a blueprint for specialized high-performance systems.

Design principles

The primary design principle is that code and data have independent buses and storage units. Because the two memories are independent, they can differ in word length, timing, and technology. For instance, instruction memory might be read-only memory or flash memory, while data memory uses random-access memory. The processor's control unit coordinates the two pathways, sequencing instruction fetches while the arithmetic logic unit operates on data. In a pure Harvard design the program counter addresses only the instruction memory, and ordinary load and store operations reach only the data memory, so a running program cannot modify its own instructions as data.
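The separation described above can be illustrated with a toy simulator. This is a minimal sketch, not a model of any real processor: the `HarvardMachine` class, its single accumulator, and the four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`) are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical instruction format: (opcode, operand). The opcodes are
# invented for this sketch and do not correspond to any real instruction set.
PROGRAM = (
    ("LOAD", 0),    # acc = data[0]
    ("ADD", 1),     # acc += data[1]
    ("STORE", 2),   # data[2] = acc
    ("HALT", 0),
)


@dataclass
class HarvardMachine:
    program: tuple   # instruction memory: an immutable tuple, standing in for ROM or flash
    data: list       # data memory: a mutable list, standing in for RAM
    pc: int = 0      # the program counter indexes `program` only
    acc: int = 0     # single accumulator register

    def step(self) -> bool:
        """Execute one instruction; return False once HALT is reached."""
        opcode, operand = self.program[self.pc]   # fetch from instruction space
        self.pc += 1
        if opcode == "LOAD":
            self.acc = self.data[operand]         # read from data space
        elif opcode == "ADD":
            self.acc += self.data[operand]
        elif opcode == "STORE":
            self.data[operand] = self.acc         # writes can reach data space only
        elif opcode == "HALT":
            return False
        else:
            raise ValueError(f"unknown opcode: {opcode}")
        return True

    def run(self) -> list:
        while self.step():
            pass
        return self.data


machine = HarvardMachine(program=PROGRAM, data=[2, 3, 0])
print(machine.run())   # [2, 3, 5]
```

Address 0 names one cell in `program` and a different cell in `data`, which mirrors the two independent address spaces. No opcode can write to `program`, so the sketch has no way to express self-modifying code.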

Comparison with von Neumann architecture

The principal alternative is the von Neumann architecture, which keeps instructions and data in a single unified memory. In a von Neumann machine, one bus carries both instructions and data, which gives rise to the von Neumann bottleneck: throughput is limited by the bandwidth of that shared path rather than by the processor itself. The unified approach is associated with John von Neumann and with J. Presper Eckert, and appears in machines such as the EDVAC and the IAS machine. Separating the two memories mitigates the bottleneck by allowing concurrent access, a critical advantage in real-time processing on devices such as the PIC microcontroller series from Microchip Technology.
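A back-of-the-envelope model makes the bottleneck concrete. The sketch below assumes every memory access costs exactly one bus cycle and that a split-bus machine overlaps each data access perfectly with an instruction fetch. Both assumptions are idealizations, and the function names are hypothetical. Real processors add caches, pipeline stalls, and variable memory latency.

```python
def shared_bus_cycles(instruction_fetches: int, data_accesses: int) -> int:
    """One bus: fetches and data accesses must take turns."""
    return instruction_fetches + data_accesses


def split_bus_cycles(instruction_fetches: int, data_accesses: int) -> int:
    """Two buses: accesses overlap, so the busier bus sets the total."""
    return max(instruction_fetches, data_accesses)


# Illustrative workload: 1000 instructions, 400 of which touch data memory.
shared = shared_bus_cycles(1000, 400)
split = split_bus_cycles(1000, 400)
print(shared, split, shared / split)   # 1400 1000 1.4
```

Under these assumptions the gain grows with the share of instructions that access data and approaches a factor of two when every instruction does.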

Historical development

The architecture takes its name from the Harvard Mark I, completed in 1944 under the direction of Howard H. Aiken and built by IBM; Grace Hopper was among its first programmers. This electromechanical computer read its instructions from punched paper tape and held its data in separate electromechanical counters. Subsequent electronic computers, such as the Manchester Baby, kept program and data in one memory, and this von Neumann model became the dominant paradigm for general-purpose computing. The Harvard design persisted in specialized roles, particularly embedded control. The Intel 8048 and Intel 8051 microcontrollers are early examples of chips with separate program and data memories.

Modern implementations and applications

Most contemporary processors use a modified Harvard architecture, which keeps separate caches for instructions and data while presenting a unified view of main memory, as in many ARM and PowerPC processors. Designs closer to pure Harvard are common in digital signal processors, such as those from Texas Instruments and Analog Devices, where deterministic, high-speed data flow is essential. Harvard-style separation is also used in many microcontrollers for automotive systems, industrial automation, and Internet of Things devices, including products from Atmel, NXP Semiconductors, and Renesas Electronics.
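The split-cache arrangement can be sketched as two small caches in front of one shared memory. The `SplitCacheMemory` class below is hypothetical and heavily simplified: its caches are unbounded, work on single words, and never evict, whereas real caches use fixed-size lines, sets, and replacement policies.

```python
class SplitCacheMemory:
    """Toy model: separate instruction and data caches over one main memory."""

    def __init__(self, memory):
        self.memory = list(memory)   # single, unified main memory
        self.icache = {}             # address -> word, filled by instruction fetches
        self.dcache = {}             # address -> word, filled by data loads
        self.misses = {"instruction": 0, "data": 0}

    def _read(self, cache, kind, address):
        if address not in cache:     # miss: go to main memory and fill the cache
            self.misses[kind] += 1
            cache[address] = self.memory[address]
        return cache[address]

    def fetch(self, address):
        """Instruction-side read, served through the instruction cache."""
        return self._read(self.icache, "instruction", address)

    def load(self, address):
        """Data-side read, served through the data cache."""
        return self._read(self.dcache, "data", address)


mem = SplitCacheMemory([10, 20, 30, 40])
mem.fetch(0)         # instruction miss
mem.fetch(0)         # instruction hit
mem.load(0)          # data miss: the data cache is filled independently
mem.load(3)          # data miss
print(mem.misses)    # {'instruction': 1, 'data': 2}
```

Both paths can read address 0 and see the same word, which is the unified view of memory, while hits and misses are tracked per cache because the two paths do not share cached state.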

Advantages and disadvantages

The primary advantage is higher potential performance: fetching instructions and data concurrently removes contention between the two, which suits real-time computing and pipelining. The separation can also improve security and reliability, because instruction memory can be protected from accidental or malicious modification. A significant disadvantage is greater complexity and cost, since the design needs more interconnect and two memory systems. It can also complicate compiler design, and self-modifying code is impossible in pure implementations. Finally, the fixed split can waste capacity when an application's code and data needs differ from the provisioned sizes, whereas a von Neumann machine can divide one memory freely between the two.
