particle filter

Generated by DeepSeek V3.2

Generated by DeepSeek V3.2Expansion Funnel Raw 61 → Dedup 0 → NER 0 → Enqueued 0

| particle filter | |

|---|---|

| |

| Name | Particle filter |

| Class | Monte Carlo method |

| Data structure | Probability distribution |

| Time complexity | O(N) |

| Space complexity | O(N) |

| Author | N. J. Gordon, D. J. Salmond, A. F. M. Smith |

| Year | 1993 |

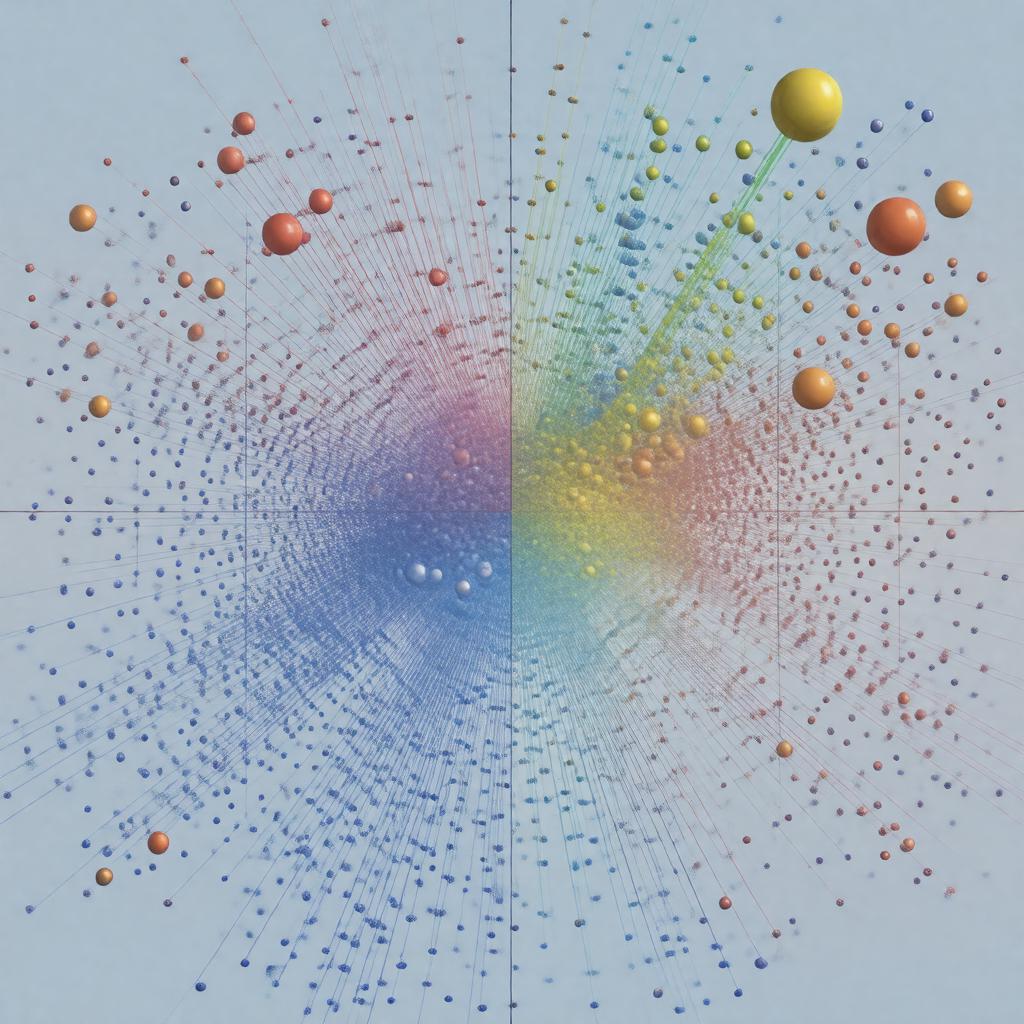

particle filter. A particle filter is a sophisticated Monte Carlo method used for estimating the internal states of a dynamic system when partial observations are made, and random perturbations are present. It is particularly powerful for handling nonlinear systems and non-Gaussian noise, where traditional methods like the Kalman filter may fail. The technique is widely applied across fields such as robotics, computer vision, and financial mathematics.

Overview

The core concept involves representing a probability distribution by a set of random samples, known as particles, which are propagated over time using a Bayesian inference framework. This approach was significantly advanced in a seminal 1993 paper by N. J. Gordon, D. J. Salmond, and A. F. M. Smith, building upon earlier sequential importance sampling ideas. The filter operates recursively, predicting future states and then updating these predictions based on new sensor data or measurements. Its ability to approximate complex posterior distributions makes it a cornerstone of modern statistical signal processing.

Algorithm

The standard algorithm, often called the Sequential Monte Carlo method, begins by initializing a population of particles from a prior distribution. Each iteration involves a prediction step, where particles are moved according to a state transition model, such as those describing kinematics in tracking problems. Following this, an update step assigns importance weights to each particle based on the likelihood of the latest observation from a measurement model. A critical resampling stage, inspired by techniques like those used in genetic algorithms, then duplicates high-weight particles and discards low-weight ones to mitigate sample impoverishment. This cycle of prediction, weighting, and resampling forms the basis for real-time estimation in systems like the Global Positioning System.

Variants and extensions

Numerous variants have been developed to address specific challenges or improve efficiency. The Rao-Blackwellized particle filter combines analytical integration with sampling to reduce variance, often applied in simultaneous localization and mapping. The auxiliary particle filter, introduced by Michael Pitt and Neil Shephard, performs resampling before propagation to better target high-likelihood regions. For high-dimensional state spaces, the ensemble Kalman filter blends particle methods with Gaussian assumptions, popular in numerical weather prediction at institutions like the European Centre for Medium-Range Weather Forecasts. Other extensions include the regularized particle filter to smooth distributions and methods incorporating Markov chain Monte Carlo steps.

Applications

Particle filters are deployed in a vast array of practical domains. In robotics, they are fundamental to Bayesian robot localization and tracking for platforms developed by Boston Dynamics. Within computer vision, they facilitate object tracking in video sequences and facial recognition systems. The financial mathematics community uses them for stochastic volatility modeling and credit risk assessment, as seen in models from J.P. Morgan. They also play crucial roles in geophysical inversion for oil exploration, target tracking in defense systems like those from Lockheed Martin, and computational biology for analyzing gene regulatory networks.

Mathematical foundation

The theoretical underpinnings rest on Bayes' theorem and the concept of recursive Bayesian estimation. The goal is to approximate the posterior density of the state given all observations, which is often analytically intractable. The filter relies on the law of large numbers to ensure convergence of the particle approximation to the true posterior as the number of particles increases. Key analysis involves studying the variance of importance weights and the properties of the resampling process, connecting to broader topics in Monte Carlo integration and ergodic theory. The framework generalizes hidden Markov models to continuous and nonlinear settings.

Limitations and challenges

A primary limitation is the curse of dimensionality, where performance degrades rapidly as the state space dimension increases, a problem also encountered in quantum Monte Carlo. Sample impoverishment can occur during resampling, leading to a loss of diversity among particles. The computational cost scales linearly with the number of particles, posing challenges for real-time applications on embedded systems like those in the Mars Rover. Tuning parameters, such as the proposal distribution, remains more an art than a science, often requiring heuristics. Despite these issues, ongoing research at laboratories like MIT Lincoln Laboratory and INRIA continues to develop more robust and efficient implementations.

Category:Monte Carlo methods Category:Estimation theory Category:Algorithms