law of large numbers

Generated by DeepSeek V3.2


| law of large numbers | |
|---|---|
| Name | Law of Large Numbers |
| Field | Probability theory |
| Discovered by | Gerolamo Cardano, Jacob Bernoulli |
| Statement | The average of results from many trials tends toward the expected value as more trials are performed. |
| Related to | Central limit theorem, Ergodic theory |

In probability theory, the law of large numbers is a fundamental theorem stating that the average of a large number of independent, identically distributed random variables with a finite expected value converges to that common expected value. First rigorously proven by Jacob Bernoulli for repeated two-outcome trials in his work Ars Conjectandi, the law provides the mathematical foundation for the intuitive principle that stable long-term results emerge from repeated random events. It is a cornerstone of statistics, actuarial science, and many empirical sciences, justifying the practice of estimation through sampling.

Statement of the law

The law of large numbers describes the stabilization of sample averages. Informally, it asserts that as the size of a random sample from a population increases, the sample mean tends to come closer to the population mean. For instance, while the outcome of a single flip of a fair coin is unpredictable, the proportion of heads over thousands of flips will almost certainly be very close to one-half. This principle underpins the reliability of opinion polls conducted by organizations like Gallup and the predictability of casino profits over many games. In its classical form for independent, identically distributed variables, the theorem requires the random variables to have a finite expected value; Andrey Kolmogorov showed that for the strong law this condition is both sufficient and necessary.
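The coin-flip example can be simulated directly. The following is a minimal Python sketch, assuming a fair coin modeled with Python's standard `random` module; the function name `running_proportions` and the checkpoint values are illustrative choices, not part of any standard API.

```python
import random


def running_proportions(num_flips: int, checkpoints: list[int], seed: int = 0) -> dict[int, float]:
    """Simulate fair coin flips and record the proportion of heads at selected checkpoints."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    wanted = set(checkpoints)
    heads = 0
    results: dict[int, float] = {}
    for i in range(1, num_flips + 1):
        heads += rng.random() < 0.5  # True (heads) adds 1, False (tails) adds 0
        if i in wanted:
            results[i] = heads / i
    return results


if __name__ == "__main__":
    for n, p in running_proportions(100_000, [10, 100, 1_000, 10_000, 100_000]).items():
        print(f"{n:>7} flips: proportion of heads = {p:.4f}")
```

With a fixed seed the output is reproducible; the proportions wander noticeably at small sample sizes and settle near 0.5 as the number of flips grows.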

Mathematical forms

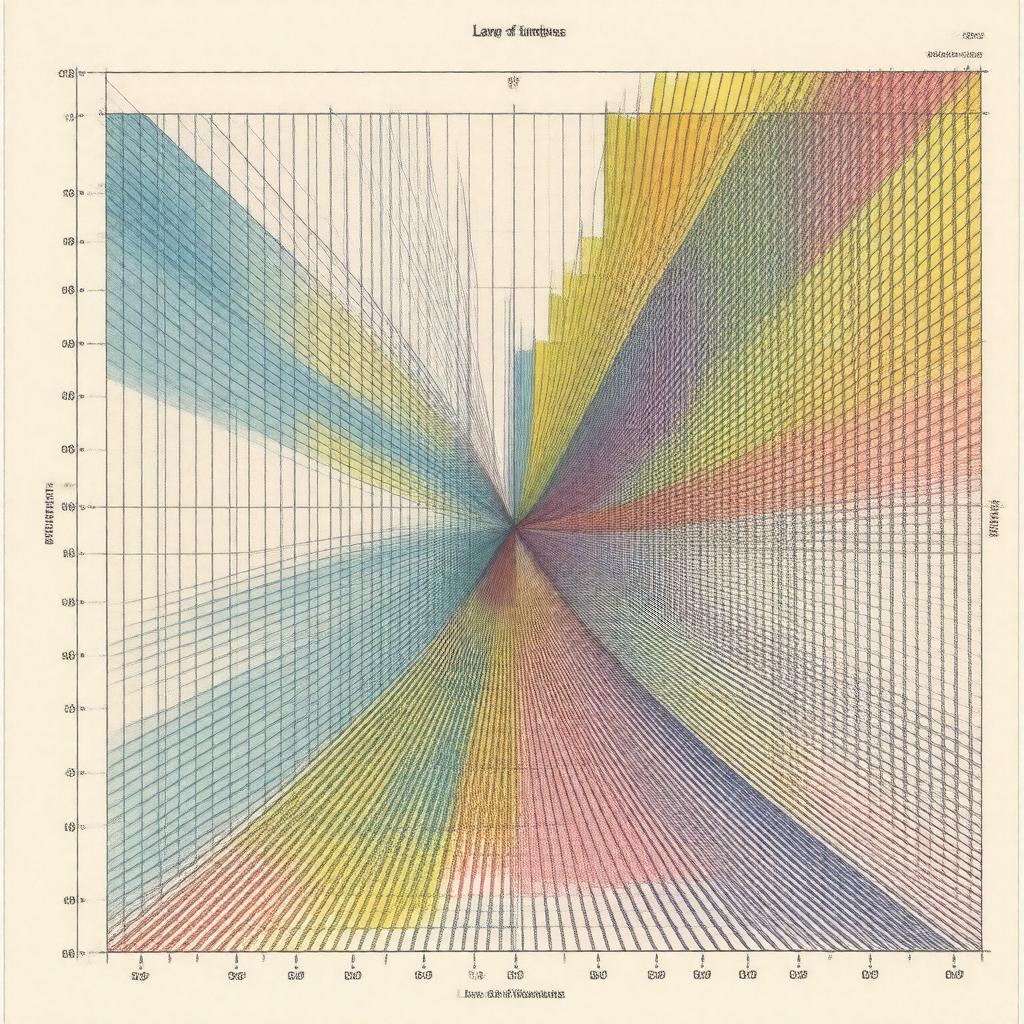

There are two primary mathematical versions: the weak law and the strong law. The **weak law of large numbers** states that for any positive number ε, the probability that the sample average deviates from the expected value by more than ε converges to zero (convergence in probability). This was proven for Bernoulli trials by Jacob Bernoulli and later generalized by Siméon Denis Poisson. The **strong law of large numbers** states that the sample average converges to the expected value with probability one, a form of almost sure convergence established by Émile Borel for Bernoulli trials and later by Andrey Kolmogorov for general independent, identically distributed variables. The strong law implies the weak law. The simplest proofs assume that the variables are independent with finite variance and apply Chebyshev's inequality; the most general versions for identically distributed variables, Khinchin's weak law and Kolmogorov's strong law, need only a finite mean.
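Written out in symbols, using notation chosen here for illustration (X_1, X_2, ... for the independent, identically distributed trials, μ for their common mean, and σ² for a variance that is assumed finite only in the last step), the two laws and the Chebyshev argument for the weak law read as follows.

```latex
\bar{X}_n = \frac{1}{n}\sum_{i=1}^{n} X_i, \qquad \mathbb{E}[X_i] = \mu

% Weak law: convergence in probability
\lim_{n\to\infty} \Pr\left( \lvert \bar{X}_n - \mu \rvert > \varepsilon \right) = 0
  \quad \text{for every } \varepsilon > 0

% Strong law: almost sure convergence
\Pr\left( \lim_{n\to\infty} \bar{X}_n = \mu \right) = 1

% Chebyshev step, assuming \operatorname{Var}(X_i) = \sigma^2 < \infty,
% so that \operatorname{Var}(\bar{X}_n) = \sigma^2 / n by independence:
\Pr\left( \lvert \bar{X}_n - \mu \rvert \ge \varepsilon \right)
  \le \frac{\sigma^2}{n\varepsilon^2} \xrightarrow[n\to\infty]{} 0
```

The last line proves the weak law under the extra finite-variance assumption: the bound shrinks like 1/n for any fixed ε.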

Examples and applications

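The law manifests in countless real-world phenomena. In insurance, underwriters in markets such as Lloyd's of London rely on it to set stable premiums: pooling risks across many independent policyholders makes the average claim per policy predictable. In quantum mechanics, the Born rule assigns probabilities to measurement outcomes, and the law explains why the relative frequencies observed over many repeated measurements approach those probabilities. The Monte Carlo method, developed at Los Alamos for nuclear weapons research in the 1940s, approximates complex integrals by averaging a function over random samples, with the law guaranteeing that the average converges to the true value. In finance, the efficient-market hypothesis holds that asset prices reflect all available information, an idea sometimes motivated by the argument that the independent errors of many traders tend to average out. In poker, including tournaments such as the World Series of Poker, the law implies that a skilled player's edge in expected value prevails over chance only across a large number of hands.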
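The Monte Carlo idea reduces to averaging. Below is a minimal Python sketch, assuming the integrand can be evaluated at uniformly random points of an interval; the helper name `monte_carlo_integral` is hypothetical, and the test integral ∫₀¹ 4/(1+x²) dx = π was chosen only because its exact value is known.

```python
import math
import random
from typing import Callable


def monte_carlo_integral(f: Callable[[float], float], a: float, b: float, n: int, seed: int = 0) -> float:
    """Estimate the integral of f over [a, b] as (b - a) times the average of f at n uniform points."""
    rng = random.Random(seed)  # seeded for reproducibility
    total = sum(f(rng.uniform(a, b)) for _ in range(n))
    # By the law of large numbers, total / n converges to the mean value of f on [a, b].
    return (b - a) * total / n


if __name__ == "__main__":
    # The integral of 4 / (1 + x^2) over [0, 1] equals pi, so estimates should approach math.pi.
    for n in (100, 10_000, 1_000_000):
        est = monte_carlo_integral(lambda x: 4 / (1 + x * x), 0.0, 1.0, n)
        print(f"n={n:>9}: estimate={est:.5f}, error={abs(est - math.pi):.5f}")
```

When the integrand has finite variance, the error typically shrinks in proportion to 1/√n, so each additional decimal digit of accuracy costs roughly a hundredfold more samples.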

History and development

The intuition behind the law dates back to Gerolamo Cardano in the 16th century, though he did not provide a proof. Jacob Bernoulli provided the first rigorous proof for Bernoulli trials in his 1713 posthumous publication Ars Conjectandi, calling it his "golden theorem". Siméon Denis Poisson later coined the term "law of large numbers" and extended it. In the 19th century, Pafnuty Chebyshev proved a more general weak law using the inequality now named after him, and his student Andrey Markov extended these methods in the early 20th century. This line of work led to the modern formulation by Andrey Kolmogorov in his 1933 monograph Grundbegriffe der Wahrscheinlichkeitsrechnung, which introduced the Kolmogorov axioms of probability and placed the law on a rigorous measure-theoretic foundation.

Relation to other theorems

The law of large numbers is closely connected to several key results in probability. The central limit theorem describes the fluctuations of the sample mean around the expected value, showing that, after scaling by √n, they approximately follow a normal distribution; the law of large numbers, by contrast, concerns only the value the sample mean converges to. The ergodic theorem in dynamical systems, developed by John von Neumann and George David Birkhoff, generalizes the idea to time averages converging to space averages. In statistics, the law justifies the consistency of estimators such as the sample mean. It also underpins the Glivenko–Cantelli theorem, which states that the empirical distribution function converges uniformly to the true distribution function with probability one.
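The Glivenko–Cantelli statement can be checked numerically for the uniform distribution on [0, 1], whose distribution function is F(x) = x. This Python sketch (the function name `max_ecdf_error_uniform` is hypothetical) computes the largest gap between the empirical distribution function of a sample and F, the quantity the theorem says tends to zero.

```python
import random


def max_ecdf_error_uniform(n: int, seed: int = 0) -> float:
    """Return the supremum over x of |F_n(x) - x| for n draws from Uniform(0, 1).

    F_n is the empirical distribution function of the sample; F(x) = x is the true one.
    """
    rng = random.Random(seed)  # seeded for reproducibility
    xs = sorted(rng.random() for _ in range(n))
    # F_n jumps from i/n to (i + 1)/n at the i-th smallest point (0-indexed),
    # so the supremum is attained just before or just after one of these jumps.
    return max(max((i + 1) / n - x, x - i / n) for i, x in enumerate(xs))


if __name__ == "__main__":
    for n in (10, 100, 1_000, 10_000, 100_000):
        print(f"n={n:>6}: sup |F_n - F| = {max_ecdf_error_uniform(n):.4f}")
```

The printed gap shrinks roughly like 1/√n; the same statistic is the basis of the Kolmogorov–Smirnov test.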

Limitations and misconceptions

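A common misapplication of the law to short sequences is the gambler's fallacy, the mistaken belief that past independent events influence future ones. The law does not imply that a streak of one outcome will be "corrected": early deviations are diluted by later trials, not reversed, and the absolute difference between the number of heads and half the number of flips typically grows, on the order of the square root of the number of flips, even as the proportion of heads approaches one-half. The classical versions assume independent, identically distributed trials, an assumption violated in systems with strong dependence, such as financial markets during events like the Wall Street Crash of 1929. The law also does not guarantee convergence for distributions without a finite mean: for the Cauchy distribution, the average of n independent draws has the same distribution as a single draw, so it never settles down. Furthermore, the law says nothing about how fast convergence happens, and it can be slow; the central limit theorem describes typical fluctuations of order 1/√n, while large deviations theory studies how quickly the probability of a deviation of fixed size decays.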
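The failure for the Cauchy distribution can be seen by simulation. This Python sketch, whose helper names are hypothetical, draws many sample means of size n from a standard normal distribution and from a standard Cauchy distribution (generated by the inverse-CDF method) and reports how often each mean lands farther than 1 from 0. For the normal distribution the fraction collapses toward zero as n grows; for the Cauchy distribution it stays near 1/2, because the mean of n standard Cauchy draws is itself standard Cauchy.

```python
import math
import random
from typing import Callable

rng = random.Random(0)  # shared seeded generator for reproducibility


def standard_cauchy() -> float:
    # Inverse-CDF method: tan(pi * (U - 1/2)) is standard Cauchy when U is uniform on [0, 1).
    return math.tan(math.pi * (rng.random() - 0.5))


def standard_normal() -> float:
    return rng.gauss(0.0, 1.0)


def fraction_of_means_outside(sampler: Callable[[], float], n: int, reps: int, threshold: float = 1.0) -> float:
    """Fraction of `reps` independent sample means, each over n draws, with |mean| > threshold."""
    outside = 0
    for _ in range(reps):
        mean = sum(sampler() for _ in range(n)) / n
        if abs(mean) > threshold:
            outside += 1
    return outside / reps


if __name__ == "__main__":
    for n in (10, 100, 1_000):
        normal = fraction_of_means_outside(standard_normal, n, reps=500)
        cauchy = fraction_of_means_outside(standard_cauchy, n, reps=500)
        print(f"n={n:>5}: normal {normal:.3f}   cauchy {cauchy:.3f}")
```

The threshold of 1 is arbitrary; for the standard Cauchy distribution it is chosen so that a single draw exceeds it in absolute value with probability exactly 1/2, which makes the lack of concentration easy to read off.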
