McCulloch-Pitts neuron

Generated by Llama 3.3-70B


| McCulloch-Pitts neuron | |
|---|---|
| Name | McCulloch-Pitts neuron |

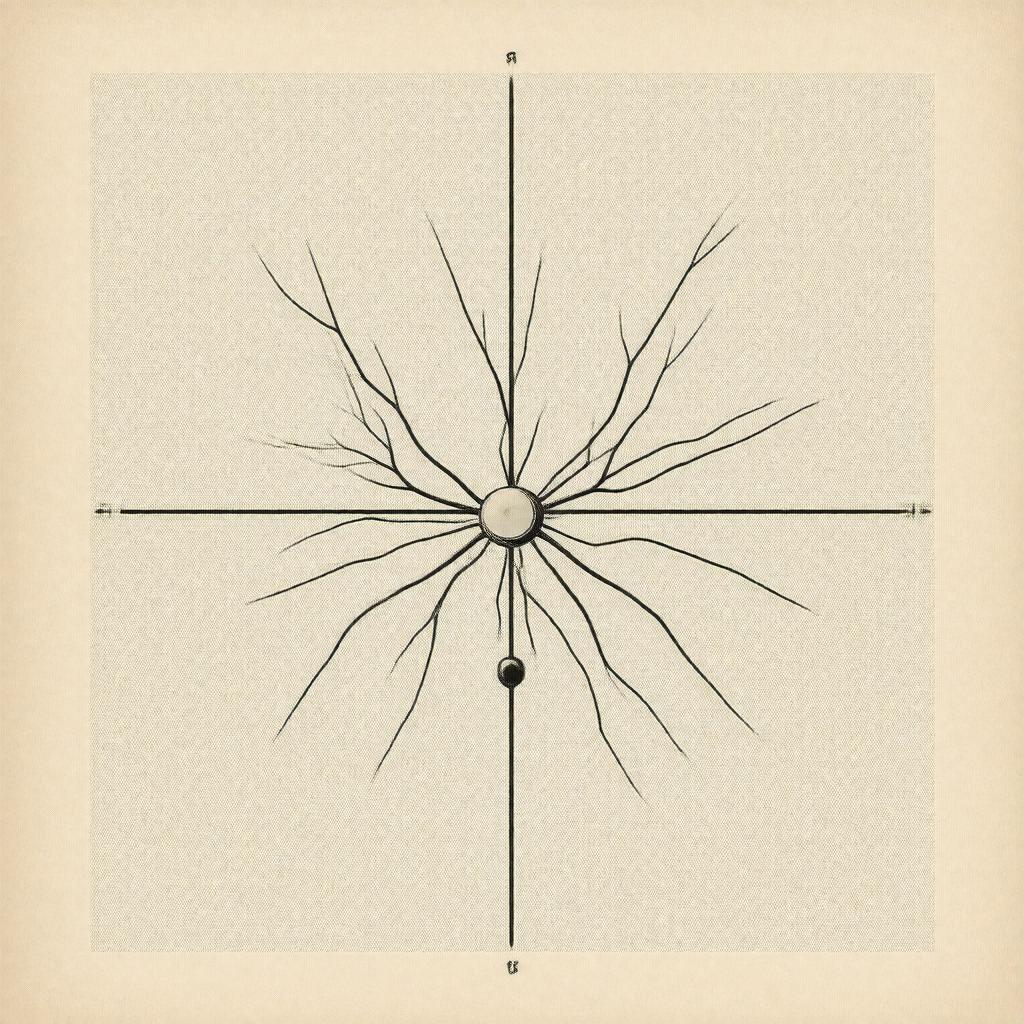

The McCulloch-Pitts neuron, a foundational concept in artificial intelligence, was introduced by Warren McCulloch and Walter Pitts in their seminal 1943 paper, "A Logical Calculus of the Ideas Immanent in Nervous Activity", published in the Bulletin of Mathematical Biophysics. The model laid groundwork for computer science and neural networks, and it informed later work by John von Neumann, Marvin Minsky, and Frank Rosenblatt. It is considered a precursor to the perceptron and other machine learning algorithms, with connections to the mathematical logic and computability theory of Alan Turing and Kurt Gödel.

● Introduction

The McCulloch-Pitts neuron is a mathematical model of a neuron, inspired by the structure and function of biological neurons in the brain. It treats a neuron as a simple processing unit that receives and transmits information as all-or-none electrical signals; the biophysics of those signals was later characterized in detail by Hodgkin and Huxley. In its commonly presented form, the model consists of a set of binary inputs, a weight for each input, a threshold, and a binary output: the unit outputs 1 when the weighted sum of its inputs reaches the threshold and 0 otherwise. The original 1943 formulation used excitatory and inhibitory connections with a fixed threshold rather than adjustable weights. Related ideas about information and signalling were developed by Claude Shannon and Norbert Wiener, and the model has been examined in cognitive science by researchers such as David Marr and Tomaso Poggio.
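The unit described above can be sketched in a few lines of Python. This is a minimal illustration, not the notation of the 1943 paper: the function name `mp_neuron`, the explicit `threshold` parameter, and the use of a negative weight to stand in for an inhibitory input are all assumptions made for the example.

```python
from typing import Sequence


def mp_neuron(inputs: Sequence[int], weights: Sequence[float], threshold: float) -> int:
    """Return 1 if the weighted sum of binary inputs reaches the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Weighted sum of the inputs (hypothetical weights chosen by the caller).
    total = sum(w * x for w, x in zip(weights, inputs))
    # Step activation: the unit either fires (1) or stays silent (0).
    return 1 if total >= threshold else 0


# Two excitatory inputs (weight +1) and one inhibitory input (weight -1).
print(mp_neuron([1, 1, 0], [1, 1, -1], threshold=2))  # 1: sum is 2, threshold reached
print(mp_neuron([1, 1, 1], [1, 1, -1], threshold=2))  # 0: inhibition lowers the sum to 1
```

Whether the comparison is written as "greater than or equal to" or as "strictly greater than" is a convention; the sketch uses the former.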

● History

The development of the McCulloch-Pitts neuron was influenced by the logical tradition of Bertrand Russell, Rudolf Carnap, and Ludwig Wittgenstein, who explored the relationship between logic and the philosophy of mind. The model also became a reference point for the cybernetics movement of the 1940s and 1950s, which involved researchers such as Wiener and von Neumann. It has had a lasting impact on the development of artificial intelligence, the field that John McCarthy named in the mid-1950s, and on the later analysis of threshold units by Marvin Minsky and Seymour Papert.

● Mathematical Formulation

The McCulloch-Pitts neuron can be formulated mathematically as a linear threshold unit: the output is 1 when the weighted sum of the inputs reaches a threshold and 0 otherwise. Because the inputs and the output are binary, each unit computes a Boolean function. Boolean functions and their formal properties were studied by Emil Post, and Stephen Kleene later analyzed networks of such units in terms of finite automata. Deciding whether a given Boolean function can be realized by a single unit amounts to finding weights and a threshold that satisfy a system of linear inequalities. This connects the formulation to linear programming and optimization techniques, developed by George Dantzig and Leonid Kantorovich, with applications in operations research and management science, fields also shaped by Richard Bellman.
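Written out, the linear threshold unit takes the following form. The symbols are assumptions introduced here for illustration: $x_i \in \{0, 1\}$ are the inputs, $w_i$ the weights, $\theta$ the threshold, and $y$ the output.

$$
y =
\begin{cases}
1 & \text{if } \sum_{i=1}^{n} w_i x_i \ge \theta, \\
0 & \text{otherwise.}
\end{cases}
$$

For example, with $n = 2$, $w_1 = w_2 = 1$, and $\theta = 2$, the unit outputs 1 only when $x_1 = x_2 = 1$, which is the Boolean AND function.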

● Properties

The McCulloch-Pitts neuron has several key properties. The first is thresholding: the output is determined by comparing the summed input with a threshold value, as described by McCulloch and Pitts. The second is that the summation is linear in the inputs, although the output itself is a nonlinear step function of that sum. The third is universality for Boolean logic: a single unit computes only linearly separable functions, but networks of units can compute any Boolean function, a result that ties the model to the theory of computation associated with Turing and von Neumann. These properties have also been studied in computational complexity theory, a field shaped by researchers such as Stephen Cook and Richard Karp.
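The thresholding and universality properties can be illustrated with a short Python sketch. The helper `unit` and the gate names are hypothetical, and the weights and thresholds are one possible choice among many. Single units realize AND, OR, and NOT, and a small two-layer arrangement of those units realizes XOR.

```python
from itertools import product


def unit(inputs, weights, threshold):
    """Threshold unit: 1 if the weighted sum reaches the threshold, else 0."""
    return int(sum(w * x for w, x in zip(weights, inputs)) >= threshold)


def and_gate(a, b):
    return unit([a, b], [1, 1], 2)   # fires only when both inputs are 1


def or_gate(a, b):
    return unit([a, b], [1, 1], 1)   # fires when at least one input is 1


def not_gate(a):
    return unit([a], [-1], 0)        # fires only when the input is 0


def xor_net(a, b):
    # Two layers of units: (a OR b) AND NOT (a AND b).
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


# Print the truth tables for all four input combinations.
for a, b in product([0, 1], repeat=2):
    print(a, b, and_gate(a, b), or_gate(a, b), xor_net(a, b))
```

Since AND, OR, and NOT suffice to express any Boolean formula, composing units in this way extends to arbitrary Boolean functions, at the cost of additional units and layers.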

● Applications

Threshold units descended from the McCulloch-Pitts neuron have been applied in a variety of fields, including pattern recognition, image processing, and natural language processing, as explored by researchers such as Yann LeCun and Yoshua Bengio. The model underlies the development of neural networks, including feedforward networks and recurrent neural networks, with applications in robotics and control theory, as discussed by researchers such as Michael Jordan and Stuart Russell. It also informed machine learning algorithms for supervised and unsupervised learning, with connections to the work of Vapnik and Boser.

● Limitations

Despite its influence, the McCulloch-Pitts neuron has several limitations. It is highly simplified and lacks biological plausibility, as discussed by researchers such as David Marr and Tomaso Poggio. Its binary threshold behavior does not capture the complex dynamics of biological neurons, such as those modeled by Hodgkin and Huxley. A single unit can represent only linearly separable functions, so it cannot compute functions such as XOR, and the model includes no procedure for learning its weights from data. This makes it poorly suited to nonlinear relationships and high-dimensional data, as discussed by researchers such as Bishop and Kleinberg. These limitations have led to the development of more complex models, such as spiking neural networks and deep learning algorithms, with connections to the work of Hinton and LeCun.
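The difficulty with nonlinear relationships can be illustrated with XOR. The sketch below searches a small grid of candidate weights and thresholds for a single threshold unit that reproduces a given truth table. The names `unit`, `find_unit`, and `GRID` are hypothetical, and the grid search is an illustration rather than a proof: it finds parameters for AND but none for XOR.

```python
from itertools import product


def unit(inputs, weights, threshold):
    """Threshold unit: 1 if the weighted sum reaches the threshold, else 0."""
    return int(sum(w * x for w, x in zip(weights, inputs)) >= threshold)


def find_unit(truth_table, grid):
    """Return the first (w1, w2, threshold) on the grid matching the table, or None."""
    for w1, w2, theta in product(grid, repeat=3):
        if all(unit(x, (w1, w2), theta) == y for x, y in truth_table.items()):
            return (w1, w2, theta)
    return None


# Candidate values from -3.0 to 3.0 in steps of 0.5 (an arbitrary choice).
GRID = [k / 2 for k in range(-6, 7)]

AND_TABLE = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

print(find_unit(AND_TABLE, GRID))  # some valid (w1, w2, threshold) triple
print(find_unit(XOR_TABLE, GRID))  # None: no single unit on the grid computes XOR
```

The negative result holds beyond the grid. XOR would require a positive threshold (output 0 for input 0, 0), each weight alone to reach the threshold, and yet the sum of both weights to fall below it, which is contradictory.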