Kullback-Leibler divergence

Generated by Llama 3.3-70B

| Kullback-Leibler divergence | |
|---|---|
| Name | Kullback-Leibler divergence |
| Field | Information theory |
| Introduced by | Solomon Kullback and Richard Leibler |

The Kullback-Leibler divergence, also called relative entropy, is a fundamental concept in information theory. It was introduced by Solomon Kullback and Richard Leibler in 1951 and is widely used in statistics, machine learning, and data analysis. It builds on Claude Shannon's information theory and has been applied in areas such as signal processing, image processing, and natural language processing.

Introduction

The Kullback-Leibler divergence measures how one probability distribution differs from a second, reference distribution. It is often used in hypothesis testing and model selection, where it arises as the expected log-likelihood ratio between two candidate models; likelihood-ratio testing itself goes back to Jerzy Neyman and Egon Pearson. It is closely related to entropy, a concept that originated in the statistical mechanics of Ludwig Boltzmann and Willard Gibbs and was carried into information theory by Claude Shannon. Through that connection it appears in data compression, channel coding, and cryptography, and more broadly in physics, engineering, and computer science.

Definition

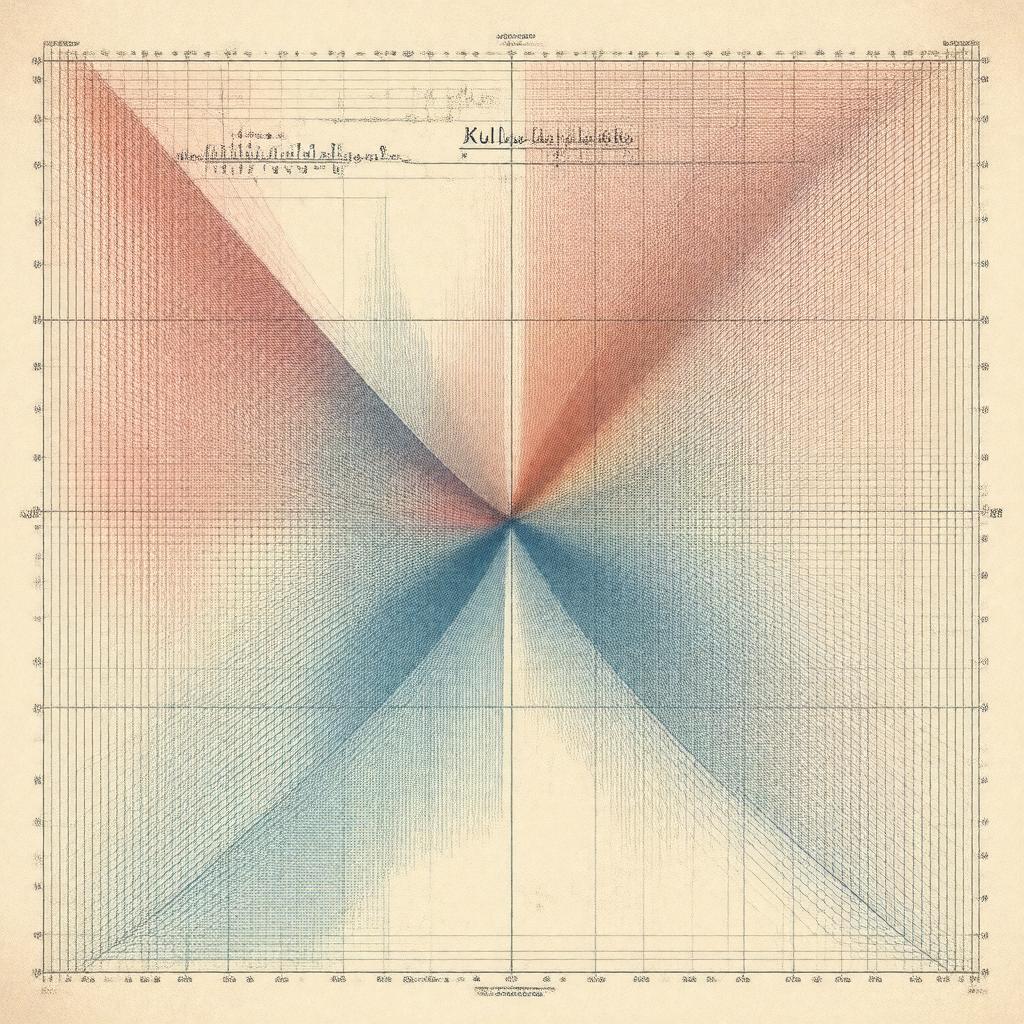

The Kullback-Leibler divergence of a distribution P relative to a distribution Q is defined as the expected value, taken under P, of the logarithmic difference log P(x) − log Q(x) between the two distributions. It is always non-negative and equals zero if and only if the two distributions are identical (almost everywhere, in the continuous case). It is usually denoted $D_{\mathrm{KL}}(P \parallel Q)$, where P is the distribution being described and Q is the distribution used to approximate it.
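Written out, the verbal definition above can be sketched as follows. The lowercase p and q for probability densities in the continuous case are notation assumed here for illustration; the choice of logarithm base (2 for bits, e for nats) only rescales the result.

```latex
% Discrete case: expectation under P of the log-ratio
D_{\mathrm{KL}}(P \parallel Q)
  = \mathbb{E}_{x \sim P}\!\left[\log \frac{P(x)}{Q(x)}\right]
  = \sum_{x} P(x) \log \frac{P(x)}{Q(x)}

% Continuous case, with densities p and q (assumed notation)
D_{\mathrm{KL}}(P \parallel Q)
  = \int p(x) \log \frac{p(x)}{q(x)} \, dx
```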

Interpretation

The Kullback-Leibler divergence $D_{\mathrm{KL}}(P \parallel Q)$ can be interpreted as the amount of information lost when Q is used to approximate P. In coding terms, it is the expected number of extra bits (when logarithms are taken to base 2) needed to encode samples from P with a code optimized for Q rather than for P. This interpretation underlies its use in pattern recognition, machine learning, and data mining, where it commonly serves as a loss or regularization term. Researchers such as Geoffrey Hinton, Yoshua Bengio, and Andrew Ng have used it in their work on deep learning and artificial intelligence.
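The "information lost" reading can be checked numerically: the divergence equals the cross-entropy of P under Q minus the entropy of P. The snippet below is a minimal sketch of that identity; the two distributions and all function names are hypothetical, and it assumes Q is positive wherever P is.

```python
import math

def entropy_bits(p):
    """Shannon entropy H(P) in bits: optimal average code length for P."""
    return -sum(px * math.log2(px) for px in p if px > 0)

def cross_entropy_bits(p, q):
    """Cross-entropy H(P, Q) in bits: average code length for samples
    from P when the code is optimized for Q (assumes q > 0 where p > 0)."""
    return -sum(px * math.log2(qx) for px, qx in zip(p, q) if px > 0)

def kl_bits(p, q):
    """D_KL(P || Q) in bits, computed directly from the definition."""
    return sum(px * math.log2(px / qx) for px, qx in zip(p, q) if px > 0)

# Hypothetical example distributions over three outcomes.
p = [0.5, 0.25, 0.25]   # distribution that generates the data
q = [1 / 3, 1 / 3, 1 / 3]  # approximation used to build the code

# Extra bits per symbol paid for coding P with Q's code.
extra_bits = cross_entropy_bits(p, q) - entropy_bits(p)

print(f"H(P)          = {entropy_bits(p):.4f} bits")
print(f"H(P, Q)       = {cross_entropy_bits(p, q):.4f} bits")
print(f"extra bits    = {extra_bits:.4f}")
print(f"D_KL(P || Q)  = {kl_bits(p, q):.4f}")
```

Here the entropy of P is 1.5 bits, and coding with the uniform approximation costs about 0.085 bits more per symbol, which matches the divergence computed directly.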

Properties

The Kullback-Leibler divergence has several important properties. It is non-negative, a result known as Gibbs' inequality. It is jointly convex in the pair (P, Q). It is not symmetric: $D_{\mathrm{KL}}(P \parallel Q)$ is in general not equal to $D_{\mathrm{KL}}(Q \parallel P)$. It also fails the triangle inequality, so although it is often described informally as a distance, it is not a metric.
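Non-negativity and asymmetry are easy to see on a small example. The following is an illustrative sketch only; the helper `kl_divergence` and the two distributions are hypothetical and assume strictly positive probabilities.

```python
import math

def kl_divergence(p, q):
    """D_KL(P || Q) in nats for discrete distributions given as
    equal-length lists (assumes q > 0 wherever p > 0)."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

# Hypothetical distributions over two outcomes.
p = [0.9, 0.1]
q = [0.5, 0.5]

d_pq = kl_divergence(p, q)  # roughly 0.368 nats
d_qp = kl_divergence(q, p)  # roughly 0.511 nats
d_pp = kl_divergence(p, p)  # exactly 0: a distribution compared with itself

print(f"D_KL(P || Q) = {d_pq:.3f}")
print(f"D_KL(Q || P) = {d_qp:.3f}")
print(f"D_KL(P || P) = {d_pp:.3f}")
```

Both directions are non-negative, but they differ, which is why the order of the arguments matters when the divergence is used as a loss.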

Applications

The Kullback-Leibler divergence is widely used in image processing, signal processing, and natural language processing. In machine learning and data mining it is used to compare a model's predicted distribution with a target distribution, as in the work of Geoffrey Hinton, Yoshua Bengio, and Andrew Ng. It is also applied in physics, engineering, and computer science.

Calculation

For discrete distributions, the Kullback-Leibler divergence is calculated as $D_{\mathrm{KL}}(P \parallel Q) = \sum_x P(x) \log \frac{P(x)}{Q(x)}$, where the sum runs over all possible values of x. Terms with P(x) = 0 contribute zero, and the divergence is infinite if Q(x) = 0 for some x with P(x) > 0. For continuous distributions the sum is replaced by an integral over the densities. When the sum or integral cannot be evaluated directly, it can be estimated with Monte Carlo methods, a family of techniques pioneered by John von Neumann and Stanislaw Ulam: samples are drawn from P and the log-ratio log(P(x)/Q(x)) is averaged over them.
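Both routes to a numerical value, the direct sum and a Monte Carlo average, can be sketched in a few lines of standard-library Python. The function names, the example distributions, the sample size, and the seed are all assumptions made for illustration; the Monte Carlo helper additionally assumes Q is positive wherever P is.

```python
import math
import random

def kl_divergence(p, q):
    """Exact D_KL(P || Q) in nats for discrete distributions as lists."""
    total = 0.0
    for px, qx in zip(p, q):
        if px == 0:
            continue          # a term with P(x) = 0 contributes nothing
        if qx == 0:
            return math.inf   # P puts mass where Q has none
        total += px * math.log(px / qx)
    return total

def kl_monte_carlo(p, q, n_samples, rng):
    """Estimate D_KL(P || Q) by sampling x from P and averaging
    log(P(x) / Q(x)). Assumes q > 0 wherever p > 0."""
    draws = rng.choices(range(len(p)), weights=p, k=n_samples)
    return sum(math.log(p[x] / q[x]) for x in draws) / n_samples

# Hypothetical distributions over three outcomes.
p = [0.5, 0.3, 0.2]
q = [0.4, 0.4, 0.2]

exact = kl_divergence(p, q)
estimate = kl_monte_carlo(p, q, 100_000, random.Random(0))

print(f"exact sum         = {exact:.4f}")
print(f"Monte Carlo (1e5) = {estimate:.4f}")
```

For a small discrete support the exact sum is always preferable; the sampling estimate is shown because it is the form that carries over to cases where the sum or integral is intractable but samples from P and evaluations of both probabilities are available.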