Hamming bound

Generated by Llama 3.3-70B

| Hamming bound | |
|---|---|
| Name | Hamming bound |
| Field | Information theory |
| Introduced by | Richard Hamming |

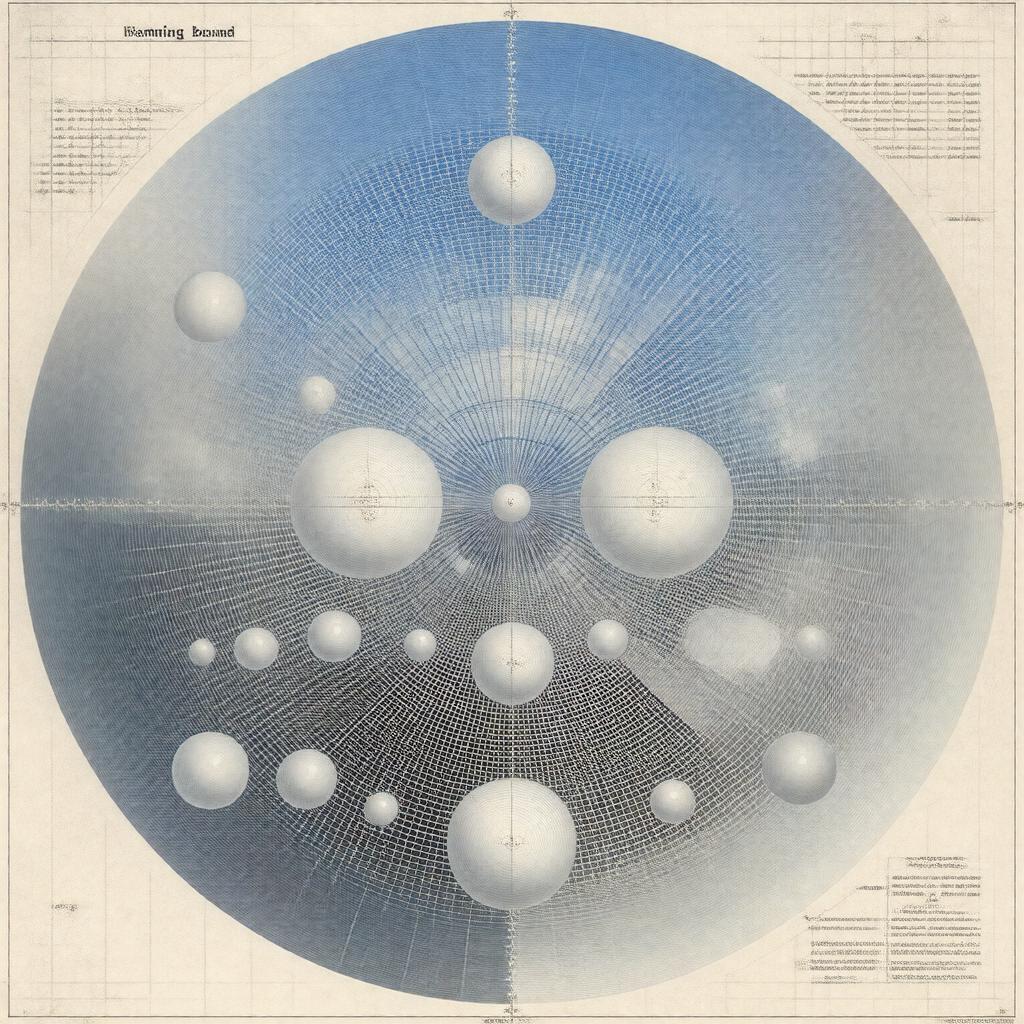

The Hamming bound, also known as the sphere-packing bound or volume bound, is a fundamental result in information theory and coding theory. It was introduced by Richard Hamming, a mathematician and computer scientist who worked at Bell Labs alongside Claude Shannon and John Tukey. The bound limits how many codewords an error-correcting code of a given length can contain while still correcting a given number of errors. It is closely tied to the study of perfect codes, to which Marcel Golay and Vera Pless, among others, contributed. The bound constrains the design of the error-correcting codes that are crucial in modern digital communication systems, including those used by NASA, the European Space Agency, and Google.

Introduction to Hamming Bound

The Hamming bound is a limit on the efficiency of error-correcting codes, which are used to detect and correct errors that occur during data transmission over noisy channels, such as those encountered in satellite communications and wireless networks. Specifically, it caps the number of codewords that a code of fixed length can have if it must correct a fixed number of errors. The bound complements the concept of channel capacity, which Claude Shannon introduced in his seminal 1948 paper, A Mathematical Theory of Communication, published in the Bell System Technical Journal. Capacity limits the rate of reliable communication over a probabilistic channel, whereas the Hamming bound is a combinatorial limit for a guaranteed number of corrected errors. The work of Shannon and Hamming underpins the error control used in modern computer networks, including the Internet, whose core protocols were developed by researchers such as Vint Cerf, Bob Kahn, and Jon Postel.

Definition and Formula

The Hamming bound is an upper limit on the number of codewords in a block code of a given length and minimum distance. Block codes are a type of error-correcting code used in many digital communication systems. Let $A_q(n, d)$ denote the maximum number of codewords in a code of length $n$ over an alphabet of $q$ symbols with minimum Hamming distance $d$, and let $t = \lfloor (d-1)/2 \rfloor$ be the number of errors such a code can correct. The bound states that

$$A_q(n, d) \le \frac{q^n}{\sum_{k=0}^{t} \binom{n}{k} (q-1)^k}.$$

The denominator is the number of words within Hamming distance $t$ of a fixed word, that is, the volume of a Hamming sphere of radius $t$. The formula is therefore a sphere-packing statement in Hamming space, a discrete analogue of the geometric sphere-packing problem studied by mathematicians such as Joseph Louis Lagrange, Carl Friedrich Gauss, and Hermann Minkowski.
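As a minimal sketch of how the bound can be evaluated numerically, the snippet below divides the total number of words, q^n, by the number of words within distance t = floor((d - 1) / 2) of a single codeword. The function names `sphere_volume` and `hamming_bound` are illustrative choices, not taken from any particular library.

```python
from math import comb


def sphere_volume(n, t, q=2):
    """Number of length-n words over q symbols within Hamming distance t of a fixed word."""
    return sum(comb(n, k) * (q - 1) ** k for k in range(t + 1))


def hamming_bound(n, d, q=2):
    """Upper bound on the number of codewords for length n and minimum distance d."""
    t = (d - 1) // 2  # number of errors a distance-d code can correct
    # The number of codewords is an integer, so the quotient is rounded down.
    return q ** n // sphere_volume(n, t, q)


print(hamming_bound(7, 3))   # 16
print(hamming_bound(23, 7))  # 4096
print(hamming_bound(10, 5))  # 18
```

The first two values are attained by the binary Hamming code of length 7 and the binary Golay code of length 23. The third is only an upper limit; the bound by itself does not say whether a code of that size exists.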

Applications in Coding Theory

The Hamming bound applies to every block code, including widely used families such as Reed-Solomon codes, BCH codes, and Golay codes, which appear in a wide range of digital communication systems, including those employed by NASA, the European Space Agency, and Google. Codes that meet the bound with equality are called perfect codes; the Hamming codes and the binary and ternary Golay codes are examples. The bound also constrains the code rate, the ratio of information symbols to transmitted symbols that measures the efficiency of a block code: correcting more errors at a fixed length forces a lower rate. Coding theorists such as Robert McEliece, Elwyn Berlekamp, and James Massey have studied these trade-offs, which also shape the error-correcting codes in modern data storage systems, including those used by IBM, Microsoft, and Intel.
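The following sketch checks a few well-known codes against the bound. A code with q^k codewords of length n that corrects t = floor((d - 1) / 2) errors meets the bound with equality exactly when q^k times the volume of a Hamming sphere of radius t equals q^n. The helper names `is_perfect` and `code_rate` are hypothetical, and the code parameters listed are the standard published ones for each code.

```python
from fractions import Fraction
from math import comb


def sphere_volume(n, t, q=2):
    """Number of length-n words over q symbols within Hamming distance t of a fixed word."""
    return sum(comb(n, k) * (q - 1) ** k for k in range(t + 1))


def is_perfect(n, k, d, q=2):
    """True if a code with q**k codewords meets the Hamming bound with equality."""
    t = (d - 1) // 2
    return q ** k * sphere_volume(n, t, q) == q ** n


def code_rate(n, k):
    """Ratio of information symbols to transmitted symbols."""
    return Fraction(k, n)


# (n, k, d, q) for some standard codes
codes = {
    "binary Hamming [7,4,3]": (7, 4, 3, 2),
    "binary Golay [23,12,7]": (23, 12, 7, 2),
    "ternary Golay [11,6,5]": (11, 6, 5, 3),
    "Reed-Solomon [255,223,33] over GF(256)": (255, 223, 33, 256),
}

for name, (n, k, d, q) in codes.items():
    print(f"{name}: perfect={is_perfect(n, k, d, q)}, rate={code_rate(n, k)}")
```

The Hamming and Golay codes come out as perfect, while the Reed-Solomon code satisfies the bound with room to spare; it is optimal with respect to a different bound, the Singleton bound.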

Relationship to Other Bounds

The Hamming bound is one of several bounds in information theory and coding theory, alongside the Singleton bound, the Plotkin bound, and the Griesmer bound, each of which limits the parameters that an error-correcting code can achieve. Which bound is tightest depends on the alphabet size, code length, and minimum distance. For binary codes of length 7 and minimum distance 3, for example, the Hamming bound allows at most 16 codewords, whereas the Singleton bound allows up to 32. These combinatorial bounds complement channel capacity, the fundamental limit on the rate at which information can be transmitted reliably over a noisy channel, established by Claude Shannon. Together they guide the design of modern digital communication systems, including those employed by NASA, the European Space Agency, and Google.
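To make the comparison concrete, the sketch below evaluates the Hamming bound next to the Singleton bound, which limits a code of length n and minimum distance d over q symbols to at most q^(n - d + 1) codewords. Only these two bounds are shown; the function names are illustrative.

```python
from math import comb


def hamming_bound(n, d, q=2):
    """Sphere-packing upper bound on the number of codewords."""
    t = (d - 1) // 2
    volume = sum(comb(n, k) * (q - 1) ** k for k in range(t + 1))
    return q ** n // volume


def singleton_bound(n, d, q=2):
    """Singleton upper bound on the number of codewords."""
    return q ** (n - d + 1)


def tighter_bound(n, d, q=2):
    """Name the bound that gives the smaller (stronger) limit."""
    h, s = hamming_bound(n, d, q), singleton_bound(n, d, q)
    if h == s:
        return "tie"
    return "Hamming" if h < s else "Singleton"


for n, d, q in [(7, 3, 2), (23, 7, 2), (255, 33, 256)]:
    print(f"n={n}, d={d}, q={q}: tighter bound is {tighter_bound(n, d, q)}")
```

For the two binary parameter sets the Hamming bound is the stronger limit, while for the large-alphabet case (the parameters of a Reed-Solomon code over 256 symbols) the Singleton bound is stronger.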

Proof and Derivation

The proof of the Hamming bound is a short counting argument based on sphere packing. Consider a code of length n over an alphabet of q symbols with minimum distance d, and let t be the largest integer not exceeding (d - 1)/2. The Hamming spheres of radius t centered at the codewords are pairwise disjoint, because a word lying in two such spheres would place two codewords at distance at most 2t, which is less than d. Each sphere contains the same number of words, and there are only q^n words of length n in total, so the number of codewords multiplied by the sphere volume cannot exceed q^n. The argument is a discrete counterpart of the sphere-packing questions in geometry and number theory associated with Joseph Louis Lagrange, Carl Friedrich Gauss, and Hermann Minkowski. The bound originates with Richard Hamming, and the proof appears in standard coding theory texts, including those by Vera Pless.
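The counting argument can be checked by brute force on a small case. The sketch below builds the binary Hamming code of length 7 as the set of words whose syndrome is zero, using one standard construction in which the syndrome is the XOR of the 1-based positions holding a 1. It then verifies that the radius-1 spheres around the codewords are disjoint and fill the whole space. All names are illustrative.

```python
from itertools import combinations, product


def syndrome(word):
    """XOR of the 1-based positions that hold a 1; zero exactly for codewords."""
    s = 0
    for position, bit in enumerate(word, start=1):
        if bit:
            s ^= position
    return s


def sphere(center):
    """All words within Hamming distance 1 of center."""
    words = {center}
    for i in range(len(center)):
        flipped = list(center)
        flipped[i] ^= 1
        words.add(tuple(flipped))
    return words


def distance(a, b):
    """Hamming distance between two equal-length words."""
    return sum(x != y for x, y in zip(a, b))


codewords = [w for w in product((0, 1), repeat=7) if syndrome(w) == 0]
min_distance = min(distance(a, b) for a, b in combinations(codewords, 2))

spheres = [sphere(c) for c in codewords]
total_points = sum(len(s) for s in spheres)  # counts overlaps twice, if any exist
covered = set().union(*spheres)              # counts each word once

print(len(codewords), min_distance)    # 16 3
print(total_points, len(covered))      # 128 128
```

Because the summed sphere sizes equal the size of their union, no word belongs to two spheres. Because that union has 2^7 = 128 elements, the spheres also cover every word, which is the equality case of the bound.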

Implications and Consequences

The Hamming bound has direct consequences for the design of error-correcting codes and digital communication systems. It implies a fundamental trade-off: for a fixed block length, a code cannot both contain many codewords and correct many errors, so added error-correction capability must be paid for with redundancy. This combinatorial limit complements channel capacity, Shannon's limit on the rate of reliable transmission. Both limits inform the error control used in modern computer networks, including the Internet, whose core protocols were developed by researchers such as Vint Cerf, Bob Kahn, and Jon Postel, and in data storage systems, including those used by IBM, Microsoft, and Intel.