end-to-end principle

Generated by GPT-5-mini


| end-to-end principle | |
|---|---|
| Name | end-to-end principle |
| Introduced | 1981 |
| Field | Computer networking |
| Proponents | David P. Reed, Jerome H. Saltzer, David D. Clark |
| Notable for | Placement of functionality at network edges |

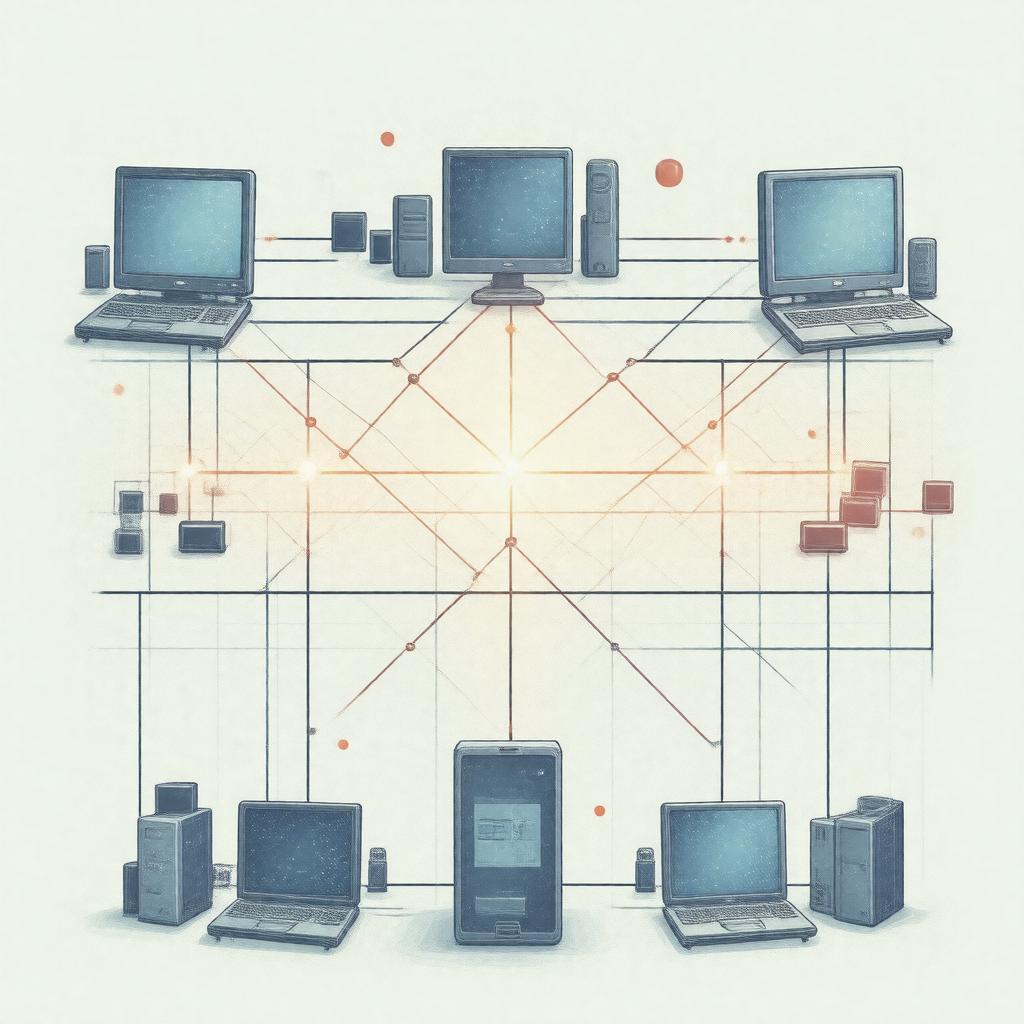

The end-to-end principle is a design guideline in computer networking which holds that certain functions are best implemented at the communicating endpoints rather than in intermediary nodes. It influenced the architecture of the ARPANET and the Internet and the design of protocols such as TCP and UDP, and it has shaped debates at institutions such as the Defense Advanced Research Projects Agency and at standards bodies including the Internet Engineering Task Force.

History

The formulation emerged from work by researchers associated with the Massachusetts Institute of Technology, the MITRE Corporation, and Xerox PARC during the development of packet-switching networks in the 1970s and early 1980s. Its best-known statement is the 1981 paper "End-to-End Arguments in System Design" by Jerome H. Saltzer, David P. Reed, and David D. Clark. Influential papers and memos circulated among contributors at Bolt Beranek and Newman, Stanford University, and Carnegie Mellon University, while projects funded by the National Science Foundation and DARPA provided practical testbeds. The principle informed protocol design debates at the Internet Architecture Board and appeared in documents that guided the design of TCP/IP stacks implemented by organizations such as Sun Microsystems, IBM, and DEC.

Principles and Rationale

The rationale rests on reliability and correctness, as examined in analyses by researchers affiliated with Harvard University and the University of California, Berkeley. The principle advocates placing functions such as error checking, encryption, and application-specific recovery at end hosts (machines run by entities such as Microsoft, Apple Inc., and Cisco Systems) rather than embedding them in transit devices manufactured by companies like Juniper Networks or operated by carriers including Verizon Communications and AT&T Inc. The core argument is that only the endpoints can tell whether the application's requirement has actually been met, so a check performed inside the network cannot replace the end-to-end check; at most it serves as a performance optimization. This perspective influenced standards work at the IETF and debates in forums involving European Commission policymakers and technical committees at the IEEE. Proponents argued that end-host implementations preserve flexibility for application designers at organizations such as Google, Facebook, and Amazon.com while keeping the core network simple, a position echoed in analyses from Princeton University and Yale University.
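The placement of error checking at the end hosts can be illustrated with a small simulation. The following is a minimal sketch, not a real protocol: the names `lossy_hop`, `send_through_network`, and `end_to_end_transfer` are hypothetical, the corruption probability is arbitrary, and the digest is assumed to reach the receiver intact. The intermediate hops do no checking at all; only the receiving endpoint verifies a SHA-256 digest and triggers a retry on mismatch.

```python
import hashlib
import random
from typing import Tuple


def lossy_hop(data: bytes, rng: random.Random, corrupt_prob: float) -> bytes:
    """Hypothetical intermediate node: forwards data, occasionally flipping one bit."""
    if data and rng.random() < corrupt_prob:
        i = rng.randrange(len(data))
        corrupted = bytearray(data)
        corrupted[i] ^= 0x01
        return bytes(corrupted)
    return data


def send_through_network(data: bytes, rng: random.Random,
                         hops: int = 3, corrupt_prob: float = 0.2) -> bytes:
    """Pass data through several hops that perform no checks of their own."""
    for _ in range(hops):
        data = lossy_hop(data, rng, corrupt_prob)
    return data


def end_to_end_transfer(payload: bytes, rng: random.Random,
                        max_attempts: int = 50) -> Tuple[bytes, int]:
    """Verify at the receiving endpoint and retry until the digest matches."""
    # Digest computed at the sending endpoint (assumed to arrive intact).
    expected = hashlib.sha256(payload).digest()
    for attempt in range(1, max_attempts + 1):
        received = send_through_network(payload, rng)
        # Check performed at the receiving endpoint, not inside the network.
        if hashlib.sha256(received).digest() == expected:
            return received, attempt
    raise RuntimeError("transfer failed after max_attempts")
```

Calling `end_to_end_transfer(b"hello world", random.Random(0))` returns the intact payload together with the number of attempts it took. Adding per-hop checks to this sketch could reduce the number of retries, but the endpoint check would still be needed for correctness.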

Applications in Network Design

Implementations grounded in the principle underpinned the layering of the Internet Protocol Suite and the placement of functions in application-layer software from vendors like the Mozilla Foundation and Oracle Corporation. The principle shaped the use of transport-layer mechanisms in the TCP implementations of the Berkeley Software Distribution and influenced secure communications via Transport Layer Security, supported by libraries from RSA Security and the OpenSSL Project and by certificate authorities such as Let's Encrypt. Content delivery strategies used by Akamai Technologies and streaming services from Netflix leveraged edge functionality, while mobile ecosystems from Nokia and Samsung Electronics incorporated endpoint processing for performance and battery considerations. Cloud providers such as Amazon Web Services, Microsoft Azure, and Google Cloud Platform demonstrate trade-offs between edge intelligence and centralized services.

Criticisms and Limitations

Critics from organizations including ETSI and researchers at the University of Oxford and the University of Cambridge have argued that strict adherence can neglect the benefits of in-network services deployed by carriers such as T-Mobile and Comcast. Debates before regulatory bodies like the Federal Communications Commission and in standards fora including 3GPP highlighted tensions with middlebox functions such as caching, firewalling, and traffic optimization in products from Cisco Systems and Fortinet. Security analysts at Symantec and Kaspersky Lab noted scenarios where network-level filtering or intrusion detection by vendors like Palo Alto Networks improves protection, while economists at the London School of Economics examined incentives when firms like Huawei operate infrastructure.

Variations and Extensions

Subsequent work proposed moderated approaches that blend end-host intelligence with in-network assistance; examples include proposals from research groups at the Massachusetts Institute of Technology, ETH Zurich, and the University of California, Los Angeles. Research on architectures such as content-centric networking and software-defined networking, by groups at Stanford University and Princeton University, explored relocating some functions into the network. Initiatives by companies like Cisco Systems and IETF working groups considered middlebox cooperation protocols, while projects at the European Organization for Nuclear Research and the National Institute of Standards and Technology evaluated hybrid trust models integrating endpoint cryptography with network audits.
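One way to picture such a blend is an in-network cache that speeds up delivery while the endpoint keeps the final say on correctness. The sketch below is illustrative only: `UntrustedCache` and `fetch` are hypothetical names, and it assumes the client already holds the expected SHA-256 digest from a trusted source (for example, a signed manifest), which is not modeled here.

```python
import hashlib
from typing import Callable, Dict, Optional


class UntrustedCache:
    """Hypothetical in-network cache; it may hold stale or tampered bytes."""

    def __init__(self) -> None:
        self._store: Dict[str, bytes] = {}

    def get(self, name: str) -> Optional[bytes]:
        return self._store.get(name)

    def put(self, name: str, data: bytes) -> None:
        self._store[name] = data


def fetch(name: str, expected_sha256: str, cache: UntrustedCache,
          origin: Callable[[str], bytes]) -> bytes:
    """Use the cache for speed, but accept data only if the endpoint check passes."""
    cached = cache.get(name)
    if cached is not None and hashlib.sha256(cached).hexdigest() == expected_sha256:
        return cached  # fast path: in-network help, verified at the edge
    data = origin(name)  # slow path: fall back to the origin server
    if hashlib.sha256(data).hexdigest() != expected_sha256:
        raise ValueError("origin data failed end-to-end verification")
    cache.put(name, data)  # repair the cache entry for later requests
    return data
```

In this arrangement a faulty or malicious cache can cost time but cannot cause the client to accept wrong data, which matches the view of in-network functions as optimizations layered under an end-to-end check.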

Impact and Legacy

The principle has been central to policy and technical discourse involving major actors such as the United States Department of Defense, the European Commission, and multinational firms including IBM and Google. It underlies the architecture of widely deployed systems, from Cisco IOS routers to server stacks at Facebook, and has influenced educational curricula at institutions like Stanford University and MIT. Its continued relevance appears in contemporary debates over network neutrality adjudicated by bodies such as the Federal Communications Commission and in the design of emerging infrastructures by companies like Cloudflare and research consortia at the Internet Society.

Category:Computer networking