PageRank

Generated by GPT-5-mini

| PageRank | |
|---|---|
| Name | PageRank |
| Invented | 1996 |
| Inventors | Larry Page, Sergey Brin |
| Developer | Stanford University; Google |
| Initial publication | "The Anatomy of a Large-Scale Hypertextual Web Search Engine" (1998) |
| Domain | Web search; network analysis |

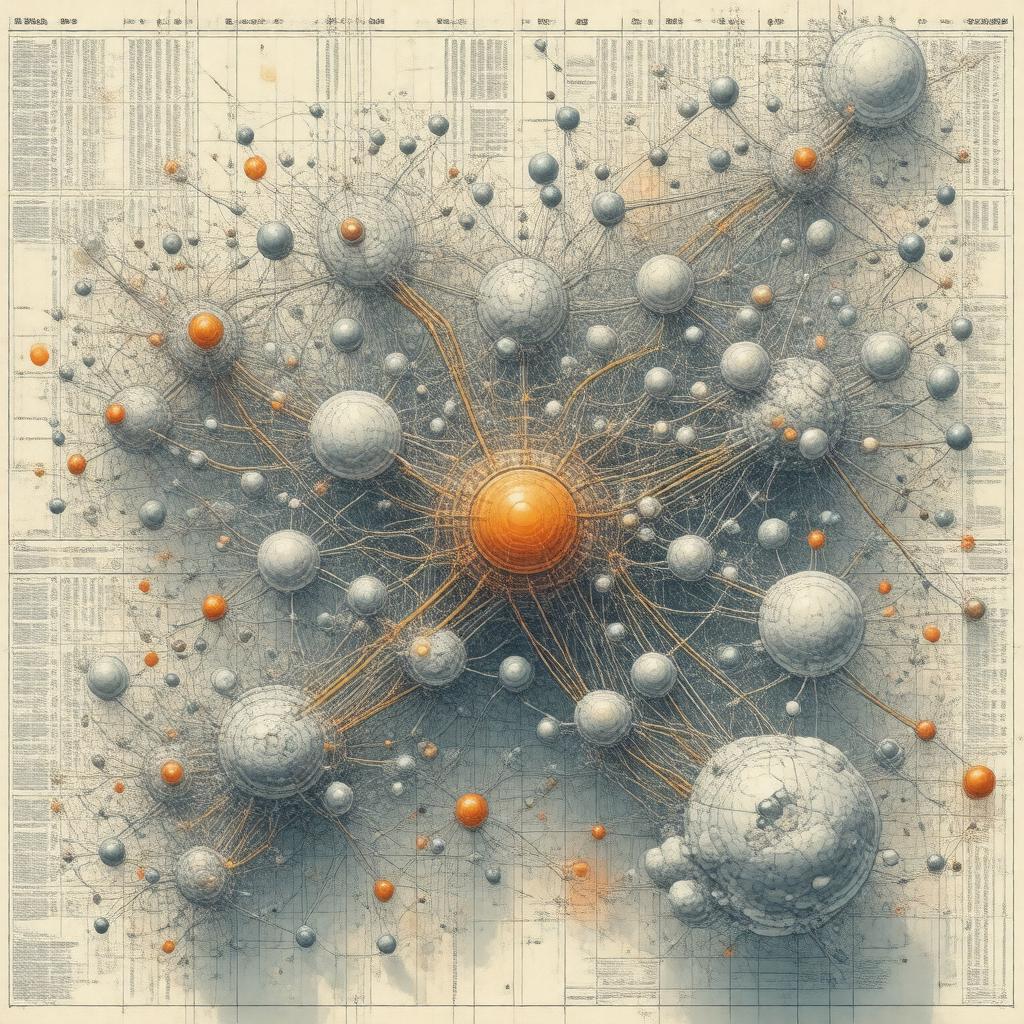

PageRank is a link-analysis algorithm developed to rank documents by importance within a directed network. Conceived at Stanford University by Larry Page and Sergey Brin, it formed a core component of the early Google search engine and influenced research across computer science, information retrieval, and network theory. PageRank models the web as a graph of linked pages and assigns a numerical score reflecting a page's centrality relative to the entire hyperlink structure. The method's impact spans search products, academic citation analysis, and graph algorithms used by organizations such as Microsoft, Yahoo!, IBM, and Facebook.

History

PageRank emerged from work at Stanford University in the mid-1990s by Larry Page and Sergey Brin, drawing on earlier efforts in citation analysis such as Eugene Garfield's citation indexing. Jon Kleinberg's HITS algorithm, a related link-analysis method, was developed independently at around the same time. The 1998 paper "The Anatomy of a Large-Scale Hypertextual Web Search Engine" described the algorithm within the context of a prototype search engine that competed with contemporaries like AltaVista, Lycos, and Yahoo! Search. After Google was incorporated in 1998, PageRank became a distinguishing feature against rivals such as Ask.com and influenced academic discussion at venues such as SIGIR, the WWW Conference, and KDD. Over time, companies including Microsoft Research and IBM Research, and institutions such as MIT and CMU, extended the approach in studies on web spam, trust metrics, and social-network ranking.

Algorithm

The algorithm treats a hyperlinked collection of pages as a directed graph and scores pages with an iterative eigenvector computation, a standard method in numerical linear algebra applied here to the stochastic matrix of a Markov chain (named after Andrey Markov). The core iteration computes the stationary distribution of a random-surfer model with damping: with probability d (the damping factor, set to 0.85 in the original paper) the surfer follows a randomly chosen outgoing link, and otherwise jumps to a page chosen at random. The model draws on random-walk ideas from Markov chain theory and the stochastic processes studied by Paul Lévy and Andrey Kolmogorov. Production implementations relied on sparse-matrix computations, because each page links to only a small fraction of all pages, together with optimizations made by Google engineers for the hardware of the time. Early implementations used power iteration, while later work incorporated accelerations from research at ETH Zurich, Princeton University, and UC Berkeley.
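The random-surfer iteration described above can be sketched in a few lines of Python. This is a minimal illustration, not the production implementation: the function name `pagerank`, the dictionary-based graph format, the tolerance, and the choice to spread the rank of dangling pages (pages with no out-links) uniformly are all assumptions made for the example.

```python
def pagerank(links, damping=0.85, tol=1e-10, max_iter=200):
    """Power iteration on a graph given as {page: [pages it links to]}.

    Hypothetical helper for illustration; returns {page: score}, scores sum to 1.
    """
    # Collect every page that appears as a source or as a link target.
    pages = sorted(set(links) | {q for targets in links.values() for q in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start from the uniform distribution

    for _ in range(max_iter):
        # Assumption: rank held by dangling pages is redistributed uniformly.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}

        # Each page passes an equal share of its damped rank along every out-link.
        for p in pages:
            targets = links.get(p, [])
            if targets:
                share = damping * rank[p] / len(targets)
                for q in targets:
                    new_rank[q] += share

        # Stop once the total change (L1 distance) falls below the tolerance.
        change = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if change < tol:
            break
    return rank


if __name__ == "__main__":
    toy_graph = {"A": ["B", "C"], "B": ["C"], "C": ["A"]}
    for page, score in sorted(pagerank(toy_graph).items()):
        print(page, round(score, 4))
```

In the toy graph, page C collects links from both A and B and so ends up with the highest score, while B, which is reached only through half of A's links, ends up lowest.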

Mathematical Foundations

Mathematically, the algorithm seeks the dominant eigenvector of a modified adjacency matrix representing the web graph, normalized so that each page's outgoing weights sum to 1. This connects it to random-graph theory in the tradition of Alfréd Rényi and to the spectral graph theory developed by Fan Chung, László Lovász, and others. The teleportation (damping) term makes the matrix irreducible and aperiodic, so the Perron–Frobenius theorem, named after Otto Perron and Georg Frobenius, guarantees a unique positive eigenvector with eigenvalue 1. Convergence properties follow from the theory of iterative solvers treated by Stewart and Golub, among others: the second-largest eigenvalue has modulus at most the damping parameter d, so the error of power iteration after k steps is bounded, up to a constant, by d^k. Error bounds relate to conditioning results studied at the Courant Institute and Los Alamos National Laboratory. Connections to centrality measures such as eigenvector centrality and Katz centrality trace to research performed at Columbia University and the University of Cambridge.
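For concreteness, the eigenvector problem can be written out in the commonly used normalized form. The notation is an assumption introduced here rather than taken from the text above: N is the number of pages, d the damping parameter, M(p_i) the set of pages linking to p_i, L(p_j) the number of out-links of p_j, S the column-stochastic link matrix, and \mathbf{1} the all-ones vector.

$$
PR(p_i) = \frac{1-d}{N} + d \sum_{p_j \in M(p_i)} \frac{PR(p_j)}{L(p_j)}
$$

$$
G = d\,S + \frac{1-d}{N}\,\mathbf{1}\mathbf{1}^{\mathsf{T}}, \qquad G\,\boldsymbol{\pi} = \boldsymbol{\pi}, \qquad \sum_{i=1}^{N} \pi_i = 1
$$

The first line is the per-page form; the second restates it as the eigenvector equation for the matrix G, whose entries are all positive when d < 1, which is what makes the Perron–Frobenius argument apply.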

Applications

Beyond web search at Google, the algorithm's framework has been adapted for bibliometrics at Clarivate Analytics and Elsevier, citation ranking in services similar to Google Scholar, and influence measurement in social platforms like Twitter and LinkedIn. Network-science researchers at Stanford Network Analysis Project and Santa Fe Institute used the method to study ecosystems, power grids, and protein interaction networks relevant to NIH-funded projects. Enterprise search products from Microsoft and IBM incorporated PageRank-inspired signals into relevance models, while recommender systems at Amazon and Netflix explored link-based features. In geopolitics, analysts from RAND Corporation and Brookings Institution have leveraged link-centrality analogues to assess information diffusion and influence.

Variants and Improvements

Numerous variants extend or modify the original model. Topic-sensitive PageRank, presented by researchers at Stanford, replaces the uniform teleportation distribution with personalization vectors biased toward chosen topics. TrustRank, from Stanford University and Yahoo!, propagates trust from a vetted seed set of reputable pages to combat spam. Weighted and time-aware variants appear in studies at MIT and EPFL, and block-wise and distributed algorithms were advanced at Google and Microsoft Research to handle web-scale graphs. Accelerated solvers draw on GPU computing on NVIDIA hardware and on parallel frameworks such as MapReduce, developed at Google, and Apache Hadoop, developed largely at Yahoo!.
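The personalization and seed-set ideas share one mechanical change: the random jump lands on a chosen distribution instead of a uniform one. The sketch below illustrates that change only; it is not the published topic-sensitive or TrustRank procedure, and the function name `personalized_pagerank`, the `teleport` weight dictionary, and the fixed iteration count are hypothetical choices for the example.

```python
def personalized_pagerank(links, teleport, damping=0.85, iters=100):
    """PageRank whose random jumps follow `teleport` ({page: weight}).

    Hypothetical helper; `teleport` must contain at least one positive weight.
    """
    pages = sorted(
        set(links) | {q for targets in links.values() for q in targets} | set(teleport)
    )
    # Normalize the teleport weights into a probability distribution v.
    total = sum(teleport.values())
    v = {p: teleport.get(p, 0.0) / total for p in pages}

    rank = dict(v)  # start the walk from the teleport distribution
    for _ in range(iters):
        # Assumption: rank on dangling pages also jumps according to v.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: ((1.0 - damping) + damping * dangling) * v[p] for p in pages}
        for p in pages:
            targets = links.get(p, [])
            for q in targets:
                new_rank[q] += damping * rank[p] / len(targets)
        rank = new_rank
    return rank


if __name__ == "__main__":
    # Two disconnected clusters; jumps only ever land on the seed page "A".
    graph = {"A": ["B"], "B": ["A"], "C": ["D"], "D": ["C"]}
    print(personalized_pagerank(graph, teleport={"A": 1.0}))
```

Because the surfer can never jump into the second cluster, pages C and D receive no score at all, which is the intuition behind using a trusted seed set: pages unreachable from the seeds inherit nothing.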

Criticism and Limitations

Critics have noted susceptibility to manipulation through link farms studied by teams at Carnegie Mellon University and the emergence of web spam combated by efforts at Spamhaus and academic anti-spam research groups. The model's dependence on link structure underweights content signals emphasized in work by Stanford NLP and CMU natural-language researchers. Scalability and freshness present operational challenges addressed by engineering groups at Google and Microsoft; ethical concerns about opaque ranking were raised by scholars at Harvard University and Yale Law School studying algorithmic transparency and platform accountability.

Implementation and Performance

Production implementations relied on large-scale computing infrastructure pioneered by Google engineers and influenced by cluster designs from Sun Microsystems and supercomputing centers like Lawrence Livermore National Laboratory. Performance optimizations exploit sparse linear-algebra libraries distributed through Netlib and parallel paradigms advanced at UC San Diego and Princeton. Benchmarks and empirical studies presented at SIGIR and the WWW Conference quantify convergence rates, memory footprints, and sensitivity to the damping parameter, while modern graph-processing systems such as GraphLab and Neo4j provide practical platforms for applied variants.
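The sensitivity of convergence to the damping parameter can be observed even on a toy graph. The following sketch counts power-iteration steps for several damping values; the function name `iterations_to_converge`, the tolerance, and the four-page graph are assumptions chosen for illustration, and the absolute counts say nothing about web-scale behavior.

```python
def iterations_to_converge(links, damping, tol=1e-8, max_iter=10_000):
    """Count power-iteration steps until the L1 change drops below `tol`.

    Hypothetical helper. Assumes every page is a key of `links` and has at
    least one out-link, so no dangling-page handling is needed.
    """
    pages = sorted(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for step in range(1, max_iter + 1):
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            share = damping * rank[p] / len(links[p])
            for q in links[p]:
                new_rank[q] += share
        change = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if change < tol:
            return step
    return max_iter


if __name__ == "__main__":
    toy_graph = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
    for d in (0.5, 0.85, 0.99):
        print(f"damping={d}: {iterations_to_converge(toy_graph, d)} iterations")
```

Higher damping values need more iterations on this graph, in line with the observation that the error contracts more slowly as the damping parameter approaches 1.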
