Network File System

Generated by GPT-5-mini


| Network File System | |
|---|---|
| Name | Network File System |
| Developer | Sun Microsystems |
| Released | 1984 |
| Operating system | Unix-like systems, Microsoft Windows, macOS |
| Genre | Network file system protocol |
| License | Open Network Computing / various |

Network File System (NFS) is a distributed file access protocol that lets a client access files over a network as if they were on local storage. It originated at Sun Microsystems and has influenced interoperability among Unix, Linux, BSD, Microsoft Windows, and macOS environments. The protocol underpins many enterprise deployments, including Oracle Corporation databases and storage integrations on Amazon Web Services, Google Cloud Platform, and Microsoft Azure.

Overview

Network File System enables transparent file sharing between machines by exposing remote directories through the local filesystem layer, so that kernel modules and userland utilities can treat them like ordinary local directories. Early designs emphasized stateless servers, remote procedure calls from the Open Network Computing framework, and integration with Network Information Service for namespace consistency. Implementations aim for POSIX file semantics and are often deployed alongside storage technologies from vendors like Dell Technologies, NetApp, and EMC Corporation, and from cloud providers including Amazon Web Services and Google Cloud Platform.
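The stateless-server idea mentioned above can be illustrated with a toy sketch. It is not the NFS wire protocol: the class name `StatelessFileServer`, the use of a relative path as the "file handle", and the `demo` helper are all hypothetical choices made for illustration. The point it tries to show is that every read request carries the handle, offset, and count it needs, so the server keeps no per-client session and a restarted server can keep serving the same handle.

```python
import os
import tempfile


class StatelessFileServer:
    """Toy server that keeps no per-client state between requests (illustrative only)."""

    def __init__(self, export_root):
        self.export_root = os.path.realpath(export_root)

    def lookup(self, name):
        # Hypothetical handle: the path relative to the export root.
        # A real server would hand out an opaque identifier instead.
        path = os.path.realpath(os.path.join(self.export_root, name))
        if not path.startswith(self.export_root + os.sep):
            raise PermissionError("path escapes the exported directory")
        if not os.path.isfile(path):
            raise FileNotFoundError(name)
        return os.path.relpath(path, self.export_root)

    def read(self, handle, offset, count):
        # Everything needed to serve the request is in the arguments,
        # so no open-file table or session survives between calls.
        with open(os.path.join(self.export_root, handle), "rb") as f:
            f.seek(offset)
            return f.read(count)


def demo():
    with tempfile.TemporaryDirectory() as root:
        with open(os.path.join(root, "notes.txt"), "wb") as f:
            f.write(b"hello, remote world")
        server = StatelessFileServer(root)
        handle = server.lookup("notes.txt")
        first = server.read(handle, 0, 5)
        # Simulate a server restart: a fresh object still honors the old handle.
        server = StatelessFileServer(root)
        rest = server.read(handle, 7, 6)
        return first, rest
```

Calling `demo()` reads two ranges of the same file across a simulated restart. The trade-off of this design is that the client must resend full context on every call, which is what makes recovery after a server crash simple.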

History and Development

The protocol was designed at Sun Microsystems during the 1980s; engineers drew on distributed systems research from institutions such as University of California, Berkeley, MIT, and Stanford University. Its public releases coincided with the growth of UNIX System V and the rise of networked workstations from companies like Sun Microsystems and Hewlett-Packard. Later milestones involved contributions from standards bodies and corporations, including the Internet Engineering Task Force, The Open Group, and Microsoft Corporation, as interoperability became crucial for heterogeneous datacenters and for collaborations with research labs such as Los Alamos National Laboratory.

Protocol and Architecture

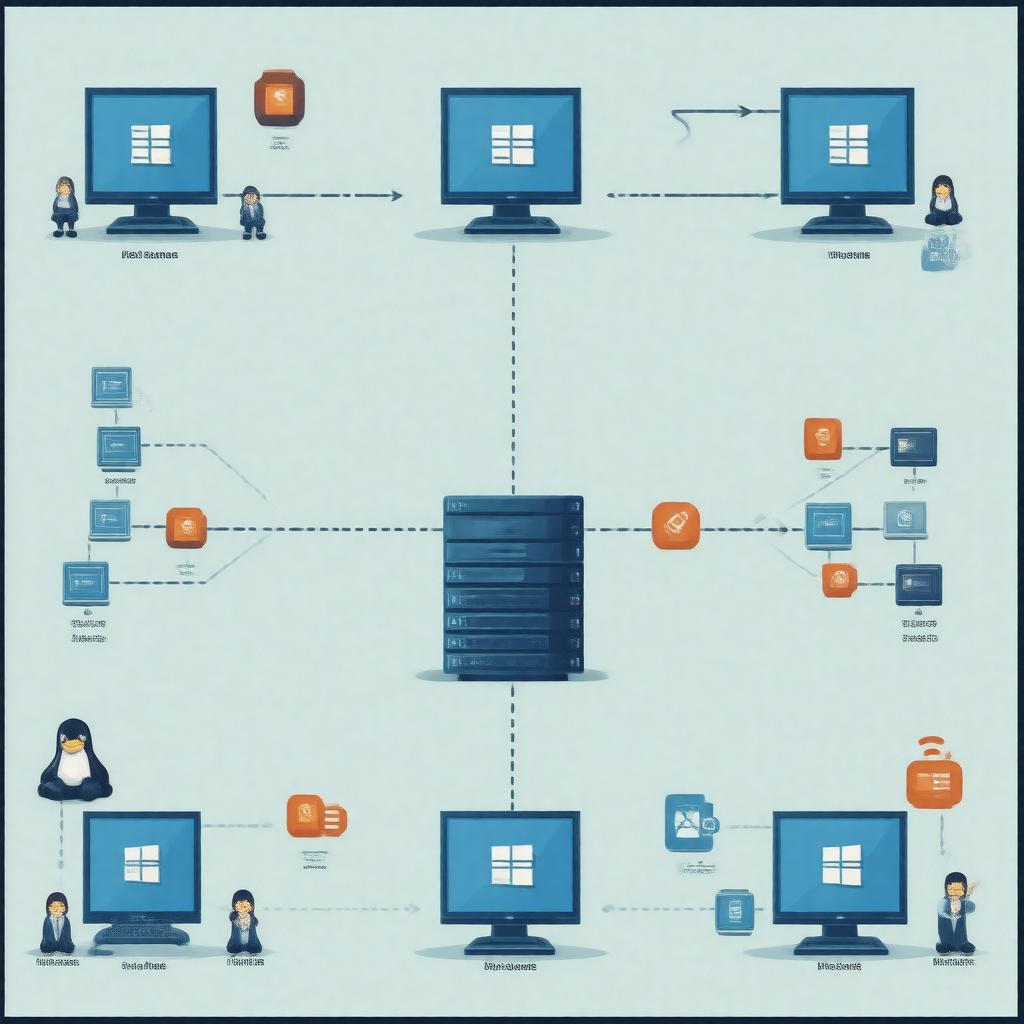

The protocol uses remote procedure call mechanisms derived from Open Network Computing to implement file operations such as lookup, read, write, and lock management. Architecturally it spans client-side kernel modules, server-side daemons, and network transports including TCP and UDP, along with emerging transport optimizations for datacenter fabrics developed by companies like Intel Corporation and Mellanox Technologies. Namespace resolution integrates with directory services such as LDAP, and authentication can rely on Kerberos, the authentication protocol developed at MIT. Caching strategies interact with local page cache subsystems in kernels from projects including Linux, FreeBSD, and NetBSD.
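To make the remote procedure call layer more concrete, here is a minimal sketch that builds the bytes of an ONC RPC version 2 call message for the NFS NULL procedure (a no-op ping), encoded as big-endian 32-bit XDR words. The program number 100003 and the `AUTH_NONE` flavor are standard values; the choice of NFS version 3, the helper names, and the example transaction ID are assumptions made for illustration. Nothing is sent over the network.

```python
import struct

NFS_PROGRAM = 100003  # ONC RPC program number assigned to NFS
NFS_VERSION = 3       # assumed protocol version for this sketch
PROC_NULL = 0         # NULL procedure: takes and returns nothing
RPC_VERSION = 2       # ONC RPC protocol version
MSG_CALL = 0          # message type: call (a reply would be 1)
AUTH_NONE = 0         # authentication flavor carrying no credentials


def xdr_opaque_auth(flavor, body=b""):
    # opaque_auth is a flavor followed by a length-prefixed byte string,
    # zero-padded to a multiple of four bytes as XDR requires.
    pad = (4 - len(body) % 4) % 4
    return struct.pack(">II", flavor, len(body)) + body + b"\x00" * pad


def build_null_call(xid):
    # Fixed call header: xid, message type, RPC version, program, version, procedure.
    header = struct.pack(
        ">IIIIII", xid, MSG_CALL, RPC_VERSION, NFS_PROGRAM, NFS_VERSION, PROC_NULL
    )
    # Credential and verifier are both AUTH_NONE with empty bodies.
    return header + xdr_opaque_auth(AUTH_NONE) + xdr_opaque_auth(AUTH_NONE)


def frame_for_tcp(message):
    # Record marking for stream transports: a 4-byte header whose top bit
    # flags the last fragment and whose low 31 bits give the fragment length.
    return struct.pack(">I", 0x80000000 | len(message)) + message
```

For example, `frame_for_tcp(build_null_call(0x12345678))` yields a 44-byte record: a 4-byte record marker followed by the 40-byte call. Over UDP the record marker is omitted and the call message is sent as a single datagram.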

Implementations and Versions

Multiple major versions emerged from vendor and open source efforts: initial releases from Sun Microsystems, later protocol revisions formalized by the IETF and implemented in the Linux kernel, and proprietary adaptations by NetApp, Oracle Corporation, and Microsoft Corporation. Open source implementations include those maintained by the Linux kernel community, the FreeBSD Project, and distribution maintainers like Red Hat and Canonical. Commercial appliances and software from EMC Corporation, Dell Technologies, and IBM provided enterprise feature sets, while cloud vendors such as Amazon Web Services and Google Cloud Platform offered managed services exposing similar semantics.

Performance and Scalability

Designers addressed throughput and latency via techniques developed in research at Carnegie Mellon University and MIT: read-ahead, write-behind, client-side caching, and delegation of locks. Large-scale deployments in datacenters from Google LLC and Facebook, Inc. informed optimizations like parallel I/O, RPC batching, and transport offloads enabled by hardware from Intel Corporation and NVIDIA. Scalability challenges led to architectural patterns that combine distributed metadata management from projects like Ceph and GlusterFS with traditional server-based models used by vendors such as NetApp.
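A small simulation can show why read-ahead helps sequential workloads. This is a sketch under stated assumptions, not how any particular client implements it: `ReadAheadCache`, `make_remote`, the block size, and the read-ahead depth are all hypothetical, and the prefetch runs synchronously here whereas a real client would issue it in the background. The counter `demand_misses` tracks reads that found nothing in the cache and would have had to wait for the server.

```python
def make_remote(data, block_size):
    """Return a function standing in for a remote READ of one block."""
    def fetch_block(index):
        start = index * block_size
        return data[start:start + block_size]
    return fetch_block


class ReadAheadCache:
    """Client-side block cache that prefetches once access looks sequential."""

    def __init__(self, fetch_block, read_ahead=2):
        self.fetch_block = fetch_block
        self.read_ahead = read_ahead
        self.blocks = {}
        self.last_block = None
        self.demand_misses = 0   # reads that had to wait on the server
        self.total_fetches = 0   # all remote fetches, including prefetches

    def _fetch(self, index):
        self.blocks[index] = self.fetch_block(index)
        self.total_fetches += 1

    def read_block(self, index):
        if index not in self.blocks:
            self.demand_misses += 1
            self._fetch(index)
        # Two consecutive block numbers are treated as a sequential pattern,
        # which triggers prefetching of the next few blocks.
        if self.last_block is not None and index == self.last_block + 1:
            for ahead in range(index + 1, index + 1 + self.read_ahead):
                if ahead not in self.blocks:
                    self._fetch(ahead)
        self.last_block = index
        return self.blocks[index]


def sequential_scan(data, block_size=4, read_ahead=2):
    cache = ReadAheadCache(make_remote(data, block_size), read_ahead)
    blocks = -(-len(data) // block_size)  # ceiling division
    out = b"".join(cache.read_block(i) for i in range(blocks))
    return out, cache.demand_misses, cache.total_fetches
```

With 32 bytes of data and 4-byte blocks, a sequential scan in this model waits on only the first two blocks; the rest are already cached when requested. The cost is a couple of speculative fetches past the end of the scan, which is the usual trade-off read-ahead makes.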

Security and Access Control

Security models evolved to accommodate authentication and authorization schemes including Kerberos, LDAP integration, and access control lists modeled after POSIX and vendor-specific extensions. Enterprises integrate audit and compliance tooling from firms like Symantec (Broadcom) and McAfee to meet requirements from regulators and standards organizations such as ISO. Transport-level protections leverage TLS and network segmentation practices common in environments managed by VMware, Inc. and cloud operators like Microsoft Azure to mitigate threats and support multi-tenant isolation.
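As an illustration of how an access control list might be evaluated, the sketch below uses an ordered list of allow and deny entries, in the simplified style of NFSv4 ACLs. This is an assumption about which ACL model is in play, since POSIX-style ACLs and vendor extensions are evaluated differently. The names `Ace` and `check_access`, the three permission bits, and the principals in the usage example are hypothetical.

```python
from dataclasses import dataclass

READ, WRITE, EXECUTE = 1, 2, 4  # hypothetical permission bits


@dataclass(frozen=True)
class Ace:
    allow: bool  # True for an ALLOW entry, False for a DENY entry
    who: str     # user or group name the entry applies to
    mask: int    # permission bits covered by the entry


def check_access(acl, principals, requested):
    """Walk entries in order; grant only if every requested bit is allowed
    before a matching deny entry covers a bit that is still outstanding."""
    remaining = requested
    for ace in acl:
        if ace.who not in principals:
            continue  # entry does not apply to this requester
        if ace.allow:
            remaining &= ~ace.mask
            if remaining == 0:
                return True
        elif ace.mask & remaining:
            return False
    # Bits left undecided at the end are treated as denied in this sketch.
    return False
```

For example, with `acl = [Ace(False, "bob", WRITE), Ace(True, "staff", READ | WRITE), Ace(True, "everyone", READ)]`, a member of `staff` other than `bob` can read and write, while `bob` can read but not write because the deny entry comes first. Entry order matters in this model, which is one reason ordered ACLs are easy to misconfigure.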

Use Cases and Adoption

Adoption spans scientific computing centers such as CERN, enterprise data centers using Oracle Corporation databases, media production companies managing high-throughput storage with vendors like Avid Technology, and cloud-native applications on Amazon Web Services and Google Cloud Platform. Workflows in genomics, visualization, and content creation rely on shared namespace semantics to enable collaboration across workstations from Apple Inc. and servers from Dell Technologies, often integrated with orchestration platforms like Kubernetes for containerized workloads.
