LeNet

Generated by GPT-5-mini

| LeNet | |
|---|---|
| Name | LeNet |
| Caption | Early convolutional neural network architecture |
| Introduced | 1990s |
| Developers | Yann LeCun, Yoshua Bengio, Yann LeCun's collaborators |
| Field | Machine learning, Computer vision, Pattern recognition |
| Notable works | MNIST dataset |

LeNet is a pioneering convolutional neural network architecture developed between the late 1980s and the 1990s for image recognition tasks. It played a central role in demonstrating the viability of multilayer neural networks for pattern recognition and influenced later models used by Google, Facebook, Microsoft Research, and academic groups at New York University and the Université de Montréal. The design contributed to practical breakthroughs in handwriting recognition at AT&T and Bell Labs, and it influenced optical character recognition systems used by the United States Postal Service and Deutsche Post.

History

LeNet originated from research led by Yann LeCun in collaboration with researchers at AT&T Bell Laboratories and academic partners during the late 1980s and early 1990s. The work built upon earlier developments by Geoffrey Hinton, David Rumelhart, and Ronald J. Williams, and on concepts from Yoshua Bengio, in backpropagation and representation learning. Early demonstrations used datasets curated by teams connected to Bell Labs, and later versions were benchmarked on the MNIST dataset developed by researchers from AT&T Bell Laboratories and New York University. LeNet’s successes coincided with increased interest from commercial entities such as Kodak and from governmental postal services, influencing adoption in handwriting and document recognition systems deployed by the United States Postal Service and by private companies like Pitney Bowes. Over time, LeNet served as a conceptual ancestor to deeper networks developed at Stanford University, Carnegie Mellon University, Google DeepMind, and Microsoft Research.

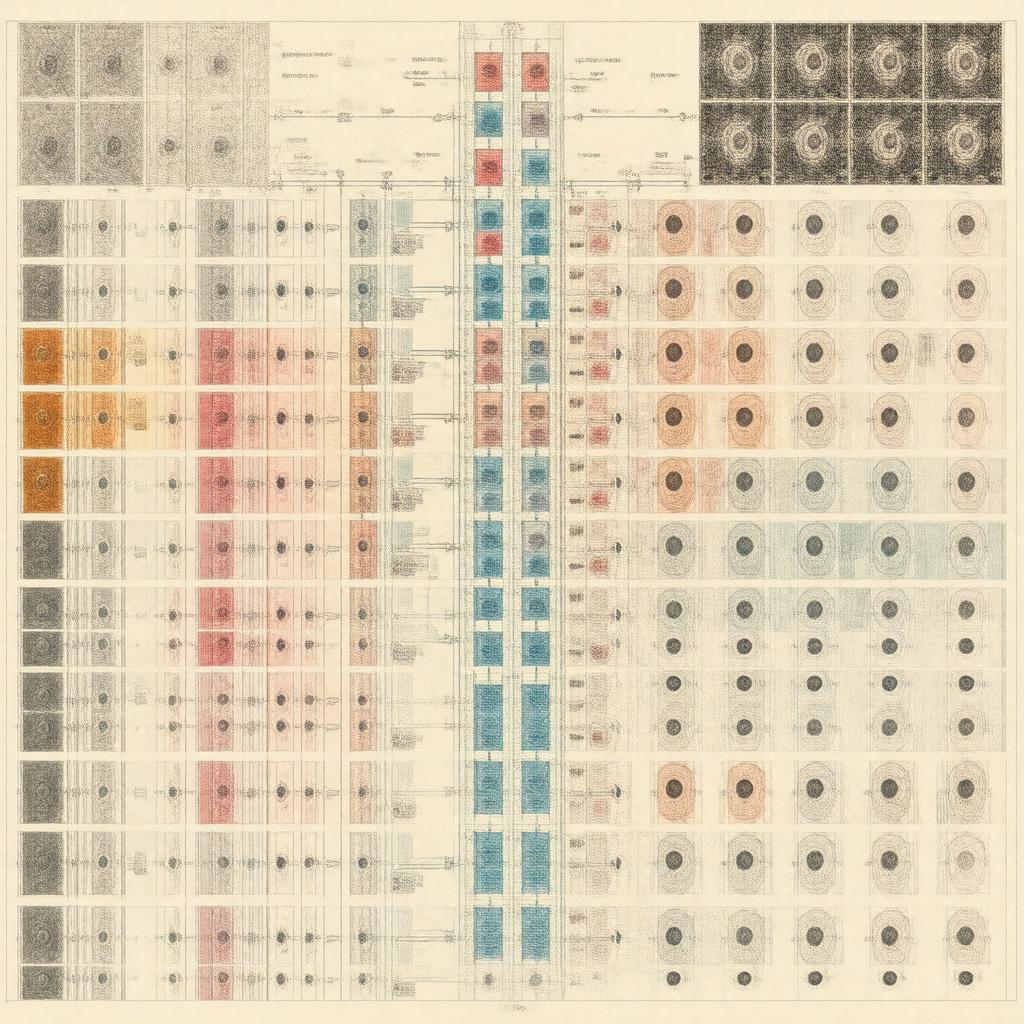

Architecture

The architecture stacks convolutional and subsampling layers and follows them with fully connected layers, a pattern later echoed in architectures from Alex Krizhevsky’s team at the University of Toronto and from research groups at Facebook AI Research. Input preprocessing followed techniques used with the MNIST dataset and in image pipelines from Bell Labs. Convolutional layers in LeNet apply learned filters that are shared across image positions, an idea with intellectual lineage traceable to work by Hubel and Wiesel in neuroscience and to computational models explored at MIT and Harvard University. Subsampling (pooling) layers reduce spatial resolution, an approach later refined by teams at Google and Microsoft Research. The original design used saturating, sigmoid-type activations such as tanh, in contrast with the rectified nonlinearities later popularized by researchers at the University of Toronto in ImageNet classification. The fully connected classifier at the network head mirrors architectures evaluated at Stanford University and used in applied systems from IBM Research and Intel Labs.
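The way alternating convolution and subsampling stages shrink the image can be checked with a little arithmetic. The sketch below is illustrative only: the layer sizes (32x32 input, 5x5 kernels, 2x2 subsampling, and 6, 16, and 120 feature maps) follow the commonly described LeNet-5 layout and are an assumption, since the text above does not list them. The function names are hypothetical, and plain averaging stands in for the subsampling step.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Spatial size along one dimension after a convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_output_size(size, window, stride=None):
    """Spatial size along one dimension after subsampling."""
    stride = window if stride is None else stride
    return (size - window) // stride + 1


def lenet5_shapes(input_size=32):
    """Return (stage name, feature maps, spatial size) for each stage.

    Channel counts and kernel sizes are assumed from the commonly
    described LeNet-5 layout, not taken from the article text.
    """
    shapes = [("input", 1, input_size)]
    size = input_size
    for name, maps in (("C1/S2", 6), ("C3/S4", 16)):
        size = conv_output_size(size, kernel=5)
        shapes.append((name + " conv 5x5", maps, size))
        size = pool_output_size(size, window=2)
        shapes.append((name + " subsample 2x2", maps, size))
    size = conv_output_size(size, kernel=5)
    shapes.append(("C5 conv 5x5", 120, size))
    return shapes


def conv2d_valid(image, kernel):
    """Slide one filter over a 2-D list with no padding (cross-correlation)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def average_pool(feature_map, window=2):
    """Average non-overlapping window x window blocks (simplified subsampling)."""
    out_h = len(feature_map) // window
    out_w = len(feature_map[0]) // window
    pooled = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            cells = [feature_map[i * window + a][j * window + b]
                     for a in range(window) for b in range(window)]
            row.append(sum(cells) / len(cells))
        pooled.append(row)
    return pooled


if __name__ == "__main__":
    for name, maps, size in lenet5_shapes():
        print(f"{name:22s} {maps:4d} maps of {size}x{size}")
```

Running the script prints the spatial size at each stage, which makes the resolution reduction performed by the subsampling layers easy to follow. A trained network would add biases, nonlinearities, and learned filter weights on top of this skeleton.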

Training and Optimization

Training used supervised learning with gradient-based optimization and backpropagation, building on foundational algorithms advanced by David Rumelhart, Geoffrey Hinton, and Ronald J. Williams. LeNet experiments used stochastic gradient descent and early weight-initialization methods developed in labs at AT&T Bell Laboratories and New York University. Regularization and dataset augmentation techniques applied in follow-up work drew on practices from ImageNet teams at Princeton University and the University of Toronto. Early training relied on compute resources at Bell Labs and campus clusters at New York University, while later reimplementations exploited accelerators from NVIDIA and infrastructure on Google Cloud Platform and Amazon Web Services. Optimization refinements, including momentum and learning-rate schedules, reflect methodological threads shared with groups at Carnegie Mellon University and the Massachusetts Institute of Technology.
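The interaction between stochastic gradient descent, momentum, and a learning-rate schedule can be illustrated on a toy problem. The following is a minimal sketch, not a reconstruction of the original LeNet training procedure: it fits a line to noise-free points, and the function names, the step-decay schedule, and all hyperparameter values are assumptions chosen for illustration.

```python
import random


def step_decay(base_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * (drop ** (epoch // every))


def sgd_momentum_fit(data, epochs=50, base_lr=0.05, momentum=0.9, seed=0):
    """Fit y = w * x + b by per-sample SGD with classical momentum.

    `data` is a sequence of (x, y) pairs. The loss for one sample is
    0.5 * (w * x + b - y) ** 2, so its gradient is (err * x, err).
    """
    rng = random.Random(seed)      # fixed seed keeps the sketch reproducible
    samples = list(data)
    w, b = 0.0, 0.0                # parameters
    vw, vb = 0.0, 0.0              # velocity (momentum) terms
    for epoch in range(epochs):
        lr = step_decay(base_lr, epoch)
        rng.shuffle(samples)       # visit samples in a new order each epoch
        for x, y in samples:
            err = (w * x + b) - y
            grad_w, grad_b = err * x, err
            vw = momentum * vw - lr * grad_w
            vb = momentum * vb - lr * grad_b
            w += vw
            b += vb
    return w, b


if __name__ == "__main__":
    # Hypothetical toy data drawn from the line y = 2x + 1.
    points = [(i / 10, 2 * (i / 10) + 1) for i in range(-10, 11)]
    w, b = sgd_momentum_fit(points)
    print(f"w = {w:.4f}, b = {b:.4f}")
```

In a network such as LeNet the same update rule is applied to every weight, with the per-sample gradients supplied by backpropagation rather than by the closed-form expression used here.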

Variants and Extensions

Numerous variants and extensions carried LeNet’s principles into deeper and more complex models developed by research groups at the University of Toronto, Stanford University, Facebook AI Research, Google DeepMind, and Microsoft Research. Architectures incorporating rectified linear units and batch normalization were driven by teams at the University of Toronto and the University of California, Berkeley. Spatial transformer modules, residual connections, and inception-style motifs evolved from work at Google and Facebook, while recurrent and attention-based hybrids arose from collaborative research involving Google Brain and OpenAI. Application-specific adaptations were implemented by commercial labs including IBM Research and Intel Labs, and by academic consortia at MIT and ETH Zurich.
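Three of the building blocks named above (rectified linear units, batch normalization, and residual connections) are simple enough to sketch on plain lists of numbers. The code below is an illustrative simplification with hypothetical function names; real implementations operate on multi-dimensional tensors, and batch normalization additionally tracks running statistics for use at inference time.

```python
import math


def relu(values):
    """Rectified linear unit: pass positives through, clamp negatives to 0."""
    return [max(0.0, v) for v in values]


def tanh_activation(values):
    """Saturating activation of the kind used in early networks."""
    return [math.tanh(v) for v in values]


def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize a batch of scalar activations to zero mean and unit variance,
    then apply a scale (gamma) and shift (beta)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in values]


def residual_block(values, transform):
    """Residual connection: output is the input plus a transformed copy of it."""
    transformed = transform(values)
    return [x + f for x, f in zip(values, transformed)]


if __name__ == "__main__":
    activations = [-2.0, -0.5, 0.0, 0.5, 2.0]
    print("relu:    ", relu(activations))
    print("tanh:    ", [round(v, 3) for v in tanh_activation(activations)])
    print("bn:      ", [round(v, 3) for v in batch_norm(activations)])
    print("residual:", residual_block(activations, relu))
```

The contrast between `relu` and `tanh_activation` shows why the former does not saturate for large positive inputs, and `residual_block` shows how the identity path lets the input reach the output unchanged when the transform returns zeros.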

Applications

LeNet’s principles were applied to handwriting recognition systems used by the United States Postal Service and by other postal operators such as Deutsche Post. Research implementations and derivatives influenced digit-recognition services in consumer products from Kodak and business systems developed by Pitney Bowes. LeNet-inspired models powered early optical character recognition projects at AT&T Bell Laboratories and informed computer vision modules in robotics research at Carnegie Mellon University and the Massachusetts Institute of Technology. Medical imaging pilots and document analysis efforts at institutions like Harvard Medical School and Stanford University drew on LeNet-derived pipelines. The architecture’s influence extends to modern deployments at Google, Facebook, and Microsoft, and at startups in technology hubs such as Silicon Valley and Boston.

Performance and Evaluation

Original evaluations on handwritten digit recognition benchmarks such as the MNIST dataset demonstrated low error rates relative to contemporaneous methods developed at AT&T Bell Laboratories and in research labs at New York University. Comparative studies often cited baseline results from institutions including Stanford University and Carnegie Mellon University. As deeper architectures emerged from teams at the University of Toronto and from benchmarks like ImageNet, performance metrics shifted, but LeNet remains a pedagogical and historical benchmark used in coursework at the Massachusetts Institute of Technology, New York University, and Stanford University. Contemporary reimplementations report consistent, replicable behavior and serve as reference points for reproducibility efforts promoted by organizations such as ACM and IEEE.
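The error rate quoted in such evaluations is the fraction of test images assigned the wrong label. As a minimal sketch, assuming predictions and labels are integer class indices from 0 to 9 (the helper names are hypothetical and no real benchmark results are shown), it can be computed alongside a confusion matrix like this:

```python
def error_rate(predictions, labels):
    """Fraction of examples whose predicted class differs from the true class."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("at least one example is required")
    wrong = sum(1 for p, y in zip(predictions, labels) if p != y)
    return wrong / len(labels)


def confusion_matrix(predictions, labels, num_classes=10):
    """Count matrix where entry [true][predicted] is the number of examples."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for p, y in zip(predictions, labels):
        matrix[y][p] += 1
    return matrix


if __name__ == "__main__":
    # Made-up predictions for illustration only, not measured results.
    y_true = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
    y_pred = [0, 1, 2, 3, 9, 5, 6, 7, 8, 4]
    print("error rate:", error_rate(y_pred, y_true))
```

The confusion matrix complements the single error-rate figure by showing which digit pairs are mistaken for each other.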

Category:Neural network architectures