LeNet-5

Generated by GPT-5-mini

| LeNet-5 | |
|---|---|
| Name | LeNet-5 |
| Developer | Yann LeCun; Yoshua Bengio |
| Introduced | 1998 |
| Architecture | Convolutional neural network |
| Applications | Optical character recognition |

LeNet-5 is a convolutional neural network designed for handwritten digit recognition and early image understanding. It was developed by researchers at AT&T Laboratories and introduced in 1998 in work by Yann LeCun, Yoshua Bengio, and colleagues. The model demonstrated the effectiveness of hierarchical feature learning on pattern recognition tasks, using handwriting data that originated with the National Institute of Standards and Technology and serving applications at companies like AT&T Corporation. LeNet-5 influenced later systems developed at Bell Labs, New York University, and research groups associated with the Courant Institute of Mathematical Sciences.

Introduction

LeNet-5 emerged from research on gradient-based learning and backpropagation carried out at AT&T Bell Laboratories, influenced by earlier work at Massachusetts Institute of Technology and Stanford University. It was evaluated on the MNIST dataset, which was derived from handwriting samples collected by the National Institute of Standards and Technology. The model addressed optical character recognition needs in commercial settings linked to organizations such as Bank of America and research agendas at IBM Research and Microsoft Research. LeNet-5 combined convolution, subsampling, and supervised learning into a compact architecture that bridged innovations from teams at Université de Montréal and groups led by Geoffrey Hinton.

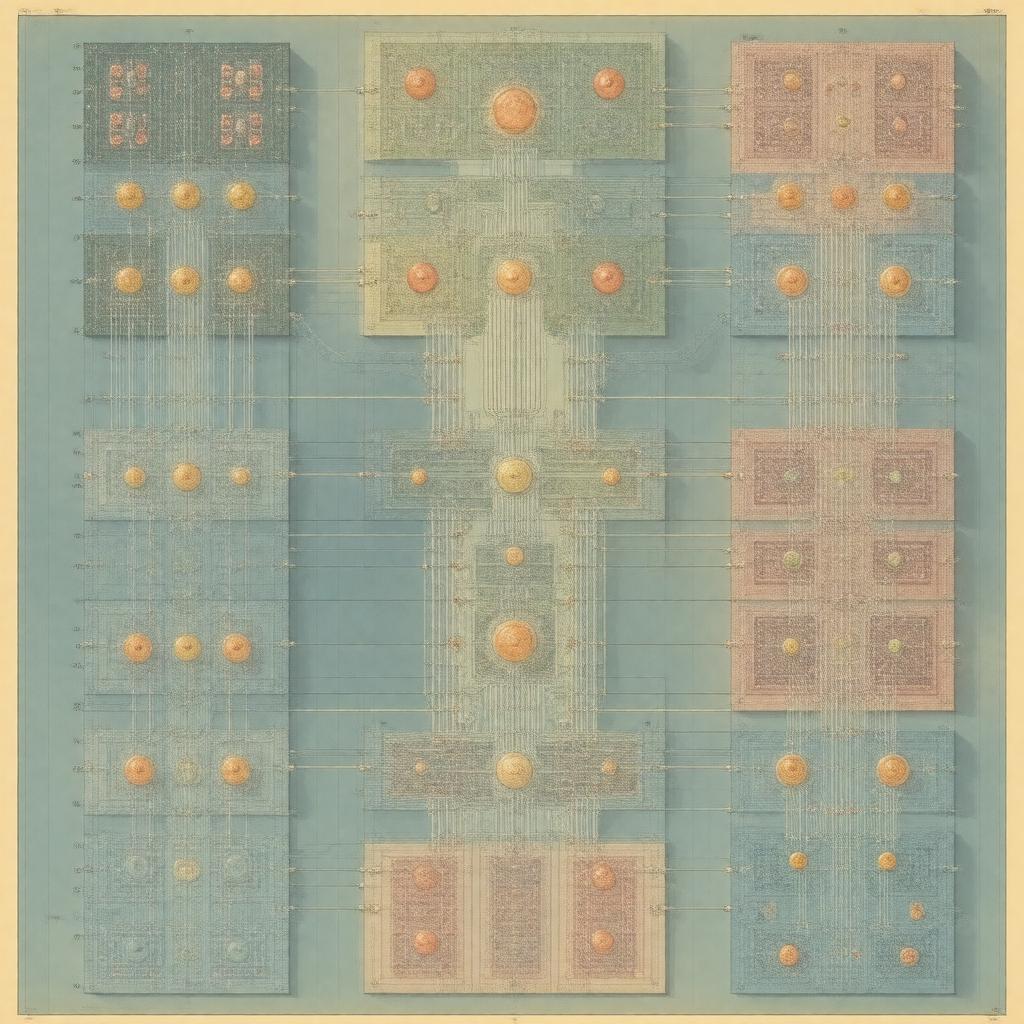

Architecture

LeNet-5’s layered design stacks convolutional and pooling operations inspired by visual cortex models studied at Massachusetts Eye and Ear Infirmary and computational frameworks developed at Columbia University. The canonical pipeline alternates convolutional layers (C1, C3, C5) with average-pooling subsampling layers (S2, S4) and ends in a fully connected layer (F6) followed by a 10-class output layer; this layout parallels later architectures from Google Brain and Facebook AI Research. Key components correspond conceptually to modules explored in work at Carnegie Mellon University and University of Toronto that influenced deep learning toolkits from entities like OpenAI and projects at NVIDIA. The convolutional filters, parameter sharing, and local receptive fields echo neuroscience investigations affiliated with Harvard University and the Salk Institute.
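The layer arithmetic behind this pipeline can be sketched in a few lines of plain Python. The layer names (C1, S2, C3, S4, C5, F6), the 32×32 input, the 5×5 kernels, and the sparse C3 wiring are taken from the 1998 paper rather than from the text above; the helper names `valid_out`, `trace_shapes`, and `count_parameters` are hypothetical and exist only for this illustration. The output layer is left out of the count.

```python
# Sketch of LeNet-5 layer arithmetic; sizes follow the 1998 paper, helper names are illustrative.

def valid_out(size, kernel, stride=1):
    """Spatial size after an unpadded convolution or subsampling window."""
    return (size - kernel) // stride + 1

# (name, kernel, stride, output feature maps)
SPATIAL_LAYERS = [
    ("C1", 5, 1, 6),    # convolution
    ("S2", 2, 2, 6),    # 2x2 subsampling
    ("C3", 5, 1, 16),   # convolution
    ("S4", 2, 2, 16),   # 2x2 subsampling
    ("C5", 5, 1, 120),  # convolution covering the whole 5x5 map
]

def trace_shapes(input_size=32):
    """Return (name, maps, height, width) for the input and each spatial layer."""
    shapes = [("input", 1, input_size, input_size)]
    size = input_size
    for name, kernel, stride, maps in SPATIAL_LAYERS:
        size = valid_out(size, kernel, stride)
        shapes.append((name, maps, size, size))
    return shapes

def count_parameters():
    """Trainable parameters per layer, assuming the paper's sparse C3 wiring."""
    # C3: 6 maps read 3 input maps, 9 maps read 4, and 1 map reads all 6.
    c3_inputs = 6 * 3 + 9 * 4 + 1 * 6
    return {
        "C1": 6 * (5 * 5 * 1 + 1),     # shared 5x5 kernel plus bias per map
        "S2": 6 * 2,                   # one coefficient and one bias per map
        "C3": c3_inputs * 5 * 5 + 16,
        "S4": 16 * 2,
        "C5": 120 * (16 * 5 * 5 + 1),
        "F6": 84 * (120 + 1),
    }

for row in trace_shapes():
    print(row)
print(sum(count_parameters().values()))
```

Under these assumptions the spatial size shrinks from 32 to 28, 14, 10, 5, and finally 1, and the listed layers total 60,000 trainable parameters. Parameter sharing is what keeps the convolutional layers small: C1 has only 156 parameters even though it produces 6 × 28 × 28 outputs.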

Training and Implementation

Training employed stochastic gradient descent with backpropagation techniques refined in publications from Bell Labs Research and algorithmic contributions by researchers associated with Brown University and Université de Montréal. Implementation historically used C/C++ codebases on workstations from vendors like Sun Microsystems and computational resources available at Lawrence Berkeley National Laboratory. The dataset workflow relied on preprocessing steps similar to pipelines used by United States Postal Service OCR projects and research datasets curated by the National Institute of Standards and Technology. Later reimplementations appeared in frameworks such as Theano, Torch, TensorFlow, and PyTorch, the last two associated with Google and Facebook AI Research respectively.
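As a rough illustration of stochastic gradient descent with backpropagation, the toy sketch below trains a single sigmoid unit on the logical OR function, updating the weights after every sample. It is not the LeNet-5 training procedure: the squared-error loss, the learning rate, the epoch count, and the function names `sgd_train` and `predict` are all assumptions made for this example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Forward pass of one sigmoid unit."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sgd_train(samples, lr=0.5, epochs=1000, seed=0):
    """Train one sigmoid unit with per-sample (stochastic) gradient descent."""
    rng = random.Random(seed)
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    data = list(samples)
    for _ in range(epochs):
        rng.shuffle(data)  # visit samples in a fresh random order each epoch
        for x, target in data:
            y = predict(weights, bias, x)
            # Backpropagate squared error 0.5 * (y - t)^2 through the sigmoid:
            # dL/dz = (y - t) * y * (1 - y)
            delta = (y - target) * y * (1.0 - y)
            weights = [w - lr * delta * xi for w, xi in zip(weights, x)]
            bias -= lr * delta
    return weights, bias

# Toy data: logical OR of two binary inputs.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = sgd_train(OR_DATA)
for x, target in OR_DATA:
    print(x, target, round(predict(weights, bias, x), 3))
```

In a full network the same chain-rule step is applied layer by layer, so that each shared convolutional kernel accumulates gradient contributions from every position where it is applied.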

Performance and Applications

LeNet-5 demonstrated strong performance on digit recognition benchmarks such as MNIST and was adapted for document analysis tasks at organizations like the United States Postal Service and in European Space Agency projects. Variants and descendants informed systems deployed by Google and Microsoft in early OCR products and research prototypes at Adobe Systems. The efficiency and compactness of the model made it suitable for embedded applications developed by hardware groups at Intel Corporation and ARM Holdings and inspired accelerator work at NVIDIA Corporation and Xilinx.

Historical Impact and Legacy

LeNet-5 catalyzed the commercialization and academic expansion of convolutional architectures, influencing subsequent breakthroughs at Google DeepMind, OpenAI, and laboratories led by Geoffrey Hinton, Yoshua Bengio, and Yann LeCun. Its concepts fed into networks that reached milestones in the ImageNet challenges organized by Stanford Vision Lab and into technology transfers to companies such as Apple Inc. and Amazon. Educational materials from MIT, Stanford University, and Carnegie Mellon University present LeNet-5 as a canonical example, while research initiatives at European Research Council-funded centers cite its role in shaping modern deep learning ecosystems.