Kalman filter

Generated by GPT-5-mini


| Kalman filter | |
|---|---|
| Name | Kalman filter |
| Invented by | Rudolf E. Kálmán |
| Introduced | 1960 |
| Field | Signal processing; Control theory; Estimation theory |


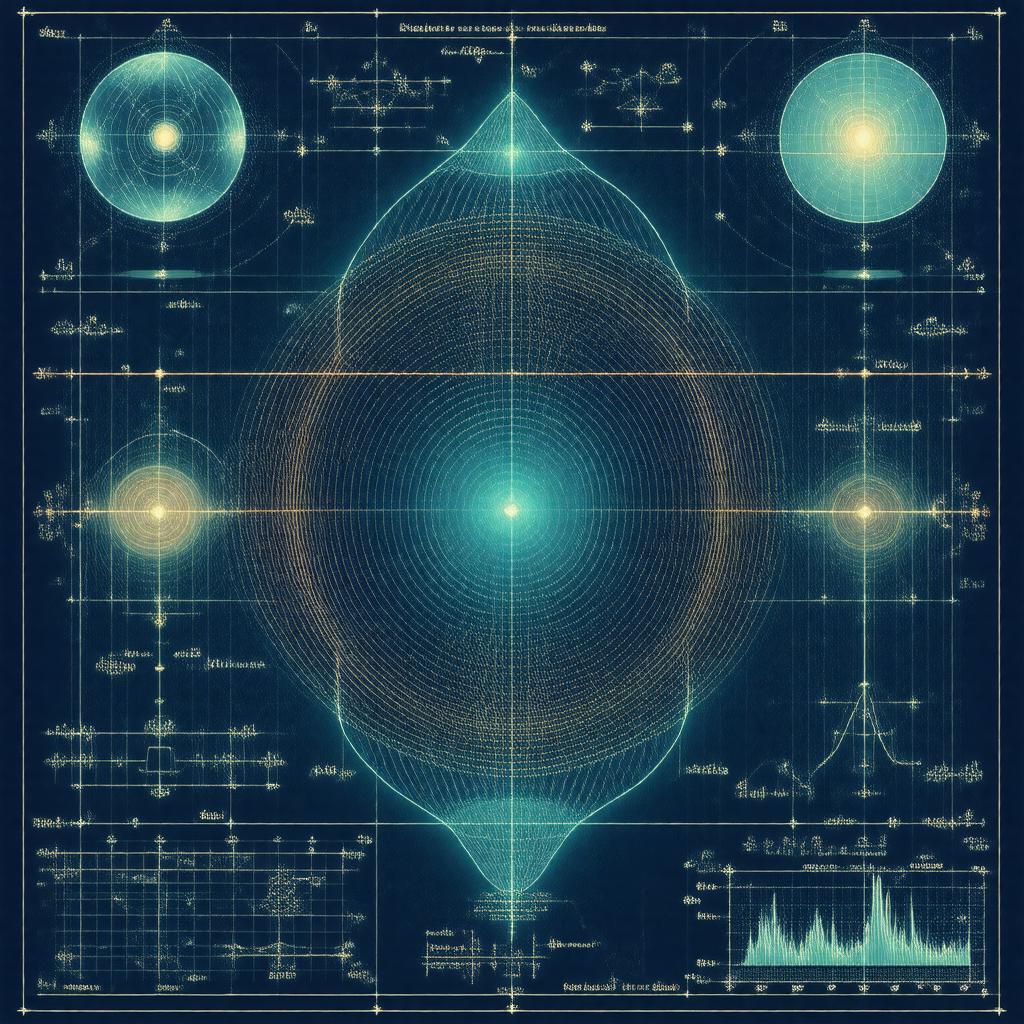

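The Kalman filter is a recursive algorithm for estimating the internal state of a linear dynamical system from a sequence of noisy measurements. At each step it combines a prediction from a model of the system with the newest observation, weighting each by its uncertainty. When the dynamics are linear and the noise is Gaussian, the resulting estimates minimize the mean-square error. Without the Gaussian assumption, the filter is still the best linear estimator in the mean-square sense. Each update uses only the previous estimate and the current measurement, so the filter suits real-time state tracking in aerospace, robotics, navigation, and finance. Developed in the mid-20th century, the method brought together ideas from control theory, linear algebra, and probability. It became a foundational tool in systems engineering and applied mathematics.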
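Here is a minimal sketch of the predict/correct cycle: the code below estimates a single scalar quantity from noisy readings. It assumes a random-walk model, in which the state stays constant apart from small process noise of variance q. The state is observed directly with measurement noise of variance r. The function name `scalar_kalman`, the readings, and all parameter values are hypothetical.

```python
def scalar_kalman(measurements, x0, p0, q, r):
    """Filter a scalar random-walk state that is measured directly.

    Assumed model (illustrative only):
        x_{k+1} = x_k + w_k,   w_k ~ N(0, q)
        z_k     = x_k + v_k,   v_k ~ N(0, r)
    Returns the a posteriori estimates and variances after each measurement.
    """
    x, p = x0, p0
    estimates, variances = [], []
    for z in measurements:
        # Predict: the state is carried forward unchanged; uncertainty grows by q.
        x_prior, p_prior = x, p + q
        # Correct: the gain weighs the prediction against the new measurement.
        k = p_prior / (p_prior + r)
        x = x_prior + k * (z - x_prior)
        p = (1.0 - k) * p_prior
        estimates.append(x)
        variances.append(p)
    return estimates, variances


# Hypothetical readings of a quantity near 5.0 with measurement variance 0.04.
readings = [4.9, 5.2, 5.1, 4.8, 5.0, 5.3, 4.95]
est, var = scalar_kalman(readings, x0=0.0, p0=100.0, q=1e-4, r=0.04)
print(f"final estimate {est[-1]:.3f}, variance {var[-1]:.5f}")
```

The large initial variance p0 tells the filter to trust the first reading almost completely. As readings accumulate, the variance shrinks and each new reading moves the estimate less.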

History

Rudolf E. Kálmán introduced the filter in 1960 in the paper “A New Approach to Linear Filtering and Prediction Problems,” published in the ASME Journal of Basic Engineering. The work built on the linear prediction and filtering theory of Norbert Wiener and Andrey Kolmogorov. Kálmán recast the problem in state-space form, replacing frequency-domain methods restricted to stationary processes with a recursion that handles time-varying systems and suits digital computers. In 1961 Kálmán and Richard S. Bucy published the continuous-time counterpart, now called the Kalman–Bucy filter. Early adopters included Stanley F. Schmidt and colleagues at NASA Ames Research Center, who adapted the filter for trajectory estimation in the Apollo program. The Kalman filter’s prominence grew through later aerospace and defense navigation projects. Textbooks then codified the state-space approach for control and signal processing curricula.

Mathematical formulation

Consider a discrete-time linear dynamical system with state x_k, known control input u_k, and observation z_k. The system evolves via x_{k+1} = A_k x_k + B_k u_k + w_k and produces measurements z_k = H_k x_k + v_k. The matrices A_k, B_k, and H_k describe the dynamics, the effect of the control input, and the observation map. The process noise w_k and measurement noise v_k are modeled as zero-mean, white, mutually uncorrelated Gaussian sequences with covariances Q_k and R_k.

The filter alternates two steps:
- The time update (predict) propagates the previous estimate and its error covariance through the dynamics to obtain the a priori state estimate and covariance.
- The measurement update (correct) computes the Kalman gain K_k, which minimizes the mean-square error of the a posteriori estimate. The gain weights the innovation, the difference between the actual measurement and the predicted one.

The main linear algebraic operations are matrix multiplication, inversion of the innovation covariance (or solution of the equivalent linear system), and a Riccati-type recursion for the error covariance. The derivation follows from the orthogonality principle of linear minimum mean-square-error estimation. Kalman’s original paper obtained the filter by orthogonal projection in a space of random variables.
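For reference, one predict/correct cycle can be written out in the standard textbook form. The notation is an assumption of this sketch:
- x̂_{k|k-1} and P_{k|k-1} denote the a priori estimate and error covariance at step k.
- x̂_{k|k} and P_{k|k} denote the a posteriori ones.
- S_k is the innovation covariance.

A_k, B_k, H_k, Q_k, R_k, u_k, z_k, and K_k are as defined above.

```latex
\begin{aligned}
\text{Predict:}\quad & \hat{x}_{k+1|k} = A_k \hat{x}_{k|k} + B_k u_k, \\
& P_{k+1|k} = A_k P_{k|k} A_k^{\top} + Q_k, \\
\text{Correct:}\quad & S_k = H_k P_{k|k-1} H_k^{\top} + R_k, \\
& K_k = P_{k|k-1} H_k^{\top} S_k^{-1}, \\
& \hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k \left( z_k - H_k \hat{x}_{k|k-1} \right), \\
& P_{k|k} = \left( I - K_k H_k \right) P_{k|k-1}.
\end{aligned}
```

Substituting the expression for P_{k|k} into the covariance prediction gives the discrete-time Riccati recursion for P_{k+1|k}.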

Variants and extensions

Several extensions relax the linearity or Gaussian assumptions or improve numerical robustness:
- The extended Kalman filter (EKF) linearizes nonlinear dynamics and measurement functions about the current estimate using their Jacobians. It grew out of Stanley F. Schmidt’s work on Apollo navigation at NASA Ames Research Center.
- The unscented Kalman filter (UKF), introduced by Simon Julier and Jeffrey Uhlmann in the 1990s, does not linearize. It propagates a small set of deterministically chosen sigma points through the nonlinear functions.
- The ensemble Kalman filter (EnKF), introduced by Geir Evensen in 1994, represents the error covariance with a Monte Carlo ensemble of state vectors. It is widely used for geophysical data assimilation.
- Square-root and factorized (for example, UD) forms propagate a factor of the covariance matrix rather than the matrix itself, which improves numerical stability.
- Particle filters (sequential Monte Carlo methods) target strongly nonlinear or non-Gaussian problems and were popularized by the bootstrap filter of Gordon, Salmond, and Smith in 1993. They approximate the full posterior distribution with weighted samples, and hybrid formulations combine Kalman-type updates with such sampling.
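The EKF idea can be sketched for a scalar state. The filter runs the usual predict/correct cycle but replaces A_k and H_k with derivatives of nonlinear functions evaluated at the current estimate. The scenario is hypothetical: a target moves along a line at a known speed, and a sensor at distance D from the line measures only the range, h(x) = sqrt(x^2 + D^2). Measurements are noise-free so that convergence from a wrong initial guess is easy to see. `ekf_step` and the constants are illustrative names, not a library API.

```python
import math


def ekf_step(x, p, z, f, f_jac, h, h_jac, q, r):
    """One extended Kalman filter step for a scalar state.

    f and h are the (possibly nonlinear) dynamics and measurement functions;
    f_jac and h_jac are their derivatives (Jacobians in the vector case).
    """
    # Predict: push the estimate through f and the variance through f'(x).
    x_prior = f(x)
    fj = f_jac(x)
    p_prior = fj * p * fj + q
    # Correct: linearize h about the predicted estimate.
    hj = h_jac(x_prior)
    s = hj * p_prior * hj + r            # innovation variance
    k = p_prior * hj / s                 # Kalman gain
    x_post = x_prior + k * (z - h(x_prior))
    p_post = (1.0 - k * hj) * p_prior
    return x_post, p_post


# Hypothetical scenario: constant known speed, range-only sensor offset by D.
D, SPEED, DT = 10.0, 1.0, 1.0


def f(x):
    return x + SPEED * DT


def f_jac(x):
    return 1.0


def h(x):
    return math.hypot(x, D)


def h_jac(x):
    return x / math.hypot(x, D)


true_x, x, p = 5.0, 3.0, 4.0             # the initial guess is 2 units off
for _ in range(20):
    true_x = f(true_x)
    z = h(true_x)                        # noise-free range, for clarity
    x, p = ekf_step(x, p, z, f, f_jac, h, h_jac, q=1e-3, r=0.01)
print(f"true {true_x:.2f}, estimate {x:.2f}, variance {p:.4f}")
```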

Implementation and numerical issues

Practical implementation requires attention to matrix conditioning, round-off error, and covariance consistency. Explicitly inverting a poorly conditioned innovation covariance amplifies round-off. The short form of the covariance update, which multiplies the a priori covariance by (I - K_k H_k), can lose symmetry or positive definiteness in finite precision. Common remedies include:
- solving linear systems instead of forming inverses;
- the Joseph-form covariance update;
- square-root or UD-factorized implementations, such as those developed by Gerald Bierman and Catherine Thornton.

Embedded implementations must also cope with fixed-point or reduced-precision floating-point arithmetic. MathWorks toolboxes and open-source libraries provide tested routines. Tuning involves choosing Q and R, initializing the state estimate and its covariance, and handling missing or delayed measurements. Stability results for time-varying systems rest on uniform complete observability and controllability, concepts that Kalman himself introduced.
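Two widely used safeguards against the conditioning and round-off problems described above can be sketched with NumPy. The sketch assumes the covariances are small dense matrices. First, the gain is obtained by solving a linear system with the innovation covariance S = H P H^T + R instead of inverting it. Second, the covariance uses the Joseph form P_post = (I - K H) P (I - K H)^T + K R K^T. Given a positive semidefinite P and R, this form stays symmetric and positive semidefinite for any gain K. `joseph_update` is a hypothetical helper name. A filter facing severe conditioning problems would use a square-root or UD form instead.

```python
import numpy as np


def joseph_update(x_prior, P_prior, z, H, R):
    """Measurement update using a linear solve and the Joseph-form covariance."""
    S = H @ P_prior @ H.T + R                  # innovation covariance
    # K = P H^T S^{-1}; since S is symmetric, K^T solves S K^T = H P^T.
    K = np.linalg.solve(S, H @ P_prior.T).T
    y = z - H @ x_prior                        # innovation
    x_post = x_prior + K @ y
    I_KH = np.eye(P_prior.shape[0]) - K @ H
    # Joseph form: sum of two positive semidefinite terms.
    P_post = I_KH @ P_prior @ I_KH.T + K @ R @ K.T
    # Symmetrize to remove residual round-off asymmetry.
    return x_post, 0.5 * (P_post + P_post.T)


# Hypothetical two-state example with a position-only measurement.
P = np.array([[4.0, 1.0], [1.0, 2.0]])
x = np.array([0.0, 1.0])
H = np.array([[1.0, 0.0]])
R = np.array([[0.5]])
z = np.array([1.2])
x_new, P_new = joseph_update(x, P, z, H, R)

# With the optimal gain, the Joseph form agrees with the short form (I - K H) P.
K_simple = P @ H.T @ np.linalg.inv(H @ P @ H.T + R)
P_simple = (np.eye(2) - K_simple @ H) @ P
print(np.allclose(P_new, P_simple))
```

The two forms agree only when K is exactly optimal. The Joseph form keeps its structural guarantees when round-off or a deliberately suboptimal gain breaks that equality.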

Applications

Applications span:
- Aerospace and navigation: guidance and navigation beginning with the Apollo program, aircraft navigation systems, and the integration of inertial navigation with Global Positioning System (GPS) receivers.
- Robotics: localization, and jointly estimating a robot’s pose and a map of landmarks in the EKF formulation of simultaneous localization and mapping (SLAM).
- Geosciences: operational centers such as the European Centre for Medium-Range Weather Forecasts and the National Oceanic and Atmospheric Administration estimate weather and ocean states with data assimilation methods built on the same estimation framework, including ensemble Kalman filters.
- Finance and econometrics: state-space models estimated with the Kalman filter are used for volatility modeling and signal extraction.
- Other fields: biomedical signal processing, structural health monitoring, and channel estimation and tracking in communications systems.
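Many of the navigation and tracking uses above share one pattern: the state includes quantities that are never measured directly, and the filter infers them from the dynamics. The sketch below assumes a one-dimensional constant-velocity model with position-only fixes, loosely analogous to GPS position updates, and recovers the velocity. The model, noise levels, and the name `track_constant_velocity` are hypothetical choices for illustration.

```python
import numpy as np


def track_constant_velocity(positions, dt, q, r):
    """Estimate [position, velocity] from noisy 1-D position fixes.

    Assumes a constant-velocity model driven by white acceleration noise of
    intensity q, with position measured with variance r.
    """
    A = np.array([[1.0, dt], [0.0, 1.0]])          # state transition
    H = np.array([[1.0, 0.0]])                     # only position is measured
    Q = q * np.array([[dt**3 / 3, dt**2 / 2], [dt**2 / 2, dt]])
    R = np.array([[r]])
    x = np.array([positions[0], 0.0])              # velocity initially unknown
    P = np.diag([r, 100.0])                        # so give it a large variance
    history = []
    for z in positions[1:]:
        # Predict.
        x = A @ x
        P = A @ P @ A.T + Q
        # Correct.
        S = H @ P @ H.T + R
        K = P @ H.T @ np.linalg.inv(S)
        x = x + K @ (np.array([z]) - H @ x)
        P = (np.eye(2) - K @ H) @ P
        history.append(x.copy())
    return np.array(history), P


# Hypothetical data: true speed 2 m/s, position fixes with 1 m noise.
rng = np.random.default_rng(0)
DT, TRUE_SPEED = 1.0, 2.0
truth = TRUE_SPEED * DT * np.arange(50)
fixes = truth + rng.normal(0.0, 1.0, size=truth.shape)
est, P = track_constant_velocity(fixes, DT, q=1e-4, r=1.0)
print(f"estimated speed {est[-1, 1]:.2f} (true {TRUE_SPEED})")
```

Only position enters the measurement, so the velocity estimate improves solely through the off-diagonal terms of P that the constant-velocity dynamics create.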

Performance evaluation and limitations

Evaluation metrics include root-mean-square error, likelihood-based measures, and consistency tests. Consistency tests compare actual errors and innovations with the filter’s predicted covariances, for example via the normalized estimation error squared and the normalized innovation squared. Limitations stem from model mismatch, nonlinearities, and non-Gaussian noise:
- The EKF’s linearization can cause divergence.
- The UKF may provide better accuracy at comparable computational cost.
- Particle filters approach the optimal filter as the number of particles grows, at much higher computational cost.

Real-world constraints include sensor dropouts, outliers, and the limited computational budget of embedded processors. The filter’s theoretical guarantee is mean-square optimality for linear Gaussian systems. It relies on a correct model structure and correct noise statistics. Violations motivate robust variants such as H-infinity filtering and adaptive estimation of the noise covariances.
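A common consistency test is the normalized innovation squared (NIS). For a scalar measurement it is ν_k^2 / S_k, where ν_k is the innovation and S_k its predicted variance. If the model and noise statistics are correct, each NIS value follows a chi-square distribution with one degree of freedom, so the average over many steps should be close to 1. Values well above 1 suggest the filter is overconfident. The sketch below simulates a scalar random-walk system with hypothetical noise variances and reports the RMSE and mean NIS. `simulate_and_evaluate` and its parameters are illustrative only.

```python
import math
import random


def simulate_and_evaluate(n_steps, q, r, seed=0):
    """Simulate x_{k+1} = x_k + w_k, z_k = x_k + v_k and filter it.

    Returns (RMSE of the a posteriori estimate, mean NIS).
    """
    rng = random.Random(seed)
    p0 = 1.0
    x_true = rng.gauss(0.0, math.sqrt(p0))   # initial state drawn from the prior
    x_est, p = 0.0, p0
    sq_errors, nis_values = [], []
    for _ in range(n_steps):
        # Simulate the true system and a measurement.
        x_true += rng.gauss(0.0, math.sqrt(q))
        z = x_true + rng.gauss(0.0, math.sqrt(r))
        # Predict.
        p_prior = p + q
        # Innovation and its predicted variance.
        nu = z - x_est
        s = p_prior + r
        nis_values.append(nu * nu / s)
        # Correct.
        k = p_prior / s
        x_est += k * nu
        p = (1.0 - k) * p_prior
        sq_errors.append((x_true - x_est) ** 2)
    rmse = math.sqrt(sum(sq_errors) / n_steps)
    return rmse, sum(nis_values) / n_steps


rmse, mean_nis = simulate_and_evaluate(5000, q=0.01, r=0.25)
print(f"RMSE {rmse:.3f}, mean NIS {mean_nis:.3f}")
```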
