Hamming code

Generated by GPT-5-mini

| Hamming code | |
|---|---|
| Name | Hamming code |
| Invented by | Richard Hamming |
| First published | 1950 |
| Field | Information theory |
| Type | Linear error-correcting code |
| Parameters | (2^r−1, 2^r−r−1) |

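Hamming codes are a family of linear error-correcting codes that detect and correct bit errors in digital communication and storage. Richard Hamming developed them at Bell Labs in the late 1940s and published them in 1950. For any integer r ≥ 2, the binary Hamming code uses r parity bits to protect 2^r − r − 1 message bits in a block of 2^r − 1 bits. Its minimum distance is 3, so it can either correct any single-bit error or detect (without correcting) any double-bit error; doing both at once requires the extended code, which adds one overall parity bit. Hamming codes are perfect codes: no binary single-error-correcting code of the same block length carries more message bits. They appeared alongside Claude Shannon's founding work on information theory and became a starting point for later coding theory and for error-correcting memory in commercial computers.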

History and Motivation

Richard Hamming devised the codes while working at Bell Labs in the late 1940s. The relay computers he used there, including the Bell Model V, checked their own results with error-detecting codes and abandoned a job when a check failed. By Hamming's own account, repeatedly losing unattended weekend runs led him to ask why a machine that could detect an error could not also locate and correct it. Simple parity checks could only signal that something was wrong; his codes arranged several overlapping parity checks so that the pattern of failed checks points to the erroneous bit. He published the result in 1950 as "Error Detecting and Error Correcting Codes" in the Bell System Technical Journal. The (7,4) code had already appeared, credited to Hamming, as an example in Claude Shannon's 1948 paper "A Mathematical Theory of Communication". The work ran parallel to Shannon's theory and shaped later error-control engineering in computing and telecommunications.

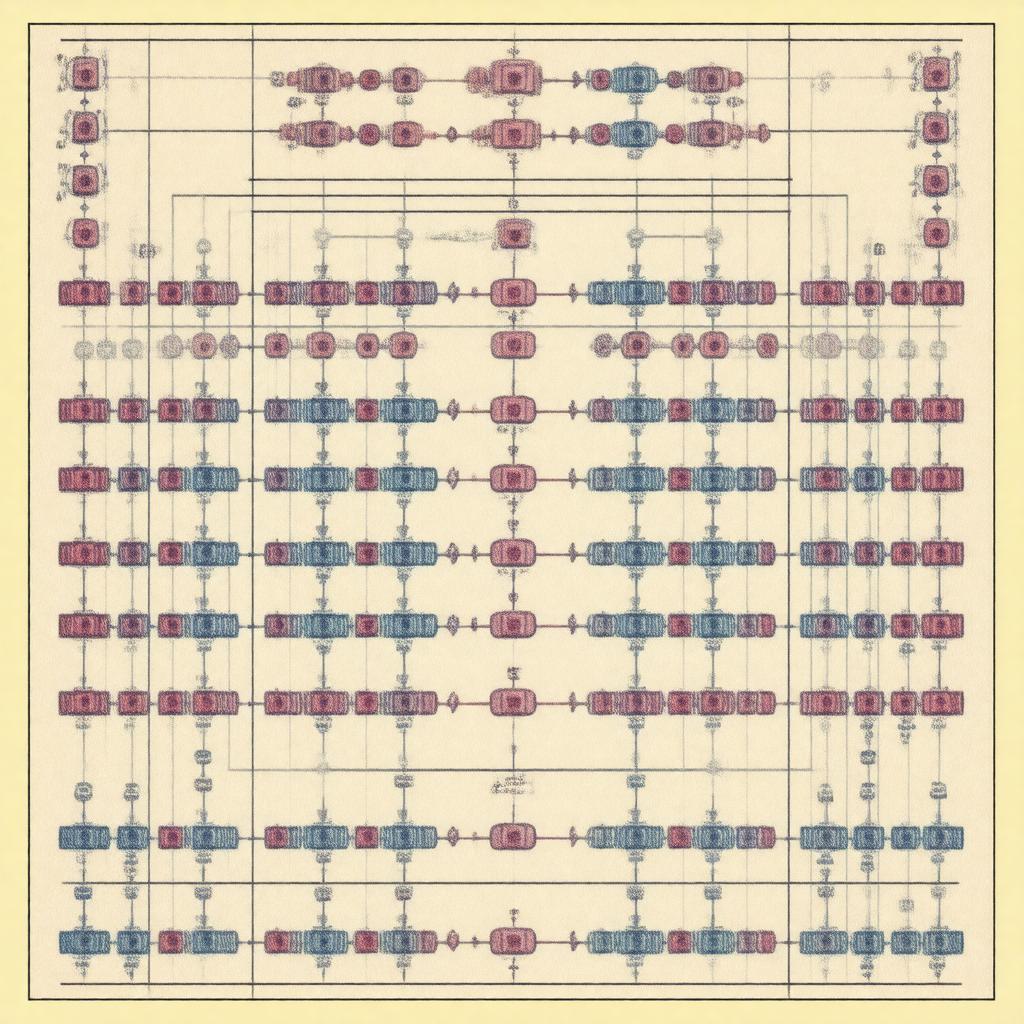

Construction and Encoding

A binary Hamming code is defined over the binary field GF(2) by an r × n parity-check matrix H whose columns are all 2^r − 1 distinct nonzero r-bit vectors. A word c is a codeword exactly when H · c^T = 0, with arithmetic modulo 2. For r parity bits the block length is n = 2^r − 1 and the message length is k = n − r = 2^r − r − 1; r = 3 gives the familiar (7,4) code. Because no column of H is zero and no two columns are equal, every nonzero codeword has weight at least 3, so the minimum distance is 3. Encoding multiplies a k-bit message m by a k × n generator matrix G, c = m · G, where G satisfies G · H^T = 0; in systematic form G = [I_k | P] and H = [P^T | I_r]. In the classic layout, column j of H is the binary representation of j, the parity bits sit at positions 1, 2, 4, ..., 2^(r−1), and each parity bit is the XOR of the data positions whose index has the corresponding bit set. In hardware, each parity bit is typically an XOR tree over the data bits it covers. Because binary Hamming codes are equivalent to cyclic codes under a suitable ordering of positions, they can also be encoded with a linear-feedback shift register.
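The construction can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: it assumes the classic layout in which column j of H is j written in binary and parity bits occupy the power-of-two positions, and the function names (`parity_check_matrix`, `encode`, `syndrome_vector`) are chosen here for illustration only.

```python
def parity_check_matrix(r):
    """Return H as r rows of n = 2**r - 1 bits; column j (1-based) is j in binary."""
    n = 2**r - 1
    return [[(j >> i) & 1 for j in range(1, n + 1)] for i in range(r)]


def encode(message, r):
    """Encode k = 2**r - r - 1 message bits into an n-bit Hamming codeword."""
    n = 2**r - 1
    k = n - r
    if len(message) != k:
        raise ValueError(f"expected {k} message bits, got {len(message)}")
    code = [0] * (n + 1)          # index 0 unused so indices match bit positions
    data = iter(message)
    for pos in range(1, n + 1):
        if pos & (pos - 1):       # nonzero only when pos is not a power of two
            code[pos] = next(data)
    for i in range(r):
        p = 1 << i                # parity position 1, 2, 4, ...
        for pos in range(1, n + 1):
            if pos != p and pos & p:
                code[p] ^= code[pos]   # XOR of covered data positions
    return code[1:]


def syndrome_vector(word, H):
    """Compute H * word^T over GF(2); all zeros means word is a codeword."""
    return [sum(h * b for h, b in zip(row, word)) % 2 for row in H]


H = parity_check_matrix(3)
codeword = encode([1, 0, 1, 1], 3)
print(codeword)                      # [0, 1, 1, 0, 0, 1, 1]
print(syndrome_vector(codeword, H))  # [0, 0, 0]
```

For r = 3 the message bits land in positions 3, 5, 6, and 7, and the three parity bits in positions 1, 2, and 4. A systematic generator matrix would produce an equivalent code with the bits in a different order.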

Error Detection and Correction

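Hamming codes correct a single-bit error by computing the syndrome s = H · y^T, where y is the received n-bit word. If y = c + e for a codeword c and an error pattern e, then s = H · e^T, so the syndrome depends only on the error. A zero syndrome means y is a codeword. A single error at position j produces the j-th column of H, and because the columns are distinct the syndrome identifies the position uniquely; when the columns are ordered as binary numbers, the syndrome read as an integer is the error position itself. A double-bit error produces the sum of two distinct columns, which is nonzero and therefore detectable. However, every nonzero r-bit vector is a column of H, so that syndrome coincides with the syndrome of some single-bit error, and a decoder that attempts correction will flip a third bit and output a wrong codeword. With minimum distance 3, the code can therefore correct single errors or detect double errors, but not both at once, which is the limitation that the extended (SEC-DED) code addresses. The trade-off between redundancy and minimum distance is captured by coding bounds: Hamming codes meet the Hamming (sphere-packing) bound with equality, 2^k (1 + n) = 2^n, which makes them perfect codes, while the Gilbert–Varshamov bound gives a guarantee in the other direction on the codes that must exist. Practical decoders compute the syndrome with XOR logic and then either decode it directly to a bit position or consult a lookup table, which is compact enough for microcontroller firmware and FPGA logic.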
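A small Python sketch makes the decoding behavior concrete. It assumes the layout in which column j of H is the binary representation of j, so the syndrome is simply the XOR of the positions that hold a 1; the names `syndrome_as_position` and `correct` are illustrative, not taken from any library.

```python
from functools import reduce
from operator import xor


def syndrome_as_position(word):
    """XOR of the 1-based positions holding a 1, i.e. H * y^T read as an integer."""
    return reduce(xor, (pos for pos, bit in enumerate(word, start=1) if bit), 0)


def correct(word):
    """Return (decoded word, syndrome), flipping the bit the syndrome points at."""
    n = len(word)
    if n == 0 or (n + 1) & n:     # block length must be 2**r - 1
        raise ValueError("block length must be 2**r - 1")
    s = syndrome_as_position(word)
    fixed = list(word)
    if s:
        fixed[s - 1] ^= 1
    return fixed, s


codeword = [0, 1, 1, 0, 0, 1, 1]   # a valid (7,4) codeword

# Single error at position 5: the syndrome is 5 and the error is corrected.
one_error = list(codeword)
one_error[4] ^= 1
print(correct(one_error))          # ([0, 1, 1, 0, 0, 1, 1], 5)

# Double error at positions 3 and 5: the syndrome is 3 XOR 5 = 6, so the
# decoder flips position 6 and returns a different, wrong codeword.
two_errors = list(codeword)
two_errors[2] ^= 1
two_errors[4] ^= 1
print(correct(two_errors))         # ([0, 1, 0, 0, 1, 0, 1], 6)
```

The second case shows why a plain Hamming decoder cannot tell a double error from a single one: the output is a valid codeword, but it differs from the transmitted one in three positions.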

Variants and Generalizations

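The extended Hamming code appends one overall parity bit, giving parameters (2^r, 2^r − r − 1) and minimum distance 4. This yields SEC-DED (single-error-correcting, double-error-detecting) operation, the form widely used for computer main memory, including in IBM mainframes and DEC hardware. Hamming codes also generalize to nonbinary alphabets, building on the finite field theory that originated with Évariste Galois: over GF(q), the columns of H are one representative from each one-dimensional subspace of GF(q)^r, giving block length n = (q^r − 1)/(q − 1) and dimension n − r. Binary Hamming codes also sit inside larger families. They are the single-error-correcting primitive binary Bose–Chaudhuri–Hocquenghem (BCH) codes, their extended versions are Reed–Muller codes, and their duals are the simplex codes. Reed–Solomon codes, a nonbinary relative of BCH codes, are used in the compact disc format developed by Sony and Philips. Hamming codes also serve as component codes in product codes and concatenated schemes.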
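The SEC-DED decision rule can be sketched as follows. This is an illustrative example under stated assumptions: the inner code uses the layout in which the syndrome equals the error position, the overall parity bit is appended as the last bit, and the names `extend` and `decode_secded` are hypothetical.

```python
def extend(codeword):
    """Append an overall parity bit so the extended word has even weight."""
    return list(codeword) + [sum(codeword) % 2]


def decode_secded(word):
    """Classify and, where possible, correct an extended Hamming word.

    Returns (word, status), where status is "ok", "corrected",
    or "double error detected".
    """
    inner = word[:-1]
    s = 0
    for pos, bit in enumerate(inner, start=1):
        if bit:
            s ^= pos                      # syndrome of the inner Hamming code
    parity_ok = sum(word) % 2 == 0        # overall parity check
    if s == 0 and parity_ok:
        return list(word), "ok"
    if not parity_ok:
        # Odd overall parity: assume a single error. A zero syndrome means
        # the overall parity bit itself was hit.
        fixed = list(word)
        fixed[s - 1 if s else len(word) - 1] ^= 1
        return fixed, "corrected"
    # Nonzero syndrome with even overall parity: two bits are wrong.
    return list(word), "double error detected"


sent = extend([0, 1, 1, 0, 0, 1, 1])      # (8,4) extended codeword

single = list(sent)
single[4] ^= 1
print(decode_secded(single))   # ([0, 1, 1, 0, 0, 1, 1, 0], 'corrected')

double = list(sent)
double[2] ^= 1
double[4] ^= 1
print(decode_secded(double)[1])  # double error detected
```

The rule assumes at most two bit errors per word; three or more errors can still be miscorrected or missed, which is why systems with higher error rates move to stronger codes.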

Implementation and Applications

SEC-DED codes derived from Hamming codes have protected computer main memory since machines such as the IBM 7030 Stretch, and error-correcting memory later appeared in systems such as the DEC PDP-11 and VAX families. They remain common in ECC memory: JEDEC-standard ECC modules provide 8 check bits per 64 data bits, enough for a (72,64) SEC-DED code (often a Hsiao code, a modified Hamming code), and such memory is standard in servers from vendors such as Sun Microsystems, HP, and Dell. In communication, short Hamming codes protect small control fields, for example the (8,4) extended Hamming code used in teletext. In storage, early NAND flash controllers commonly used Hamming codes for single-bit correction, while magnetic disks and optical media generally rely on stronger codes such as Reed–Solomon. In embedded systems, some microcontrollers protect flash or SRAM with SEC-DED logic, and FPGAs from vendors such as Xilinx can apply Hamming-based ECC to on-chip memory, which is useful in radiation-exposed designs such as spaceborne electronics. Hamming codes are also a standard first example in coding theory and information theory courses at universities including MIT, Stanford University, and the University of Cambridge.

Category:Error correction codes