Chomsky hierarchy

Generated by GPT-5-mini

| Chomsky hierarchy | |
|---|---|
| Name | Chomsky hierarchy |
| Type | Formal language classification |
| Introduced | 1956 |
| Introduced by | Noam Chomsky |
| Fields | Theoretical computer science, Formal language theory, Linguistics |

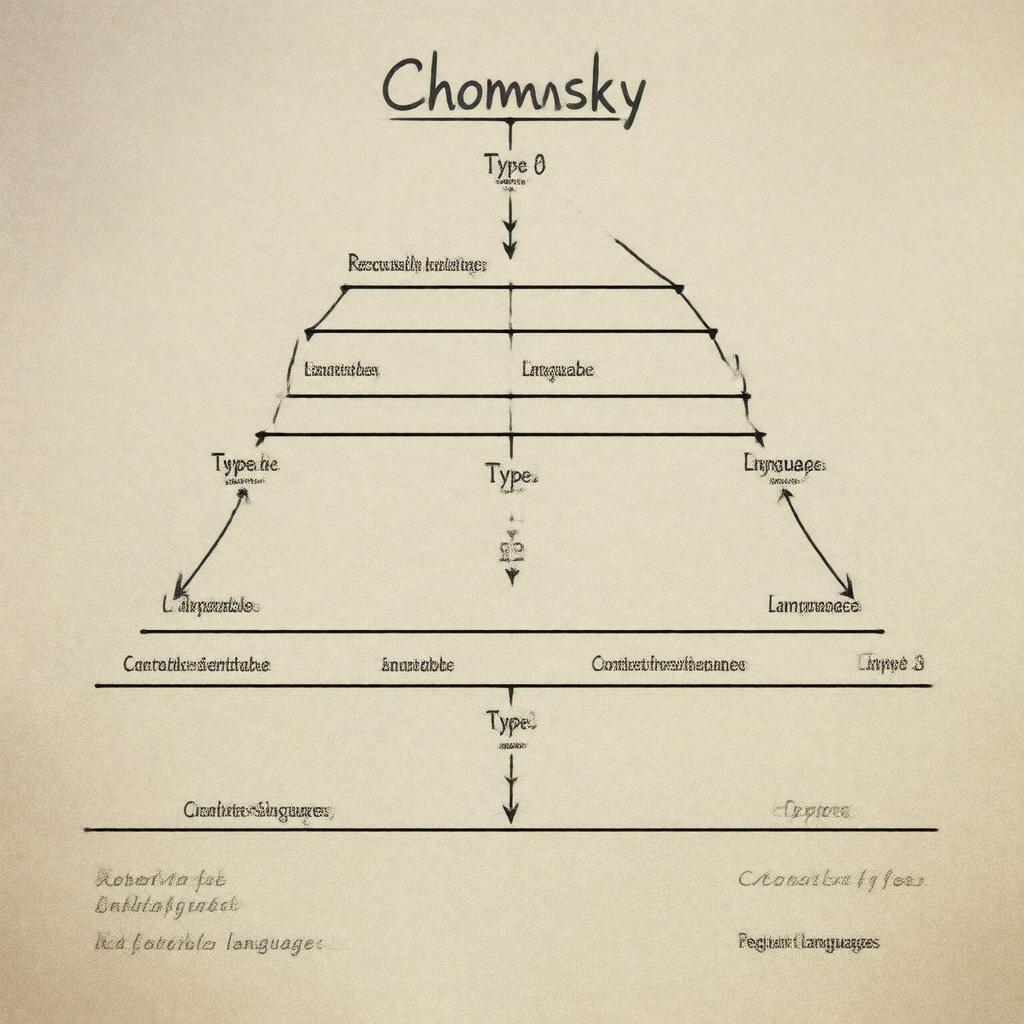

The Chomsky hierarchy is a theoretical classification of formal grammars that orders language-generating systems by their expressive power and by the computational resources needed to recognize the languages they generate. It connects work by linguists, logicians, and computer scientists such as Noam Chomsky, Alan Turing, Alonzo Church, Stephen Kleene, and Emil Post, and it relates to topics in linguistics, computability theory, the lambda calculus, regular expressions, and the Post correspondence problem.

Introduction

The hierarchy was proposed to compare types of formal grammars and the languages they generate, linking contributions from Noam Chomsky, Alan Turing, Alonzo Church, Stephen Kleene, and Emil Post with subsequent work by researchers at institutions such as the Massachusetts Institute of Technology, Princeton University, the University of Cambridge, Yale University, and Harvard University. It relates grammar classes to machine models such as the finite automaton, pushdown automaton, linear bounded automaton, and Turing machine, and it sits alongside formal questions studied in theoretical computer science, including Hilbert's Entscheidungsproblem, Gödel's incompleteness theorems, and the P versus NP problem.

Formal definition

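Formally, the hierarchy classifies grammars into four nested levels (not a partition: every grammar of a more restricted type also belongs to the less restricted types) defined by restrictions on production rules, and each level corresponds to acceptance by a class of abstract machines of the kind studied by Alan Turing and later computer scientists. A grammar G = (V, Σ, R, S) has a set of variables V, a terminal alphabet Σ, a set of production rules R, and a start symbol S ∈ V. Constraints on R yield the four principal types: Type-0 allows any rule α → β in which α contains at least one variable; Type-1 requires rules of the form αAβ → αγβ with γ nonempty; Type-2 requires the left-hand side to be a single variable; Type-3 further requires the right-hand side to be a terminal optionally followed by a single variable (or, in the left-linear variant, preceded by one). The equivalence of these grammar classes to machine models was established by researchers including Michael O. Rabin, Dana Scott, Noam Chomsky, and Marvin Minsky.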
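The restrictions on R can be checked mechanically, rule by rule. The following is a minimal sketch, not a definitive implementation. It assumes a hypothetical encoding in which each symbol is one character, uppercase letters are variables, lowercase letters are terminals, and a rule is a pair of strings `(lhs, rhs)`. It also makes two simplifications that should be read as assumptions: Type-3 is taken in the right-linear form (a terminal string optionally followed by one variable), and Type-1 is tested with the noncontracting condition |lhs| ≤ |rhs|, which generates the same class of languages as the context-sensitive rule form.

```python
def is_variable(symbol: str) -> bool:
    # Assumed convention: uppercase letters are variables, everything else is terminal.
    return symbol.isupper()


def rule_type(lhs: str, rhs: str) -> int:
    """Return the most restrictive type (3, 2, 1, or 0) whose rule shape this rule fits."""
    if len(lhs) == 1 and is_variable(lhs):
        # Strip one trailing variable, if present, and check the rest is all terminals.
        body = rhs[:-1] if rhs and is_variable(rhs[-1]) else rhs
        if all(not is_variable(s) for s in body):
            return 3  # right-linear: A -> w or A -> wB
        return 2      # context-free: single variable on the left
    if any(is_variable(s) for s in lhs) and len(lhs) <= len(rhs):
        return 1      # noncontracting rule
    return 0          # unrestricted


def grammar_type(rules) -> int:
    """A grammar is only as restricted as its least restricted rule."""
    return min(rule_type(lhs, rhs) for lhs, rhs in rules)


regular = [("S", "aS"), ("S", "b")]
context_free = [("S", "aSb"), ("S", "")]
context_sensitive = [("S", "abc"), ("S", "aSBc"), ("cB", "Bc"), ("bB", "bb")]
unrestricted = [("S", "aAb"), ("aA", "")]

print(grammar_type(regular))            # 3
print(grammar_type(context_free))       # 2
print(grammar_type(context_sensitive))  # 1
print(grammar_type(unrestricted))       # 0
```

The classification describes the grammar as written, not the language: a language generated by a Type-0 grammar may still be regular if some other grammar for it is Type-3.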

Classes and examples

Type-3 grammars (regular) correspond to finite-state models used in tools and theories associated with Ken Thompson, Maurice Wilkes, John Backus, and Dennis Ritchie; examples include token definitions in languages implemented at Bell Labs and pattern matching via regular expressions.

Type-2 grammars (context-free) correspond to pushdown automata and relate to John Backus’s work on programming language syntax, Donald Knuth’s compiler theory, and parsers used in systems by Leslie Lamport, Niklaus Wirth, and Barbara Liskov; examples include the grammars used to specify the syntax of programming languages such as ALGOL, Pascal, C, and Java.

Type-1 grammars (context-sensitive) correspond to linear bounded automata. They have examples in transformational accounts advanced by Noam Chomsky and explored in computational models by Marvin Minsky and Leonard Adleman, and they include certain natural language constructions analyzed in studies at the Massachusetts Institute of Technology and Stanford University.

Type-0 grammars (unrestricted) generate the recursively enumerable languages and are equivalent to the general Turing machine model of computation developed by Alan Turing and studied in the context of the halting problem and the Post correspondence problem by Emil Post and Alonzo Church.
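Three textbook languages illustrate where the lower levels separate. The sketch below is illustrative only; the function names are hypothetical and the languages are standard examples rather than ones taken from the text above. The language a\*b\* is regular, so a regular expression suffices. The language aⁿbⁿ (n ≥ 0) is context-free but not regular, and it is recognized here in pushdown style with an explicit stack. The language aⁿbⁿcⁿ (n ≥ 0) is context-sensitive but not context-free, and it is checked here by direct comparison rather than by simulating a linear bounded automaton.

```python
import re


def in_a_star_b_star(s: str) -> bool:
    # Regular language: a finite automaton (here, a regex) needs no memory of counts.
    return re.fullmatch(r"a*b*", s) is not None


def in_an_bn(s: str) -> bool:
    # Context-free language: push one marker per 'a', pop one per 'b'.
    stack = []
    i = 0
    while i < len(s) and s[i] == "a":
        stack.append("a")
        i += 1
    while i < len(s) and s[i] == "b" and stack:
        stack.pop()
        i += 1
    # Accept only if all input was consumed and every 'a' was matched.
    return i == len(s) and not stack


def in_an_bn_cn(s: str) -> bool:
    # Context-sensitive language: three equal blocks, beyond a single stack's reach.
    n = len(s) // 3
    return len(s) % 3 == 0 and s == "a" * n + "b" * n + "c" * n


print(in_a_star_b_star("aaab"))  # True
print(in_an_bn("aabb"))          # True
print(in_an_bn("aab"))           # False
print(in_an_bn_cn("aabbcc"))     # True
print(in_an_bn_cn("abcabc"))     # False
```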

Properties and relationships

The inclusion relations (Type-3 ⊂ Type-2 ⊂ Type-1 ⊂ Type-0), each of which is proper at the level of the languages generated, reflect findings by Noam Chomsky, Michael O. Rabin, Dana Scott, John Hopcroft, and Jeffrey Ullman; closure properties under operations such as union, concatenation, and Kleene star were formalized by Stephen Kleene and extended by John Hopcroft and Jeffrey Ullman. Decidability and complexity results connect to major milestones like the Cook–Levin theorem (related to Stephen Cook and Leonid Levin), the P versus NP problem studied by Richard Karp and Michael Garey, and lower-bound techniques influenced by work at Princeton University and the Massachusetts Institute of Technology. Trade-offs in descriptional complexity and normal forms (e.g., Chomsky normal form) were developed in the literature by Noam Chomsky, John Backus, and Donald Knuth, and later refined by researchers at Bell Labs and Stanford University.
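Normal forms matter in practice because they make decision procedures simple to state. As a hedged illustration, the sketch below uses the CYK (Cocke–Younger–Kasami) algorithm, one standard technique for deciding membership in a context-free language once the grammar is in Chomsky normal form. The grammar shown, for aⁿbⁿ with n ≥ 1, and the encoding of rules as Python tuples are assumptions made for the example.

```python
def cyk(word, unary, binary, start="S"):
    """Decide whether `word` is generated by a grammar in Chomsky normal form.

    unary:  list of (A, a) for rules A -> a
    binary: list of (A, (B, C)) for rules A -> B C
    """
    n = len(word)
    if n == 0:
        return False  # this sketch assumes the grammar has no rule S -> epsilon
    # table[i][l - 1] holds the variables that derive word[i : i + l].
    table = [[set() for _ in range(n)] for _ in range(n)]
    for i, ch in enumerate(word):
        table[i][0] = {A for A, a in unary if a == ch}
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            for split in range(1, length):
                left = table[i][split - 1]
                right = table[i + split][length - split - 1]
                for A, (B, C) in binary:
                    if B in left and C in right:
                        table[i][length - 1].add(A)
    return start in table[0][n - 1]


# Assumed example grammar in Chomsky normal form for a^n b^n, n >= 1:
#   S -> A B | A T,  T -> S B,  A -> a,  B -> b
UNARY = [("A", "a"), ("B", "b")]
BINARY = [("S", ("A", "B")), ("S", ("A", "T")), ("T", ("S", "B"))]

print(cyk("aabb", UNARY, BINARY))  # True
print(cyk("aab", UNARY, BINARY))   # False
```

The three nested loops over substring length, start position, and split point give running time cubic in the length of the input for a fixed grammar.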

Applications and significance

The hierarchy underpins compiler construction work by John Backus, Donald Knuth, Alfred Aho, and Jeffrey Ullman, informs parsing algorithms used in systems at AT&T Bell Laboratories, IBM, and Microsoft, and influences natural language research at the Massachusetts Institute of Technology and Stanford University. It also guides formal verification and model checking efforts at Bell Labs, Carnegie Mellon University, and MIT Lincoln Laboratory, and appears in analyses of protocols and languages in projects supported by DARPA and the European Research Council and carried out at industrial labs such as Bell Labs and IBM Research. Connections to cryptography and complexity theory draw on foundational work by Whitfield Diffie, Ron Rivest, Adi Shamir, Leonard Adleman, and complexity theorists at Princeton University.
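In compiler front ends the two lowest levels of the hierarchy typically divide the work: tokens are described by regular expressions, and phrase structure by a context-free grammar. The sketch below illustrates that split under stated assumptions. The token pattern, the small arithmetic grammar, and the function names are all hypothetical and chosen for brevity; they are not drawn from any particular compiler.

```python
import re

# Type-3 layer: each token is an integer literal or a single operator/parenthesis.
TOKEN = re.compile(r"\s*(\d+|[-+*()])")


def tokenize(text):
    tokens, pos = [], 0
    text = text.rstrip()
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected character at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens


def parse(tokens):
    """Type-2 layer: recursive descent over the assumed grammar

        expr   -> term (('+' | '-') term)*
        term   -> factor ('*' factor)*
        factor -> NUMBER | '(' expr ')'

    The parser evaluates the expression as it goes.
    """
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def advance():
        nonlocal pos
        tok = peek()
        pos += 1
        return tok

    def factor():
        tok = advance()
        if tok == "(":
            value = expr()
            if advance() != ")":
                raise SyntaxError("expected ')'")
            return value
        if tok is not None and tok.isdigit():
            return int(tok)
        raise SyntaxError(f"unexpected token {tok!r}")

    def term():
        value = factor()
        while peek() == "*":
            advance()
            value *= factor()
        return value

    def expr():
        value = term()
        while peek() in ("+", "-"):
            op = advance()
            rhs = term()
            value = value + rhs if op == "+" else value - rhs
        return value

    result = expr()
    if peek() is not None:
        raise SyntaxError("trailing input")
    return result


print(parse(tokenize("2 * (3 + 4)")))  # 14
```

Balanced parentheses are the part a regular expression alone cannot check, which is why the nesting is handled by the recursive `factor` rule rather than by the tokenizer.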

Historical development

Originating in papers by Noam Chomsky in the mid-1950s, the hierarchy synthesized insights from logicians and early computer scientists including Alan Turing, Alonzo Church, Stephen Kleene, Emil Post, Marvin Minsky, and Michael O. Rabin. Subsequent formalization and machine equivalences were supplied by researchers at Princeton University and Yale University and popularized through textbooks by John Hopcroft, Jeffrey Ullman, Michael Sipser, and Donald Knuth. The framework influenced the design of programming languages at Bell Labs and IBM and continues to inform contemporary research at institutions like the Massachusetts Institute of Technology, Stanford University, Carnegie Mellon University, and the University of Cambridge.