PageRank

Generated by DeepSeek V3.2

Generated by DeepSeek V3.2Expansion Funnel Raw 57 → Dedup 32 → NER 4 → Enqueued 3

| PageRank | |

|---|---|

| |

| Name | PageRank |

| Class | Link analysis |

| Data structure | Graph (discrete mathematics) |

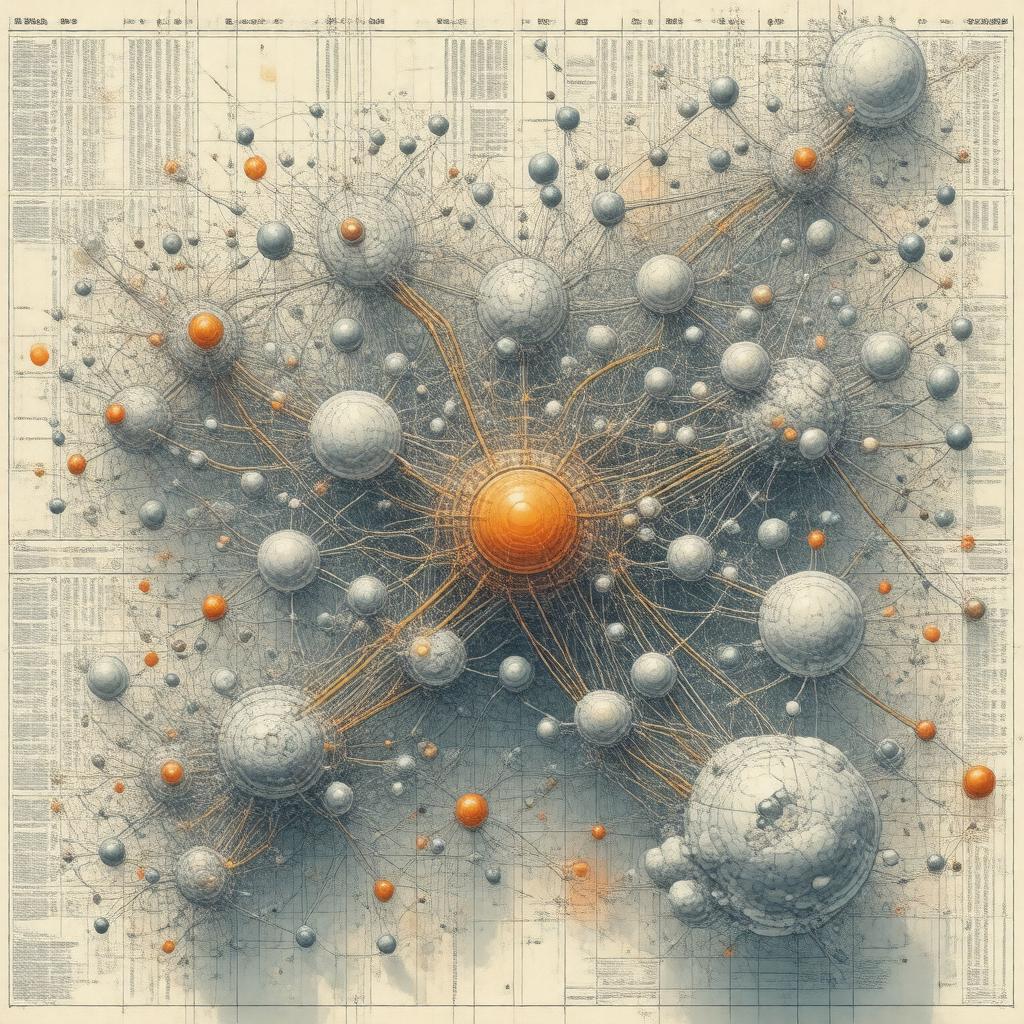

PageRank. It is a link analysis algorithm used by Google Search to rank web pages in their search engine results pages. Named after co-founder Larry Page, it assigns a numerical weighting to each element of a hyperlinked set of documents, such as the World Wide Web, with the purpose of "measuring" its relative importance within the set. The algorithm may be applied to any collection of entities with reciprocal quotations and references.

Overview

The fundamental premise is that more important websites are likely to receive more links from other websites. It interprets a link from The New York Times to a personal blog as a vote of confidence, treating the entire web graph as a vast democratic system. This concept is analogous to the impact factor of academic journals, where citations from prestigious publications like Nature (journal) carry greater weight. The random surfer model provides a theoretical foundation, imagining a user who randomly clicks links, with the probability of landing on a page defining its score.

Algorithm

The core formula iteratively computes scores based on the Markov chain formed by the web's link structure. It involves solving for the principal eigenvector of a normalized link matrix, a process related to eigenvalue problems in linear algebra. The original equation, as published by Larry Page and Sergey Brin in their Stanford University research, includes a damping factor to account for pages with no outgoing links. This mathematical framework ensures the computation converges to a stable distribution, representing the steady-state probabilities of the Markov process.

Variations and extensions

Researchers have proposed numerous adaptations to address limitations. Topic-sensitive PageRank creates biased vectors towards specific subjects, while the TrustRank algorithm, developed to combat web spam, propagates trust from a seed set of reputable pages like BBC News. Other modifications include weighting links based on anchor text relevance or the hyperlink-induced topic search (HITS) algorithm, which distinguishes between hub (network science) and authority (network science) pages. These extensions are often discussed in conferences like the International World Wide Web Conference.

Applications

Beyond its flagship use in Google Search, the underlying principles have been utilized in various fields. In bioinformatics, it helps identify key proteins in protein-protein interaction networks. Social network analysis platforms apply it to find influential users on networks like Twitter or Facebook. It has also been used to rank scientific journals, academic papers in databases like Google Scholar, and even players in sports leagues. Companies such as Microsoft and IBM have incorporated similar algorithms into their analytics tools.

History

The algorithm was developed in 1996 by Larry Page and Sergey Brin as part of a research project at Stanford University, originally named "BackRub". This work formed the foundational technology for the Google search engine, incorporated in 1998. The patent was assigned to Stanford University and later exclusively licensed to Google LLC. The original paper, "The Anatomy of a Large-Scale Hypertextual Web Search Engine", was presented at the Seventh International World Wide Web Conference. Its success influenced the entire field of information retrieval.

Computation

Practical computation for the entire web requires efficient, large-scale distributed computing. Google's infrastructure, including systems like MapReduce and later Apache Hadoop, was designed to handle these iterative calculations across massive datasets. Methods like the power iteration are employed to approximate the dominant eigenvector. Due to the dynamic nature of the web, the algorithm runs continuously in the Google data centers, updating scores as the crawler discovers new links and pages. The computational challenges are a key topic in high-performance computing research.

Category:Google Search Category:Graph algorithms Category:1996 in computing